1 Starting a fabulous adventure

This opening chapter introduces the book’s idea of “fabulous” algorithms and data structures: techniques that solve real problems while also surprising, delighting, or changing how a programmer thinks. The author emphasizes approaches that go beyond standard classroom material, such as immutable structures, backtracking across different domains, algorithms that feel like magic tricks, and solutions that use language features or theoretical ideas in powerful ways. The chapter frames programming as both practical problem solving and creative exploration.

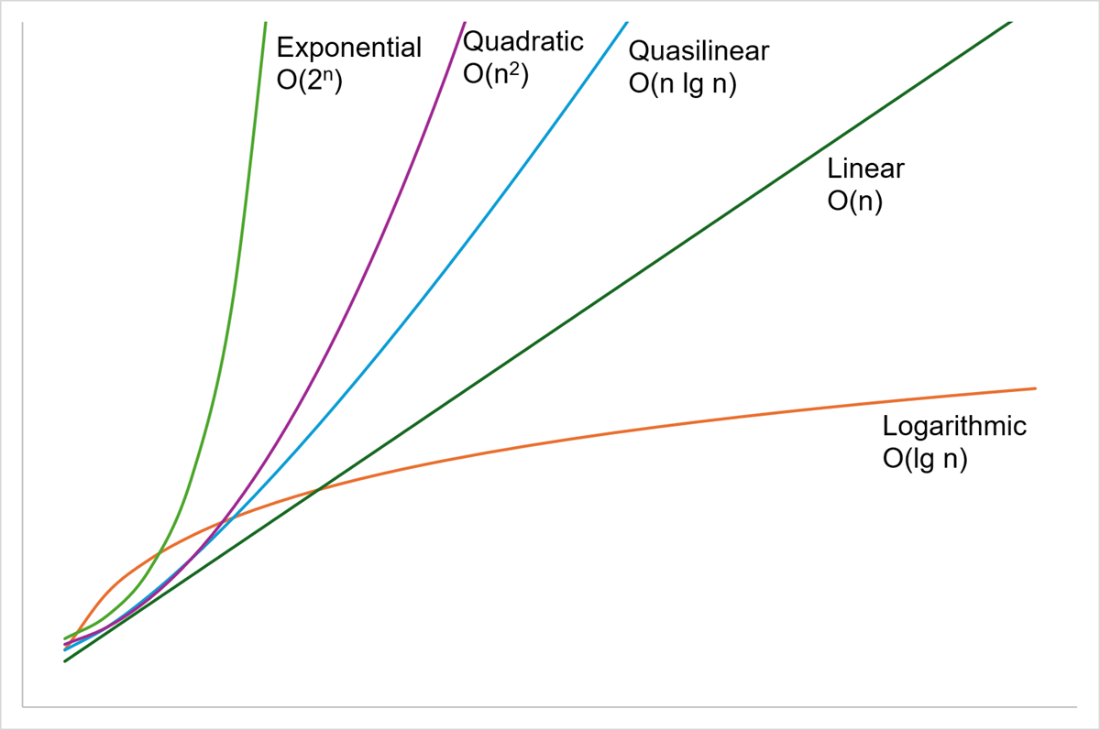

The chapter then defines the core concepts of data structures, algorithms, and complexity. A data structure organizes information, an algorithm is a finite procedure for solving a problem, and complexity describes how the cost of solving a problem grows as the problem size increases. It reviews common Big O categories, from constant and logarithmic time through linear, quasilinear, quadratic, exponential, and factorial growth. It also stresses that performance analysis should consider not only time, but also memory, resource use, and best-, average-, and worst-case behavior, while remembering that asymptotically “better” algorithms are not always better for typical real-world inputs.

As a warmup, the chapter builds and analyzes an immutable linked list in C#. This example shows how even simple code can hide design and performance issues, such as unsafe object states, generated equality behavior, possible stack overflow from recursive comparisons, and accidentally quadratic string concatenation. The chapter then demonstrates reversing an immutable linked list by walking through the original list and pushing each value onto a new list, producing an unchanged original and a reversed copy in linear time and memory. It closes by previewing later topics, including persistent structures, memoization, backtracking, unification, probability tools, randomness, Bayesian inference, and theoretical connections to everyday data types.

A graph comparing the growth curves for logarithmic, linear, quasilinear, quadratic and exponential growth. Logarithmic is the shallowest curve, growing very slowly, and exponential is the fastest.

Summary

- There are lots of books about standard data structures such as lists, trees and hash tables, and the algorithms for sorting and searching them. And there are lots of implementations of them already in class libraries. In this book we’ll look at the less well-known, more off-the-beaten-path data structures and algorithms that I had to learn about during my career. I’ve chosen the topics I found the most fabulous: the ones that are counterintuitive, or seemingly magical, or that push boundaries of languages.

- I had to learn about almost all the topics in this book when I needed a new tool in my toolbox to solve a job-related problem. I hope this book saves you from having to read all the abstruse papers that I had to digest if you too have such a problem to solve.

- But more important to me is that all these fabulous adventures gave me a deeper appreciation for what computer programmers are capable of. They changed how I think about the craft of programming. And I had a lot of fun along the way! I hope you do too.

- Asymptotic complexity gives a measurement of how a solution scales to the size of the problem you’re throwing at it. We generally want algorithms to be better than quadratic if we want them to scale to large problems.

- Complexity analysis can be subtle. It’s important to remember that time is not the only resource users care about, and that they might also have opinions on whether avoiding a bad worst-case scenario is more or less important than achieving an excellent average performance.

- If problems are always small, then asymptotic complexity matters less.

- Reversing a linked list is straightforward if you have an immutable list! We’ll look at other kinds of immutable lists next.

Fabulous Adventures in Data Structures and Algorithms ebook for free

Fabulous Adventures in Data Structures and Algorithms ebook for free