1 Small Language Models

The chapter introduces Small Language Models as a practical alternative to highly hyped, general-purpose Large Language Models. It explains that SLMs use the same Transformer-based foundations as larger models but operate at a smaller scale, typically with far fewer parameters, lower memory needs, and reduced computational requirements. Because they can run locally on edge devices, commodity hardware, on-premises servers, or small clusters, they are especially valuable when privacy, latency, offline operation, cost, and energy efficiency matter.

The chapter also gives a high-level view of how modern language models emerged from the Transformer architecture. It contrasts earlier recurrent neural networks with Transformers, emphasizing self-attention, parallel processing, and word embeddings as key advances that made large-scale self-supervised training possible. It then describes important Transformer families, including encoder-based models suited to classification and prediction tasks and decoder-based models suited to text generation, while also noting techniques such as reinforcement learning from human feedback. Language models are shown to support a wide range of tasks, including question answering, summarization, classification, code generation, dialogue, reasoning, and work with symbolic text-like representations.

The chapter argues that closed-source generalist LLMs, while powerful and convenient, introduce business risks around data exposure, lack of transparency, limited interpretability, hallucinations, hidden training data, infrastructure dependence, and misuse. Open source models offer organizations more control and can reduce development costs by providing pretrained foundations that can be specialized rather than built from scratch. The chapter concludes that domain-specific SLMs and customized language models can provide greater value in regulated, privacy-sensitive, or highly specialized industries because they can be tuned on relevant data, deployed within organizational boundaries, achieve better task-specific accuracy, and reduce computational and environmental costs compared with very large generalist systems.

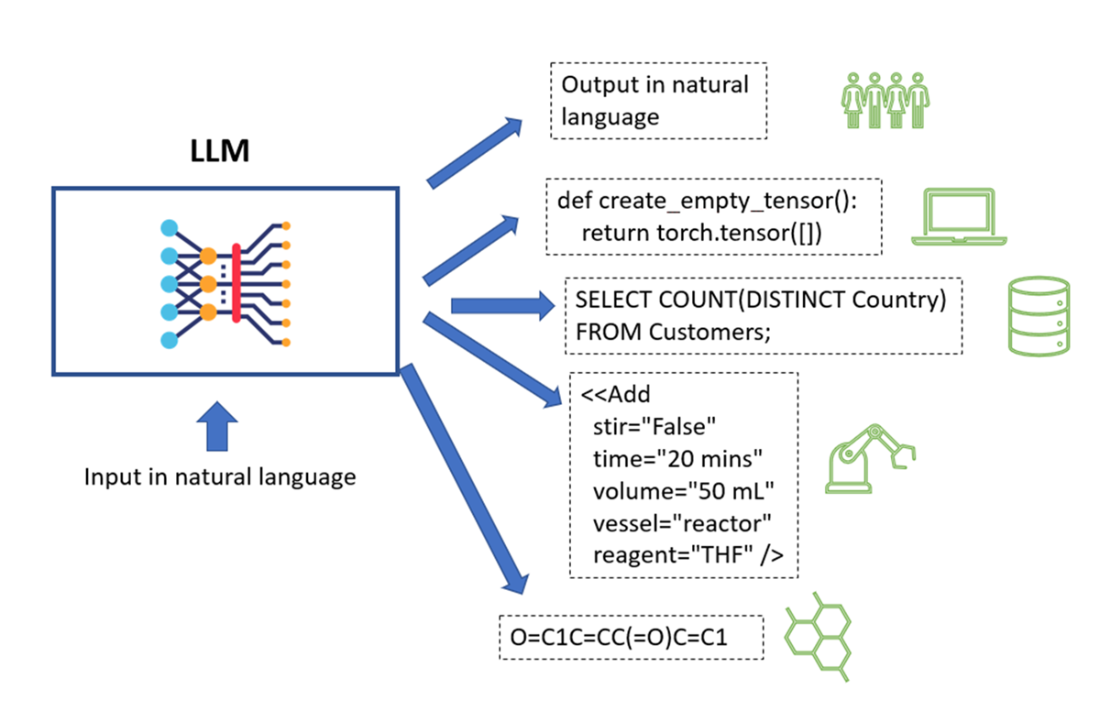

Some examples of diverse content an LLM can generate.

The timeline of LLMs since 2019 (image taken from paper [3])

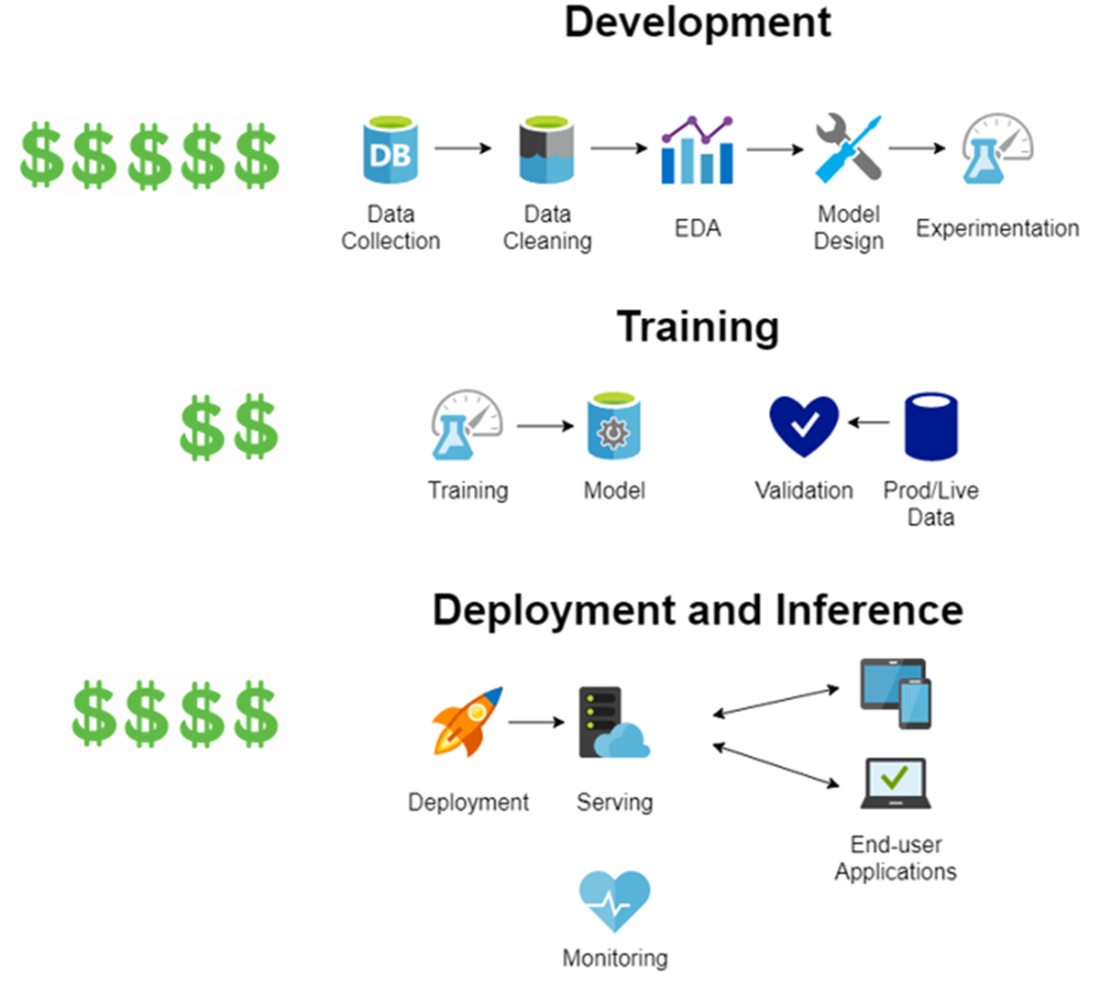

Order of magnitude of costs for each phase of LLM implementation from scratch.

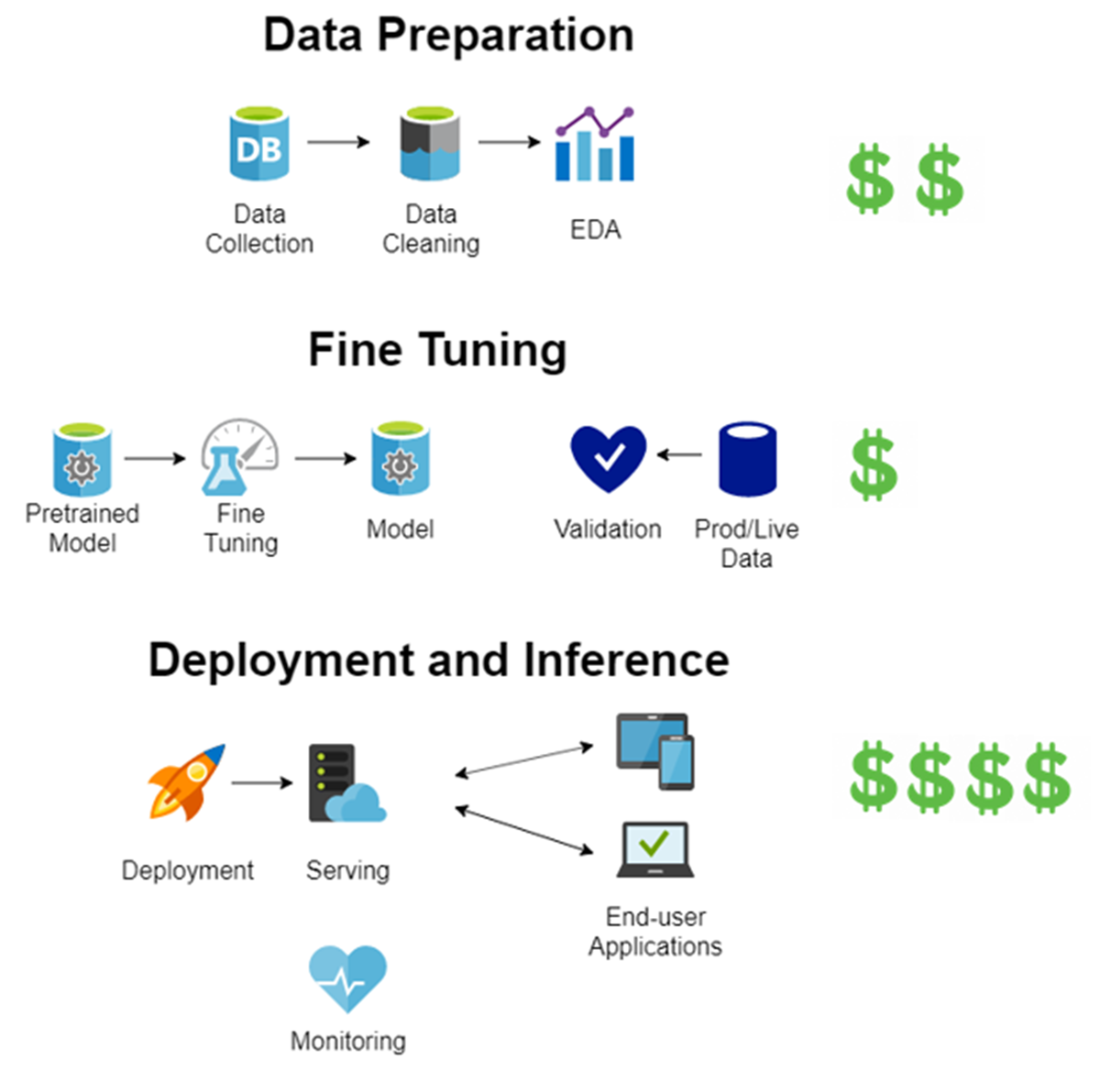

Order of magnitude of costs for each phase of LLM implementation when starting from a pretrained model.

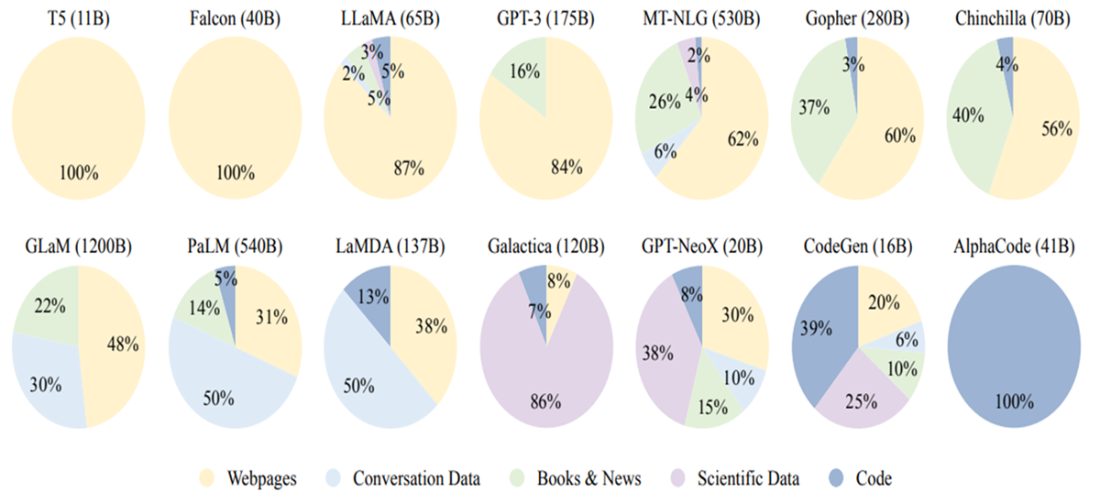

Ratios of data source types used to train some popular existing LLMs.

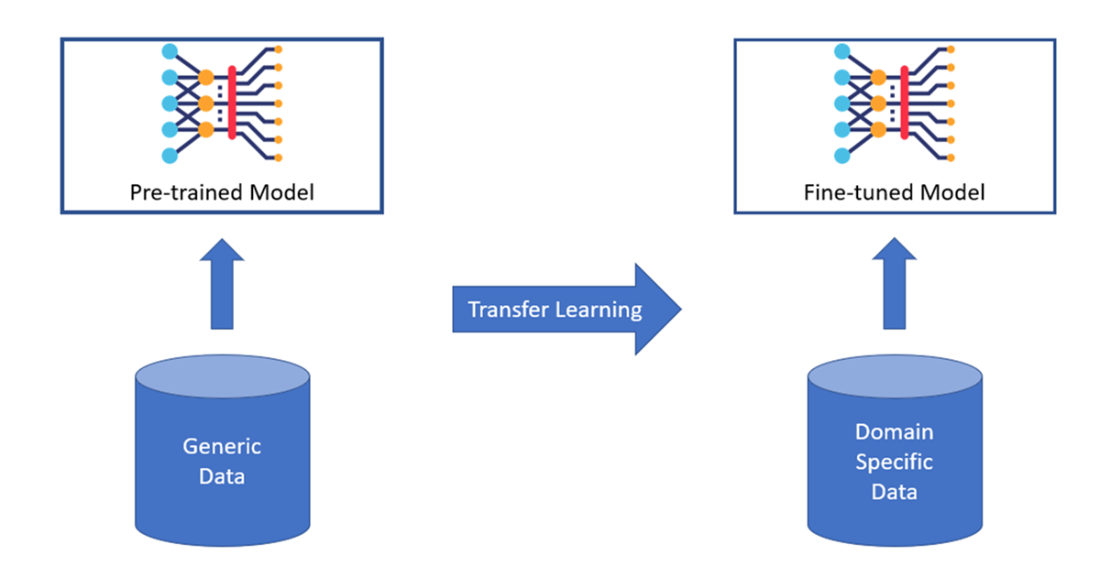

Generic model specialization to a given domain.

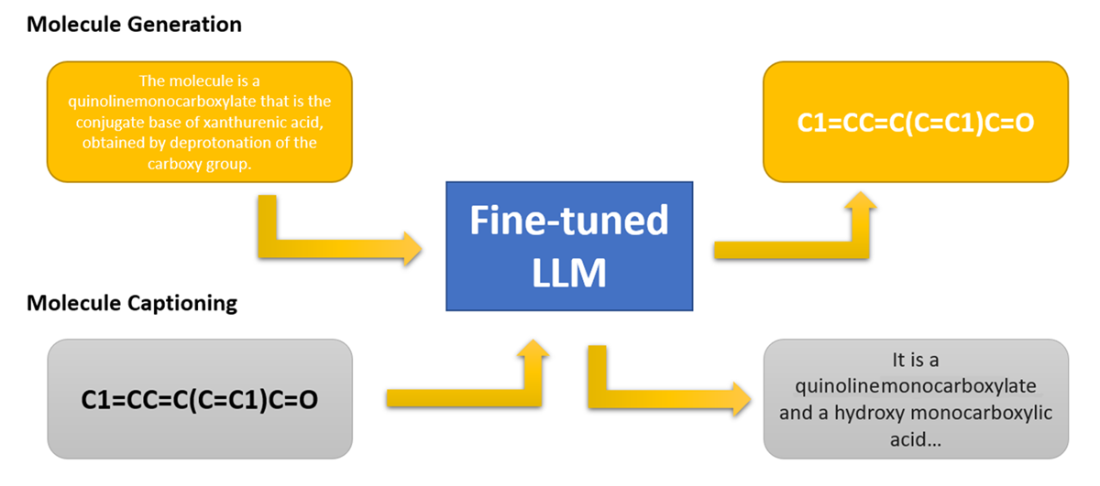

A LLM trained for tasks on molecule structures (generation and captioning).

Summary

- The definition of SLMs.

- Transformers use self-attention mechanisms to process entire text sequences at once instead of word by word.

- Self-supervised learning creates training labels automatically from text data without human annotation.

- BERT models use only the encoder part of Transformers for classification and prediction tasks.

- GPT models use only the decoder part of Transformers for text generation tasks.

- Word embeddings convert words into numerical vectors that capture semantic relationships.

- RLHF uses reinforcement learning to improve LLM responses based on human feedback.

- LLMs can generate any symbolic content including code, math expressions, and structured data.

- Open source LLMs reduce development costs by providing pre-trained models as starting points.

- Transfer learning adapts pre-trained models to specific domains using domain-specific data.

- Generalist LLMs risk data leakage when deployed outside organizational networks.

- Closed source models lack transparency about training data and model architecture.

- Domain-specific LLMs provide better accuracy for specialized tasks than generalist models.

- Smaller specialized models require less computational power than large generalist models.

- Fine-tuning costs significantly less than training models from scratch.

- Regulatory compliance often requires domain-specific models with known training data.

Domain-Specific Small Language Models ebook for free

Domain-Specific Small Language Models ebook for free