1 What is an AI agent?

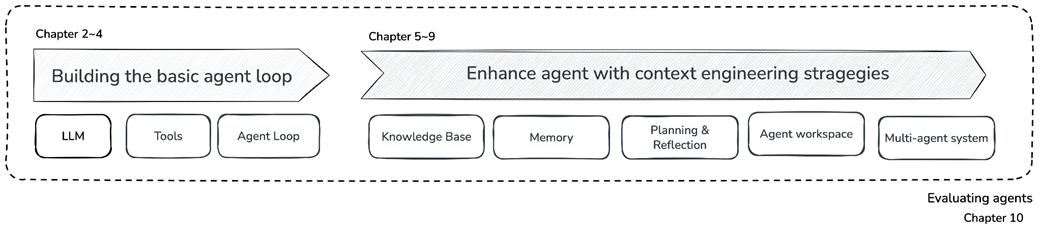

This chapter introduces AI agents as LLM-powered systems that can autonomously decide what actions to take, use tools, and continue working until a goal is reached. Rather than relying only on agent frameworks, the book emphasizes building agents from scratch so developers understand the internal mechanics needed to debug failures. It surveys today’s agent landscape, including personal assistants, customer-facing business agents, and specialized agents for tasks such as coding and deep research.

The core idea is that an LLM agent combines three elements: an LLM as the decision-making brain, tools that let it interact with external systems, and a loop that allows repeated reasoning, acting, observing, and deciding whether to continue or stop. The chapter contrasts agents with workflows: workflows follow developer-defined paths and are best for predictable tasks, while agents are useful when the number of steps, required tools, or information sources cannot be known in advance. It also stresses that agents should not be used everywhere, because they introduce higher cost, latency, and risk of cascading errors.

The chapter then explains how to evaluate and improve agents. GAIA is presented as a practical benchmark for agent development because its tasks require multi-step reasoning, information gathering, and tool use, and because each task has a clear answer that can be checked. Finally, the chapter introduces context engineering: the practice of giving the LLM the right information, at the right time, in the right form. Since many agent failures come from missing or poorly managed context rather than weak model intelligence, effective agents depend on strategies such as generating, retrieving, writing, reducing, and isolating context.

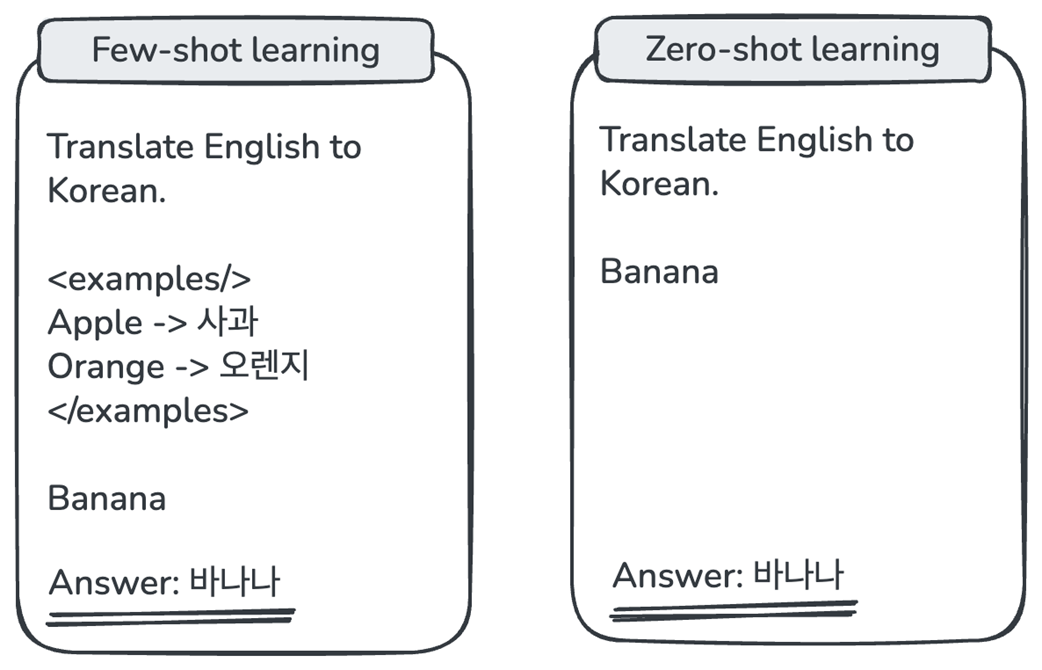

Example of a language model’s generalization capability.

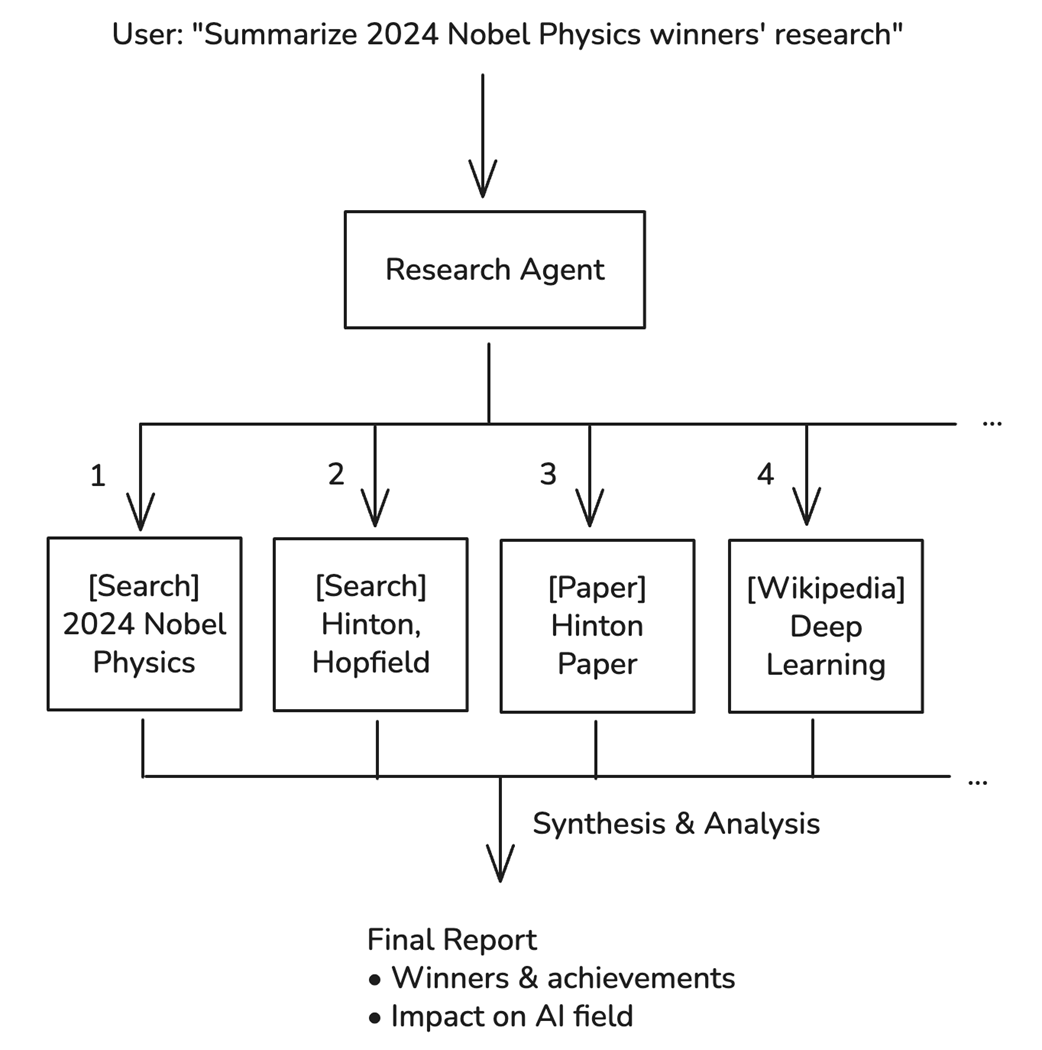

User requests flow through the research agent, which branches into multiple searches and synthesis.

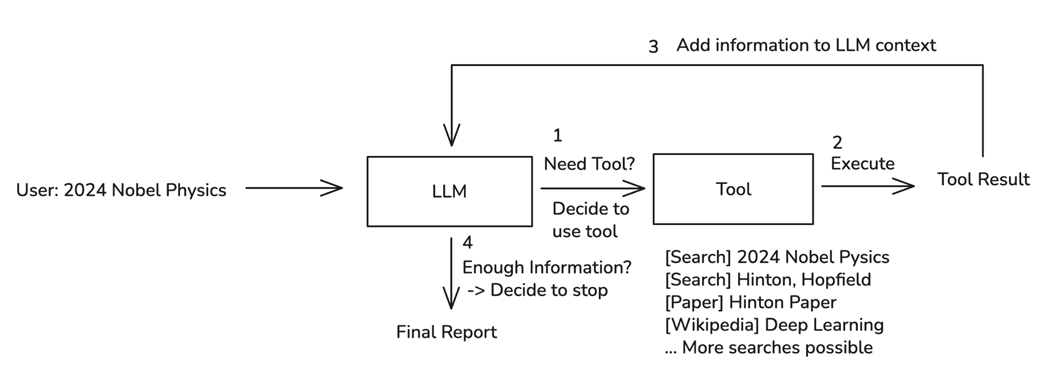

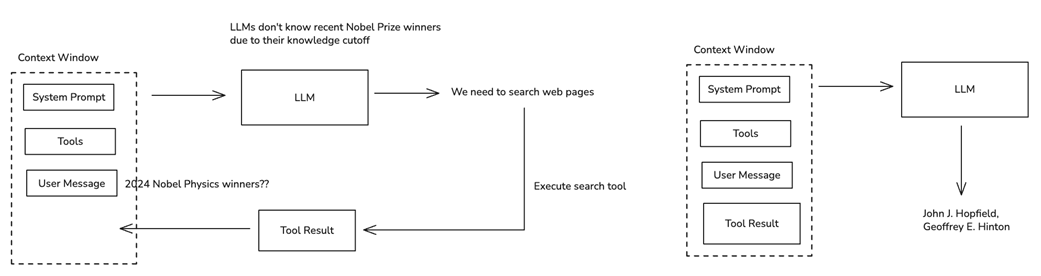

The LLM agent's decision loop is an iterative process of LLM decision-making and tool use.

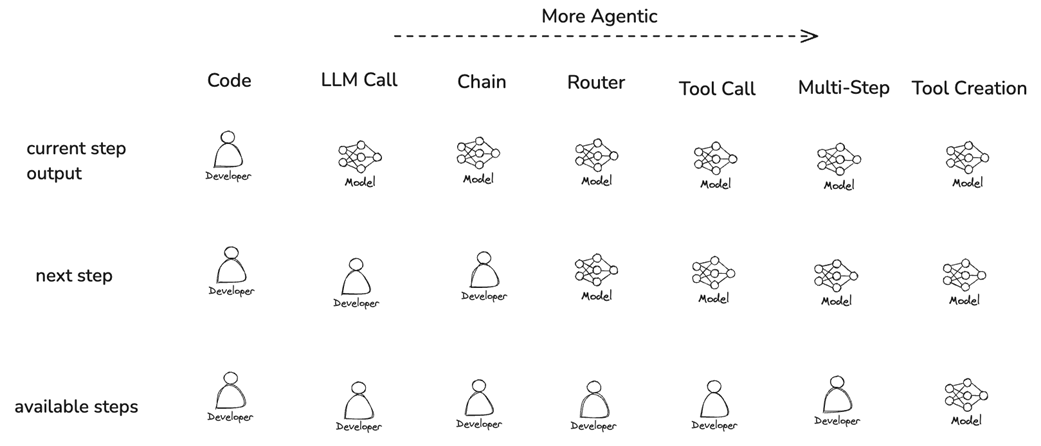

Progression of agency levels in LLM applications.

LLMs can only produce accurate, high-quality responses when sufficient information is provided in the context.

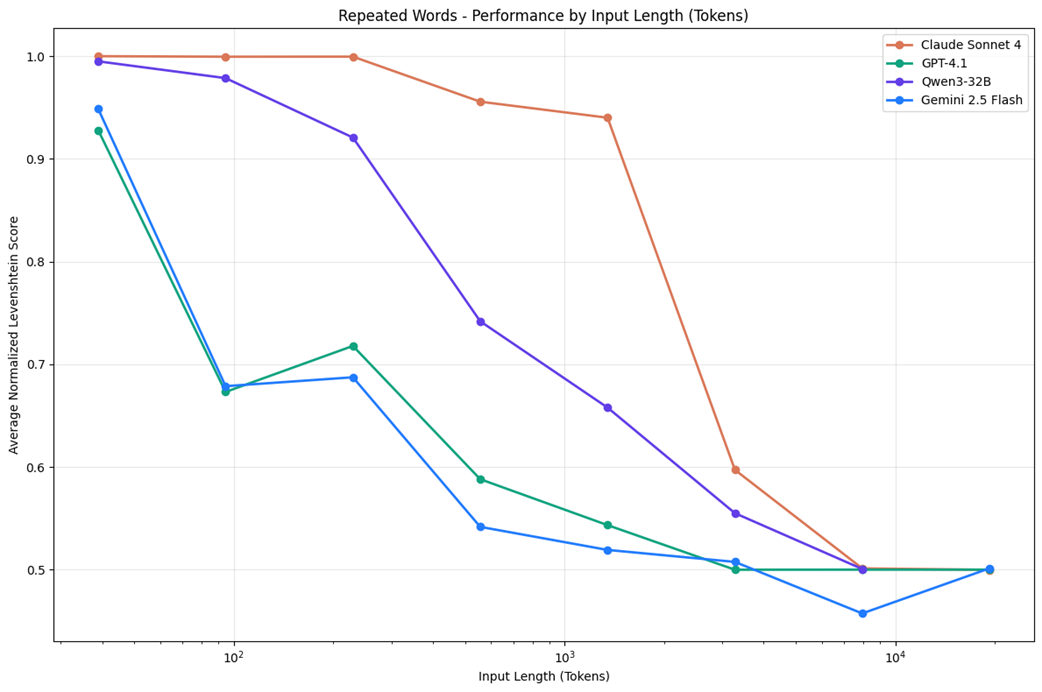

Even with large context windows, longer inputs can degrade model performance (Source: https://research.trychroma.com/context-rot).

An overview of the journey through the book.

Summary

- AI agents span a wide spectrum, from personal assistants like ChatGPT and Claude to customer-facing agents and specialized tools like Claude Code and Cursor. All share a common foundation: LLMs as their decision-making core.

- An LLM agent consists of three elements: the LLM (brain), tools (means of interacting with the external world), and a loop (iterative process until goal completion). The LLM decides which tool to use and when to stop.

- Workflows are developer-defined execution flows where LLMs perform specific steps. Agents are LLM-directed flows where the model dynamically determines its own process. Production systems often combine both approaches.

- Use agents when tasks require multiple unpredictable steps, provide sufficient value to justify costs, and allow for error detection. The GAIA benchmark provides ideal practice problems for agent development.

- Context engineering is the discipline of providing the right information at the right time. Five strategies (Generation, Retrieval, Write, Reduce, Isolate) form the framework for building effective agents throughout this book.

FAQ

What is an AI agent?

An AI agent is a program that autonomously decides what actions to take and when to stop based on its current context and goals. In modern systems, this usually means an LLM-powered agent that can reason about a task, choose tools, observe results, and continue iterating until it has enough information to produce an answer.

Why does this book build AI agents from scratch instead of relying on frameworks?

The book builds agents from scratch because agent development is largely about debugging failures. When an agent gives a wrong answer, loops endlessly, or uses a tool incorrectly, you need to understand what happened internally. Building each component yourself helps you develop the mental model needed to troubleshoot agents, whether or not you later use frameworks like LangGraph, CrewAI, AutoGen, or the OpenAI Agents SDK.

What role does an LLM play in an AI agent?

The LLM acts as the agent’s decision-making core, or “brain.” It evaluates the current context, decides whether a tool is needed, chooses which tool to use, interprets tool results, and determines whether to continue or stop. Tools and loops extend the LLM’s capabilities beyond text generation.

What are the three essential elements of an LLM agent?

The three essential elements are the LLM, tools, and a loop. The LLM makes decisions, tools allow the agent to interact with external systems such as web search, code execution, databases, or APIs, and the loop lets the agent repeatedly reason, act, observe results, and continue until the task is complete.

How does the agent loop work?

The agent loop follows an iterative process. First, the LLM evaluates the current context and decides what to do next. If needed, it selects and calls a tool. The tool result is then added back into the context. Finally, the LLM decides whether it has enough information to answer or whether another iteration is needed.
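The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not the book's implementation: `fake_llm` is a hypothetical scripted stand-in for a real model call, and the `add` and `multiply` tools, the message format, and the `max_steps` guard are all assumptions made for the example.

```python
from typing import Callable

# Hypothetical tools: plain Python functions looked up by name.
def add(a: float, b: float) -> float:
    return a + b

def multiply(a: float, b: float) -> float:
    return a * b

TOOLS: dict[str, Callable[..., float]] = {"add": add, "multiply": multiply}

def fake_llm(context: list[dict]) -> dict:
    """Scripted stand-in for a model call (assumption for illustration).

    It computes (2 + 3) * 4 with two tool calls, then gives a final answer.
    """
    results = [m["content"] for m in context if m["role"] == "tool"]
    if len(results) == 0:
        return {"type": "tool_call", "name": "add", "args": {"a": 2, "b": 3}}
    if len(results) == 1:
        return {"type": "tool_call", "name": "multiply",
                "args": {"a": results[0], "b": 4}}
    return {"type": "final", "content": f"The result is {results[-1]}"}

def run_agent(task: str, llm=fake_llm, max_steps: int = 5) -> str:
    context = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        decision = llm(context)              # 1. evaluate context, decide next action
        if decision["type"] == "final":      # 4. enough information: stop
            return decision["content"]
        tool = TOOLS[decision["name"]]       # 2. select and call a tool
        result = tool(**decision["args"])
        context.append({"role": "tool", "content": result})  # 3. add result to context
    return "Stopped: step limit reached"     # guard against endless loops

print(run_agent("What is (2 + 3) * 4?"))
```

The step limit is one simple way to keep a confused model from looping forever; a real agent would likely also record each decision so failures can be inspected afterwards.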

What is the difference between a workflow and an agent?

A workflow has a developer-defined execution path. The developer decides the sequence of steps in advance, and the LLM performs specific tasks within that structure. An agent, by contrast, has an LLM-directed flow. The LLM dynamically decides which actions to take, which tools to use, and when the task is complete.

When should I use a workflow instead of an agent?

Use a workflow when the task is predictable, the steps can be predefined, and reliability, speed, and cost are important. Examples include classification, summarization, routing a query to a known handler, or processing structured data. Workflows are usually cheaper, faster, and easier to debug than agents.
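As a rough sketch of the routing example, the workflow below fixes the execution path in code: classify first, then dispatch to a known handler. The `classify` function uses keyword rules as a stand-in for an LLM classification step, and the category names and handlers are hypothetical.

```python
def classify(query: str) -> str:
    """Stand-in for an LLM classification step (keyword rules are an assumption)."""
    text = query.lower()
    if "refund" in text or "charge" in text:
        return "billing"
    if "error" in text or "crash" in text:
        return "technical"
    return "general"

# Hypothetical handlers; in a real system each might call an LLM with its own prompt.
def handle_billing(query: str) -> str:
    return f"[billing] {query}"

def handle_technical(query: str) -> str:
    return f"[technical] {query}"

def handle_general(query: str) -> str:
    return f"[general] {query}"

HANDLERS = {
    "billing": handle_billing,
    "technical": handle_technical,
    "general": handle_general,
}

def route(query: str) -> str:
    # The developer, not the model, decides the order of steps:
    # step 1 is always classification, step 2 is always dispatch.
    category = classify(query)
    return HANDLERS[category](query)

print(route("I was charged twice this month"))
```

Because the path never changes, every request costs the same number of steps, and a wrong answer can be traced to either the classifier or one handler.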

When is an agent the right solution?

An agent is appropriate when the task requires an LLM and the number or type of steps cannot be predicted in advance. Agents are useful for complex research, open-ended information gathering, multi-step reasoning, tool use, and tasks where the agent must adapt based on intermediate results. However, their higher cost, latency, and error risk must be justified by the value of the task.

What is GAIA, and why is it used in this book?

GAIA, or General AI Assistants, is a benchmark released by Meta and HuggingFace that contains question-answer tasks requiring multi-step reasoning, web searches, calculations, and information synthesis. The book uses GAIA because it provides clear answers for fast feedback, supports the observe-analyze-improve development cycle, and does not require specialized domain knowledge.
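Clear answers make automated checking straightforward. The sketch below shows one simplified way to compare an agent's answer with an expected answer; it is not GAIA's official scorer, and the normalization rules and function names are assumptions chosen for illustration.

```python
import re

def normalize(answer: str) -> str:
    """Lowercase, drop most punctuation, and collapse whitespace."""
    text = answer.strip().lower()
    text = re.sub(r"[^\w\s.\-]", "", text)  # keep word characters, spaces, '.', '-'
    text = re.sub(r"\s+", " ", text)
    return text.rstrip(".")

def is_correct(predicted: str, expected: str) -> bool:
    p, e = normalize(predicted), normalize(expected)
    try:
        # Compare numerically when both sides parse as numbers.
        return float(p) == float(e)
    except ValueError:
        return p == e

def accuracy(pairs: list[tuple[str, str]]) -> float:
    """Fraction of (predicted, expected) pairs judged correct."""
    return sum(is_correct(p, e) for p, e in pairs) / len(pairs)

print(is_correct("Paris.", "paris"))
print(accuracy([("4", "4"), ("5", "6")]))
```

A scorer like this gives the fast feedback the observe-analyze-improve cycle depends on: change the agent, rerun the tasks, and compare the accuracy.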

What is context engineering?

Context engineering is the discipline of providing the information an LLM needs at the right time and in the right form. It goes beyond prompt engineering by managing system prompts, user messages, conversation history, tool results, retrieved documents, memory, and other information in the model’s context. Good context engineering is critical because many agent failures happen when necessary information is missing, irrelevant, or buried in too much context.
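To make the idea of reducing context concrete, the sketch below trims a conversation to a budget while keeping the system prompt and the most recent messages. It is one possible approach under stated assumptions, not the book's method: token counts are approximated by whitespace-separated words, and the message format and function names are hypothetical.

```python
def count_tokens(text: str) -> int:
    # Rough approximation (assumption): one token per whitespace-separated word.
    return len(text.split())

def reduce_context(messages: list[dict], budget: int) -> list[dict]:
    """Keep system messages plus the newest messages that fit in the budget."""
    system = [m for m in messages if m["role"] == "system"]
    rest = [m for m in messages if m["role"] != "system"]
    used = sum(count_tokens(m["content"]) for m in system)

    kept = []
    for message in reversed(rest):           # walk from newest to oldest
        cost = count_tokens(message["content"])
        if used + cost > budget:
            break                             # older messages are dropped
        kept.append(message)
        used += cost
    return system + kept[::-1]                # restore chronological order

history = [
    {"role": "system", "content": "You are helpful"},
    {"role": "user", "content": "first question here"},
    {"role": "assistant", "content": "first answer"},
    {"role": "user", "content": "second question"},
]
for m in reduce_context(history, budget=7):
    print(m["role"], "-", m["content"])
```

Dropping the oldest messages is the bluntest form of reduction; summarizing them instead would preserve more information at the cost of an extra model call.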

Build an AI Agent (From Scratch) ebook for free
