1 Understanding reasoning models

Large language models are moving beyond pure pattern matching toward reasoning—the deliberate production of intermediate steps before an answer—to tackle complex, multi-step tasks in math, coding, and logic, as well as to power more capable agents. This chapter frames reasoning in practical engineering terms, emphasizing why and when it matters, how it benefits tool use and recovery from mistakes, and why a code-first approach helps demystify the methods. Readers start from an existing pre-trained model and learn to add, test, and iterate on reasoning behaviors, with math used as a convenient, auto-gradable domain rather than an end in itself.

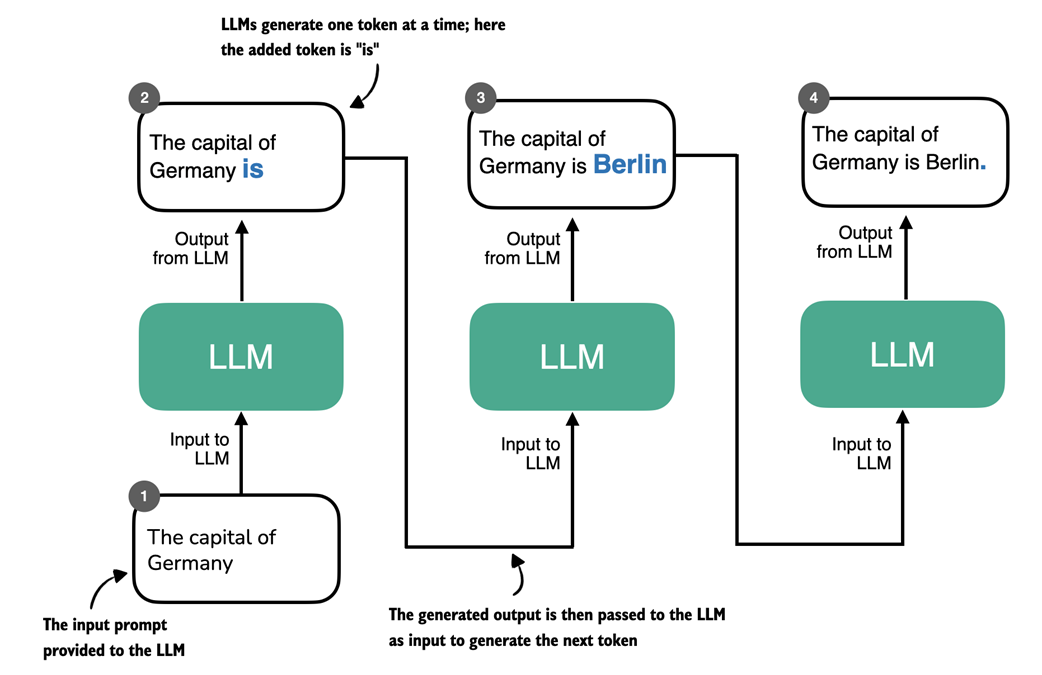

The chapter clarifies that chain-of-thought style reasoning—visible or hidden—differs fundamentally from deterministic, rule-based logic: LLMs generate tokens probabilistically, so their “steps” can look persuasive without guaranteeing logical soundness. After reviewing the conventional pipeline of pre-training (next-token prediction at scale) and post-training (instruction and preference tuning), it introduces three major paths to stronger reasoning: inference-time compute scaling (test-time techniques like multi-sampling and structured thinking without changing weights), reinforcement learning (updating weights using verifiable rewards for task success), and distillation (supervised transfer of reasoning patterns from stronger models). It contrasts pattern matching with logical reasoning via examples that expose how LLMs often simulate reasoning by leveraging statistical associations rather than explicitly tracking premises and contradictions.
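
As a rough illustration of the multi-sampling idea mentioned above, the sketch below draws several answers for the same prompt and keeps the most frequent one (majority voting). It is a minimal sketch under stated assumptions: `make_toy_sampler` is a hypothetical stand-in for a stochastic LLM call, and its answer strings and probabilities are invented for the example. No model weights are changed; only the amount of inference-time compute grows with `num_samples`.

```python
import random
from collections import Counter


def majority_vote(prompt, sampler, num_samples=5):
    """Sample several answers for one prompt and return the most common one."""
    answers = [sampler(prompt) for _ in range(num_samples)]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes


def make_toy_sampler(seed=0):
    """Hypothetical stand-in for a stochastic LLM call (assumption, not a real API)."""
    rng = random.Random(seed)

    def sampler(prompt):
        # Assumed toy behavior: answer "12" is the most likely single output,
        # but any individual sample may be wrong.
        return rng.choices(["12", "10", "14"], weights=[0.6, 0.2, 0.2])[0]

    return sampler


if __name__ == "__main__":
    answer, votes = majority_vote("What is 3 * 4?", make_toy_sampler(), num_samples=15)
    print(f"Selected answer: {answer} ({votes} of 15 votes)")
```

The sampler is passed in as an argument so that the voting logic can be tried with any answer source, including a real model call.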

Finally, the chapter explains why building reasoning models from scratch is valuable: the industry is converging on models that “know when to think,” but reasoning is not universally beneficial due to added cost, verbosity, latency, and potential overthinking. Understanding the mechanics enables better trade-offs, evaluations, and debugging of multi-step failures. The roadmap is straightforward: load a capable base model, establish rigorous automatic evaluations, apply inference-time enhancements, then use training-time methods such as reinforcement learning and distillation to turn the base model into a purpose-built reasoning model—always looping back to evaluation to verify real gains.
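
To make the idea of automatic evaluation in an auto-gradable domain concrete, here is a minimal sketch of a math grader. It assumes, purely for illustration, that the final answer is the last number appearing in the model's response; the function names are hypothetical and a real evaluation would need more careful answer extraction. A binary correctness check of this kind is also one simple form a verifiable reward could take.

```python
import re


def extract_final_answer(response):
    """Return the last number in the response as a string, or None if there is none."""
    # Drop thousands separators so "1,000" is read as "1000".
    matches = re.findall(r"-?\d+(?:\.\d+)?", response.replace(",", ""))
    return matches[-1] if matches else None


def is_correct(response, reference):
    """Compare the extracted answer with the reference answer numerically."""
    predicted = extract_final_answer(response)
    if predicted is None:
        return False
    return abs(float(predicted) - float(reference)) < 1e-9


def accuracy(responses, references):
    """Fraction of responses whose extracted answer matches the reference."""
    graded = [is_correct(r, ref) for r, ref in zip(responses, references)]
    return sum(graded) / len(graded)


if __name__ == "__main__":
    responses = [
        "3 * 4 = 12. The answer is 12.",
        "I think the answer is 10.",
        "I am not sure.",
    ]
    print(accuracy(responses, ["12", "12", "12"]))
```

One known limitation of this sketch: an expression such as `5-3` would be read as the numbers `5` and `-3`, so the extraction rule is only suitable for responses that end with a plainly stated result.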

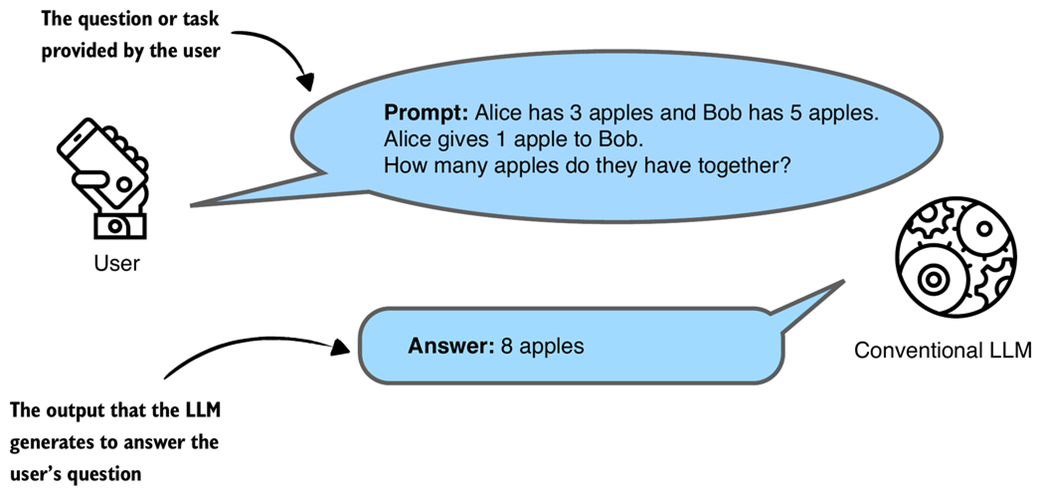

A simplified illustration of how a conventional, non-reasoning LLM might respond to a question with a short answer.

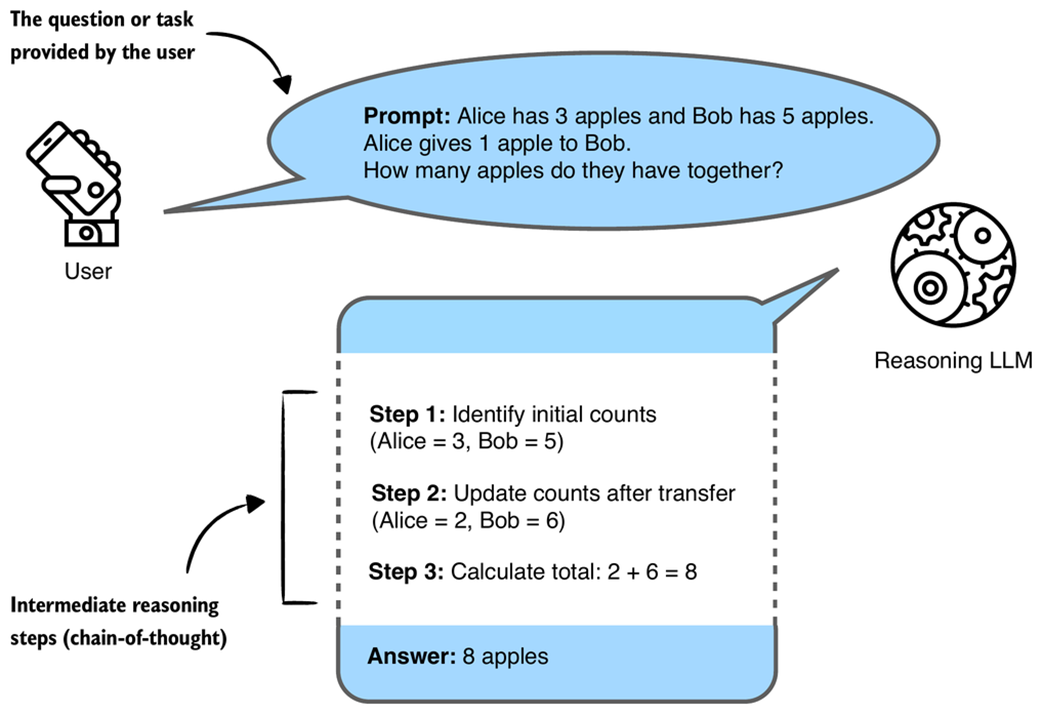

A simplified illustration of how a reasoning LLM might tackle a multi-step reasoning task using a chain-of-thought. Rather than just recalling a fact, the model combines several intermediate reasoning steps to arrive at the correct conclusion. The intermediate reasoning steps may or may not be shown to the user, depending on the implementation.

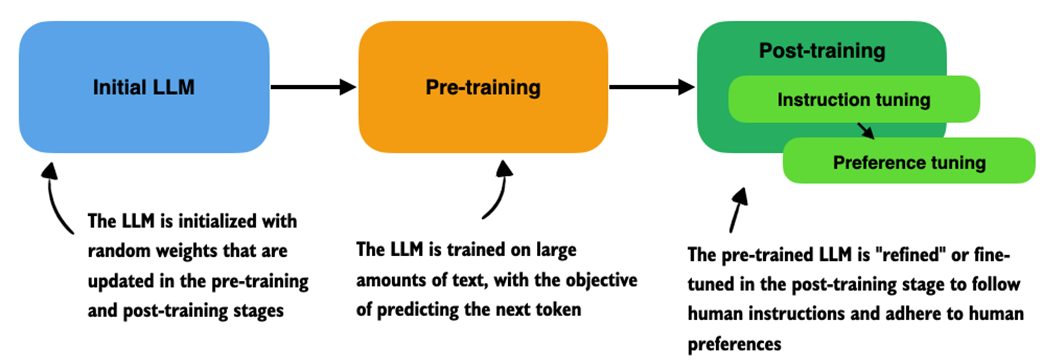

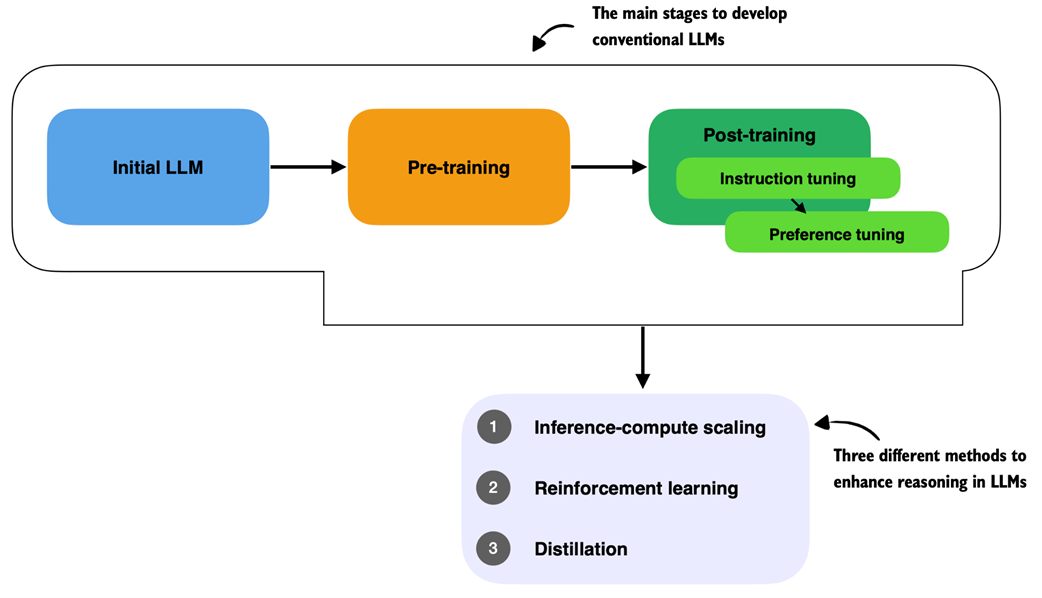

Overview of a typical LLM training pipeline. The process begins with an initial model initialized with random weights, followed by pre-training on large-scale text data to learn language patterns by predicting the next token. Post-training then refines the model through instruction fine-tuning and preference fine-tuning, which enables the LLM to follow human instructions better and align with human preferences.

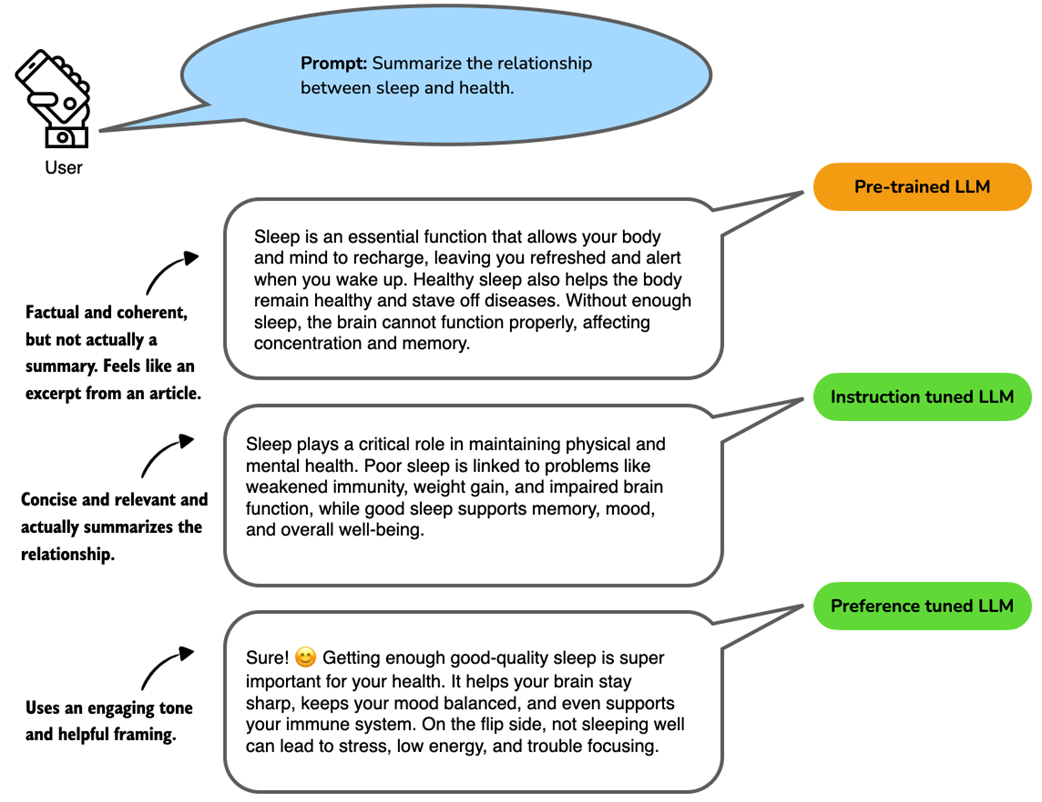

Example responses from a language model at different training stages. The prompt asks for a summary of the relationship between sleep and health. The pre-trained LLM produces a relevant but unfocused answer without directly following the instructions. The instruction-tuned LLM generates a concise and accurate summary aligned with the prompt. The preference-tuned LLM further improves the response by using a friendly tone and engaging language, which makes the answer more relatable and user-centered.

Three approaches commonly used to improve reasoning capabilities in LLMs. These methods (inference-time compute scaling, reinforcement learning, and distillation) are typically applied after the conventional training stages (initial model training, pre-training, and post-training with instruction and preference tuning), but reasoning techniques can also be applied to the pre-trained base model.

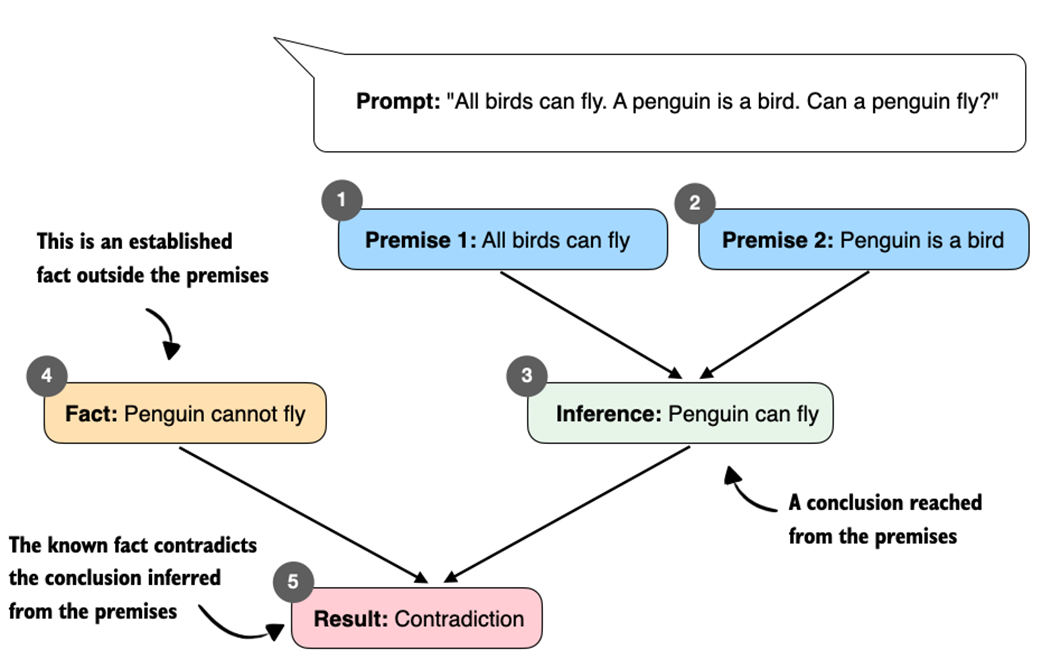

Contradictory premises lead to a logical inconsistency. From "All birds can fly" and "A penguin is a bird," we infer "Penguin can fly." This conclusion conflicts with the established fact "Penguin cannot fly," which results in a contradiction.
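
For contrast with probabilistic text generation, the following sketch shows how a deterministic, rule-based system could track the premises explicitly and detect the contradiction. The data layout (tuples of category, property, and entity) and the function names are assumptions made for this example only.

```python
# Premises from the example, encoded as explicit facts.
all_rules = {("bird", "can_fly")}         # "All birds can fly"
memberships = {("penguin", "bird")}       # "A penguin is a bird"
negated_facts = {("penguin", "can_fly")}  # "Penguin cannot fly"


def derive(all_rules, memberships):
    """Apply every 'all <category> have <property>' rule to each member of the category."""
    return {
        (entity, prop)
        for entity, category in memberships
        for rule_category, prop in all_rules
        if rule_category == category
    }


def contradictions(all_rules, memberships, negated_facts):
    """Return derived conclusions that are also asserted to be false."""
    return derive(all_rules, memberships) & negated_facts


if __name__ == "__main__":
    print(derive(all_rules, memberships))
    print(contradictions(all_rules, memberships, negated_facts))
```

Given the same premises, this procedure always reports the same conflict, which is the consistency guarantee that token-by-token generation does not provide.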

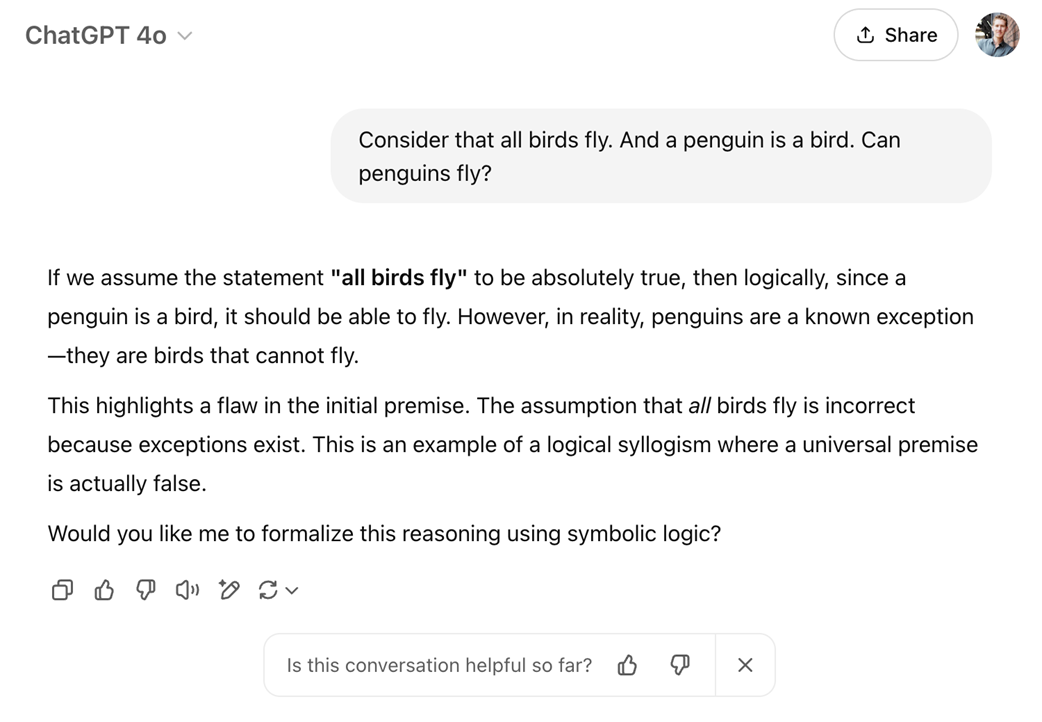

An illustrative example of how a language model (GPT-4o in ChatGPT) appears to "reason" about a contradictory premise.

Token-by-token generation in an LLM. At each step, the LLM takes the full sequence generated so far and predicts the next token, which may represent a word, subword, or punctuation mark depending on the tokenizer. The newly generated token is appended to the sequence and used as input for the next step. This iterative decoding process is used in both standard language models and reasoning-focused models.
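
The decoding loop described above can be sketched in a few lines. This is a toy illustration, not a real model: `next_token` is a hypothetical stand-in for an LLM forward pass, and its lookup table is invented. It receives the full sequence, as a real model would, although this toy version only inspects the last token.

```python
def next_token(sequence):
    """Hypothetical stand-in for an LLM forward pass over the full sequence."""
    # Invented lookup table; a real model would compute a probability
    # distribution over its vocabulary and pick or sample a token from it.
    table = {"The": "cat", "cat": "sat", "sat": "down", "down": "<eos>"}
    return table.get(sequence[-1], "<eos>")


def generate(prompt_tokens, max_new_tokens=10, eos="<eos>"):
    """Append one predicted token at a time until end-of-sequence or the token limit."""
    sequence = list(prompt_tokens)
    for _ in range(max_new_tokens):
        token = next_token(sequence)  # predict from everything generated so far
        if token == eos:
            break
        sequence.append(token)        # the new token becomes part of the next input
    return sequence


if __name__ == "__main__":
    print(generate(["The"]))
```

A reasoning model would use the same loop; the difference lies in which tokens it produces (intermediate steps before the final answer), not in the decoding mechanism.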

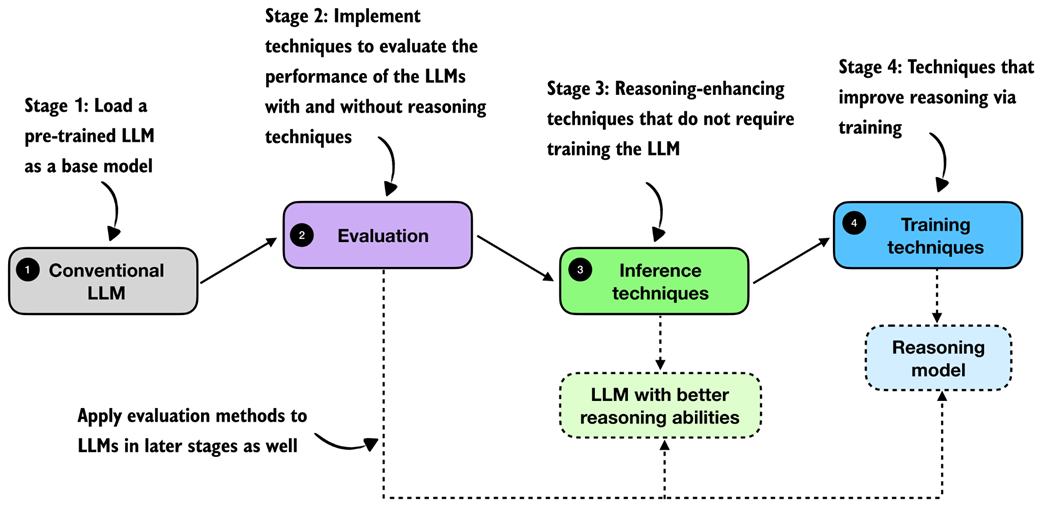

A high-level roadmap of what we build in this book. We start with a conventional LLM, add evaluation methods so that we can measure progress, and then explore two broad families of reasoning improvements, namely, inference techniques and training techniques.

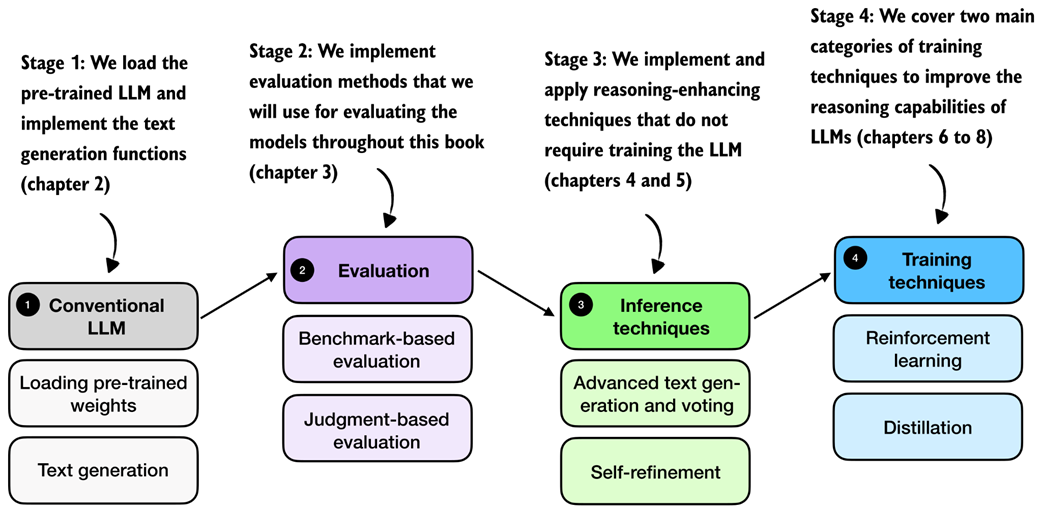

A detailed roadmap of the chapter-level substeps. After loading the base model, we cover benchmark-based and judgment-based evaluation, then inference-time methods such as advanced text generation and voting plus self-refinement, and finally training-time methods based on reinforcement learning and distillation.

Summary

- Conventional LLM training occurs in several stages:
  - Pre-training, where the model learns language patterns from vast amounts of text.
  - Instruction fine-tuning, which improves the model's responses to user prompts.
  - Preference tuning, which aligns model outputs with human preferences.

- Reasoning methods are applied on top of a conventional LLM.

- Reasoning in LLMs refers to improving a model so that it explicitly generates intermediate steps (chain-of-thought) before producing a final answer, which often increases accuracy on multi-step tasks.

- Reasoning in LLMs differs from rule-based reasoning and likely also from human reasoning; the current consensus is that it relies on statistical pattern matching.

- Pattern matching in LLMs relies purely on statistical associations learned from data, which enables fluent text generation but lacks explicit logical inference.

- Improving reasoning in LLMs can be achieved through:
  - Inference-time compute scaling, enhancing reasoning without retraining (e.g., chain-of-thought prompting).
  - Reinforcement learning, training models explicitly with reward signals.
  - Supervised fine-tuning and distillation, using examples from stronger reasoning models.

- Building reasoning models from scratch provides practical insights into LLM capabilities, limitations, and computational trade-offs.

FAQ

What does “reasoning” mean for an LLM in this book?

Reasoning means the model generates intermediate steps before the final answer. Those steps can be visible (step-by-step explanations, often called chain-of-thought) or hidden (e.g., inside special tags). The key idea is to spend tokens on intermediate problem solving instead of jumping directly to the conclusion.

How is a reasoning model different from a conventional LLM?

A reasoning model is an LLM that has been prompted or trained to produce intermediate steps, which often boosts accuracy on multi-step tasks (math, coding, logic). A conventional LLM may output a short answer without showing how it arrived there. Reasoning in LLMs is probabilistic and exists on a spectrum; some non-reasoning models can still simulate reasoning via patterns learned at scale.

What is Chain-of-Thought (CoT), and does the model need to show its “thoughts”?

CoT is the style of generating intermediate, step-by-step reasoning. These steps can be shown to the user or kept hidden; either way, the model is encouraged to think through the problem. The term is used in a practical engineering sense and does not imply human-like thinking.

How does LLM “reasoning” differ from symbolic logic or human reasoning?

Symbolic logic is deterministic, rule-based, and guarantees consistency given the same premises. LLMs generate text autoregressively, one token at a time, based on learned statistical patterns, so their steps can look convincing but are not guaranteed to be logically sound. Humans can deliberately apply logic or reason over a world model; LLMs rely on pattern prediction.

What are the standard stages of LLM training?

- Pre-training: learn next-token prediction on massive text corpora, yielding fluent, capable base models with some emergent skills.
- Post-training: supervised fine-tuning (instruction tuning) to follow instructions, and preference tuning (e.g., RLHF) to align outputs with human preferences and style. A separate chat layer handles multi-turn interaction.

Build a Reasoning Model (From Scratch) ebook for free
