1 What are LLM Agents and Multi-Agent Systems?

Large Language Models can express intentions, plans, and tool-use requests in text, but they cannot directly carry out actions on their own. LLM agents address this gap by wrapping a backbone LLM in an orchestration system that executes tool calls, manages intermediate steps, and returns results for the model to synthesize. Multi-agent systems extend this idea by coordinating multiple specialized LLM agents so they can collaborate on larger tasks that benefit from decomposition, specialization, and combined outputs.

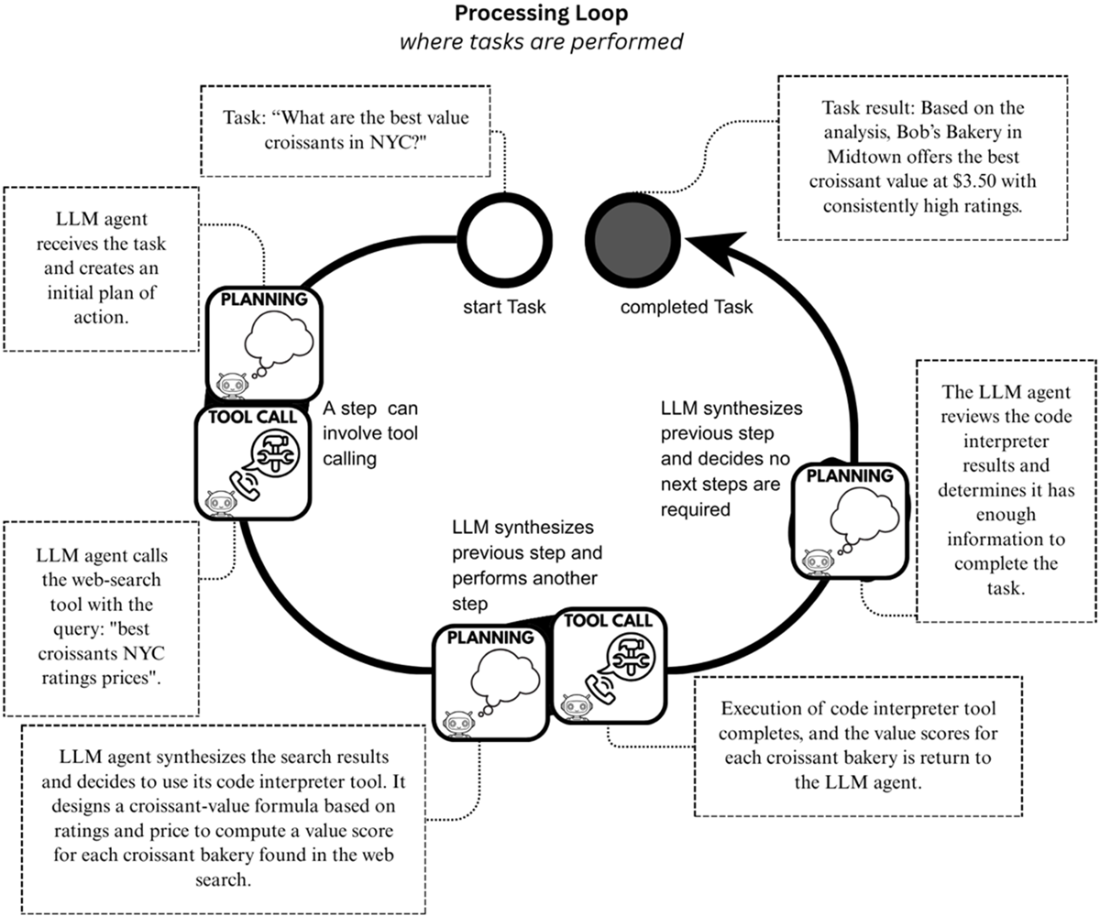

The chapter explains that effective LLM agents depend heavily on planning and tool calling. A typical agent operates through a processing loop: it receives a task, forms or updates a plan, selects and invokes tools, observes results, and repeats until the task is complete or a stopping condition is reached. This loop can produce useful trajectories for debugging and improvement. The chapter also surveys common applications such as report generation, web and deep search, agentic retrieval-augmented generation, coding agents, and computer-use agents, while noting that these systems require monitoring because errors, hallucinations, and poor plans can cascade through later steps.
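The processing loop described above can be sketched in a few lines of Python. This is a minimal illustration, not the book's implementation: `fake_llm`, `TOOLS`, and `run_agent` are hypothetical names, and the "LLM" is a scripted stand-in so the example runs without a model. It shows the cycle of deciding on an action, invoking a tool, observing the result, and stopping on a final answer or a step limit, while recording the trajectory for later inspection.

```python
from typing import Callable

# Hypothetical tool registry: plain Python functions keyed by name.
TOOLS: dict[str, Callable[[int, int], int]] = {
    "add": lambda a, b: a + b,
    "multiply": lambda a, b: a * b,
}


def fake_llm(task: str, observations: list[int]) -> dict:
    """Scripted stand-in for a backbone LLM (an assumption for this sketch).

    A real model would express the next action as text; here the decision
    depends only on how many tool results have been observed so far.
    """
    if not observations:
        return {"type": "tool_call", "tool": "add", "args": (2, 3)}
    if len(observations) == 1:
        return {"type": "tool_call", "tool": "multiply", "args": (observations[0], 4)}
    return {"type": "final", "answer": f"Result: {observations[-1]}"}


def run_agent(task: str, max_steps: int = 5) -> tuple[str, list[dict]]:
    """Run the loop until a final answer or the step limit is reached."""
    observations: list[int] = []
    trajectory: list[dict] = []  # every action taken, useful for debugging
    for _ in range(max_steps):
        action = fake_llm(task, observations)       # plan / choose next action
        trajectory.append(action)
        if action["type"] == "final":               # task complete
            return action["answer"], trajectory
        result = TOOLS[action["tool"]](*action["args"])  # execute the tool call
        observations.append(result)                 # observe the result
    return "Stopped: step limit reached", trajectory  # stopping condition


answer, trajectory = run_agent("Compute (2 + 3) * 4")
```

The step limit matters in practice: without it, a model that never emits a final answer would keep the loop running indefinitely.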

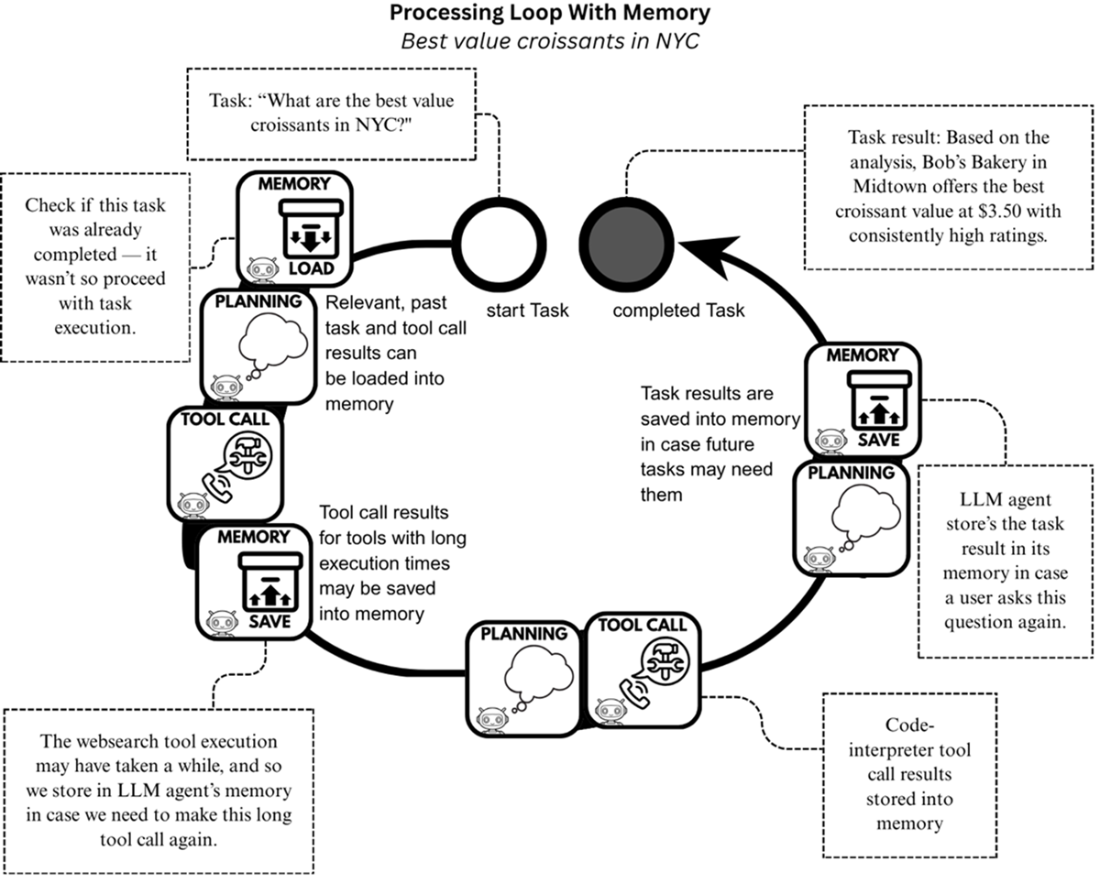

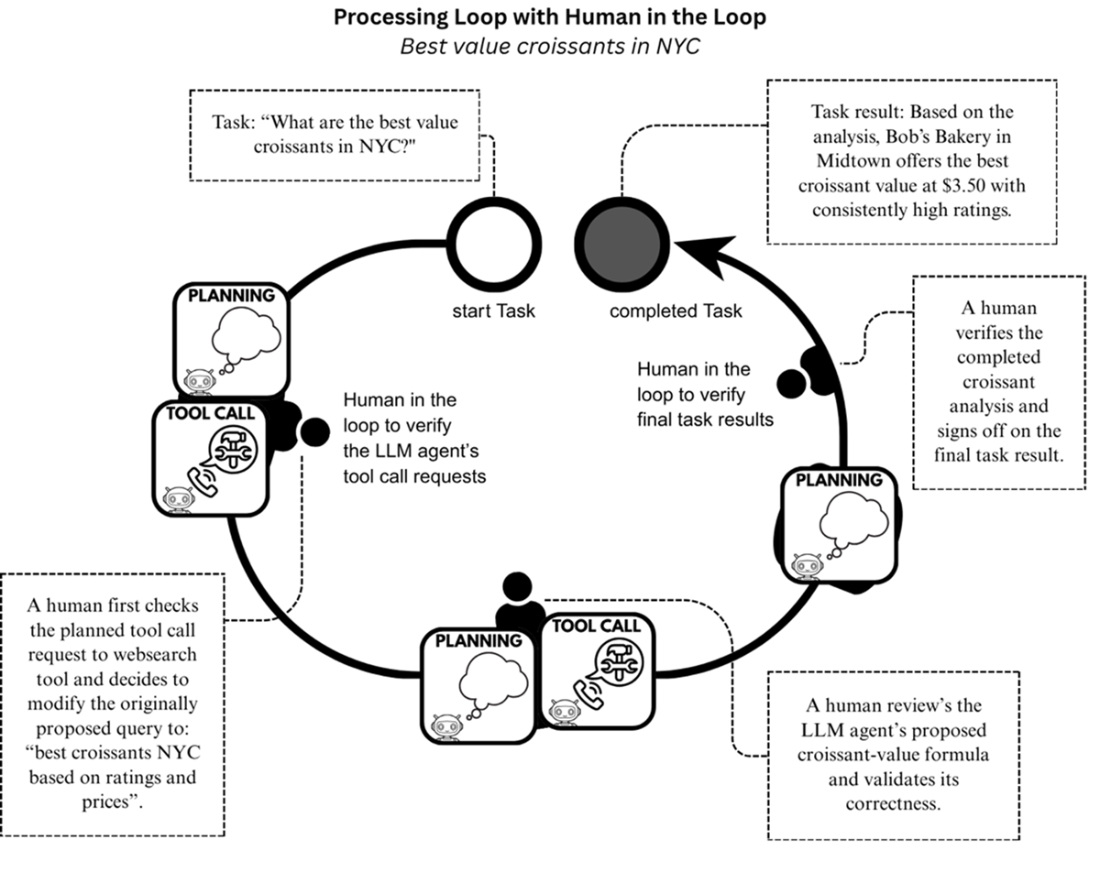

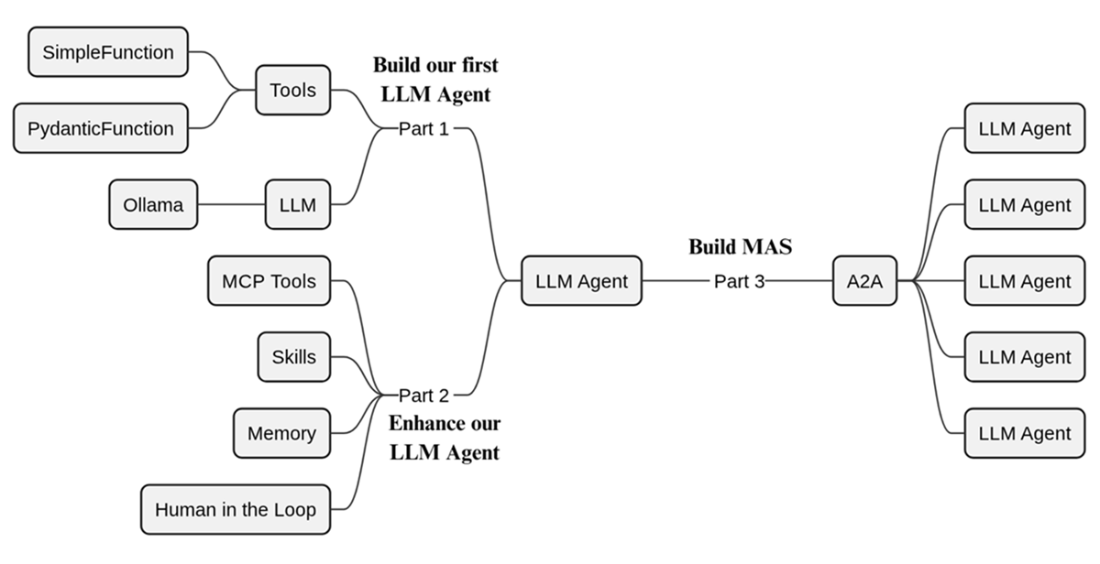

The chapter also introduces important enhancements and ecosystem standards. Memory allows agents to store and reuse information from past executions, while human-in-the-loop designs let people review plans, approve actions, validate outputs, or redirect failed attempts at the cost of slower execution. Protocols such as MCP, Agent Skills, and A2A help standardize tool access, reusable workflows, and agent-to-agent communication. Finally, the book’s roadmap is framed around building an educational LLM agent framework from scratch: first implementing tools, LLM interfaces, and a core agent loop; then adding MCP, skills, memory, and human oversight; and finally assembling multiple agents into a coordinated multi-agent system.

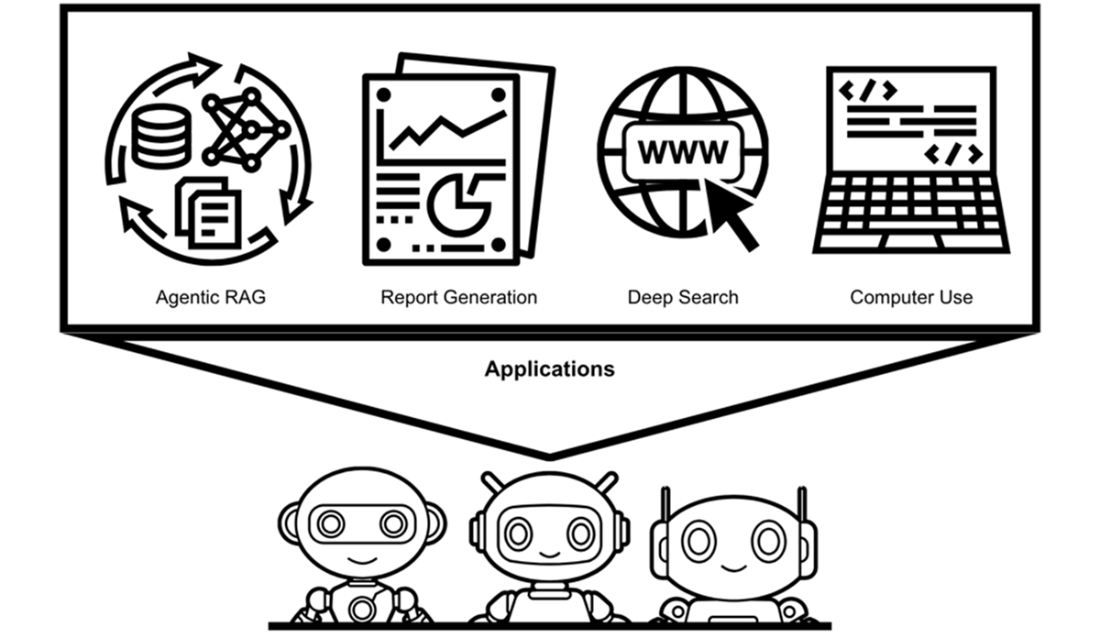

The applications for LLM agents are many, including agentic RAG, report generation, deep search and computer use, all of which can benefit from MAS.

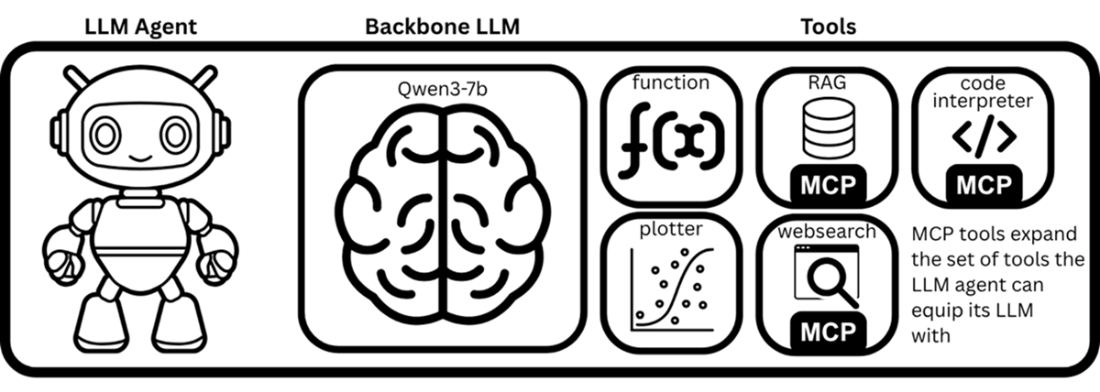

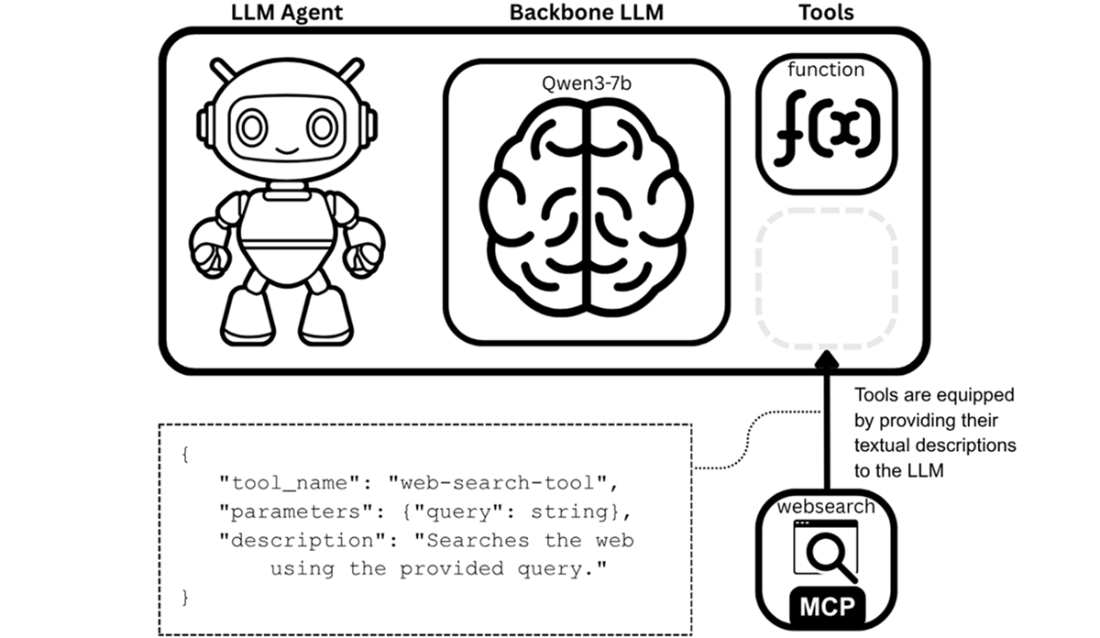

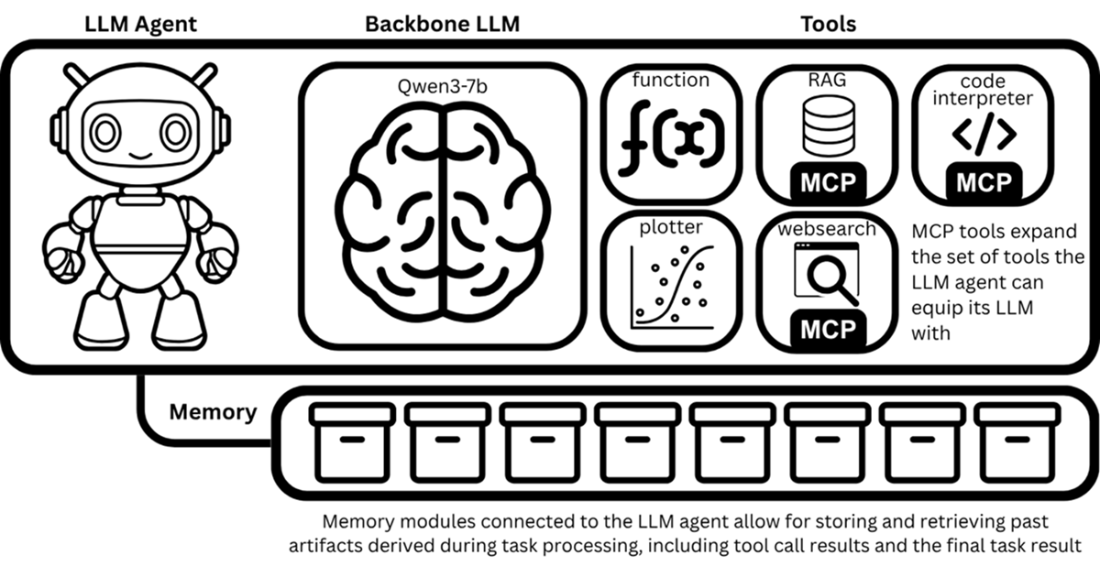

An LLM agent consists of a backbone LLM and the tools it is equipped with.

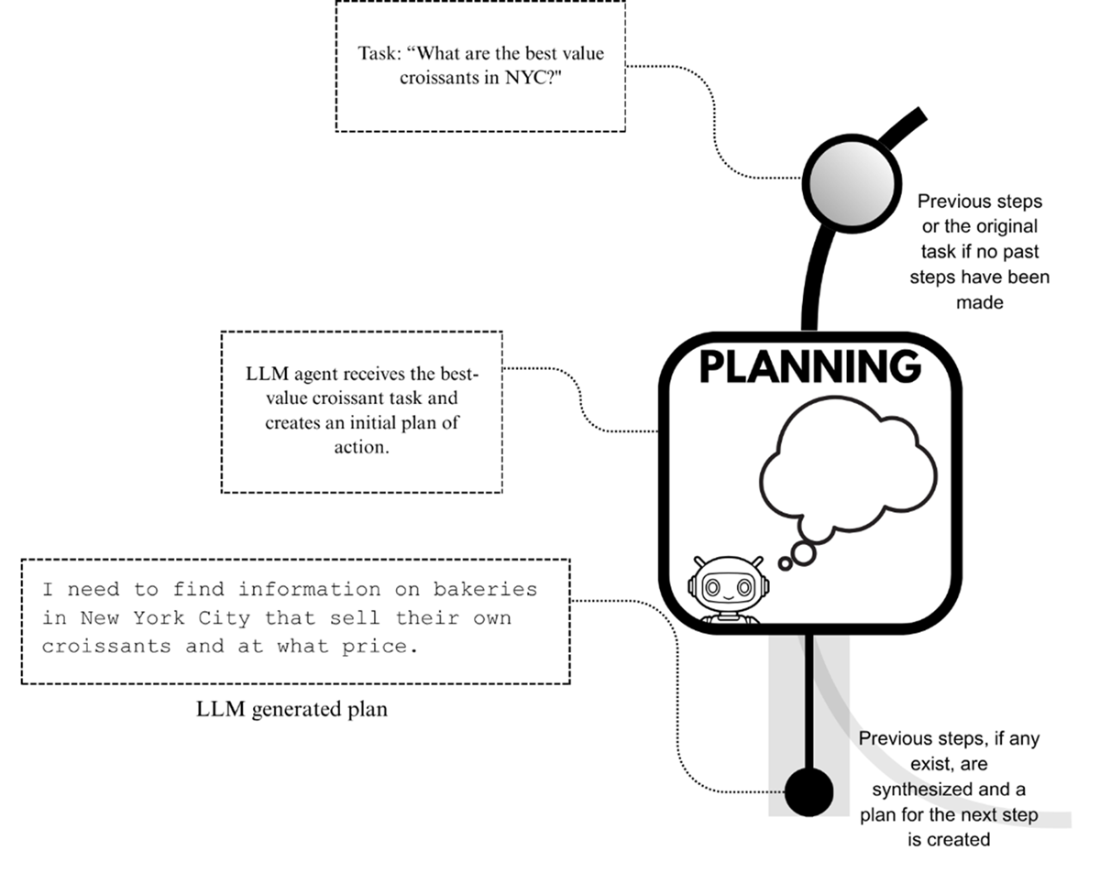

LLM agents utilize the planning capability of backbone LLMs to formulate initial plans for tasks, as well as to adapt current plans based on the results of past steps or actions taken towards task completion.

An illustration of the tool-equipping process: a textual description of each tool, containing its name, description, and parameters, is provided to the LLM agent.
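As a rough sketch of what such a textual description might look like, the example below describes one tool with a name, a description, and JSON-Schema-style parameters, then renders it into prompt text. The tool `get_weather`, the spec layout, and `render_tool_prompt` are all assumptions made for illustration; the book's framework may represent tools differently.

```python
import json


def get_weather(city: str) -> str:
    """Hypothetical tool: returns a canned weather report."""
    return f"Sunny in {city}"


# Assumed layout: name, description, and parameters in JSON-Schema style.
weather_tool_spec = {
    "name": "get_weather",
    "description": "Return the current weather for a city.",
    "parameters": {
        "type": "object",
        "properties": {"city": {"type": "string", "description": "City name"}},
        "required": ["city"],
    },
}


def render_tool_prompt(specs: list[dict]) -> str:
    """Turn tool specs into text that can be placed in the LLM's context."""
    lines = ["You can call the following tools:"]
    for spec in specs:
        lines.append(json.dumps(spec))  # one JSON description per line
    return "\n".join(lines)


prompt = render_tool_prompt([weather_tool_spec])
```

Note that the model only ever sees the description, never the function body, so the description has to carry everything the model needs to decide when and how to call the tool.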

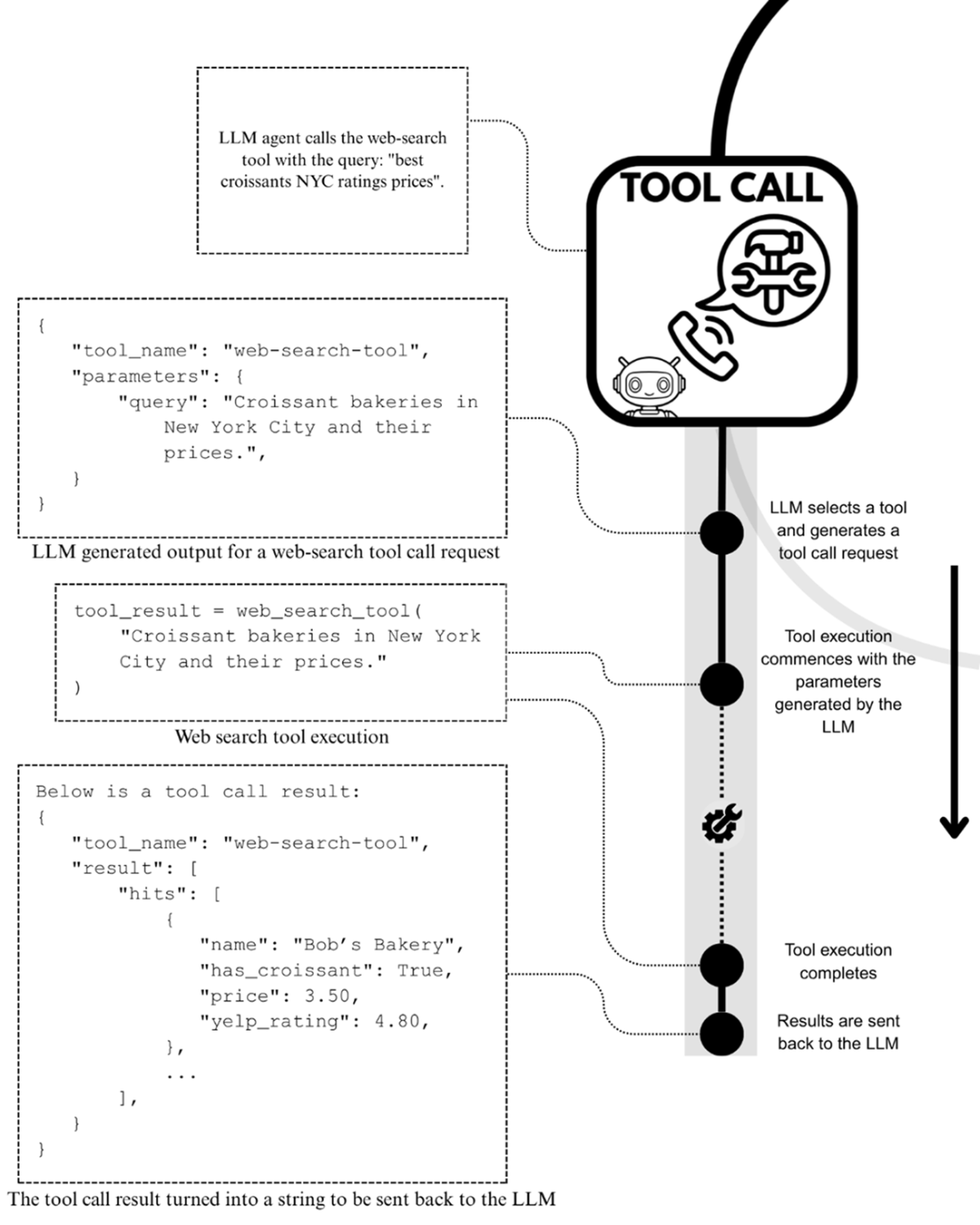

The tool-calling process, where any equipped tool can be used.

A mental model of an LLM agent performing a task through its processing loop, where tool calling and planning are used repeatedly. The task is executed through a series of sub-steps, a typical approach for performing tasks.

An LLM agent with access to memory modules, where it can store key information from task executions and load it back into its context for future tasks.

A mental model of the LLM agent processing loop that has memory modules for saving and loading important information obtained during task execution.
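One simple way to picture saving and loading is a list of text notes that are filtered by word overlap with the new task. The sketch below is an assumption-heavy toy: `SimpleMemory`, `build_context`, and the keyword-matching retrieval are hypothetical, and real memory modules often use embeddings or a database instead.

```python
from dataclasses import dataclass, field


@dataclass
class SimpleMemory:
    """Hypothetical in-process memory: a list of short text notes."""

    notes: list[str] = field(default_factory=list)

    def save(self, note: str) -> None:
        """Store a piece of information learned during a task."""
        self.notes.append(note)

    def load(self, query: str, limit: int = 3) -> list[str]:
        """Return up to `limit` notes sharing at least one word with the query."""
        query_words = set(query.lower().split())
        matches = [n for n in self.notes if query_words & set(n.lower().split())]
        return matches[:limit]


def build_context(task: str, memory: SimpleMemory) -> str:
    """Prepend recalled notes to the task before it is sent to the LLM."""
    recalled = memory.load(task)
    if not recalled:
        return f"Task: {task}"
    header = "Relevant notes from past tasks:\n" + "\n".join(f"- {n}" for n in recalled)
    return f"{header}\n\nTask: {task}"


memory = SimpleMemory()
memory.save("The sales report lives in reports/q3.csv")
memory.save("User prefers metric units")
context = build_context("Summarize the sales report", memory)
```

Here only the first note is recalled, because the second shares no words with the task.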

An LLM agent processing loop with access to human operators. The processing loop is effectively paused each time a human operator is required to provide input.
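The pause for human input can be modeled as an approval callback that is consulted before a tool call runs. This is a minimal sketch under stated assumptions: `run_with_approval`, `delete_file`, and `console_approver` are hypothetical names, and the tool only pretends to have a side effect.

```python
from typing import Callable


def delete_file(path: str) -> str:
    """Hypothetical tool with a side effect; here it only pretends to delete."""
    return f"deleted {path}"


def run_with_approval(
    tool: Callable[..., str],
    args: dict,
    approve: Callable[[str, dict], bool],
) -> str:
    """Pause before a tool call and let a human approve or reject it."""
    if not approve(tool.__name__, args):
        return f"{tool.__name__} rejected by operator"
    return tool(**args)


def console_approver(tool_name: str, args: dict) -> bool:
    """Interactive approver: blocks until the operator answers on the console."""
    reply = input(f"Run {tool_name} with {args}? [y/N] ")
    return reply.strip().lower() == "y"


# Scripted approvers stand in for a human so the example runs unattended.
approved = run_with_approval(delete_file, {"path": "tmp.txt"}, lambda name, args: True)
rejected = run_with_approval(delete_file, {"path": "tmp.txt"}, lambda name, args: False)
```

Passing `console_approver` instead of a lambda would block the loop on `input()`, which is the slower-but-safer trade-off that human oversight introduces.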

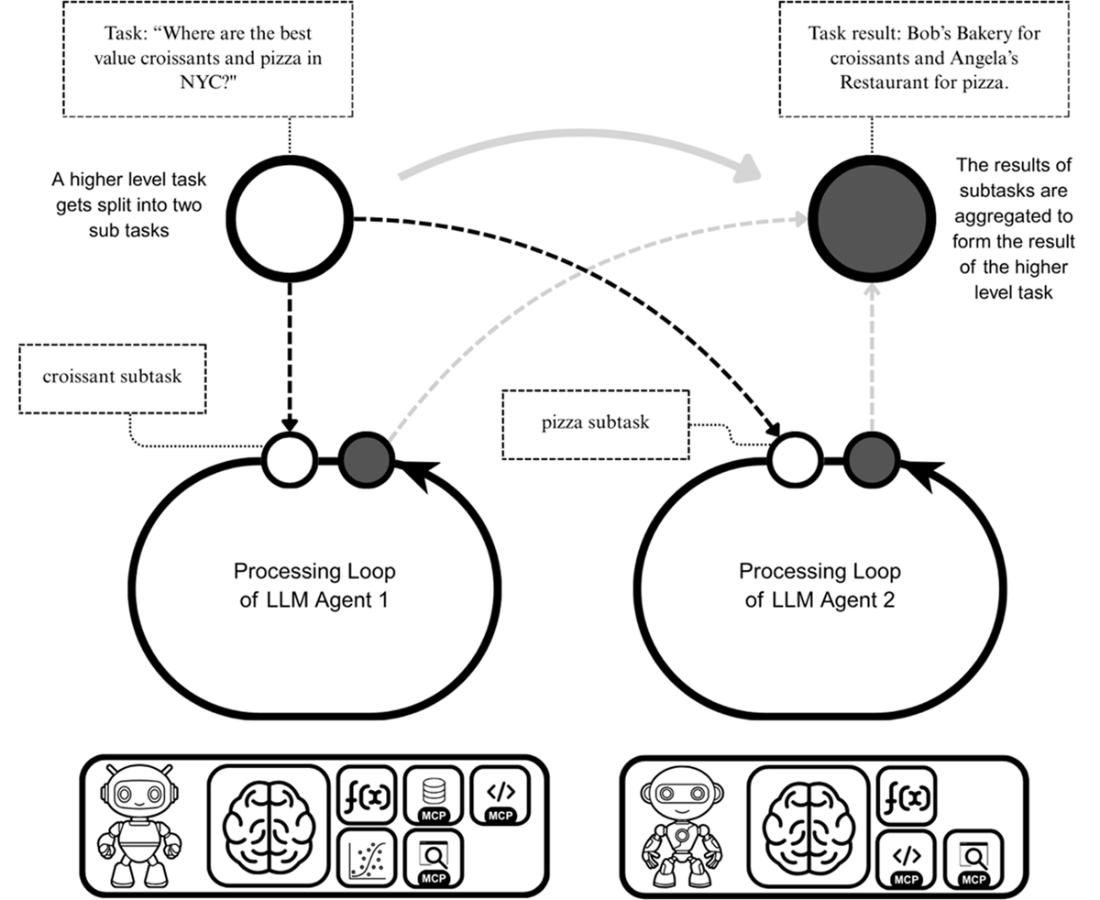

Multiple LLM agents collaborating to complete an overarching task. The outcomes of each LLM agent’s processing loop are combined to form the overall task result.
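The idea of combining outcomes can be sketched with agents reduced to plain functions. Everything here is a simplifying assumption: `research_agent`, `writing_agent`, and `run_multi_agent` are hypothetical, each "agent" returns a canned string rather than running its own processing loop, and the combination step is simple concatenation.

```python
from typing import Callable

# In this sketch an agent is just a function from a subtask to an outcome.
Agent = Callable[[str], str]


def research_agent(subtask: str) -> str:
    """Stand-in for a specialized agent that gathers information."""
    return f"[research] notes on {subtask}"


def writing_agent(subtask: str) -> str:
    """Stand-in for a specialized agent that drafts text."""
    return f"[writing] draft of {subtask}"


def run_multi_agent(assignments: list[tuple[str, Agent]]) -> str:
    """Run each subtask with its assigned agent and combine the outcomes."""
    outcomes = [agent(subtask) for subtask, agent in assignments]
    return "\n".join(outcomes)


report = run_multi_agent(
    [
        ("battery market trends", research_agent),
        ("executive summary", writing_agent),
    ]
)
```

A real system would also need to decide how the overarching task is decomposed and how conflicting outcomes are reconciled, which this sketch leaves out.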

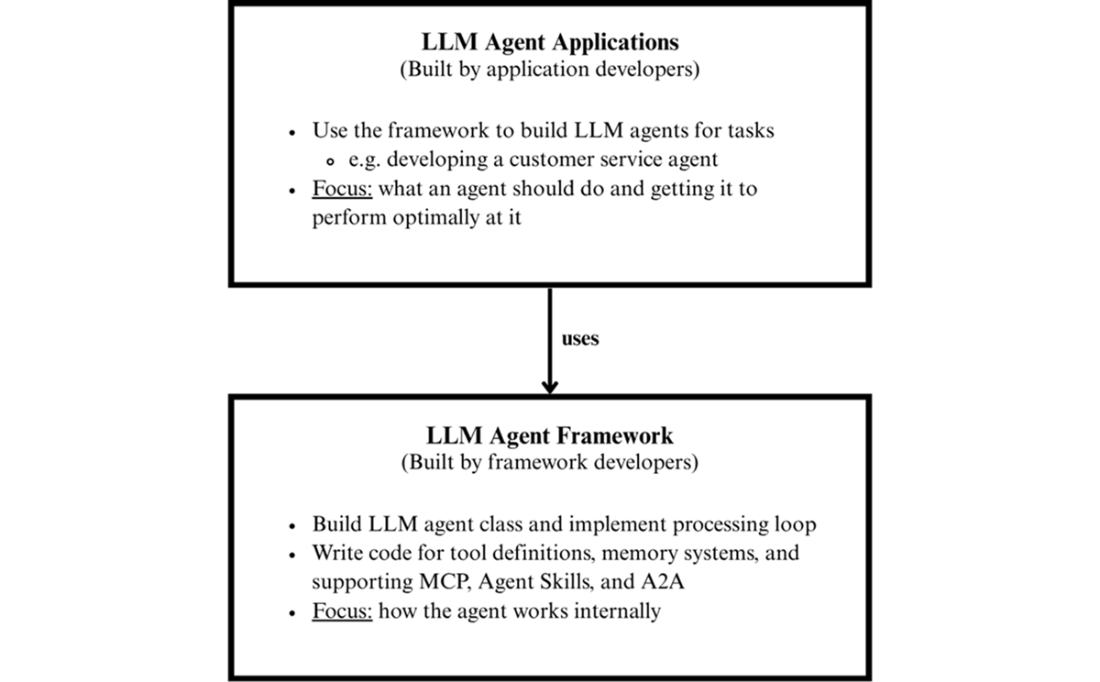

The difference between LLM agent framework developers and application developers

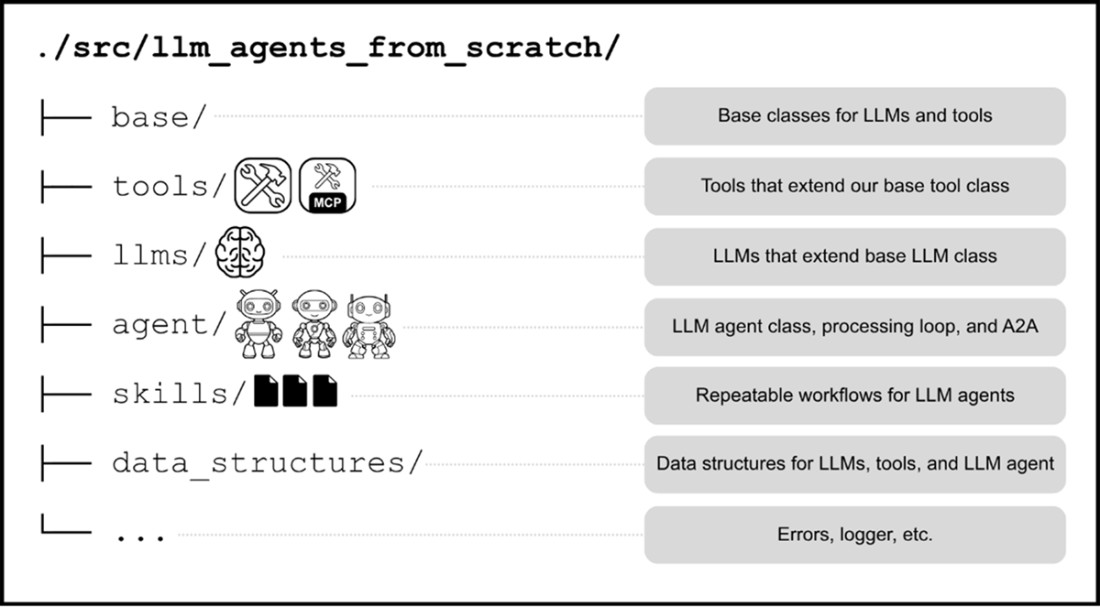

A first look at the llm-agents-from-scratch framework that we’ll build together.

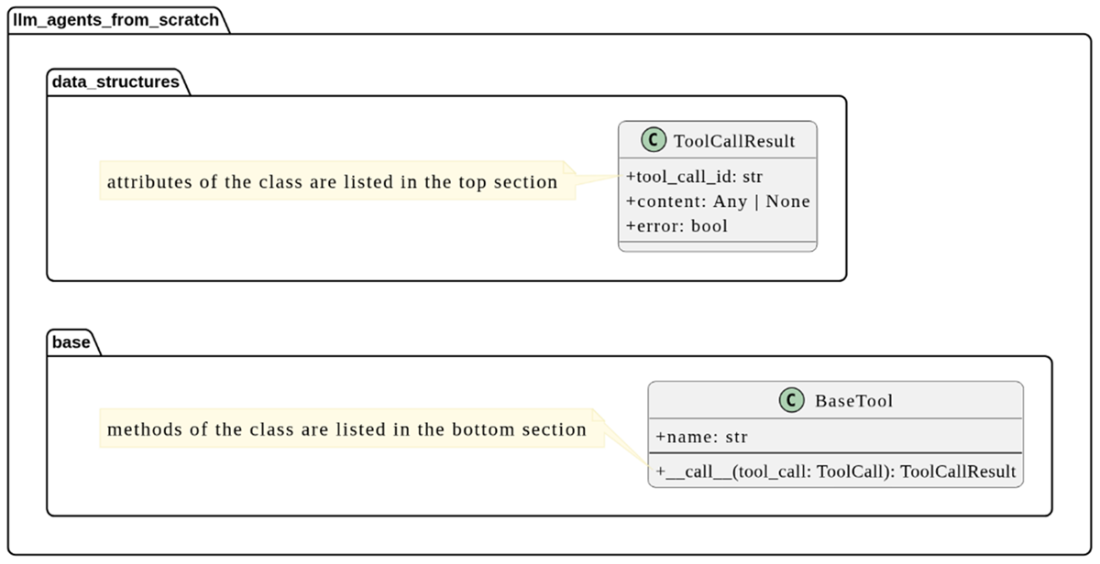

A simple UML class diagram that shows two classes from the llm-agents-from-scratch framework. The BaseTool class lives in the base module, while the ToolCallResult lives in the data_structures module. The attributes and methods of both classes are indicated in their respective class diagrams and the relation between them is also described.

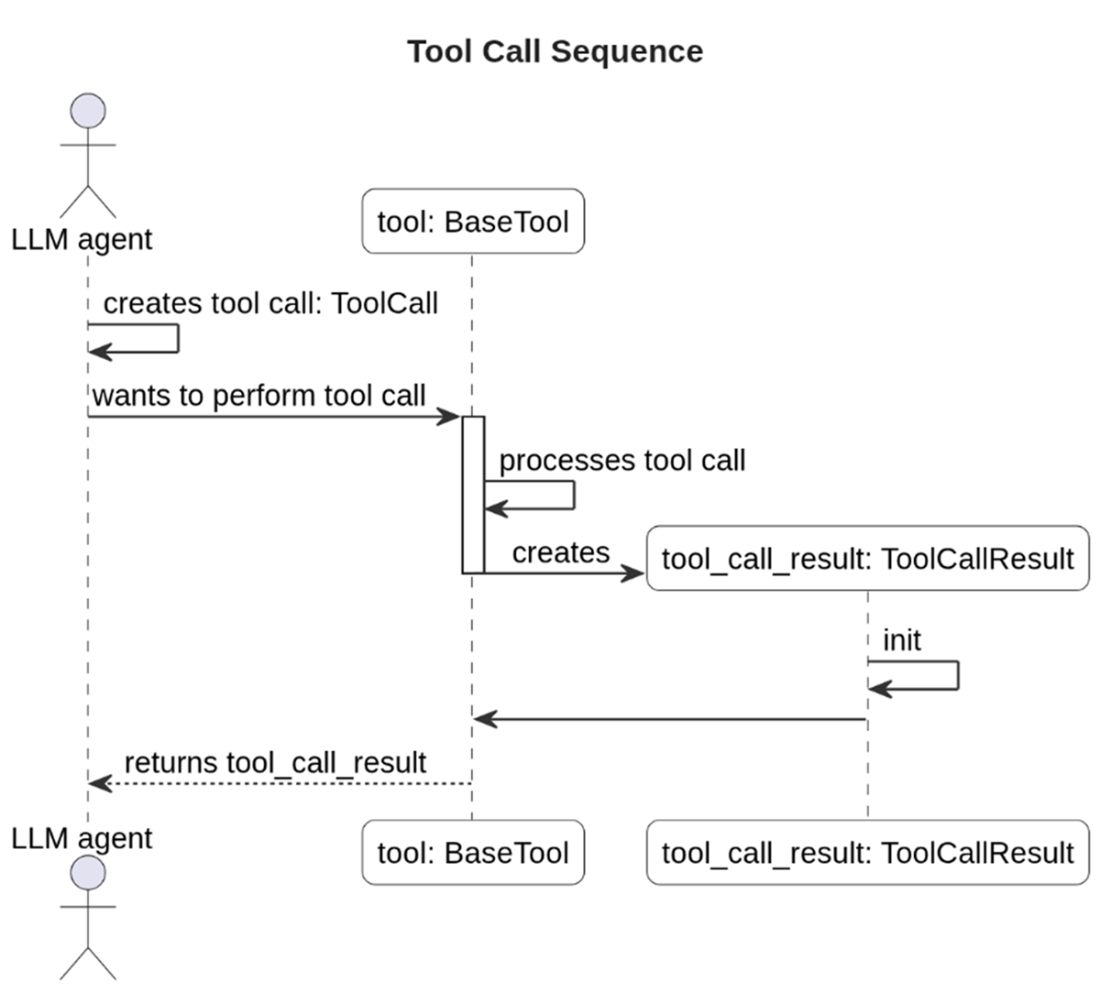

A UML sequence diagram that illustrates the flow of a tool call. First, an LLM agent prepares a ToolCall object and invokes the BaseTool, which initiates the processing of the tool call. Once processing completes, the BaseTool constructs a ToolCallResult, which is then sent back to the LLM agent.
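The flow in the two diagrams can be approximated in Python as follows. The class names `BaseTool`, `ToolCall`, and `ToolCallResult` come from the text, but every attribute and method signature below is an assumption made for illustration (the diagrams themselves are not reproduced here), and `AddTool` is a hypothetical concrete tool.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import Any


@dataclass
class ToolCall:
    """A request to run a tool (fields are assumed, not taken from the book)."""

    tool_name: str
    arguments: dict[str, Any]


@dataclass
class ToolCallResult:
    """The outcome handed back to the agent (fields are assumed)."""

    tool_name: str
    content: Any
    error: bool = False


class BaseTool(ABC):
    """Interface every tool implements in this sketch."""

    name: str
    description: str

    @abstractmethod
    def __call__(self, tool_call: ToolCall) -> ToolCallResult:
        """Process the tool call and wrap the outcome in a ToolCallResult."""


class AddTool(BaseTool):
    """Hypothetical concrete tool that adds two numbers."""

    name = "add"
    description = "Add two numbers."

    def __call__(self, tool_call: ToolCall) -> ToolCallResult:
        try:
            total = tool_call.arguments["a"] + tool_call.arguments["b"]
        except (KeyError, TypeError) as exc:
            # Report failures as data so the agent can react to them.
            return ToolCallResult(self.name, f"bad arguments: {exc!r}", error=True)
        return ToolCallResult(self.name, total)


# The agent prepares a ToolCall, invokes the tool, and receives a result.
result = AddTool()(ToolCall("add", {"a": 2, "b": 3}))
```

Returning errors inside the result, rather than raising, is one possible design choice: it lets the backbone LLM see what went wrong and adjust its next step.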

The build plan for our llm-agents-from-scratch framework

Summary

- LLMs have become powerful text generators, applied successfully to tasks like text summarization, question answering, and text classification. Their critical limitation is that they cannot act; they can only express an intent to act (such as making a tool call) through text. LLM agents supply the ability to carry out those intended actions.

- Applications for LLM agents are many, such as report generation, deep research, computer use and coding.

- With MAS, individual LLM agents collaborate to collectively perform tasks.

- Many applications for LLM agents can further benefit from MAS. In principle, MAS excel when complex tasks can be decomposed into smaller subtasks, where specialized LLM agents outperform general-purpose LLM agents.

- LLM agents are systems, composed of an LLM and tools, that can act autonomously to perform tasks.

- LLM agents use a processing loop to execute tasks. Tool calling and planning capabilities are key components of that processing loop.

- Protocols like MCP, Agent Skills, and A2A have helped to create a vibrant LLM agent ecosystem that is powering the growth of LLM agents and their applications.

- MCP is a protocol developed by Anthropic that has paved the way for LLM agents to use third-party provided tools.

- Agent Skills is another protocol, originating from Anthropic, that standardizes how reusable workflows are documented and used by LLM agents.

- A2A is a protocol developed by Google to standardize agent-to-agent interactions in MAS.

- In this book, we’ll primarily be framework developers, building our own LLM agent framework to learn the internals of LLM agents more deeply.

- Supplementary materials in the form of additional example notebooks and capstone projects are provided in the book’s GitHub repository to deepen your learning.

Build a Multi-Agent System (from Scratch) ebook for free
