1 The world of the Apache Iceberg Lakehouse

Modern data platforms have been shaped by a long search for the right balance of performance, cost, flexibility, and governance. The data lakehouse emerged to unify the strengths of data warehouses and data lakes while preserving openness and interoperability. Apache Iceberg sits at the center of this shift as an open table format that lets organizations treat files in distributed storage like reliable, high-performance database tables, enabling multiple engines to work from a single, governed copy of data.

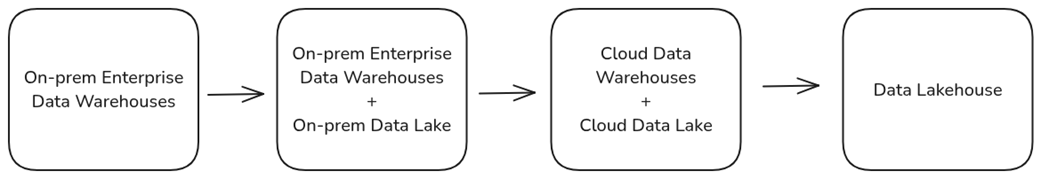

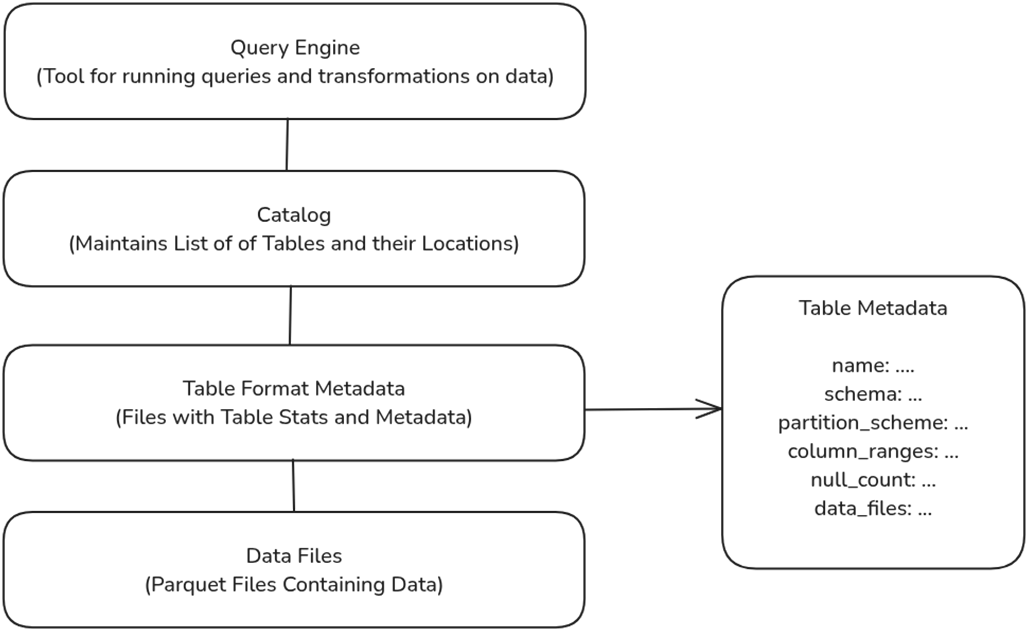

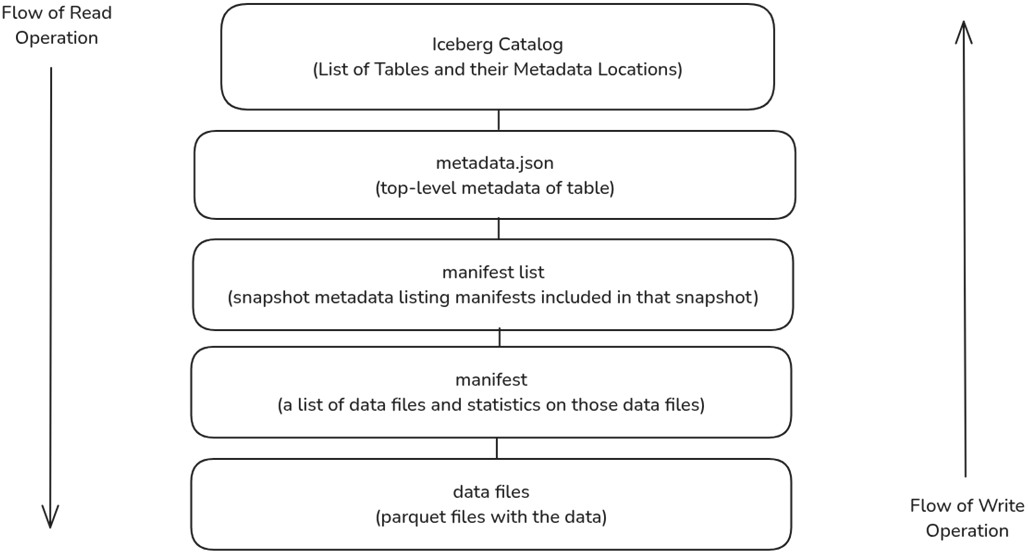

Historically, analytics moved off OLTP systems and onto enterprise and cloud data warehouses, but these approaches introduced cost, rigidity, data movement, and lock-in. Hadoop-era data lakes lowered storage costs and accepted diverse data, yet often suffered from weak consistency, slow queries, and governance gaps that led to “data swamps.” Iceberg, created to overcome these limitations, brings warehouse-grade capabilities to the lake through a layered metadata architecture (table metadata, manifest lists, and manifests), ACID transactions for safe concurrent reads and writes, and optimizations such as file and partition pruning. It also supports schema and partition evolution, hidden partitioning that helps prevent accidental full scans, and time travel for auditing and recovery, all with broad, vendor-agnostic ecosystem support.
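The layered metadata described above can be pictured with a small toy model. This is a minimal sketch, not Iceberg's actual file layout or API: the class names `TableMetadata`, `ManifestList`, and `Manifest`, the single `partition` field, and the `plan_scan` helper are all hypothetical simplifications chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Manifest:
    # Simplification: each manifest tracks files for exactly one partition value.
    partition: str
    data_files: tuple


@dataclass(frozen=True)
class ManifestList:
    # One manifest list per snapshot; it points at the manifests to consider.
    manifests: tuple


@dataclass(frozen=True)
class TableMetadata:
    # Simplification: only the current snapshot's manifest list is modeled.
    current_snapshot: ManifestList


def plan_scan(table, partition):
    """Walk metadata -> manifest list -> manifests and collect matching files."""
    files = []
    for manifest in table.current_snapshot.manifests:
        if manifest.partition != partition:
            continue  # partition pruning: skip the whole manifest unopened
        files.extend(manifest.data_files)
    return files


table = TableMetadata(
    current_snapshot=ManifestList(
        manifests=(
            Manifest("2024-01-01", ("data/jan01-a.parquet", "data/jan01-b.parquet")),
            Manifest("2024-01-02", ("data/jan02-a.parquet",)),
        )
    )
)

print(plan_scan(table, "2024-01-02"))
```

The point of the sketch is that the engine narrows the candidate files by reading small metadata objects first, so it never has to list or open data files belonging to partitions the query does not touch.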

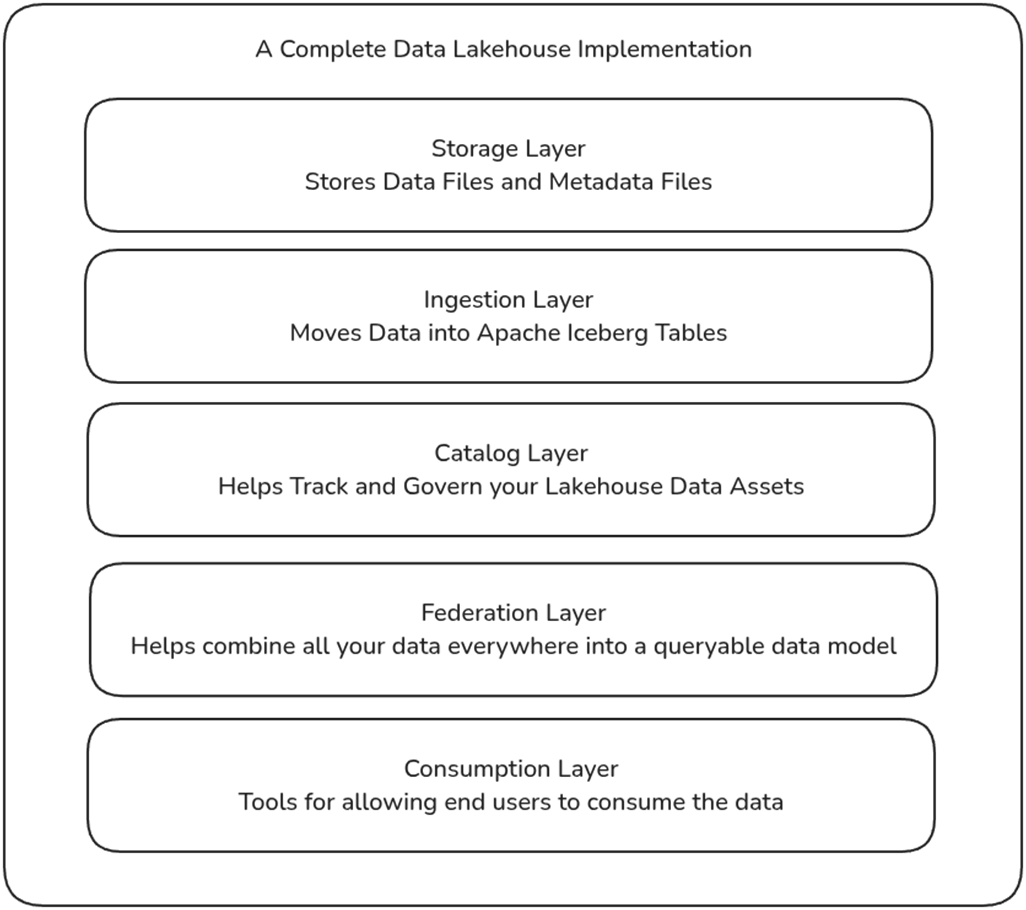

An Iceberg lakehouse delivers practical business outcomes: a single source of truth shared across teams, fast analytics directly on the lake, and meaningful cost savings by reducing duplication and ETL sprawl. Its modular design combines a low-cost storage layer, flexible batch and streaming ingestion, a catalog for discovery and governance, a federation/modeling layer for unification and acceleration, and a consumption layer that serves BI, AI/ML, and applications. By adopting Iceberg’s open standard and rich interoperability, organizations can build scalable, high-performance, and AI-ready platforms without vendor lock-in—ideal when you need multi-engine access, strong governance, evolving data models, and efficient analytics at scale.

The evolution of data platforms from on-prem warehouses to data lakehouses.

The role of the table format in data lakehouses.

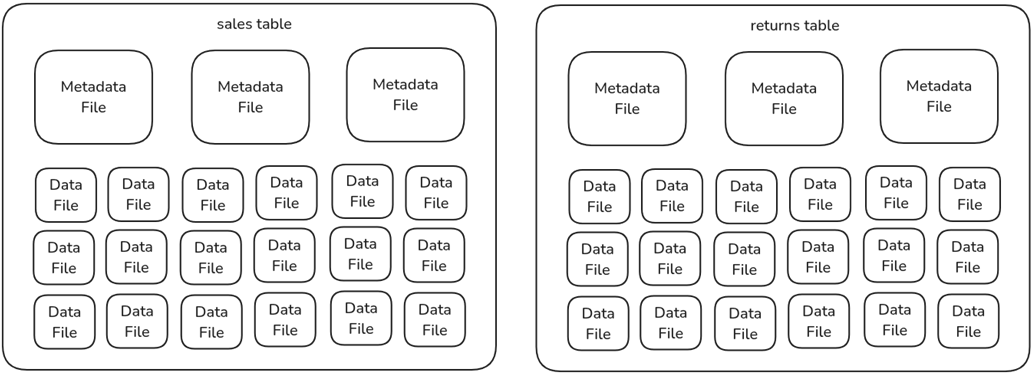

The anatomy of a lakehouse table, metadata files, and data files.

The structure and flow of an Apache Iceberg table read and write operation.

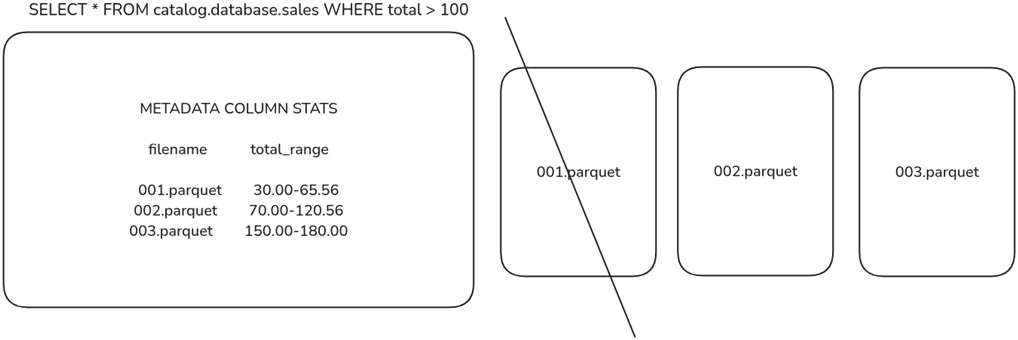

Engines use statistics stored in the metadata to skip data files that cannot match a query, which makes queries faster.
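The idea in this caption can be sketched as a range-overlap check on per-file minimum and maximum values. This is an illustrative toy, assuming a single integer column and hypothetical names (`DataFile`, `prune_files`); real engines apply the same principle to richer statistics.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFile:
    path: str
    min_value: int  # smallest value of the filtered column in this file
    max_value: int  # largest value of the filtered column in this file


def prune_files(files, lo, hi):
    """Keep only files whose [min, max] range can overlap the query range [lo, hi]."""
    return [f for f in files if f.max_value >= lo and f.min_value <= hi]


files = [
    DataFile("data/file-a.parquet", 1, 100),
    DataFile("data/file-b.parquet", 101, 200),
    DataFile("data/file-c.parquet", 201, 300),
]

# A query such as "WHERE value BETWEEN 150 AND 180" only needs file-b.
to_scan = prune_files(files, 150, 180)
print([f.path for f in to_scan])
```

Files whose value range cannot intersect the filter are dropped from the scan plan without being read, which is where the speedup comes from.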

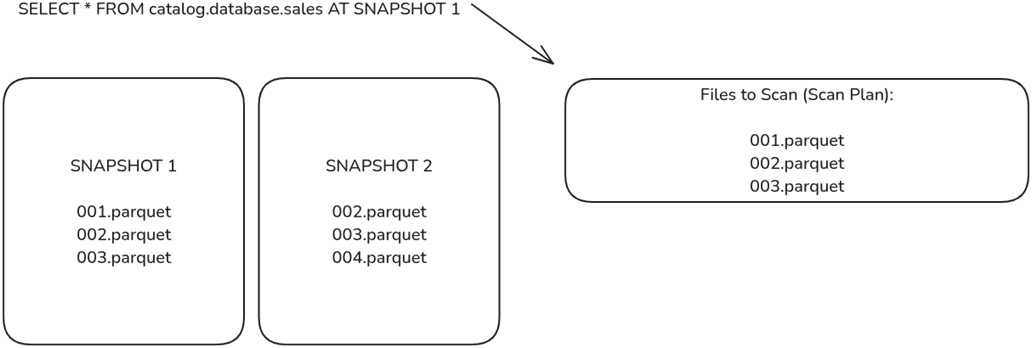

Engines can scan an older snapshot, which points to a different list of data files, to query an earlier version of the table.
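A small sketch can make the snapshot idea concrete. It assumes a simplified model in which each snapshot records a timestamp and the full list of data files valid at that point; the names `Snapshot` and `snapshot_as_of` are hypothetical and not part of any Iceberg library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    snapshot_id: int
    timestamp_ms: int
    data_files: tuple  # files that make up the table as of this snapshot


def snapshot_as_of(snapshots, timestamp_ms):
    """Return the latest snapshot committed at or before timestamp_ms, or None."""
    candidates = [s for s in snapshots if s.timestamp_ms <= timestamp_ms]
    return max(candidates, key=lambda s: s.timestamp_ms) if candidates else None


snapshots = [
    Snapshot(1, 1_000, ("data/a.parquet",)),
    Snapshot(2, 2_000, ("data/a.parquet", "data/b.parquet")),
    Snapshot(3, 3_000, ("data/b.parquet", "data/c.parquet")),
]

# Reading "as of" time 2_500 resolves to snapshot 2 and its file list,
# even though snapshot 3 is the current state of the table.
older = snapshot_as_of(snapshots, 2_500)
print(older.snapshot_id, older.data_files)
```

Because older snapshots keep their own file lists, reading a past version is just a matter of planning the scan from a different snapshot; no data has to be copied or restored first.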

The components of a complete data lakehouse implementation.

Summary

- Data lakehouse architecture combines the scalability and cost-efficiency of data lakes with the performance, ease of use, and structure of data warehouses, solving key challenges in governance, query performance, and cost management.

- Apache Iceberg is a modern table format that enables high-performance analytics, schema evolution, ACID transactions, and metadata scalability. It transforms data lakes into structured, mutable, governed storage platforms.

- Iceberg addresses major pain points of OLTP databases, enterprise data warehouses, and Hadoop-based data lakes when they are used for analytics, including high costs, rigid schemas, slow queries, and inconsistent data governance.

- With features like time travel, partition evolution, and hidden partitioning, Iceberg reduces storage costs, simplifies ETL, and optimizes compute resources, making data analytics more efficient.

- Iceberg integrates with query engines (Trino, Dremio, Snowflake), processing frameworks (Spark, Flink), and open lakehouse catalogs (Nessie, Polaris, Gravitino), enabling modular, vendor-agnostic architectures.

- The Apache Iceberg Lakehouse has five key components: storage, ingestion, catalog, federation, and consumption.

Architecting an Apache Iceberg Lakehouse ebook for free
