1 Governing Generative AI

Generative AI is transforming work across industries, but its speed, scale, and open-ended behavior amplify familiar risks into urgent governance challenges. High-profile failures—hallucinated legal citations, prompt-injection exploits, leaked chat logs, and memory manipulation—show why traditional software controls and “fix it later” approaches no longer suffice. Adoption is racing ahead while harms can propagate instantly and publicly, stretching detection, attribution, and remediation far beyond legacy playbooks. As regulators tighten expectations (from the EU AI Act to U.S. sectoral oversight), organizations need a practical way to translate Responsible AI principles into daily decisions that protect people, reputation, and value creation.

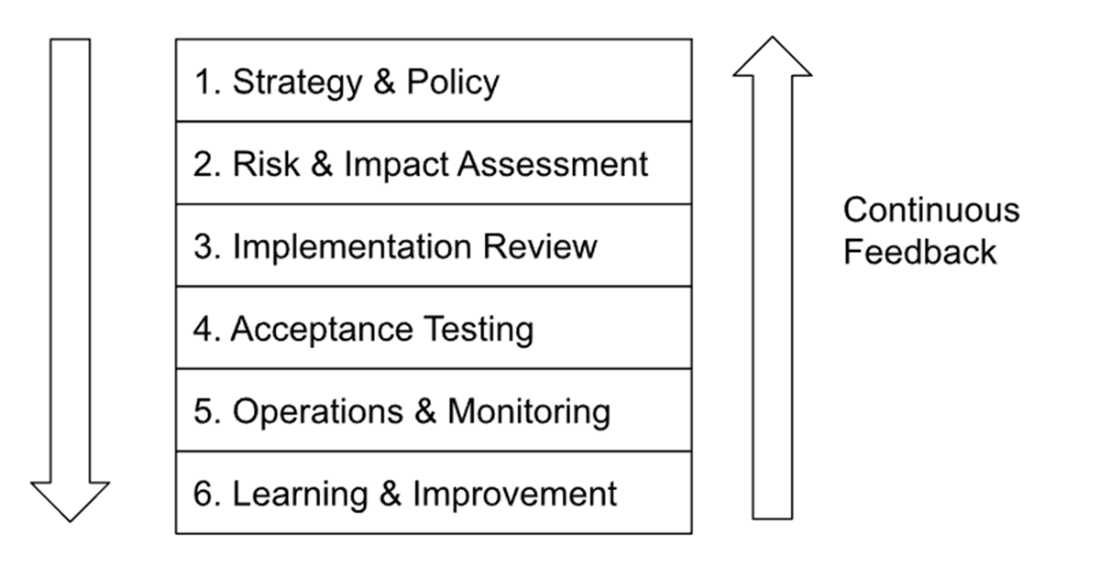

The chapter frames Governance, Risk, and Compliance (GRC) for GenAI as an enabler of safe innovation rather than a box-ticking burden, and proposes a lifecycle-based model that integrates privacy, security, and compliance from concept to retirement. The six-level framework—Strategy & Policy; Risk & Impact Assessment; Implementation Review; Acceptance Testing; Operations & Monitoring; and Learning & Improvement—adapts classic PDCA cycles and aligns with emerging standards like ISO/IEC 42001. It clarifies ownership across the AI supply chain (model providers, integrators, and end users), embeds continuous monitoring and red-teaming, and makes room for residual-risk decisions with explicit executive sign-off. The result is governance tuned for probabilistic systems, vendor dependence, evolving threats, and real-time content generation.
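To make the lifecycle idea concrete, here is a minimal sketch of the six levels as ordered checkpoints with a residual-risk sign-off gate. The class and function names (`Level`, `Checkpoint`, `first_blocking_level`) and the gating rule itself are illustrative assumptions, not the book's implementation or part of ISO/IEC 42001.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import List, Optional


class Level(IntEnum):
    """The six governance levels named in the chapter, in lifecycle order."""
    STRATEGY_AND_POLICY = 1
    RISK_AND_IMPACT_ASSESSMENT = 2
    IMPLEMENTATION_REVIEW = 3
    ACCEPTANCE_TESTING = 4
    OPERATIONS_AND_MONITORING = 5
    LEARNING_AND_IMPROVEMENT = 6


@dataclass
class Checkpoint:
    """Hypothetical record of one level's review outcome."""
    level: Level
    open_risks: List[str] = field(default_factory=list)
    accepted_by: Optional[str] = None  # executive who signed off on residual risk

    def passed(self) -> bool:
        # Assumed rule: a checkpoint passes when no risks remain open,
        # or when the remaining (residual) risks were explicitly accepted.
        return not self.open_risks or self.accepted_by is not None


def first_blocking_level(checkpoints: List[Checkpoint]) -> Optional[Level]:
    """Return the earliest level that blocks progress, or None if all pass."""
    for checkpoint in sorted(checkpoints, key=lambda c: c.level):
        if not checkpoint.passed():
            return checkpoint.level
    return None


if __name__ == "__main__":
    review = [
        Checkpoint(Level.STRATEGY_AND_POLICY),
        Checkpoint(Level.RISK_AND_IMPACT_ASSESSMENT, open_risks=["prompt injection"]),
    ]
    print(first_blocking_level(review))  # the risk assessment level blocks
    review[1].accepted_by = "Chief Risk Officer"  # explicit executive sign-off
    print(first_blocking_level(review))  # nothing blocks any more
```

The point of the sketch is the gate: an open risk stops progress until someone with authority records that they accept it, so residual-risk decisions stay visible rather than implicit.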

Concrete risks discussed include hallucinations and misinformation, prompt injection and jailbreaks, data poisoning and adversarial content, model extraction, memorization and privacy violations, and bias that can trigger legal and ethical fallout. Illustrative scenarios—from bank copilots to healthcare claims to consumer chat assistants—show how gaps across multiple levels of governance compound harms. To operationalize controls, the chapter previews tools and practices such as threat modeling, AI firewalls and output filters, adversarial and bias testing, detailed logging and lineage, drift detection, anomaly alerting, vendor due diligence, and post-incident learning loops and trust metrics. The central message is pragmatic: disciplined, lifecycle GRC is the price of sustainable GenAI innovation, reducing the frequency and impact of failures while preserving the speed and flexibility that make these systems valuable.

Generative models such as ChatGPT can often produce highly recognizable versions of famous artwork like the Mona Lisa. While this seems harmless, it illustrates the model's capacity to memorize and reconstruct images from its training data, a capability that becomes a serious privacy risk when the training data includes personal photographs.

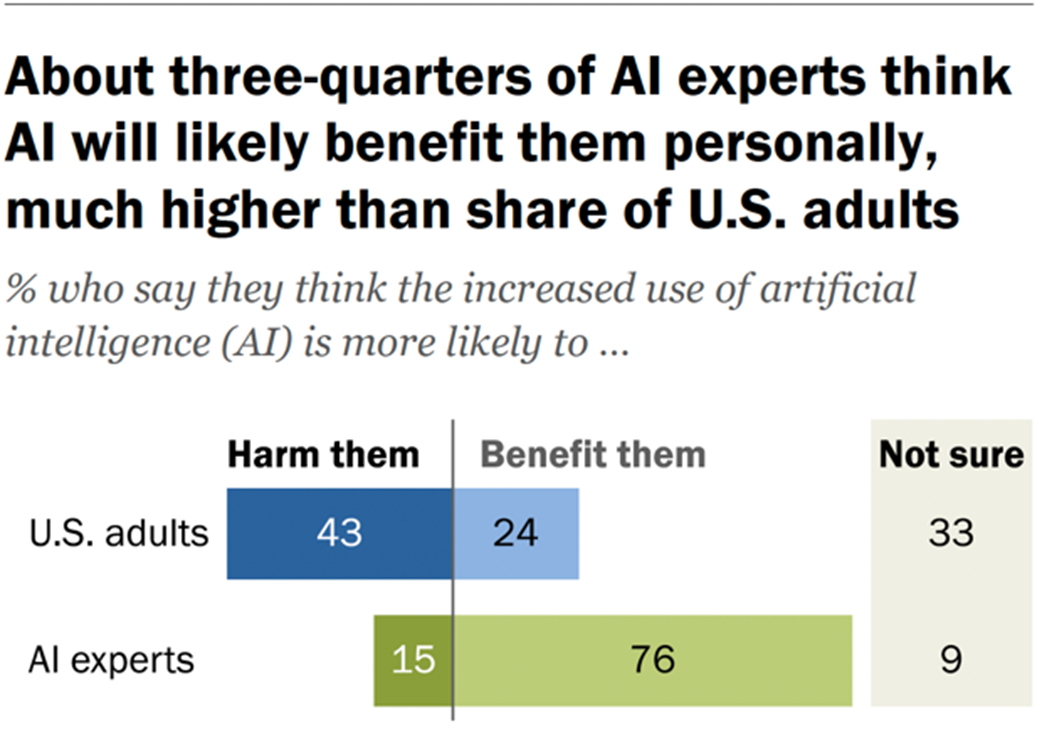

Trust in AI: experts vs. the general population. Source: Pew Research Center[46].

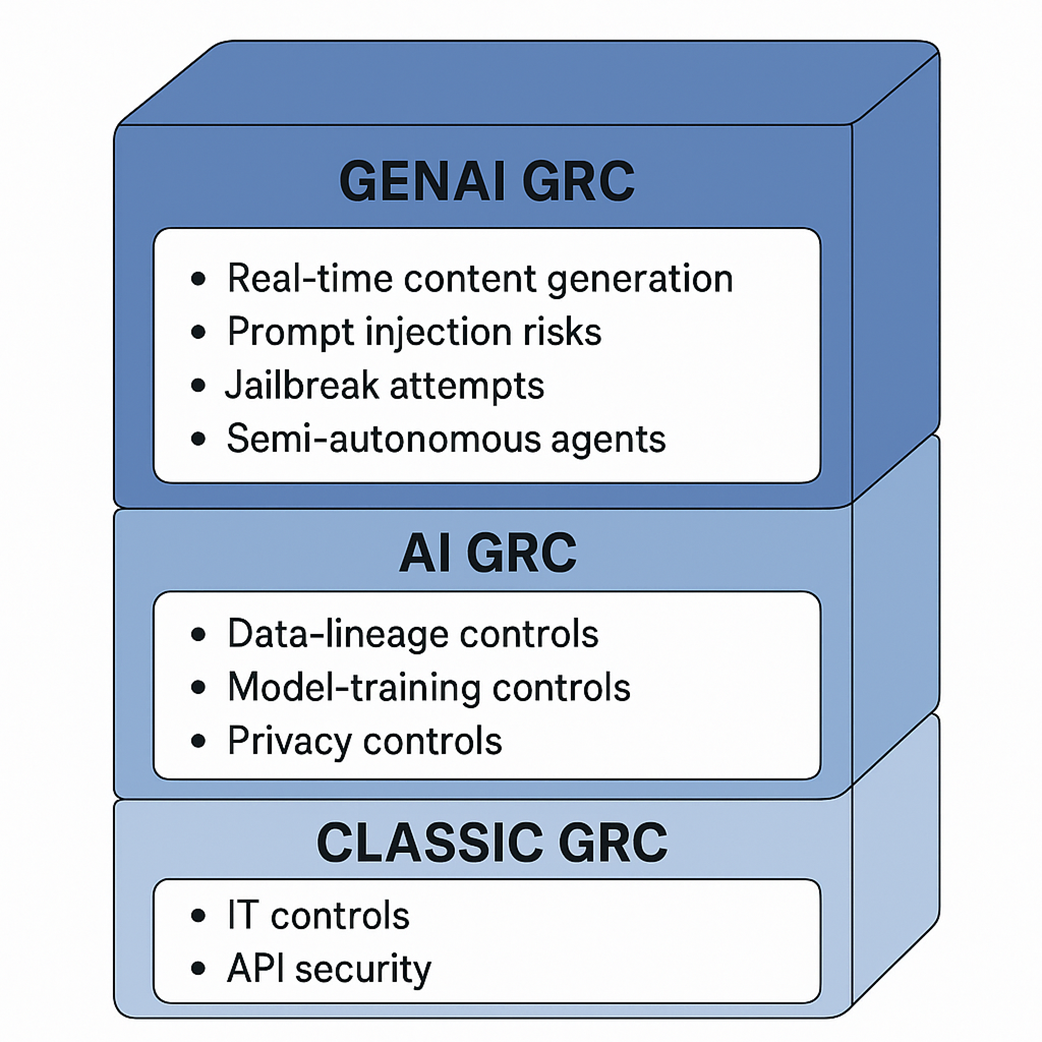

Classic GRC compared with AI GRC and GenAI GRC

Six Levels of Generative AI Governance. Chapter 2 expands on the control tasks attached to each checkpoint.

Conclusion: Motivation and Map for What’s Ahead

By now, you should be convinced that governing AI is both critically important and uniquely challenging. We stand at a moment when AI technologies are advancing faster than the governance surrounding them. There’s an urgency to act: to put frameworks in place before more incidents occur and before regulators force our hand in ways we might not anticipate. But there’s also an opportunity: organizations that get GenAI GRC right will enjoy more sustainable innovation and public trust, turning responsible AI into a strength rather than a checkbox.

In this opening chapter, we reframed GRC for generative AI not as a dry compliance exercise, but as an active, risk-informed, ongoing discipline. We introduced a structured governance model that spans the AI lifecycle and multiple layers of risk, making sure critical issues aren’t missed. We examined real (and realistic) examples of AI pitfalls, from hallucinations and prompt injections to model theft and data deletion dilemmas. We also previewed the tools and practices that can address those challenges, to show that these risks are manageable with the right approach.

As you proceed through this book, each chapter will dive deeper into specific aspects of AI GRC using case studies. We’ll start with establishing a GenAI governance program (Chapter 2), then address specific risk areas such as security and privacy (Chapter 3) and trustworthiness (Chapter 4). We’ll also devote time to regulatory landscapes, helping you stay ahead of laws like the EU AI Act, and to emerging standards (you’ll hear more about ISO/IEC 42001, NIST, and others). Along the way, we will keep the tone practical: this is about what you can do, starting now, in your organization or projects, to make AI safer and more reliable.

By the end of this book, you'll be equipped to:

- Clearly understand and anticipate GenAI-related risks.

- Implement structured, proactive governance frameworks.

- Confidently navigate emerging regulatory landscapes.

- Foster innovation within a secure and ethically sound AI governance framework.

Before we move on, take a moment to reflect on your own context. Perhaps you are a product manager eager to deploy AI, thinking about how the concepts here might change your planning. Or you might be an executive worried about AI risks, considering where your organization has gaps in this new form of governance. Maybe you are a compliance professional or lawyer, pondering how a company’s internal GRC efforts could meet or fall short of your expectations. Wherever you stand, the concepts in this book aim to bridge the gap between AI’s promise and its risks, giving you the knowledge to maximize the former and mitigate the latter. By embracing effective AI governance now, you not only reduce your exposure; you also position your organization to lead responsibly in the AI era.
