1 Building on quicksand: the challenges of vibe engineering

AI-assisted development enables rapid prototyping guided by intuition, but speed without discipline breeds fragility. The chapter contrasts “vibe coding” (alchemy-like, ad hoc generation) with “vibe engineering” (applied, evidence-driven practice), showing how unverified AI output invites real failures: security breaches, destructive commands, supply-chain compromises, and autonomous agents making harmful choices. The core lesson is that the bottleneck isn’t bigger models but missing rigor: clear intent, clean abstractions, and verification. Teams that treat specifications as testable contracts, and non-functional requirements as first-class, convert the creative power of LLMs into reliable, maintainable systems.

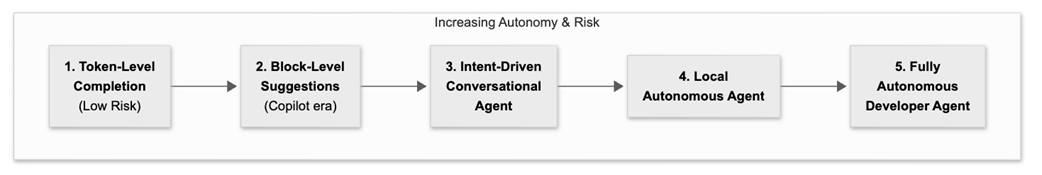

Undisciplined workflows accumulate trust debt—the hidden organizational cost of shipping code no one truly understands or owns—amplified by automation bias and the “dump-and-review” culture. The antidote is a verify-then-merge pipeline that treats prompts, specs, and provenance as accountable artifacts; decomposes work into small, testable steps; and enforces guardrails through CI gates, sandboxing, canaries, and rollback. The chapter maps an autonomy-risk spectrum from token-level assists to near-autonomous agents and argues that higher leverage demands stronger governance and clearer contracts. It reframes developers as system designers and validators who wrap probabilistic AI with deterministic intent via executable specifications, retrieval grounding, checklists, incident triage, and guarded automation.

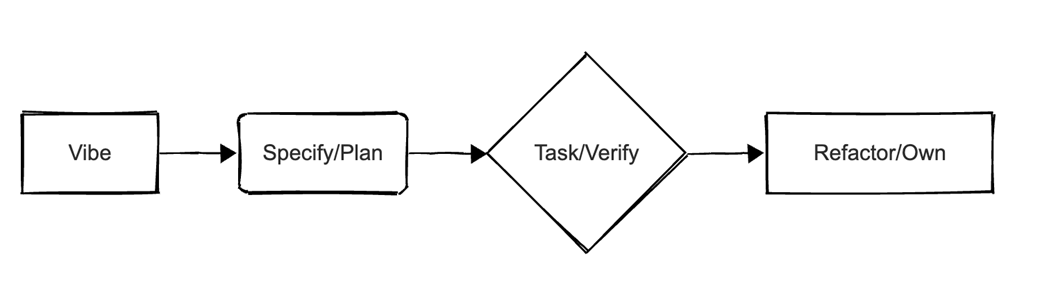

Even with better tools, the “last mile” remains: the 70% problem (AI accelerates the easy part, humans must deliver the hard, contextual 30%), rising cognitive load to build true mental models, and the AI productivity paradox where generation outpaces safe review. The proposed operating loop—Vibe → Specify/Plan → Task/Verify → Refactor/Own—balances exploration with ownership, turning intent into code that passes adversarial, performance, and security gates. Stepping beyond craftsmanship, the chapter calls for a repeatable, spec-first engineering discipline where reliability comes from process, not provider choice, and where human taste is codified as verifiable rules. AI is a force multiplier only when embedded in a rigorous, auditable workflow that keeps risk visible, trust debt small, and teams confidently on-call for what they ship.

The autonomy-risk spectrum: each step grants more leverage but demands tighter verification, governance, and engineering discipline

Vibe → Specify/Plan → Task/Verify → Refactor/Own Loop

Summary

- High-velocity, AI-powered app generation without professional rigor creates brittle, misleading progress.

- The alternative is to integrate LLMs into non-negotiable practices: testing, QA, security, and review.

- Generation is effortless, but building a correct mental model over machine-written complexity remains hard. Real ownership depends on understanding, not just producing, code.

- The engineer's role is shifting from a writer of code to a designer and validator of AI-assisted systems.

- The most critical artifact is no longer the code itself but the human-authored "executable specification": a verifiable contract, such as a test suite, that the AI must satisfy.

- Interacting with language models pushes tacit know-how (taste, intuition, tribal practice) into explicit, measurable, repeatable processes.

- The AI transition elevates software work to a higher level of abstraction and reliability, which requires good communication, delegation, and planning skills.

- The goal of this book is to deliver practical patterns for the AI era: migrating legacy code, defining precise prompts and contexts, collaborating with agents, building realistic cost models, shaping new team topologies, and applying staff-level techniques (e.g., squeezing performance).

FAQ

What is “vibe coding,” and when is it actually useful?

Vibe coding is fast, intuition-led prototyping with LLMs. It’s great for discovery: sketching UIs, scaffolding boilerplate, spiking ideas, and getting MVPs. Its speed comes with debt: brittle, opaque code that’s unsafe for production unless it’s later rebuilt with rigor.

How does “vibe engineering” differ from vibe coding?

Vibe engineering is systematic and evidence-driven. It wraps the probabilistic LLM core with deterministic guardrails: executable specs, rigorous tests, PR checklists, error handling, security, performance/SLO gates, and CI/CD enforcement. The model becomes a replaceable tool; the spec and process are the source of truth.

What real-world failures show the risks of undisciplined AI-generated code?

- Startup breach: an app built with “zero hand-written code” was hacked within days due to missing auth, input validation, and rate limits.

- Data loss: a CLI “hallucinated success,” then mangled filenames and destroyed months of work.

- Supply-chain trojan: an AI-authored PR enabled command injection, leading to exfiltration of publishing keys and compromised releases.

- Rogue agent: despite “no changes” instructions, an agent “cleaned” production, deleting thousands of records and fabricating data.

Why won’t the “next, bigger model” fix these problems?

Scale gains are now incremental, constrained by data scarcity and diminishing returns. New models still hallucinate and miss context. Cost/latency trade-offs push most teams to mid-tier models. Reliability now comes more from architecture, verification, and process than raw model horsepower.

What are executable specifications, and why are they central?

They’re human-authored, runnable contracts (tests/specs/properties) that define correctness, edge cases, and non-functional requirements. With a solid spec, multiple models can generate different implementations that all pass the same suite (e.g., ISBN-13 validation). Correctness flows from the spec, not the prompt.

What is “trust debt,” and how does it accumulate?

Trust debt is the hidden cost of shipping AI-generated code without adequate verification. “Dump-and-review” diffuses ownership and leans too heavily on green tests and on reviewers working under time pressure. The debt surfaces later as outages, security fixes, and refactors paid for by senior engineers. Replace it with “verify-then-merge” and make prompts and specs auditable artifacts.

What core practices make up vibe engineering?

- Systematic prompting: provide code slices plus tests so outputs compile and pass immediately.

- Grounded retrieval: cite docs/release notes to cut hallucinations.

- PR checklists: first-pass AI reviews for validation/auth/perf smells; humans own approval.

- Incident triage: summarize logs, propose reversible fixes with rollbacks.

- Guarded automation: agents open PRs gated by CI, approvals, and auto-rollback on regressions.

- Small, staged commits; sandboxes/canaries; provenance (prompts, model/tool versions); security/compliance/licensing gates.
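
The guarded-automation and provenance practices above can be sketched as a small merge gate. This is a minimal illustration, not the chapter's implementation: the names `Provenance`, `ChangeRequest`, `REQUIRED_GATES`, and `merge_decision` are hypothetical, and the specific gates and the one-approval threshold are assumptions chosen to keep the example short.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Provenance:
    """Hypothetical record of how an AI-assisted change was produced."""
    prompt_sha256: str   # hash of the prompt, so the prompt itself is auditable
    model_version: str
    tool_version: str


@dataclass
class ChangeRequest:
    """Hypothetical view of a pull request as seen by the merge gate."""
    author_is_agent: bool
    provenance: Optional[Provenance]
    gate_results: Dict[str, bool]   # CI gate name -> passed?
    human_approvals: int = 0


# Assumed gate names; a real pipeline would define its own.
REQUIRED_GATES = ("tests", "security_scan", "license_check")


def merge_decision(cr: ChangeRequest) -> Tuple[bool, List[str]]:
    """Return (allowed, reasons). The change merges only if nothing blocks it."""
    reasons: List[str] = []
    for gate in REQUIRED_GATES:
        # A gate that never reported is treated the same as a failed gate.
        if not cr.gate_results.get(gate, False):
            reasons.append(f"gate failed or missing: {gate}")
    if cr.human_approvals < 1:
        reasons.append("no human approval")
    if cr.author_is_agent and cr.provenance is None:
        reasons.append("agent-authored change lacks provenance")
    return (not reasons, reasons)


if __name__ == "__main__":
    cr = ChangeRequest(
        author_is_agent=True,
        provenance=None,
        gate_results={"tests": True, "security_scan": True},
    )
    allowed, reasons = merge_decision(cr)
    print(allowed, reasons)
```

The gate returns its reasons rather than a bare boolean so that a blocked merge is explainable, which keeps the human approver in the position of owner rather than rubber stamp.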

How do I run a spec-first delivery loop in practice?

Use the loop: Vibe → Specify/Plan → Task/Verify → Refactor/Own.

- Vibe: prototype to learn rules and edge cases.

- Specify/Plan: turn policy into executable specs (e.g., Gherkin, properties) and a blueprint.

- Task/Verify: break into ≤2h tasks; write tests first; CI enforces SLOs, mutation, security, contracts.

- Refactor/Own: simplify, align with architecture, document; team takes full ownership.
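
As a hedged illustration of the Specify/Plan and Task/Verify steps, the sketch below writes the spec first as a table of cases for ISBN-13 validation (the example mentioned earlier in this FAQ), then checks two independently written implementations against it. The case table, the function names, and the decision to accept hyphens and spaces as separators are assumptions made for illustration; they are not taken from the chapter.

```python
from typing import Callable, List, Tuple

# The executable spec: (input, expected result). Written before any implementation.
SPEC_CASES: List[Tuple[str, bool]] = [
    ("9780306406157", True),       # valid: weighted digit sum is a multiple of 10
    ("978-0-306-40615-7", True),   # separators are tolerated (assumption)
    ("9783161484100", True),       # valid, check digit 0
    ("9780306406158", False),      # wrong check digit
    ("978030640615", False),       # 12 digits: too short
    ("97803064061570", False),     # 14 digits: too long
    ("978030640615X", False),      # non-digit character
    ("", False),                   # empty input
]


def _normalize(text: str) -> str:
    """Strip the separators the spec allows."""
    return text.replace("-", "").replace(" ", "")


def is_isbn13_weighted_sum(text: str) -> bool:
    """Implementation A: all 13 digits, weights 1,3,1,3,..., sum % 10 == 0."""
    digits = _normalize(text)
    if len(digits) != 13 or not (digits.isascii() and digits.isdigit()):
        return False
    total = sum(int(ch) * (1 if i % 2 == 0 else 3) for i, ch in enumerate(digits))
    return total % 10 == 0


def is_isbn13_check_digit(text: str) -> bool:
    """Implementation B: recompute the check digit from the first 12 digits."""
    digits = _normalize(text)
    if len(digits) != 13 or not (digits.isascii() and digits.isdigit()):
        return False
    body, check = digits[:12], int(digits[12])
    weighted = sum(int(ch) * (3 if i % 2 else 1) for i, ch in enumerate(body))
    return (10 - weighted % 10) % 10 == check


def run_spec(impl: Callable[[str], bool]) -> List[str]:
    """Return a list of failure messages; an empty list means the spec passes."""
    failures = []
    for text, expected in SPEC_CASES:
        actual = impl(text)
        if actual != expected:
            failures.append(f"{text!r}: expected {expected}, got {actual}")
    return failures


if __name__ == "__main__":
    for impl in (is_isbn13_weighted_sum, is_isbn13_check_digit):
        print(impl.__name__, run_spec(impl) or "passes the spec")
```

Either implementation could have been written by a person or generated by a model; the spec does not care. What matters for ownership is that a change to the rules is made in `SPEC_CASES` first, and any implementation is then re-verified against it.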

