12 Cloud and services

This chapter traces the shift from static, manually provisioned infrastructure to dynamic, elastic cloud environments and argues for precise, shared definitions of cloud, cloud-native, serverless, and services. It presents elasticity—acquiring and releasing resources on demand—as the foundation for scalability and reliability, contrasting earlier proactive, predictive operations with reactive, self-regulating systems. The aim is to provide concise mental models that align with industry practice and improve communication.
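A minimal sketch can make the link between elasticity, scalability, and reliability concrete. It assumes a hypothetical control loop that knows the current demand and the capacity of one replica; the names `desired_replicas` and `reconcile` and all parameters are illustrative and do not come from the chapter or from any real platform API.

```python
import math


def desired_replicas(demand_rps: float, capacity_rps: float, min_replicas: int = 1) -> int:
    """Replicas needed to serve the demand, never dropping below a floor."""
    return max(min_replicas, math.ceil(demand_rps / capacity_rps))


def reconcile(healthy: int, demand_rps: float, capacity_rps: float, min_replicas: int = 1) -> int:
    """Return how many replicas to acquire (positive) or release (negative).

    The same rule covers both concerns: a rise in demand (scalability) and
    the loss of a healthy replica (reliability) each produce a positive delta.
    """
    return desired_replicas(demand_rps, capacity_rps, min_replicas) - healthy
```

For example, with a demand of 250 requests per second and 100 requests per second per replica, `reconcile(2, 250, 100)` asks for one more replica, whether the shortfall came from a traffic increase or from a failed replica.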

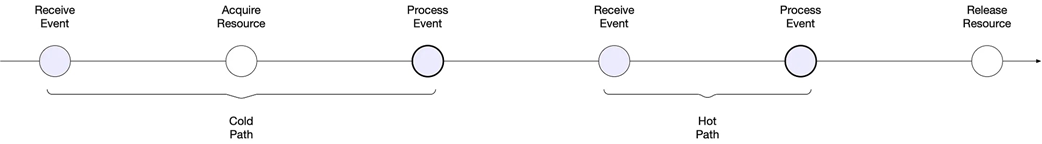

Cloud computing is defined by a clear separation between resource consumers and providers and by on-demand acquisition and release of resources. A cloud-native application is scalable and reliable by construction, leveraging platform primitives such as supervisors, autoscalers, and load balancers. Lifting and shifting alone does not make an application cloud-native, and specific technologies like containers are not defining characteristics. Serverless computing acquires resources reactively, after an event arrives, so the system must determine the resources each request needs. This yields two paths: a cold path, where resource acquisition lies on the critical path of request processing, and a hot path, where it does not.
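The difference between the cold path and the hot path can be illustrated with a toy model. This is a sketch under stated assumptions, not how any real serverless platform works: `ServerlessPlatform`, `invoke`, and `release` are hypothetical names, and "acquiring a resource" is reduced to remembering a handler.

```python
class ServerlessPlatform:
    """Toy platform that acquires a worker only after an event arrives."""

    def __init__(self):
        self._workers = {}    # function name -> acquired worker (hypothetical)
        self.cold_starts = 0  # how often acquisition was on the critical path

    def _acquire(self, handler):
        # Stand-in for provisioning a real resource (process, container, VM).
        self.cold_starts += 1
        return handler

    def invoke(self, name, handler, event):
        worker = self._workers.get(name)
        if worker is None:
            # Cold path: acquisition happens while the request is waiting.
            path = "cold"
            worker = self._acquire(handler)
            self._workers[name] = worker
        else:
            # Hot path: the resource already exists, so no acquisition occurs.
            path = "hot"
        return path, worker(event)

    def release(self, name):
        # Releasing the resource sends the next request down the cold path.
        self._workers.pop(name, None)
```

Invoking the same function twice takes the cold path first and the hot path second. After `release`, the next invocation is cold again.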

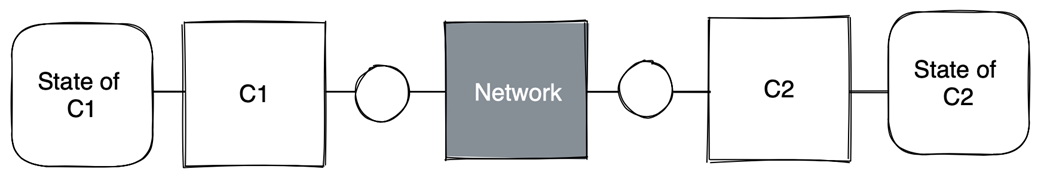

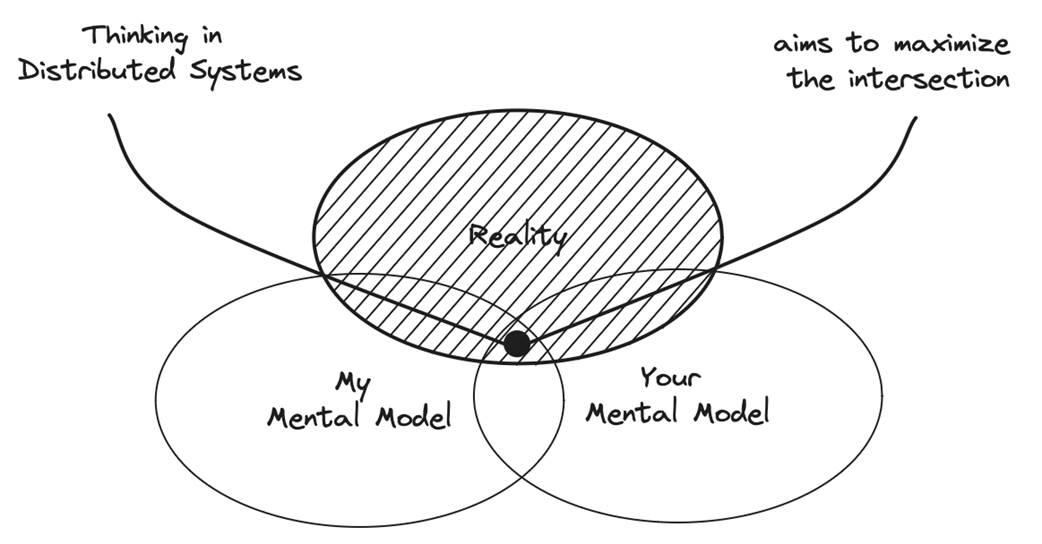

Reframing microservices as services, the chapter defines a service as a contract between a component and the rest of the system, emphasizing a logical view over the physical set of implementing components. A recommendation service example shows how a stable contract can be realized by a dynamic, redundant assemblage of processes, data stores, load balancers, and autoscalers that scale and fail independently while preserving the same external behavior. The chapter closes by stressing that shared, accurate mental models reduce misalignment and improve engineering and collaboration, encouraging readers to keep probing concepts until they are truly understood.
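The idea of a service as a contract, separate from the components that implement it, can be sketched as follows. The interface `RecommendationService`, the method `recommend`, and the classes `Replica` and `LoadBalanced` are assumptions made for illustration; the chapter does not prescribe this design.

```python
from typing import Protocol


class RecommendationService(Protocol):
    """The contract: all that the rest of the system relies on."""

    def recommend(self, user_id: str) -> list[str]: ...


class Replica:
    """One physical component that implements the contract."""

    def __init__(self, catalog: dict[str, list[str]]):
        self.catalog = catalog
        self.alive = True

    def recommend(self, user_id: str) -> list[str]:
        if not self.alive:
            raise ConnectionError("replica unavailable")
        return self.catalog.get(user_id, [])


class LoadBalanced:
    """A redundant implementation: round-robin over replicas, skipping failures."""

    def __init__(self, replicas: list[Replica]):
        self.replicas = replicas
        self._next = 0

    def recommend(self, user_id: str) -> list[str]:
        # Try each replica at most once, starting from the next in rotation.
        for _ in range(len(self.replicas)):
            replica = self.replicas[self._next % len(self.replicas)]
            self._next += 1
            try:
                return replica.recommend(user_id)
            except ConnectionError:
                continue
        raise ConnectionError("no replica available")
```

A caller typed against `RecommendationService` cannot tell a single `Replica` from a `LoadBalanced` group, and the group keeps answering when one replica fails. The physical set of components changes while the contract stays the same.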

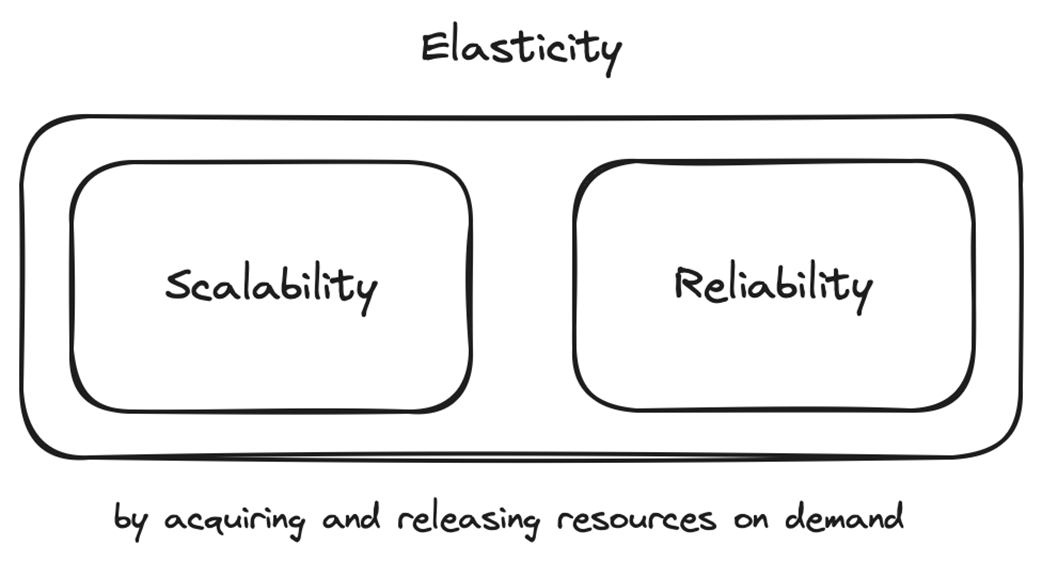

Elasticity in terms of scalability and reliability

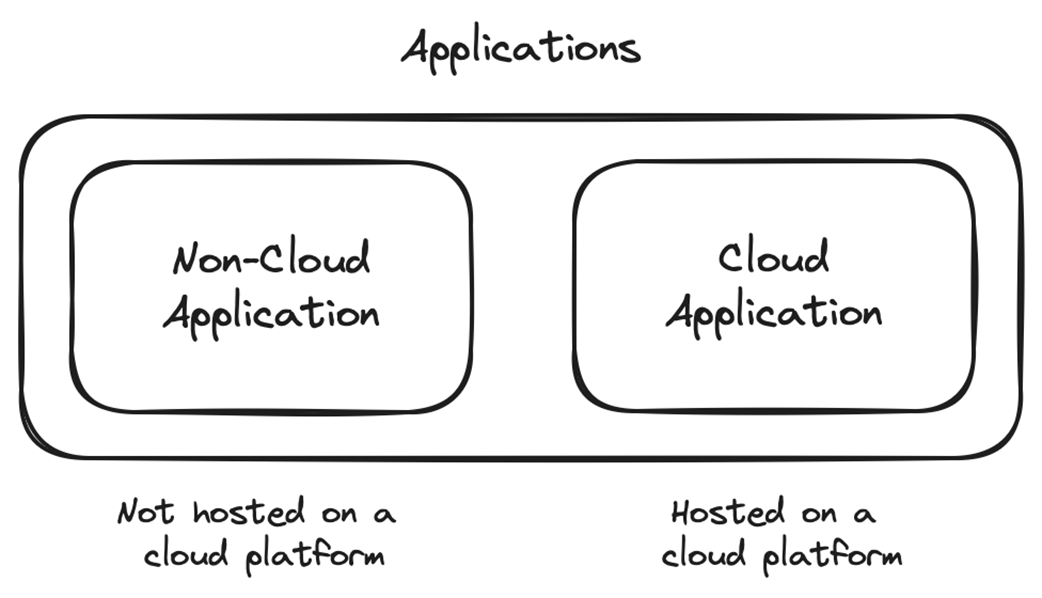

Non-cloud application versus cloud application

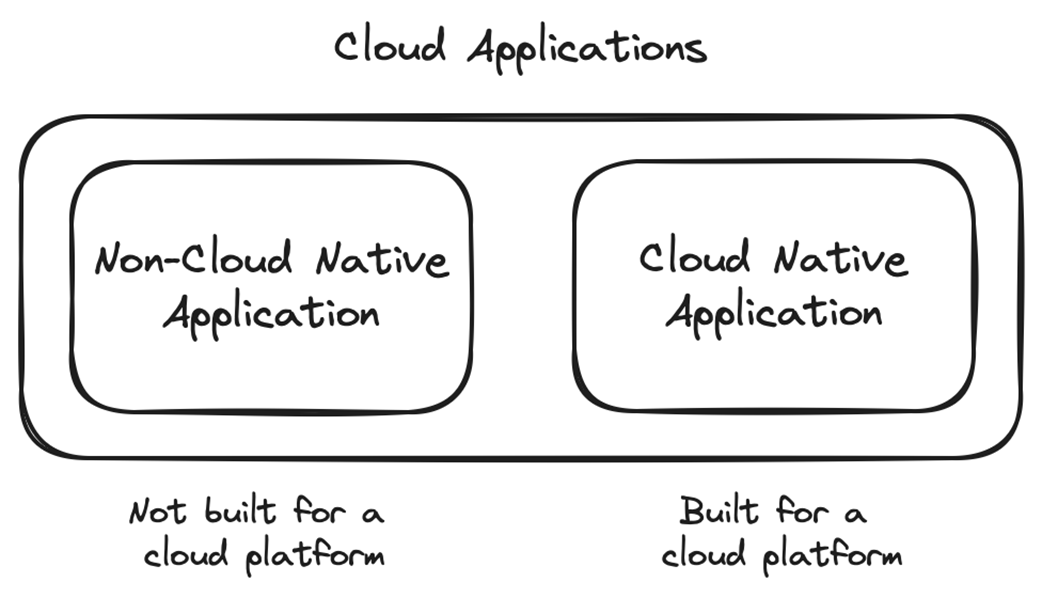

Non-cloud-native application versus cloud-native application

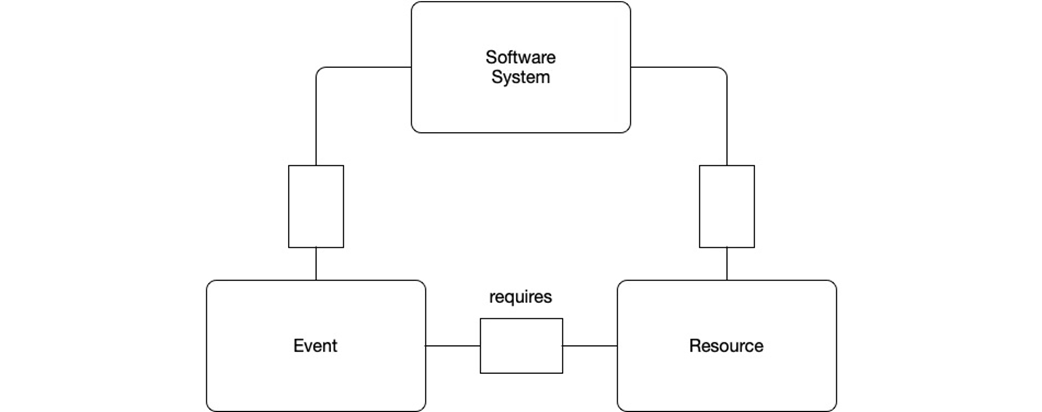

Minimal model of computation to reason about serverless computing

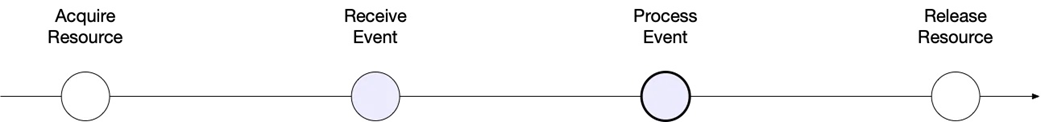

Order of events in traditional computing; acquiring resources happens proactively.

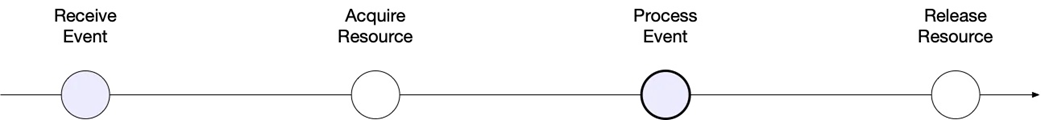

Order of events in serverless computing; acquiring resources happens reactively.

Cold path versus hot path

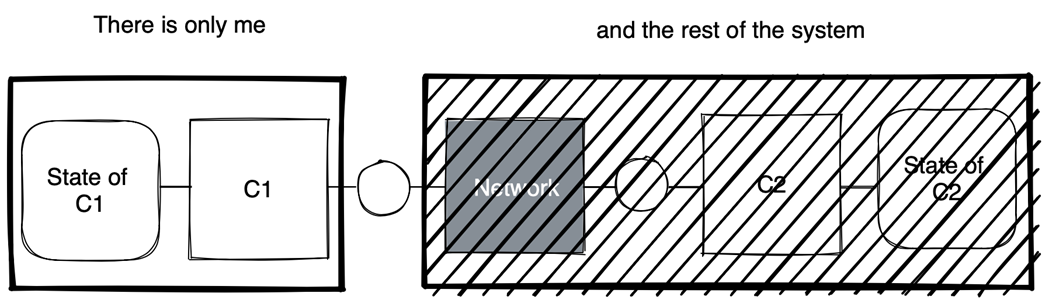

Global point of view: C1 and C2

From C1’s point of view, there is only C1 and the rest of the system.

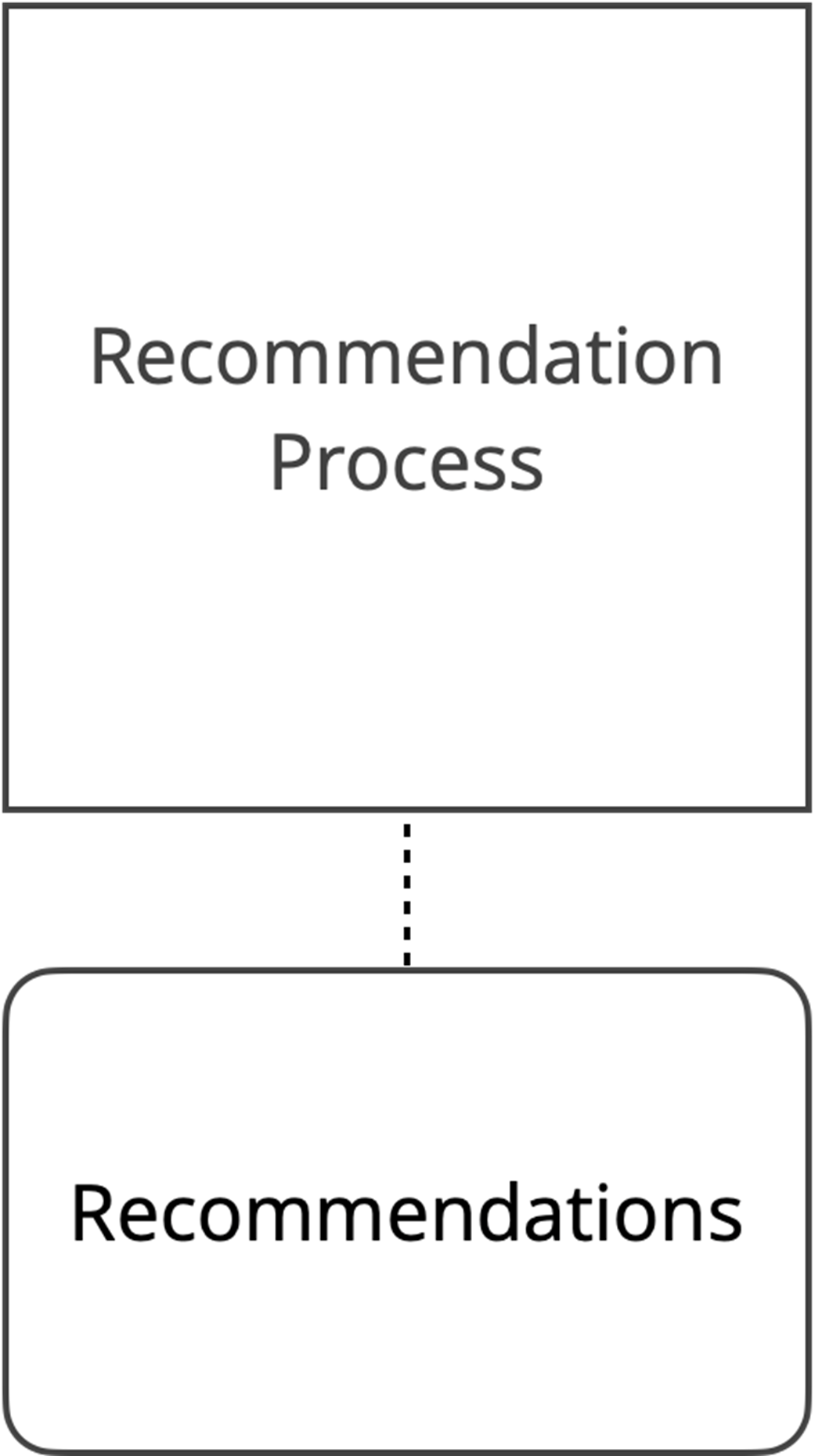

Initial model of the recommendation service

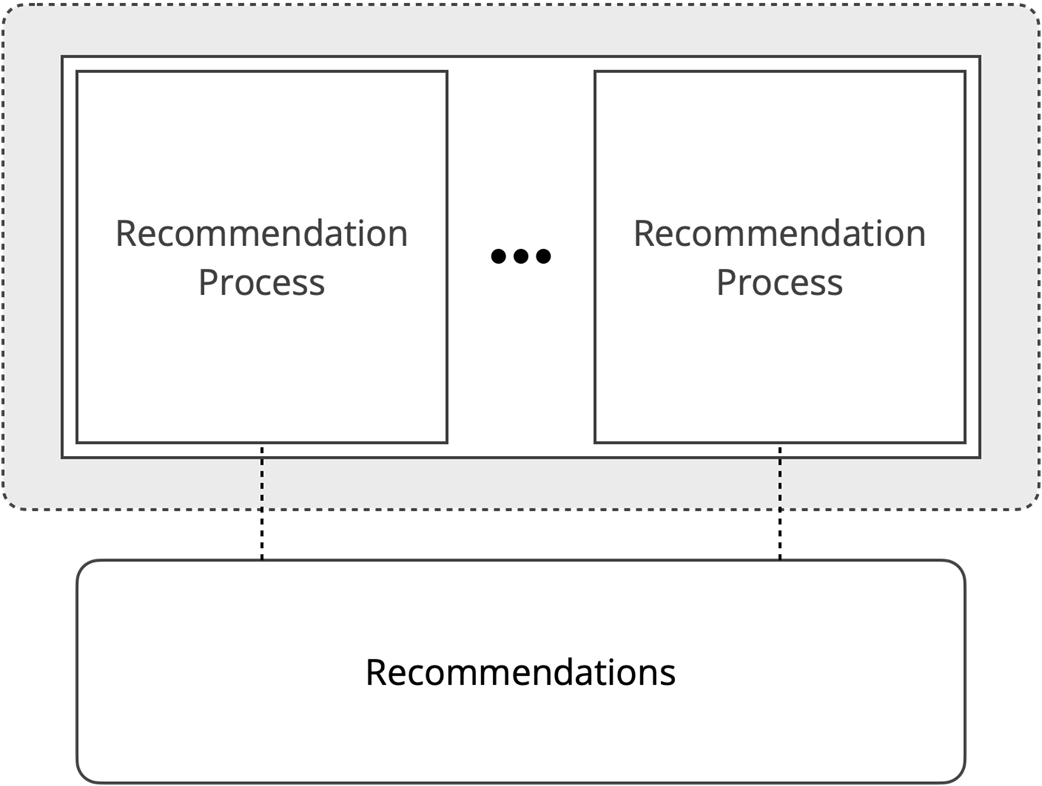

Redundant implementation of the recommendation service

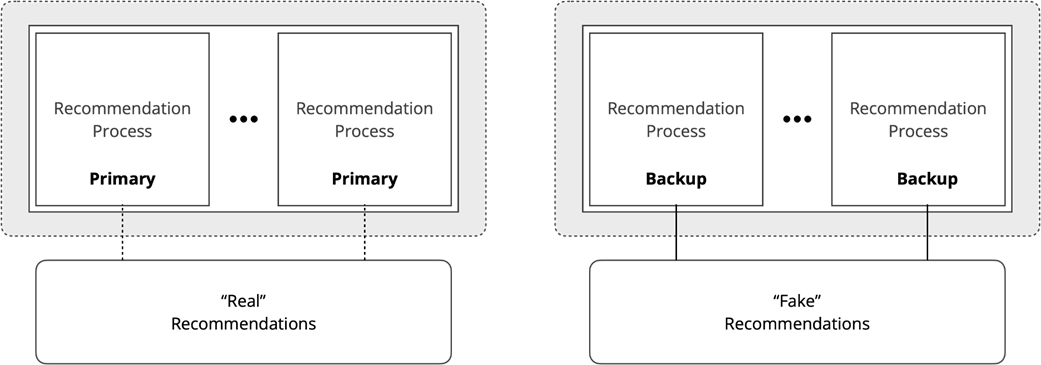

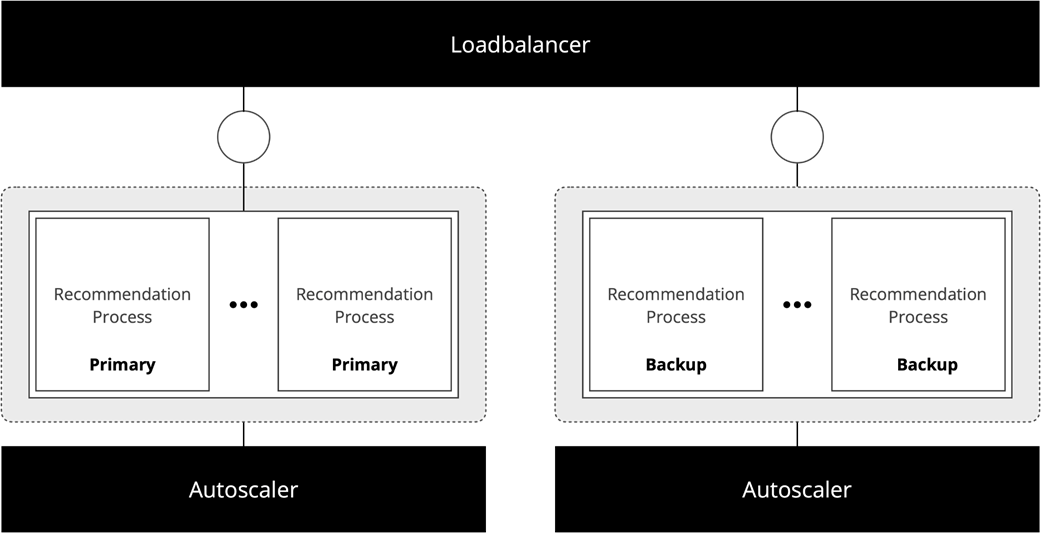

Primary and backup

Load balancer and autoscaler

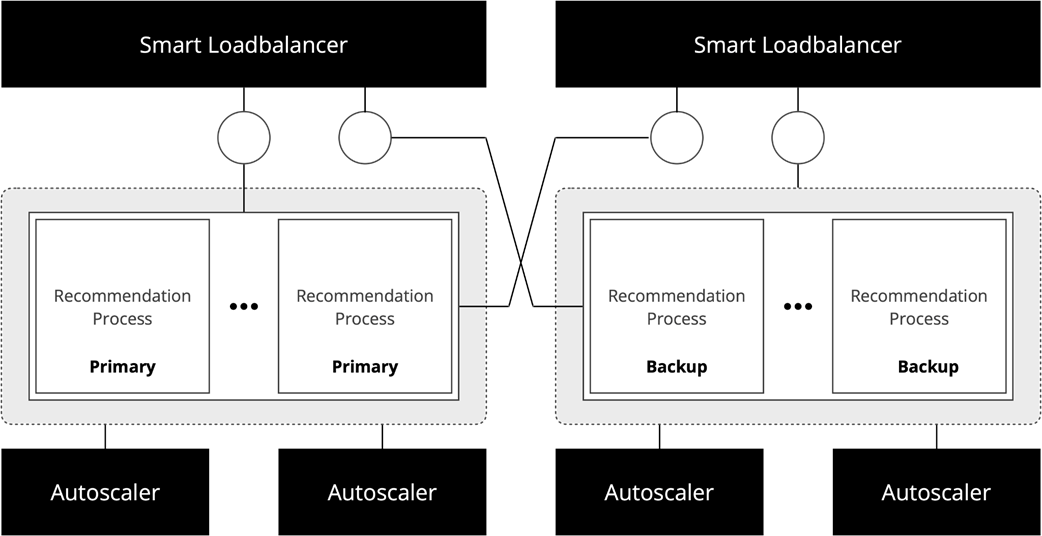

Redundant load balancers and autoscalers

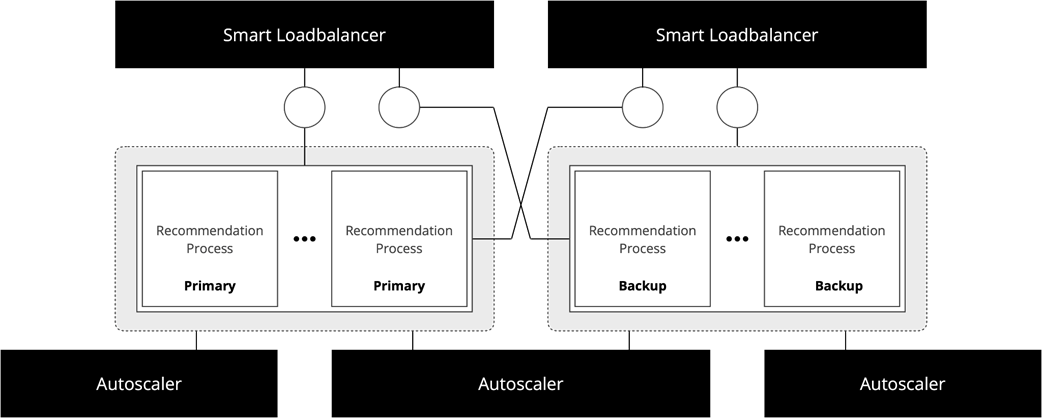

Final model of the recommendation service

Thinking in distributed systems aims to maximize the intersection of our mental models with reality and each other.

Summary

- Elasticity refers to a system's ability to ensure scalability and reliability by dynamically acquiring or releasing resources to match demand.

- Cloud computing divides the world into resource consumers and providers, allowing consumers to acquire and release virtually unlimited resources on demand through public or private cloud platforms.

- Cloud computing fundamentally transformed computing, replacing static, long-lived system topologies with dynamic, on-demand system topologies.

- A cloud application is any application hosted on a cloud platform, while a cloud-native application is a cloud application that is elastic by construction.

- Traditional systems proactively acquire the resources necessary to process an event, before receiving the event.

- Serverless systems reactively acquire the resources necessary to process an event, after receiving the event.

- Services are contracts between a component and the rest of the system, focusing on logical interactions that remain constant instead of physical interactions that may change over time.

Think Distributed Systems ebook for free
