1 How RAG research prevents disasters

Retrieval-Augmented Generation (RAG) connects large language models to external knowledge so they can synthesize answers grounded in evidence rather than relying solely on frozen training data. The chapter explains how a RAG system’s offline indexing and real-time query pipelines collaborate to fetch, rank, and condition generation on the most relevant information, turning “find sources” into “use sources.” Framed as an enduring architectural pattern, RAG directly addresses three persistent limits of standalone models—knowledge currency, hallucination control, and private data access—while promoting research literacy so reliability becomes measurable, improvable, and a source of competitive advantage.

The risks of ungrounded generation are illustrated by real-world failures, where models confidently invent policies or cite non-existent procedures despite having access to correct documents. To replace trial-and-error with engineering discipline, the chapter presents a failure taxonomy spanning retrieval, augmentation, and generation, including issues such as missing content, ranking misses, factually inconsistent hallucinations, and incomplete answers. It emphasizes the “trust barrier”: a few early, high-stakes errors can undermine user confidence and erase productivity gains. Systematic evaluation, targeted test suites, and guardrails that force strict adherence to retrieved context are positioned as essential practices to prevent silent, confident mistakes from reaching end users.

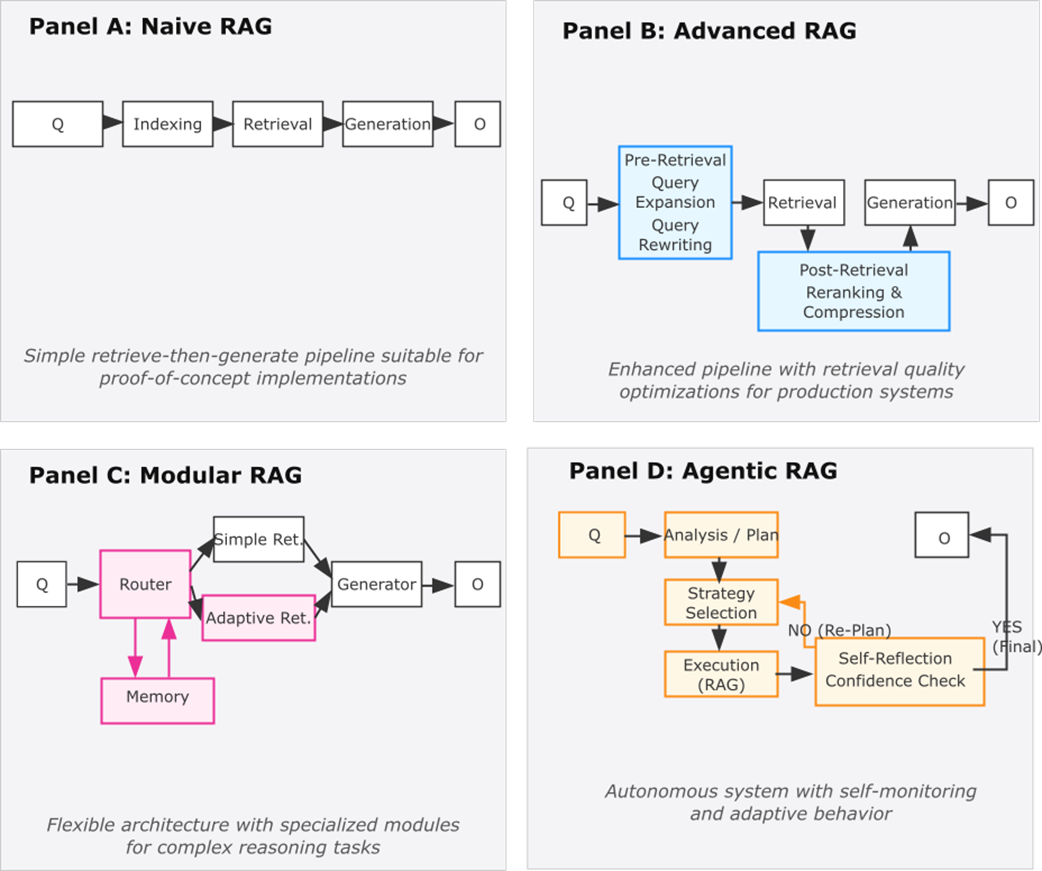

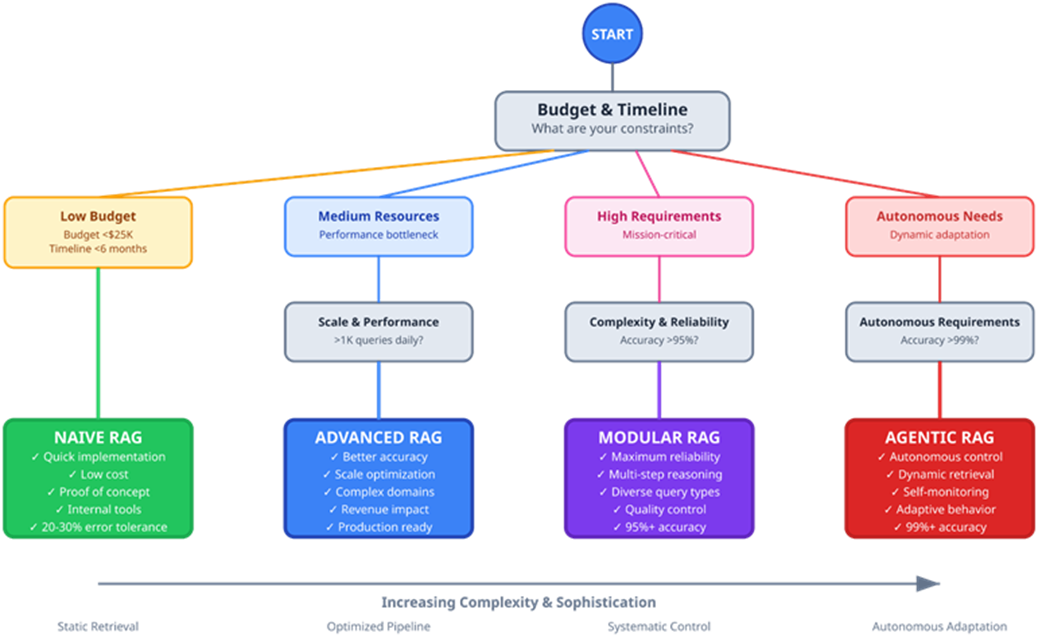

Reliability is achieved by matching solutions to failure points and evolving architecture only as needed: start with Naive RAG for simplicity; move to Advanced RAG to improve chunking, query rewriting, hybrid retrieval, reranking, and context optimization; adopt Modular RAG for composability, verification stages, and independent scaling; and add Agentic behaviors for dynamic planning, retrieval triggering, and self-critique when requirements demand it. Research-backed techniques such as HyDE, RAG-Fusion, Self-RAG, FLARE, and resilience layers like CRAG are presented as proactive fixes, complemented by cost guardrails (semantic caching, small models for planning, recursion limits, and real-time telemetry). The chapter concludes that RAG’s retrieval-first precision, modular extensibility, and evaluability justify infrastructure investment and, when guided by the literature, turn reliability from a hope into a property you can design, measure, and continuously improve.

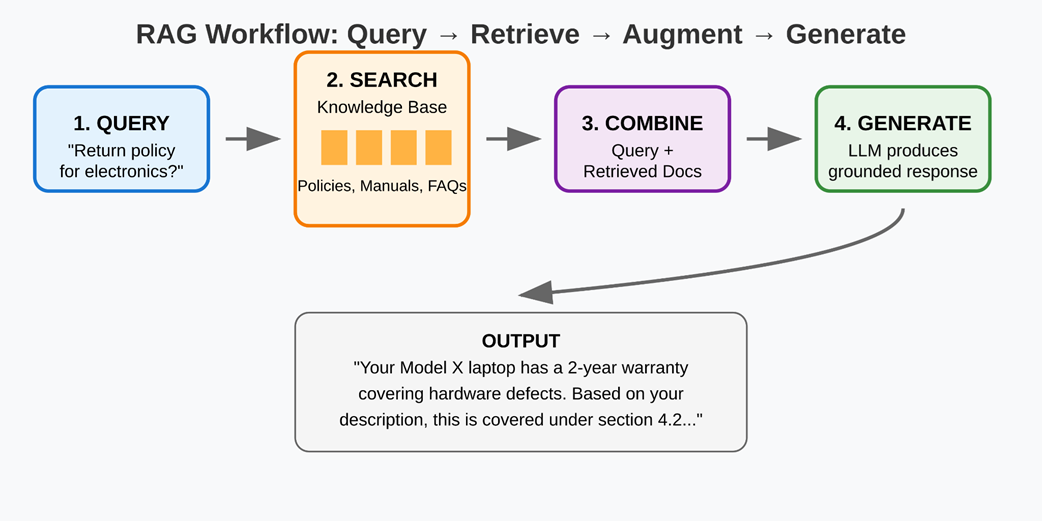

The RAG workflow — from user queries to grounded responses through retrieval and generation.

RAG architectural evolution from Naive to Agentic implementations. Each paradigm builds upon its predecessors while adding specialized components and capabilities to address increasingly complex requirements.

RAG Implementation Decision Tree — Business Decisions guiding RAG choices.

Summary

- Retrieval-Augmented Generation addresses three critical limitations that make standalone language models unreliable in production: knowledge boundaries that prevent access to current information, hallucinations that generate unverifiable claims, and the inability to incorporate private organizational knowledge essential for business decisions.

- The seven-point failure taxonomy provides a systematic approach to diagnosing RAG system problems, replacing guesswork with targeted solutions. Named failure points such as “Missed the Top Rank” and “Factually Inconsistent Hallucination” let you pinpoint where a pipeline broke and select a research-backed fix for that specific failure mode.

- RAG systems evolve through four architectural stages, based on complexity requirements and business needs. Naive RAG establishes basic retrieve-and-generate functionality for proof-of-concept applications. Advanced RAG optimizes retrieval quality and context processing for production use. Modular RAG implements adaptive strategies and quality control for mission-critical applications. Agentic RAG introduces autonomous planning and self-correction into the retrieval and generation loop.

- The core RAG architecture integrates two specialized components: retrieval systems that locate relevant information from external knowledge sources and generation systems that synthesize retrieved context with user queries to produce grounded, factual responses. This integration enables AI systems that combine broad language capabilities with specific, up-to-date, and verifiable knowledge.

- Research literacy transforms technology evaluation from reactive debugging to proactive problem-solving. Understanding the academic foundations of RAG techniques lets you assess new approaches independently, plan strategically for system evolution, and adapt to changing requirements without relying on tutorials or expert opinions.

FAQ

What is Retrieval-Augmented Generation (RAG) and how is it different from search engines or standalone LLMs?

RAG is an architectural pattern that connects a large language model to external knowledge sources at inference time. Unlike search engines that return documents for humans to read, or standalone LLMs that rely solely on their training data, RAG retrieves relevant evidence and then generates an answer grounded in that evidence. In short: search finds sources; RAG uses sources to synthesize reliable, verifiable answers.

How does RAG help prevent disasters like the Air Canada chatbot incident?

The Air Canada case was a factually inconsistent hallucination: the bot contradicted a retrieved policy and told a customer that a bereavement fare could be claimed retroactively. RAG reduces this risk by enforcing strict adherence to retrieved context and adding verification layers. Techniques such as Self-RAG (self-critique and confidence indicators) and CRAG-style resilience can detect low-confidence generations, trigger additional retrieval, and block outputs that contradict sources, so failures are intercepted before they reach users.
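A minimal sketch of the "block before it reaches the user" idea follows. It is not Self-RAG or CRAG: it swaps in a crude lexical overlap score as a stand-in for a learned critic, and the names `support_score` and `gate`, the threshold, and the policy text are all hypothetical. Token overlap cannot detect a contradiction that reuses the same words (for example, a dropped "not"), so a real verifier would need an entailment or critique model.

```python
import re

def tokens(text):
    """Lowercased word tokens as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def support_score(answer, contexts):
    """Fraction of answer tokens found in the retrieved context.

    A crude lexical proxy for grounding, used here only for illustration.
    """
    answer_tokens = tokens(answer)
    if not answer_tokens:
        return 0.0
    context_tokens = set().union(*map(tokens, contexts))
    return len(answer_tokens & context_tokens) / len(answer_tokens)

FALLBACK = "I can't confirm that from the available policy documents."

def gate(answer, contexts, threshold=0.8):
    """Return the answer only if enough of it is supported by the context."""
    if support_score(answer, contexts) >= threshold:
        return answer
    return FALLBACK

# Invented policy text for illustration only.
contexts = ["Refunds must be requested before travel."]
grounded = gate("Refunds must be requested before travel.", contexts)
blocked = gate("You can claim a discount within 90 days after your flight.", contexts)
```

In this sketch the second answer shares no tokens with the policy, so the gate replaces it with the fallback message instead of passing it through.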

How do RAG systems work end to end?

RAG has two cooperating pipelines:

- Indexing (offline): chunk content, create searchable representations (e.g., embeddings), and store them.

- Query (online): (1) query processing, (2) retrieval, (3) ranking/reranking, and (4) grounded generation. Each stage targets specific failure modes—for example, retrieval/ranking address “Missing Content” and “Missed the Top Rank,” while generation focuses on avoiding hallucinations and ensuring complete extraction from context.
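The two pipelines above can be sketched in a few lines. This is an illustrative toy, not a production design: `embed` is a bag-of-words counter standing in for a learned embedding model, the index is a plain list rather than a vector store, and every function name here is an assumption made for the example. The final generation call is left out; the sketch stops at the grounded prompt that would be sent to a model.

```python
import math
import re
from collections import Counter

def chunk(text, max_words=40):
    """Naive chunker: split a document into fixed-size word windows."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]

def embed(text):
    """Toy embedding: token counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def build_index(documents):
    """Indexing (offline): chunk each document, embed each chunk, store both."""
    return [(piece, embed(piece)) for doc in documents for piece in chunk(doc)]

def retrieve(index, query, top_k=2):
    """Query (online): embed the query, rank chunks by similarity, keep top_k."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [piece for piece, _ in ranked[:top_k]]

def build_prompt(query, contexts):
    """Condition generation on retrieved evidence only."""
    joined = "\n".join(f"[{i + 1}] {c}" for i, c in enumerate(contexts))
    return (
        "Answer using only the context below. "
        "If the answer is not there, say so.\n\n"
        f"Context:\n{joined}\n\nQuestion: {query}"
    )

docs = [
    "Refund requests must be submitted within 30 days of purchase.",
    "Our office is closed on public holidays.",
]
question = "How many days do I have to request a refund?"
index = build_index(docs)
top = retrieve(index, question, top_k=1)
prompt = build_prompt(question, top)
```

Keeping indexing and querying as separate functions mirrors the offline/online split: the index can be rebuilt on its own schedule without touching the query path.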

Which fundamental reliability problems does RAG address?

- The knowledge boundary: LLMs are frozen at their training cutoff; RAG fetches up-to-date information.

- The hallucination challenge: LLMs can produce confident but wrong text; RAG grounds answers in retrieved evidence and adds verification.

- The private knowledge gap: Enterprise decisions require secure access to proprietary data; RAG integrates private sources with access controls rather than baking them into model weights.

What are the seven common failure points in RAG systems?

- FP1: Missing Content — the answer isn’t in the knowledge base.

- FP2: Missed the Top Rank — the right document exists but is ranked too low.

- FP3: Factually Inconsistent Hallucination — the model contradicts the retrieved information.

- FP4: Not in Context — relevant chunks were retrieved but filtered out or truncated.

- FP5: Not Extracted — information is in context but not used in the answer.

- FP6: Incorrect Specificity — the response is too generic or too detailed for the need.

- FP7: Incomplete — the answer is partial when a complete, multi-step response is required.

Which research-backed techniques map to these failures?

- FP1/FP2 (coverage/ranking): Hybrid search, cross-encoder reranking, RAG-Fusion, and HyDE (bridges query–document vocabulary gaps; helps surface the right evidence and improve specificity).

- FP3 (hallucinations): Self-RAG (reflection/confidence tokens, self-critique), post-generation verification/critique layers.

- FP4 (context loss): Context compression/selection and smart chunking to keep crucial clauses.

- FP5 (missed extraction): Targeted prompting, chain-of-extraction, and answer citation requirements.

- FP6 (specificity): HyDE-driven query rewriting, query routing, and audience-aware prompting.

- FP7 (completeness): Coverage checklists, decomposition (FiD/Atlas-style), and verifier checks for missing steps.

- Reliability triggering: FLARE detects uncertainty and retrieves proactively; CRAG adds resilience layers.
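As one concrete instance of the ranking fixes listed above, the sketch below shows reciprocal rank fusion (RRF), the merging step that RAG-Fusion applies to the result lists of several query variants and that is also commonly used to combine keyword and vector results in hybrid search. The document IDs are invented, and `k=60` is a commonly used default rather than a value taken from this chapter.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of document IDs into one.

    Each document earns 1 / (k + rank) from every list it appears in,
    so items ranked well by multiple retrievers rise to the top.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by descending score; break ties by ID for deterministic output.
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical result lists from a keyword retriever and a vector retriever.
keyword_hits = ["doc_a", "doc_b", "doc_c"]
vector_hits = ["doc_c", "doc_a", "doc_d"]
fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
```

Here `doc_a` and `doc_c` appear in both lists and therefore outrank `doc_b` and `doc_d`, which each appear in only one. Because RRF uses ranks rather than raw scores, it avoids having to calibrate scores across different retrievers.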

When should I choose Naive, Advanced, Modular, or Agentic RAG?

- Naive RAG: fast proofs of concept, well-structured domains, limited budgets/timelines.

- Advanced RAG: when accuracy plateaus due to retrieval quality, vocabulary mismatch, or scale; add query expansion (HyDE), reranking, hybrid retrieval, and context optimization.

- Modular RAG: enterprise settings with diverse data/tools; need composable components, routing, independent scaling, and stronger quality control.

- Agentic RAG: dynamic, research-like workflows where the system plans, retrieves, and verifies iteratively; use minimally agentic loops to balance adaptability with cost/predictability.

Why is RAG an enduring pattern despite longer context windows, LoRA fine-tuning, and caching?

These advances enhance but don’t replace RAG’s core job: integrating external, current, and private knowledge with grounded generation. Long context still needs precision retrieval to avoid “lost in the middle.” LoRA improves domain reasoning within RAG without solving knowledge currency or access control. Caching reduces cost/latency but operates inside the same retrieval-plus-generation framework.

How does RAG make reliability measurable and improvable rather than trial-and-error?

By tying techniques to specific failure modes and stages, teams can instrument retrieval coverage, ranking precision, grounding consistency, extraction rate, specificity, and completeness. Test suites target FP1–FP7, while telemetry and confidence signals (e.g., from Self-RAG/FLARE) enable data-driven thresholds, automated retries, and human escalation. Together these practices help overcome the trust barrier.
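To make "instrument retrieval coverage and ranking precision" concrete, here is a small sketch that scores a retrieval test suite with recall@k and mean reciprocal rank (MRR). The test cases and document IDs are invented for illustration; in practice each case would pair a real query with the documents a human judged relevant.

```python
def recall_at_k(retrieved, relevant, k):
    """Share of the relevant documents that appear in the top k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & set(relevant)) / len(relevant)

def reciprocal_rank(retrieved, relevant):
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0

# Hypothetical test suite: retrieved IDs in ranked order, plus labeled relevant IDs.
test_cases = [
    {"retrieved": ["d1", "d7", "d3"], "relevant": {"d1"}},  # relevant doc ranked first
    {"retrieved": ["d4", "d2", "d9"], "relevant": {"d2"}},  # found, but ranked second
    {"retrieved": ["d5", "d6", "d8"], "relevant": {"d0"}},  # never retrieved
]

mean_recall_at_3 = sum(
    recall_at_k(c["retrieved"], c["relevant"], 3) for c in test_cases
) / len(test_cases)
mrr = sum(
    reciprocal_rank(c["retrieved"], c["relevant"]) for c in test_cases
) / len(test_cases)
```

Reading the two numbers together hints at where to look: a case with zero recall points toward missing content or a retrieval miss, while good recall with a low reciprocal rank points toward a ranking problem such as “Missed the Top Rank.”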

What cost and latency guardrails does the chapter recommend?

- Semantic caching (for repeated queries/contexts) and cache-augmented generation.

- Model tiering: use small language models (SLMs) for planning/routing and reserve larger LLMs for final synthesis.

- Hard limits on recursion/iterations in agentic loops.

- Real-time telemetry to monitor token use, retrieval depth, and verification triggers.
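Two of the guardrails above, semantic caching and a hard iteration limit, can be sketched as follows. Both pieces are simplified assumptions: the cache compares token sets with Jaccard similarity where a real semantic cache would compare embeddings, and `run_agent_loop` takes a hypothetical `step` callback in place of an actual plan-retrieve-verify agent.

```python
import re

def token_set(text):
    """Lowercased word tokens as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def jaccard(a, b):
    """Set overlap in [0, 1]; a stand-in for embedding similarity."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

class SemanticCache:
    """Return a stored answer when a new query is close enough to a cached one."""

    def __init__(self, threshold=0.8):
        self.threshold = threshold
        self.entries = []  # list of (token_set, answer) pairs

    def get(self, query):
        q = token_set(query)
        for cached_tokens, answer in self.entries:
            if jaccard(q, cached_tokens) >= self.threshold:
                return answer
        return None

    def put(self, query, answer):
        self.entries.append((token_set(query), answer))

def run_agent_loop(step, max_iterations=3):
    """Call step(i) until it reports done or the hard cap is reached.

    step must return (result, done). Returns (last_result, iterations_used).
    """
    result = None
    for i in range(1, max_iterations + 1):
        result, done = step(i)
        if done:
            return result, i
    return result, max_iterations

cache = SemanticCache()
cache.put("What is the refund policy?", "Refunds are accepted within 30 days.")
hit = cache.get("what is the refund policy")   # same tokens, different casing
miss = cache.get("Where is the office?")       # too dissimilar to reuse

# A step that never finishes shows the cap stopping the loop.
capped = run_agent_loop(lambda i: (f"draft {i}", False), max_iterations=3)
```

The iteration count returned by `run_agent_loop` is the kind of value the telemetry bullet suggests recording, since loops that routinely hit the cap signal either a too-low limit or a query class the agent cannot resolve.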

Where is the business ROI for investing in RAG infrastructure?

RAG automates synthesis—reading, cross-referencing, and summarizing—so users access more relevant data faster, with fewer unhelpful answers. Reported outcomes include large productivity gains (e.g., financial research assistants), improved retrieval precision in specialized domains (e.g., legal), and better multi-source risk detection (e.g., supply chains). These translate into meaningful time savings, error reduction, and competitive advantage.

Retrieval Augmented Generation, The Seminal Papers ebook for free
