1 Why rearchitecting LLMs matters

Large language models deliver remarkable breadth—writing, analysis, translation, coding—but their generality often makes them oversized, slow, costly, and hard to govern in production. This chapter argues that the path to real-world value is rearchitecting: reshaping models to fit concrete objectives and building ecosystems of small, specialized language models that collaborate with software components. By moving beyond prompt tweaks and one-size-fits-all stacks, organizations can achieve faster inference, tighter cost control, differentiated capabilities, and behavior that is easier to audit and maintain.

The chapter maps the pain that appears as proofs of concept (POCs) scale: runaway token and API bills, unpredictable usage, misaligned infrastructure, and the “generic trap” in which identical frontier APIs yield indistinguishable outputs. It explains why prompt engineering, RAG, and fine-tuning closed models rarely produce a durable advantage: base expertise stays generic, inference gets pricier, and vendor lock-in deepens. Even open-source models can be overengineered for leaderboards, shipping massive context windows or mixture-of-experts layers that add little in narrow domains. Regulated settings expose the black-box problem, but surgical inspection of neuron activations offers a way to understand and influence behavior. Targeted pruning combined with knowledge recovery shows that substantial size reductions are possible with minimal performance loss on specialized tasks.

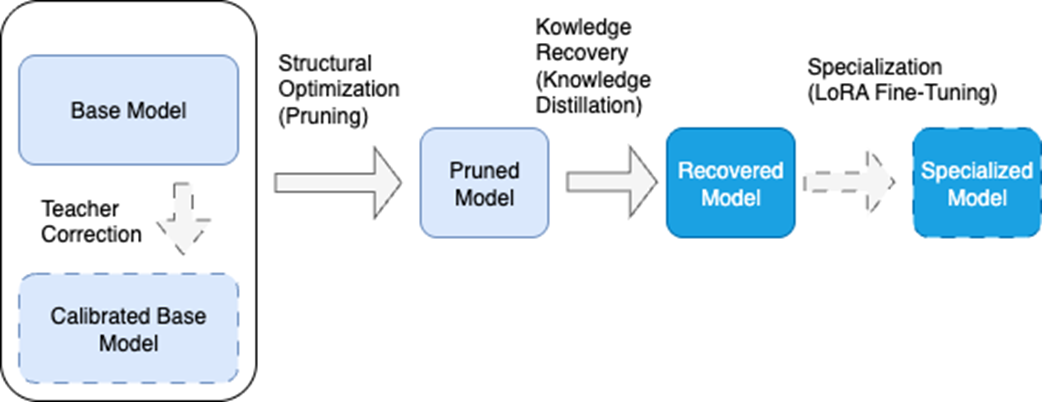

The proposed solution is a model rearchitecting pipeline. First, prune structurally to remove components that contribute least to target objectives; second, recover capabilities via knowledge distillation that transfers not only answers but intermediate reasoning; third, optionally specialize with parameter-efficient fine-tuning (such as LoRA). A light “teacher correction” pass can improve stability, and a domain dataset guides calibration, pruning decisions, recovery goals, and final specialization. The same pipeline also supports pure efficiency goals for edge deployment without domain data. The book provides the practical toolkit (PyTorch, Hugging Face, evaluation suites) and a stepwise roadmap—from fundamentals to activation-level analysis and fairness pruning—so readers can become architects who build smaller, faster, more reliable models tailored to their use cases.
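The structural pruning step can be illustrated with a minimal sketch in plain Python. It assumes a simplified two-matrix MLP block (real LLaMA-style blocks use a gated MLP with three projections) and a weight-magnitude importance score. Both are assumptions made for illustration, as are the names `neuron_importance` and `prune_mlp`; the chapter does not prescribe a specific importance metric.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def neuron_importance(w_up: Matrix, w_down: Matrix) -> List[float]:
    """Score each hidden neuron by the total magnitude of its weights.

    w_up has shape (hidden, d_model): row j holds neuron j's incoming weights.
    w_down has shape (d_model, hidden): column j holds neuron j's outgoing weights.
    The magnitude-based score is an assumption made for this sketch.
    """
    scores = []
    for j, incoming in enumerate(w_up):
        in_mag = sum(abs(w) for w in incoming)
        out_mag = sum(abs(row[j]) for row in w_down)
        scores.append(in_mag + out_mag)
    return scores


def prune_mlp(
    w_up: Matrix, w_down: Matrix, keep_ratio: float
) -> Tuple[Matrix, Matrix, List[int]]:
    """Keep the highest-scoring fraction of hidden neurons and drop the rest."""
    hidden = len(w_up)
    n_keep = max(1, int(hidden * keep_ratio))
    scores = neuron_importance(w_up, w_down)
    ranked = sorted(range(hidden), key=lambda j: scores[j], reverse=True)
    keep = sorted(ranked[:n_keep])  # preserve the original neuron order
    pruned_up = [w_up[j] for j in keep]                       # drop rows
    pruned_down = [[row[j] for j in keep] for row in w_down]  # drop columns
    return pruned_up, pruned_down, keep


# Toy block: 4 hidden neurons, model width 2. Neurons 1 and 3 are near zero.
w_up = [[0.9, -0.8], [0.01, 0.02], [0.5, 0.4], [-0.03, 0.01]]
w_down = [[0.7, 0.01, -0.6, 0.02], [-0.5, 0.03, 0.3, 0.01]]
small_up, small_down, kept = prune_mlp(w_up, w_down, keep_ratio=0.5)
print(kept)  # [0, 2]
```

Because whole neurons are removed, both matrices physically shrink, which is what distinguishes structural pruning from simply zeroing individual weights. A score computed from activations on a domain dataset could replace the magnitude score without changing the rest of the sketch.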

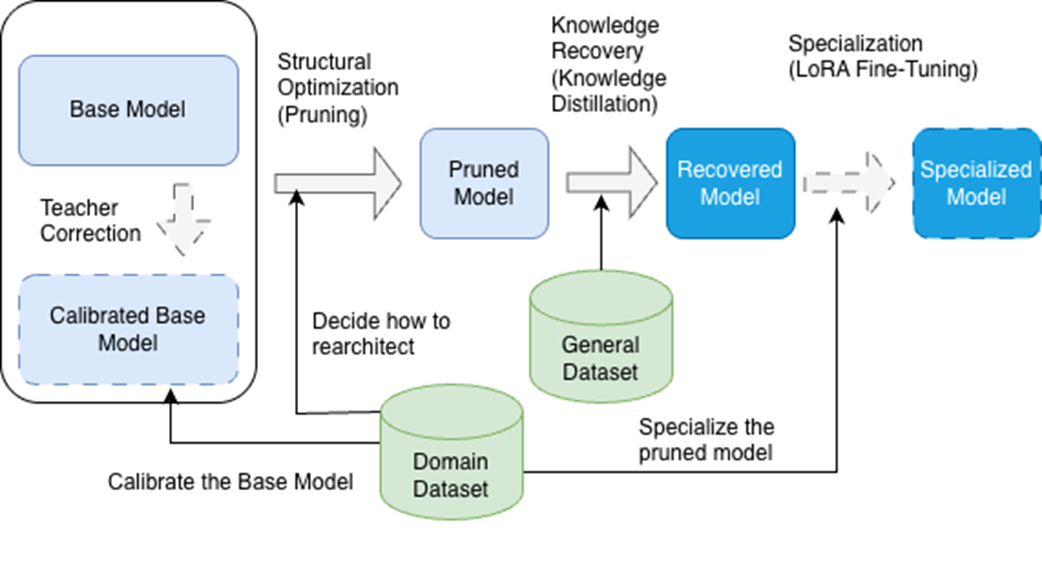

The model tailoring pipeline consists of core phases (shown with solid arrows) and optional phases (shown with dashed arrows). In the first phase, we adapt the model's structure to its objectives through pruning. Next, we use knowledge distillation to recover capabilities the model may have lost. Finally, we can optionally specialize the model through fine-tuning. An optional initial phase calibrates the base model using the dataset we'll use to specialize the final model.

Dataset integration in the tailoring pipeline. The domain-specific dataset guides calibration of the base model, informs structural optimization decisions, and enables final specialization through LoRA fine-tuning. A general dataset supports knowledge recovery, ensuring the pruned model retains broad capabilities before domain-specific specialization. This dual approach optimizes each phase for the project’s objectives.
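The knowledge recovery phase can be sketched as a loss function that the pruned student minimizes against the teacher. The example below is a minimal, standard-library illustration of classic soft-label distillation: temperature-scaled KL divergence plus cross-entropy on the correct token. It covers only output logits; matching intermediate representations, which the chapter also mentions, is not shown. The function names and the default `temperature` and `alpha` values are assumptions chosen for illustration.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: List[float], q: List[float]) -> float:
    """KL(p || q) for two discrete distributions of equal length."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)


def distillation_loss(
    student_logits: List[float],
    teacher_logits: List[float],
    target_index: int,
    temperature: float = 2.0,  # illustrative default, not from the chapter
    alpha: float = 0.5,        # weight of the hard-label term, also illustrative
) -> float:
    """Blend cross-entropy on the true label with KL to the teacher's soft labels."""
    hard = -math.log(softmax(student_logits)[target_index])
    soft = kl_divergence(
        softmax(teacher_logits, temperature),
        softmax(student_logits, temperature),
    )
    # The T^2 factor keeps the soft-term gradients on a comparable scale.
    return alpha * hard + (1.0 - alpha) * (temperature ** 2) * soft


teacher = [1.0, 2.0, 3.0]
aligned = distillation_loss([1.0, 2.0, 3.0], teacher, target_index=2)
misaligned = distillation_loss([3.0, 2.0, 1.0], teacher, target_index=2)
print(aligned < misaligned)  # True
```

A student whose logits match the teacher's pays no soft-label penalty, so the loss falls as the pruned model recovers the teacher's output distribution.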

Summary

- Oversized, generic LLMs can lead to high production costs, little differentiation from competitors, and decisions that are hard to explain.

- Models become more effective and efficient by adapting their architecture to a specific domain and task.

- The model tailoring process consists of three phases: structure optimization, knowledge recovery, and optional specialization.

- The domain-specific dataset is a key element and common thread throughout the process, ensuring each optimization and specialization phase aligns with the final objective.

- Knowledge distillation transfers capabilities from the original teacher model to the pruned student model, enabling the student to learn not only the correct answers but also the reasoning process that leads to them.

- Fine-tuning techniques such as LoRA allow domain specialization by training only a small number of parameters, drastically reducing cost and time.

- Modern architectures like LLaMA, Mistral, Gemma, and Qwen share structural traits that make them well suited to rearchitecting techniques.

- By mastering these techniques, developers can go from being model users to model architects.
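The summary point about LoRA training only a small number of parameters can be checked with simple arithmetic. For a weight matrix of shape `d_out × d_in`, LoRA freezes the original weights and trains two low-rank factors of shapes `d_out × r` and `r × d_in`. The sketch below counts both; the 4096-wide layer and rank 8 are illustrative assumptions, not figures from the chapter.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Number of weights in a dense d_out x d_in matrix."""
    return d_in * d_out


def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable weights in the two LoRA factors: (d_out x r) and (r x d_in)."""
    return rank * (d_in + d_out)


# Hypothetical projection layer: 4096 x 4096, adapted with rank 8.
dense = full_params(4096, 4096)
adapter = lora_trainable_params(4096, 4096, rank=8)
fraction = adapter / dense

print(dense)     # 16777216
print(adapter)   # 65536
print(fraction)  # 0.00390625
```

Under these assumptions the adapter trains under 0.4% of the layer's weights, which is where the cost and time savings come from.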

