1 Facing the Efficiency Wall

The chapter explains why efficiency has become a first-class concern in modern ML inference. As model sizes and context lengths have grown, the limiting factors have shifted from arithmetic throughput to memory movement and power. Traditional remedies—better hardware, architectural tweaks, or batching—no longer keep latency low or utilization high. The core message is that bytes, not FLOPs, dominate the cost of serving large transformers, and that quantization—reducing weights and activations from floating point to lower-bit integers—directly addresses this reality without changing model semantics. The book targets practitioners deploying models in production and sets out to provide the judgment needed to navigate the new trade-offs.

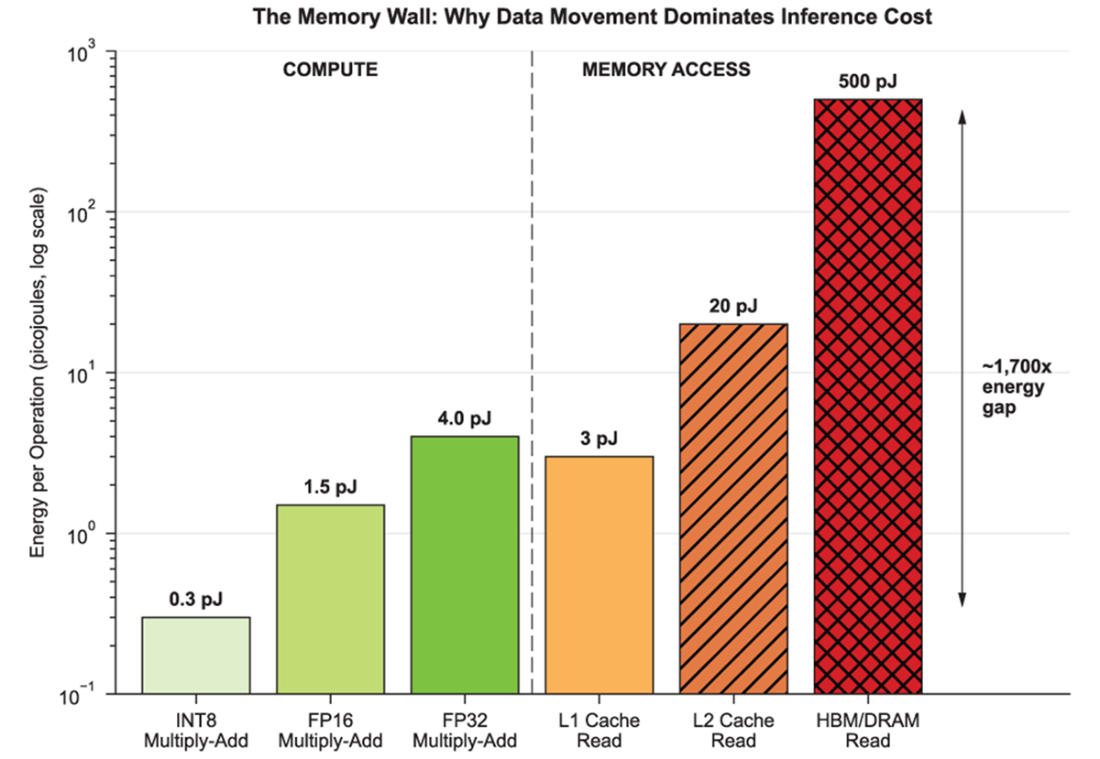

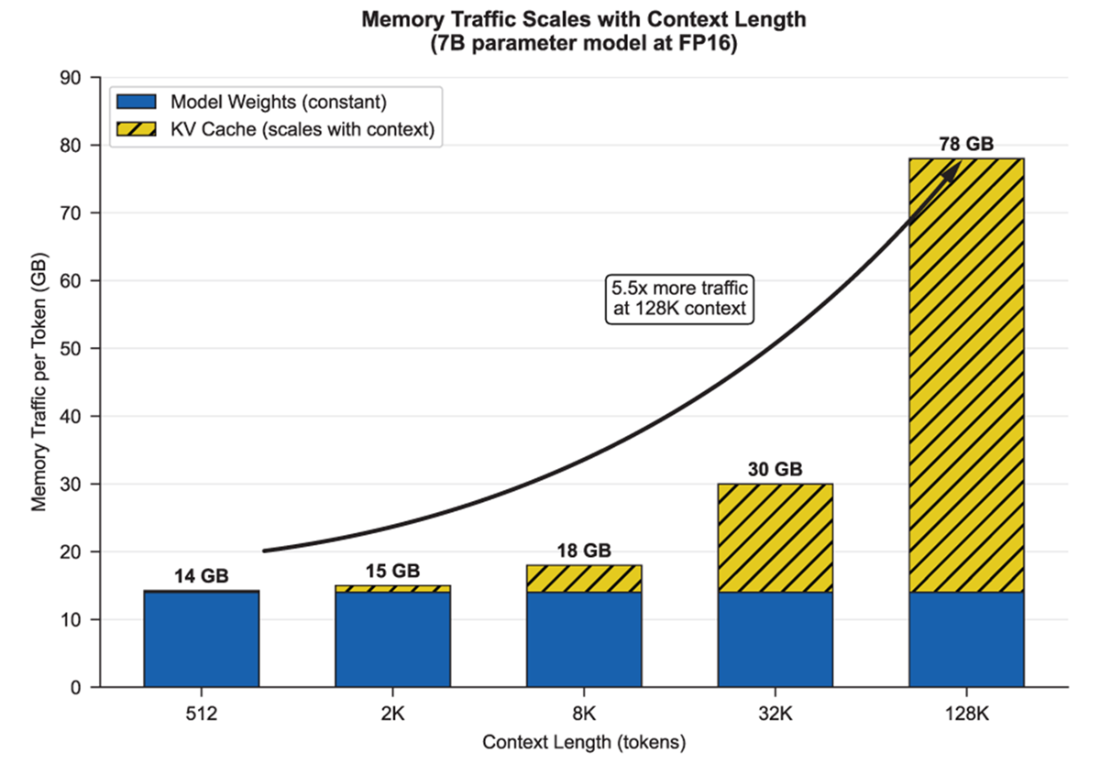

Through energy and bandwidth reasoning and a running 7B-parameter example, the chapter makes the “efficiency wall” concrete: fetching data from memory costs orders of magnitude more energy than arithmetic, and each generated token pulls gigabytes through the hierarchy, especially as KV caches scale with context length. Even at modest serving rates, steady-state power and cost accumulate rapidly, while GPU utilization appears low and latency plateaus—symptoms of a memory-bound system. The key mental anchor is that bit precision is primarily an energy decision: every extra bit inflates memory footprint, traffic, and energy per token, long before compute becomes the limit.
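The point about steady-state power can be made concrete with back-of-the-envelope arithmetic. The sketch below takes the two per-token figures from the chapter summary (roughly 15 GB moved and nearly 2 J consumed) and multiplies by a token rate. The token rates are hypothetical, and the per-byte energy is only what those two rounded figures imply, not a measured value.

```python
# Rounded per-token figures quoted in the chapter summary for the 7B example.
JOULES_PER_TOKEN = 2.0   # "nearly 2 joules" per generated token
BYTES_PER_TOKEN = 15e9   # "roughly 15 GB" moved per generated token


def steady_state_power_watts(joules_per_token: float, tokens_per_second: float) -> float:
    """Average power attributable to token generation: energy per token times token rate."""
    return joules_per_token * tokens_per_second


def implied_picojoules_per_byte(joules_per_token: float, bytes_per_token: float) -> float:
    """Energy per byte implied by the two rounded figures (not a measurement)."""
    return joules_per_token / bytes_per_token * 1e12


if __name__ == "__main__":
    pj = implied_picojoules_per_byte(JOULES_PER_TOKEN, BYTES_PER_TOKEN)
    print(f"implied energy: {pj:.0f} pJ per byte moved")
    # Hypothetical serving rates, chosen only for illustration.
    for rate in (10, 100, 1000):
        watts = steady_state_power_watts(JOULES_PER_TOKEN, rate)
        print(f"{rate:>5} tokens/s -> {watts:7.0f} W sustained")
```

Under these assumptions, 100 tokens per second already corresponds to about 200 W of sustained draw, before counting idle power or cooling.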

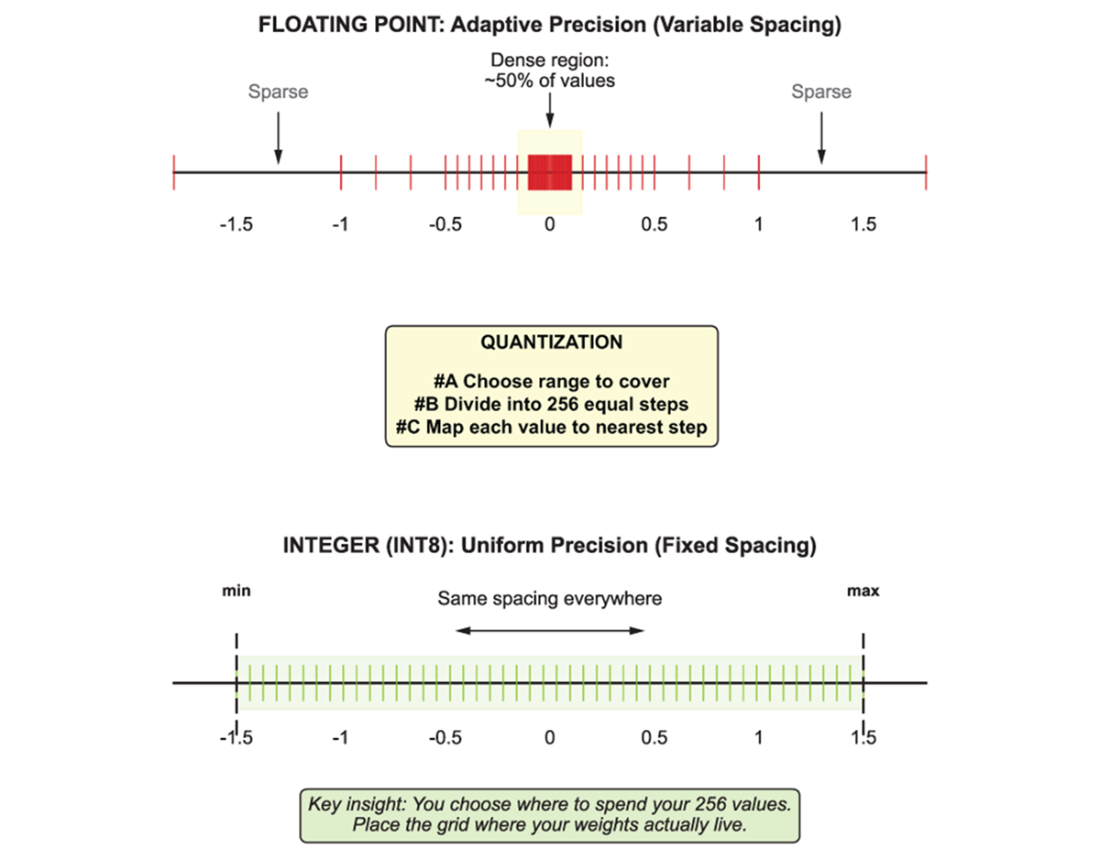

Given this diagnosis, the chapter positions quantization as the practical lever because it linearly shrinks the dominant term—bytes moved per token—while composing with existing optimizations. Alternatives like sparse/conditional computation, attention kernel improvements, smarter batching, or distillation help in specific ways but do not systematically reduce memory traffic across the whole model. Quantization often preserves quality at 8- and 4-bit because neural networks are redundant and tolerant to bounded, structured noise; the real work lies in choosing what to quantize (weights, activations, KV cache), when (PTQ, QAT, low-bit adaptation), and to which bit-widths. The chapter closes by framing the floating-point-to-integer transition as a shift from adaptive expressiveness to fixed, efficient grids—ultimately seeking sufficient precision at minimum energy.
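The linear relationship between bit-width and bytes moved can be checked with a few lines of arithmetic. This is a minimal sketch that assumes every weight is read once per generated token (single-sequence decoding) and ignores the small overhead of scale and zero-point metadata that real quantized formats carry; the function name is illustrative.

```python
def weight_bytes(num_params: int, bits_per_weight: int) -> float:
    """Bytes needed to store (and, per token, to read) the weights at a given bit-width."""
    return num_params * bits_per_weight / 8


NUM_PARAMS = 7_000_000_000  # the chapter's running 7B-parameter example

if __name__ == "__main__":
    baseline = weight_bytes(NUM_PARAMS, 16)
    for bits in (16, 8, 4):
        size = weight_bytes(NUM_PARAMS, bits)
        print(f"{bits:>2}-bit weights: {size / 1e9:5.1f} GB "
              f"({size / baseline:.0%} of the 16-bit traffic)")
```

Halving the bit-width halves the weight traffic, which is why the reduction composes with batching or kernel-level optimizations rather than competing with them.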

Fetching data from HBM costs roughly 1,700× more energy than an INT8 multiply-add. This gap explains why inference systems are bottlenecked by memory, not compute.

Memory traffic per token as context length grows. Model weights (filled) stay constant, but the KV cache (hatched) scales linearly with context. At 128K tokens, total memory traffic reaches 78 GB per token, about 5.5× the traffic at 512 tokens.
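The shape of this curve follows from a simple model: a constant term for the weights plus a KV-cache term proportional to context length. The sketch below assumes a 7B model with 32 layers, a hidden size of 4096, FP16 storage, standard multi-head attention (no grouped-query sharing), and a full read of the cache on every decode step. These dimensions are assumptions chosen for illustration, not values stated in the chapter, so the output lands in the same range as the caption's figures rather than matching them exactly.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelShape:
    num_params: int = 7_000_000_000  # the chapter's running 7B example
    num_layers: int = 32             # assumption, not from the chapter
    hidden_size: int = 4096          # assumption, not from the chapter
    bytes_per_value: int = 2         # FP16 weights and KV entries


def weight_traffic_bytes(m: ModelShape) -> int:
    """Bytes of weights read per generated token (constant in context length)."""
    return m.num_params * m.bytes_per_value


def kv_cache_bytes(m: ModelShape, context_tokens: int) -> int:
    """Bytes of cached keys and values: 2 tensors per layer, one row per token."""
    return 2 * m.num_layers * m.hidden_size * m.bytes_per_value * context_tokens


def traffic_per_token_bytes(m: ModelShape, context_tokens: int) -> int:
    """Total bytes read to generate one token at the given context length."""
    return weight_traffic_bytes(m) + kv_cache_bytes(m, context_tokens)


if __name__ == "__main__":
    shape = ModelShape()
    for ctx in (512, 4096, 32 * 1024, 128 * 1024):
        total = traffic_per_token_bytes(shape, ctx)
        kv_share = kv_cache_bytes(shape, ctx) / total
        print(f"context {ctx:>7}: {total / 1e9:6.1f} GB per token "
              f"(KV cache share {kv_share:.0%})")
```

With these assumed dimensions each cached token costs 512 KiB, so the KV cache overtakes the weights once the context reaches the tens of thousands of tokens.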

Floating point concentrates precision near zero (top), leaving large values sparsely represented. Integers use uniform spacing across your chosen range (bottom). Quantization is the act of deciding where to place that fixed grid.
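Placing a fixed grid can be illustrated with symmetric absolute-maximum scaling, one common and simple choice; the chapter does not say which scheme it uses, so treat this as a sketch of the idea rather than the book's method. The grid is stretched so its largest code lands on the largest magnitude in the tensor, and every value snaps to the nearest grid point.

```python
def quantize_symmetric(values, num_bits=8):
    """Map floats onto a uniform signed-integer grid using absolute-maximum scaling.

    Returns the integer codes and the scale (the spacing between grid points).
    """
    qmax = 2 ** (num_bits - 1) - 1            # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats: each code is a multiple of the grid spacing."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    # Illustrative values: a few small entries and one large one that sets the range.
    weights = [0.001, -0.02, 0.5, -1.27]
    for bits in (8, 4):
        codes, scale = quantize_symmetric(weights, bits)
        restored = dequantize(codes, scale)
        worst = max(abs(w - r) for w, r in zip(weights, restored))
        print(f"{bits}-bit: spacing={scale:.4f} codes={codes} worst error={worst:.4f}")
```

The single large value fixes the range, so at 4 bits the small entries collapse to zero: a wider range costs resolution, and the rounding error of every in-range value stays bounded by half the grid spacing.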

Summary

- Modern LLM inference is constrained by memory bandwidth and power consumption, not raw compute: a 7B-parameter model moves roughly 15 GB through memory for every token generated, consuming nearly 2 joules of energy per token.

- Quantization reduces precision (typically from 16-bit floating point to 8-bit or 4-bit integers), directly cutting memory footprint, bandwidth, and energy consumption in proportion to the bit reduction.

- Neural networks tolerate quantization because they encode directions and correlations rather than exact values—their inherent redundancy absorbs the bounded approximation error that lower precision introduces.

- The core trade-off in quantization is range versus resolution: integers force you to choose a fixed grid where every number must fit, unlike floating point, which rescales automatically through its exponent at the cost of hardware complexity.

- Quantization decisions span what to quantize (weights, activations, KV cache), when to quantize (post-training or during training), how aggressively to quantize (8-bit, 4-bit, mixed), and where the model runs (GPU, CPU, edge).

Quantization and Fast Inference ebook for free
