1 Old questions, new machines

Artificial intelligence has moved from narrow demonstrations to everyday tools, with large language models bringing humanlike fluency to search, drafting, analysis, and code. This visibility turns an old question—what, if anything, counts as machine understanding—into a practical concern for organizations and society. The chapter frames the issue as a gap between performance and interpretation, arguing that judging these systems requires crossing disciplinary lines—philosophy, cognitive science, computer science, and business—while resisting the tendency to mistake convincing outputs for genuine comprehension.

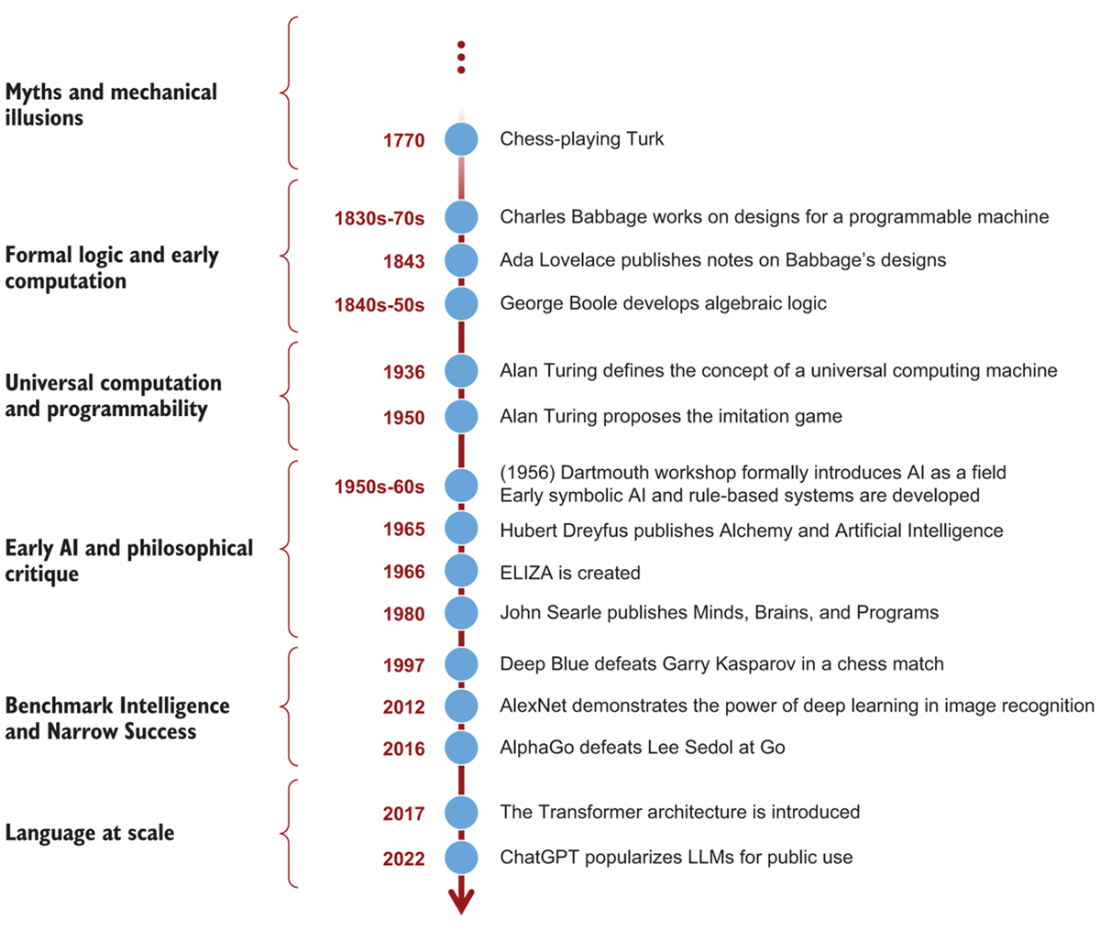

To show how the present took shape, the narrative traces a long arc from myths and automata (and the seduction of imitation, exemplified by the Chess-Playing Turk) to the foundations of computation. Boole’s logical formalism, Babbage’s architectures, and Lovelace’s programmable vision set the stage for Turing’s universal machine and his imitation game, which shifted “thinking” from definition to demonstrable behavior. As AI evolved from rule-based systems to probabilistic models and then to neural networks trained at internet scale, it gained capability but also opacity—the “black box” trade-off—while advancing in recurring cycles of optimism, limits, and renewal.

These technical shifts reignited core philosophical debates. Dreyfus emphasized embodied, context-sensitive skill and warned against equating symbol manipulation with situated understanding; Searle’s Chinese Room argued that fluent outputs (syntax) do not guarantee meaning (semantics). Large language models intensify this tension: they learn rich, relational structures from text that resemble a kind of proto-semantics yet remain largely ungrounded in lived experience. The chapter concludes that imitation and understanding may lie on a continuum and that evaluating machine intelligence—amid real-world stakes of risk, governance, and trust—demands careful, contextual judgments rather than defaults to hype or dismissal.

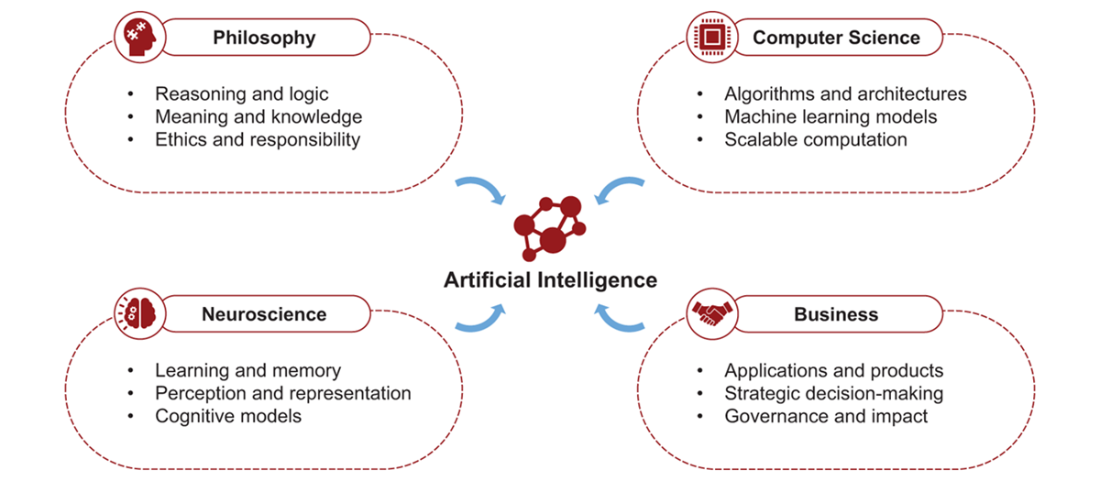

Artificial intelligence emerges from the convergence of several disciplines. Philosophy explores reasoning, meaning, and ethics; neuroscience studies how minds learn and perceive; computer science builds the algorithms and computational systems that make intelligent behavior possible; and business shapes how these technologies are applied and governed in the real world.

Selected milestones discussed in this chapter. The timeline shows how the idea of machine intelligence has evolved from early mechanical illusions to modern language models. These events frame the historical and philosophical themes explored in the sections that follow.

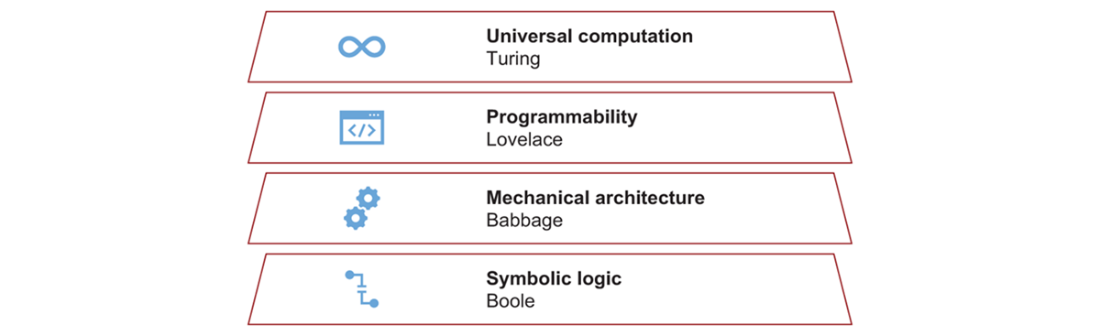

Foundations of modern computation. Modern computing emerged from several conceptual breakthroughs: Boole’s symbolic logic, Babbage’s mechanical computing architecture, Lovelace’s idea of programmable machines, and Turing’s concept of universal computation.

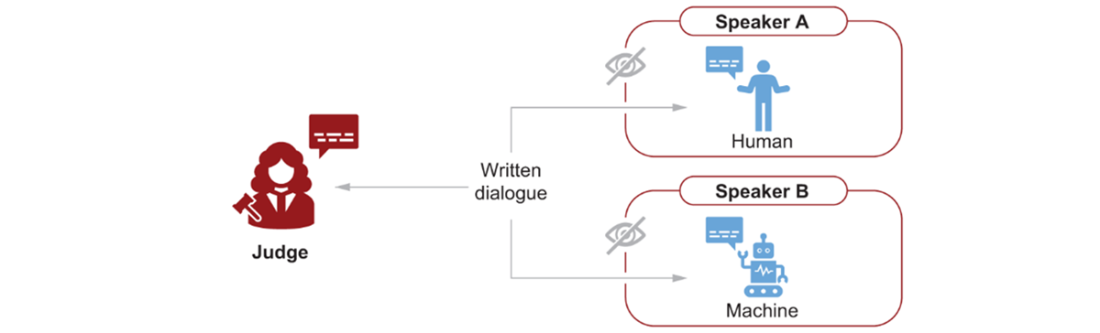

The Turing Test. A human judge engages in written dialogue with two unseen participants. One is human and the other a machine, but their identities are hidden from the judge. If the judge cannot reliably distinguish the machine from the human based on their responses, the machine is said to pass the test.

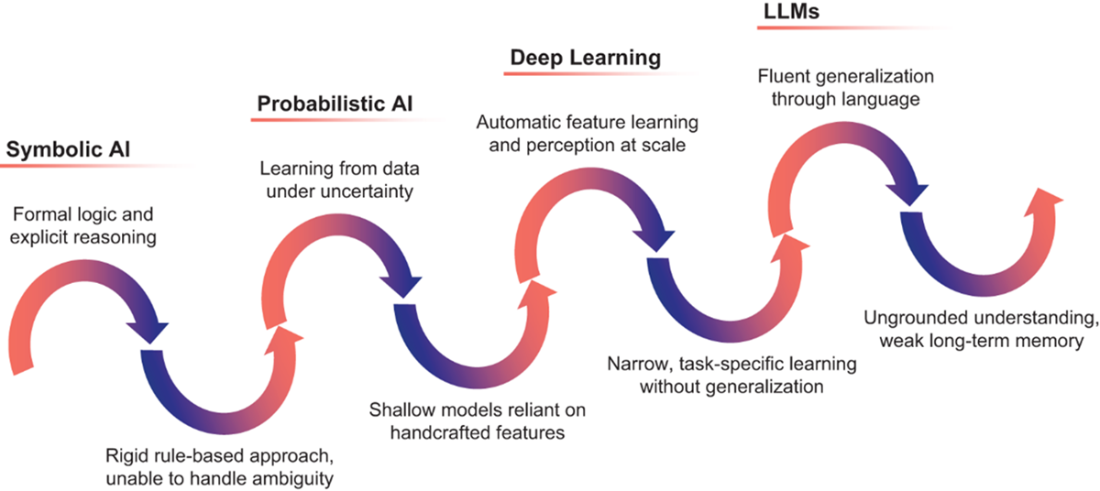

Cycles of AI progress. The wave pattern represents the recurring dynamic in which peaks mark moments when a new approach expands what machines can do, while the valleys reflect the limitations that motivate the next generation of techniques. The sequence traces the field’s evolution from symbolic AI (rule-based expert systems), to probabilistic models (such as Bayesian networks), to deep learning (neural networks used for perception tasks), and finally to large language models such as GPT. Earlier approaches rarely disappear entirely; symbolic reasoning, for example, continues to reappear in hybrid systems that combine structured knowledge with modern learning-based models.

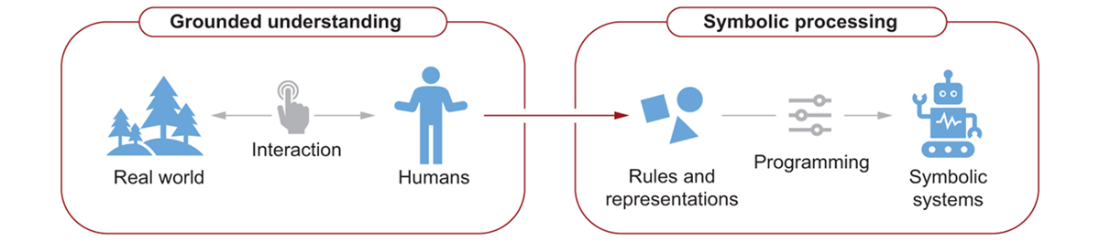

Grounded understanding and symbolic AI. Human intelligence emerges from interaction with the real world, where perception and experience shape understanding. Symbolic AI systems instead operate on rules and representations extracted from human knowledge and encoded into machines, allowing them to manipulate symbols without direct engagement with the environments those symbols describe.

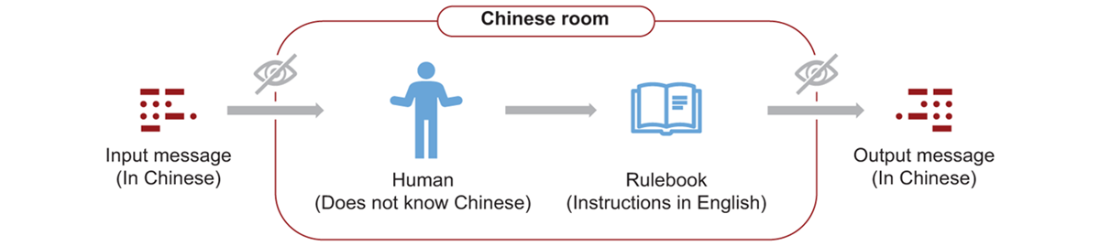

The Chinese Room thought experiment. A person who does not understand Chinese sits inside a room with a rulebook written in English that explains how to manipulate Chinese symbols. By following these instructions, the person produces correct responses to messages written in Chinese. To an external observer the replies appear meaningful, even though no one inside the room understands the language.

Summary

- Artificial intelligence is shaped by insights from philosophy, psychology, computer science, and business, reflecting the interdisciplinary nature of intelligence as an object of study.

- The ability of modern AI systems to generate fluent language creates a powerful impression of understanding, renewing the question of what kind of intelligence, if any, machines possess.

- Asking whether machines think is not only a philosophical concern but also a practical one that shapes how we use, build, and evaluate intelligent systems.

- The idea of thinking machines originated in ancient myths and philosophical thought, long before the invention of modern computers.

- The foundations of modern computation emerged from the fusion of logic, mechanical execution, and symbolic abstraction developed in the nineteenth century.

- Alan Turing’s concept of the universal machine demonstrated that a single machine could, in principle, carry out any rule-based process supplied to it as instructions, giving rise to the distinction between software and hardware.

- The Turing Test reframed machine intelligence as a matter of observable behavior rather than metaphysical essence.

- Rule-based systems initially aimed to replicate human thought, but they failed when confronted with ambiguity, contradiction, and incomplete information.

- Probabilistic models and learning algorithms enabled machines to reason under uncertainty, shifting the focus from logic to inference.

- The combination of massive data availability and computing power has enabled modern AI systems to learn complex behaviors at scale.

- As AI systems grow in complexity, their inner workings become less transparent: gains in capability often come at the cost of understanding how the systems operate.

- Artificial intelligence progresses in cycles, in which the failure of one paradigm often leads to the birth of another.

- Humans are prone to mistake fluency for comprehension, especially when machines demonstrate high-level language or strategic behavior.

- Embodied critiques of AI, such as Dreyfus’s, emphasize that intelligence may depend on sensory experience and context.

- The Chinese Room argument challenges the idea that manipulating symbols can produce genuine understanding by distinguishing syntax from semantics.

- Large language models create a statistical form of semantics that maps linguistic relationships without experiential grounding.

- Each stage in AI development redefines what counts as intelligence, turning machines into experimental mirrors of human cognition.

- When imitation becomes functionally indistinguishable from understanding, traditional criteria for intelligence come under pressure.

Machines that Think ebook for free
