1 Getting started with MLOps and ML engineering

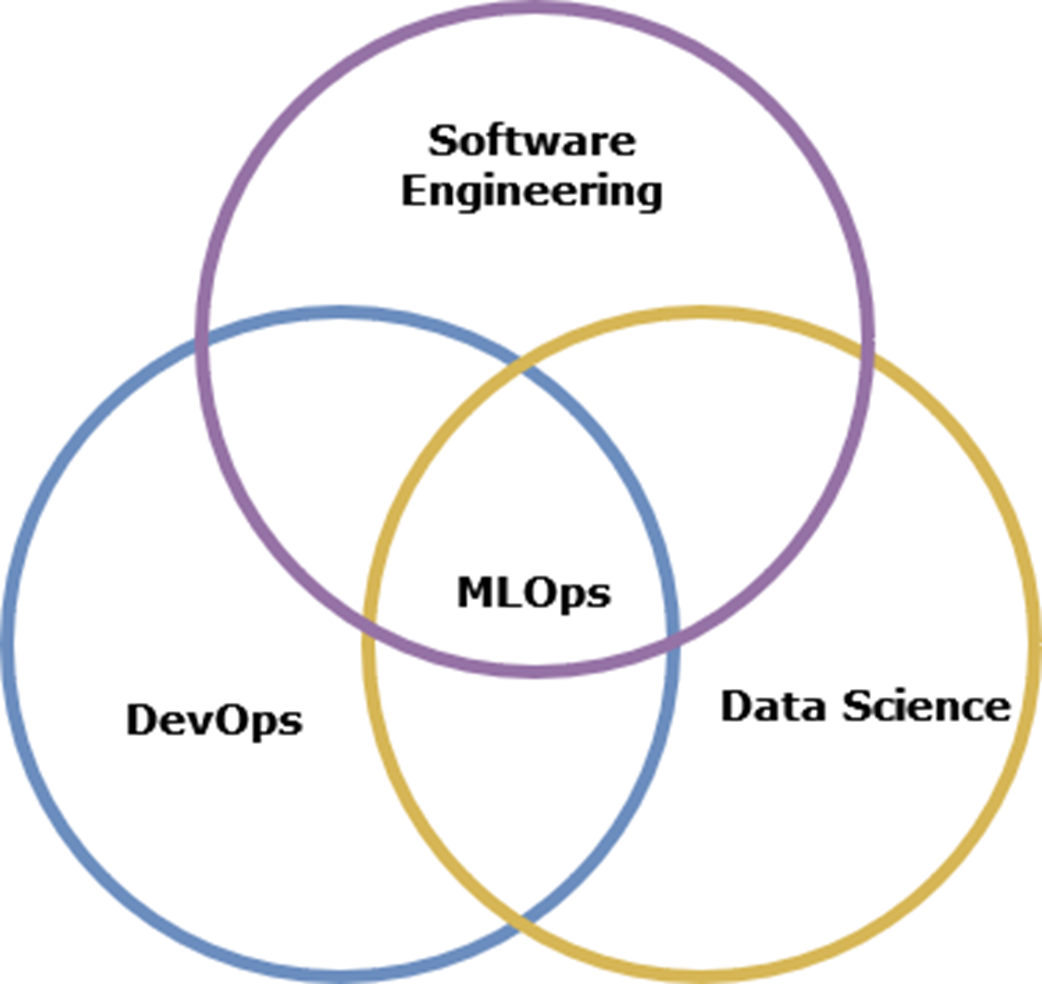

This chapter sets the stage for building production-grade machine learning systems by bridging the gap between model development and real-world operations. It introduces MLOps as the discipline that makes ML reliable, scalable, and auditable in practice, emphasizing that most failures stem from system and process issues rather than model complexity. With a hands-on, iterative approach, the book aims to turn readers—whether data scientists, software engineers, or aspiring ML engineers—into confident practitioners who can take projects from conception to deployment and continuous improvement.

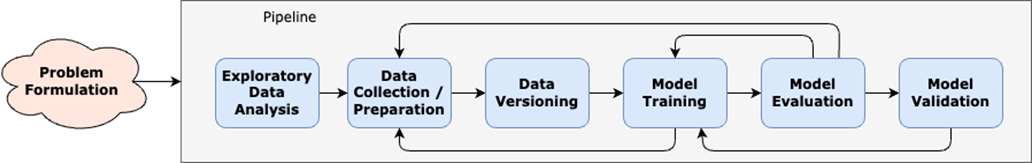

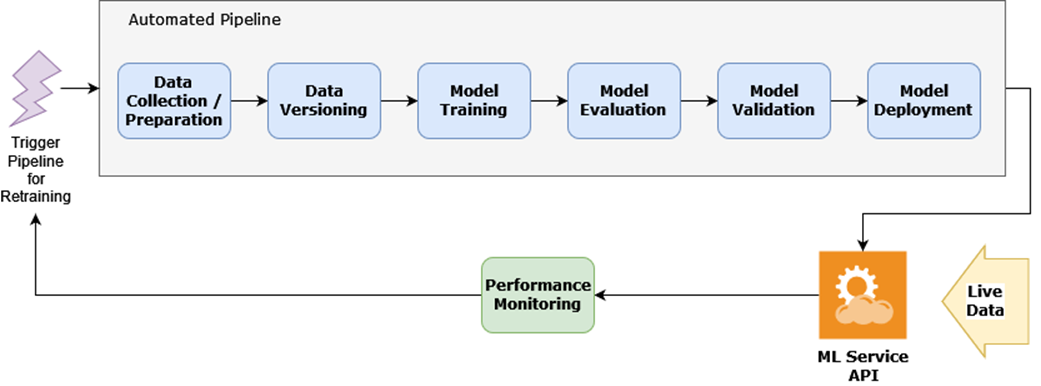

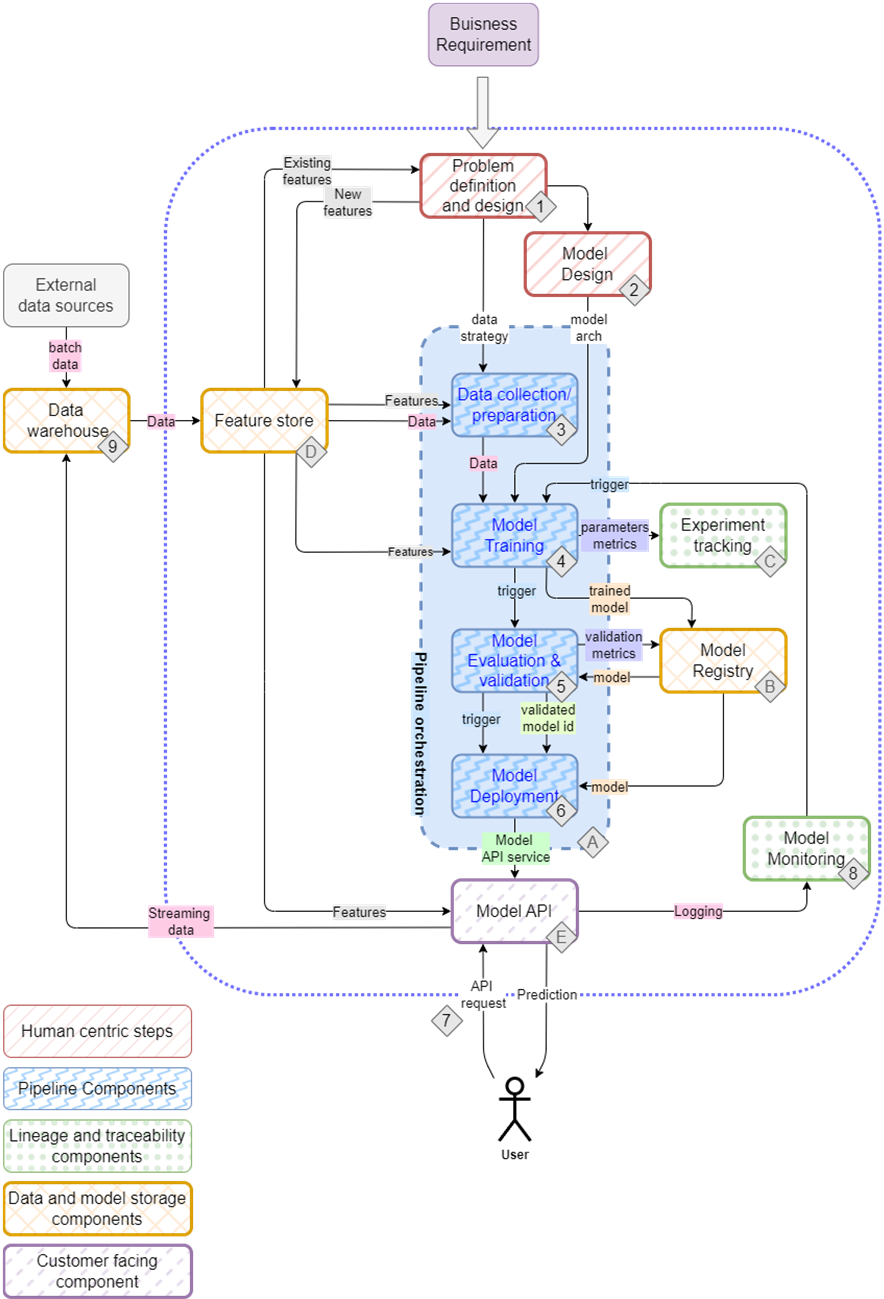

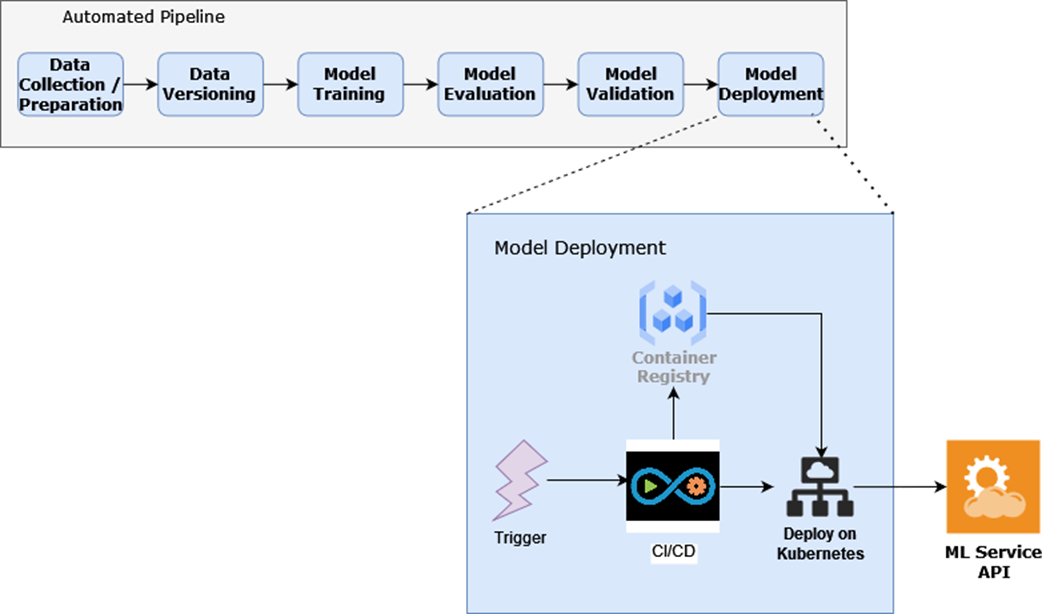

Central to the chapter is the end-to-end ML life cycle. It begins with problem formulation and data work (collection, labeling, and versioning), then proceeds through training, evaluation, and business-oriented validation—steps that are inherently iterative and best orchestrated via automated pipelines. The chapter then distinguishes the dev/staging/production phase, where full automation, CI-triggered workflows, and deployment practices (APIs, containers, cloud, versioned rollouts) take over. Robust monitoring spans both system and ML-specific signals—throughput, errors, drift, and business KPIs—feeding into policies for model retraining and safe promotion. Reproducibility, reliability, and automation are highlighted as non-negotiables throughout.
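The following minimal sketch makes drift-triggered retraining concrete. It computes one common drift score, the Population Stability Index (PSI), for a single numeric feature. PSI is our choice for illustration; the chapter does not prescribe it. The equal-width binning, the 1e-6 floor for empty bins, and the 0.2 retraining threshold are all assumptions. The 0.2 value is a frequently quoted rule of thumb, not a standard.

```python
import math
import random
from typing import Sequence


def psi(reference: Sequence[float], live: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index (PSI) of one numeric feature.

    Bin edges are equal-width over the reference (training) range; live values
    outside that range are clamped into the first or last bin.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid dividing by zero for a constant feature

    def shares(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps empty bins from producing log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    ref, cur = shares(reference), shares(live)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


def should_retrain(drift_score: float, threshold: float = 0.2) -> bool:
    """Hypothetical retraining policy: flag the model once PSI crosses a threshold."""
    return drift_score >= threshold


if __name__ == "__main__":
    rng = random.Random(42)
    train = [rng.gauss(0.0, 1.0) for _ in range(5000)]
    stable = [rng.gauss(0.0, 1.0) for _ in range(5000)]
    shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]
    print("stable:", round(psi(train, stable), 3), should_retrain(psi(train, stable)))
    print("shifted:", round(psi(train, shifted), 3), should_retrain(psi(train, shifted)))
```

In practice you would compute a score like this per feature on a schedule. You would then feed it into the retraining policy alongside system metrics and business KPIs, rather than retraining on a single number.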

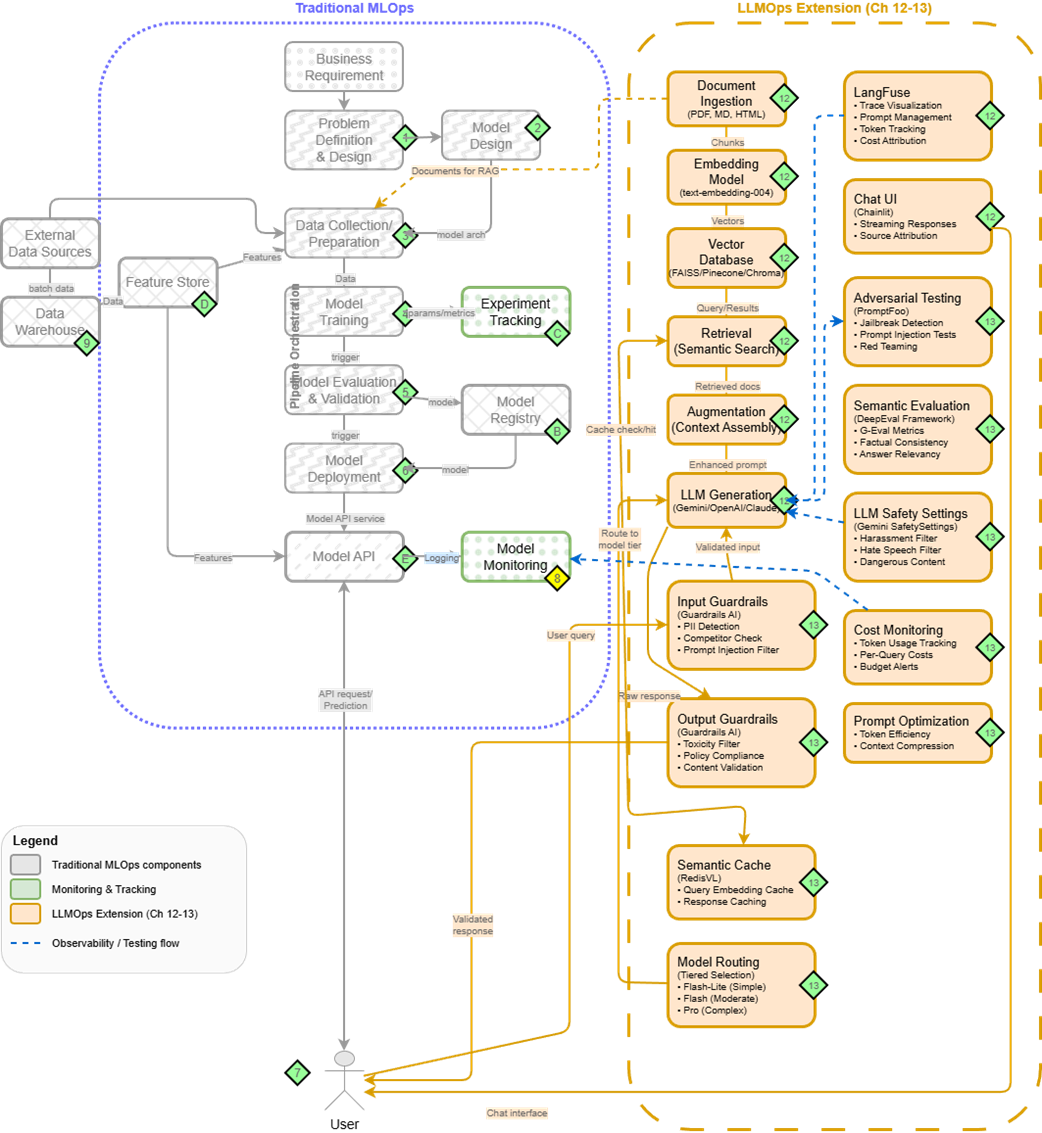

The chapter also maps the skills and platform foundations needed for MLOps: solid software engineering, practical ML know-how, data engineering awareness, and strong automation practices, with Kubernetes as a useful backbone. It introduces an incremental ML platform built around Kubeflow (notebooks and pipelines) and complemented by components such as a feature store, a model registry, container registries, and CI/CD—while noting that tool choices are contextual and “build vs buy” is a pragmatic decision. Finally, it previews three projects that anchor these concepts: an OCR system, a tabular movie recommender (showcasing feature stores, testing, and drift detection), and a RAG-based documentation assistant that extends the same MLOps foundation to LLMOps with vector search, guardrails, and cost-aware operations.
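You can sketch the pipeline idea without any orchestrator. The example below is a stdlib-only stand-in for what Kubeflow Pipelines does at much larger scale. Steps declare their dependencies, run in topological order, and pass their outputs downstream. The example also tags the trained model with a content hash of its input data, which is one simple way to get data versioning. The step names, the toy dataset, and the "training" step (a one-parameter least-squares fit) are all hypothetical. This is not the Kubeflow Pipelines API.

```python
import hashlib
import json
from graphlib import TopologicalSorter  # Python 3.9+


def dataset_version(rows: list) -> str:
    """Content hash of a dataset: identical data always yields the same version ID."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


# Hypothetical steps: each receives a dict of its upstream steps' outputs.
def ingest(_deps):
    return [{"x": 1.0, "y": 2.1}, {"x": 2.0, "y": 3.9}, {"x": 3.0, "y": 6.2}]


def version(deps):
    return dataset_version(deps["ingest"])


def train(deps):
    # Least-squares slope through the origin, standing in for real training.
    rows = deps["ingest"]
    slope = sum(r["x"] * r["y"] for r in rows) / sum(r["x"] ** 2 for r in rows)
    return {"slope": slope, "data_version": deps["version"]}


def evaluate(deps):
    rows, model = deps["ingest"], deps["train"]
    mse = sum((model["slope"] * r["x"] - r["y"]) ** 2 for r in rows) / len(rows)
    return {"mse": mse, "data_version": model["data_version"]}


STEPS = {"ingest": ingest, "version": version, "train": train, "evaluate": evaluate}
DEPENDS_ON = {
    "version": {"ingest"},
    "train": {"ingest", "version"},
    "evaluate": {"ingest", "train"},
}


def run_pipeline() -> dict:
    results = {}
    # static_order() yields every step after all of its dependencies.
    for step in TopologicalSorter(DEPENDS_ON).static_order():
        deps = {name: results[name] for name in DEPENDS_ON.get(step, set())}
        results[step] = STEPS[step](deps)
    return results


if __name__ == "__main__":
    out = run_pipeline()
    print(out["version"], out["evaluate"])
```

The version ID is derived from the data itself. Rerunning the pipeline on unchanged data therefore reproduces the same ID, while any edit to the data yields a new one.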

The experimentation phase of the ML life cycle

The dev/staging/production phase of the ML life cycle

MLOps is a mix of different skill sets

The mental map of an ML setup, detailing the project flow from planning to deployment and the tools typically involved in the process

Traditional MLOps (right) extended with LLMOps components (left) for production LLM systems. Chapters 12-13 explore these extensions in detail.

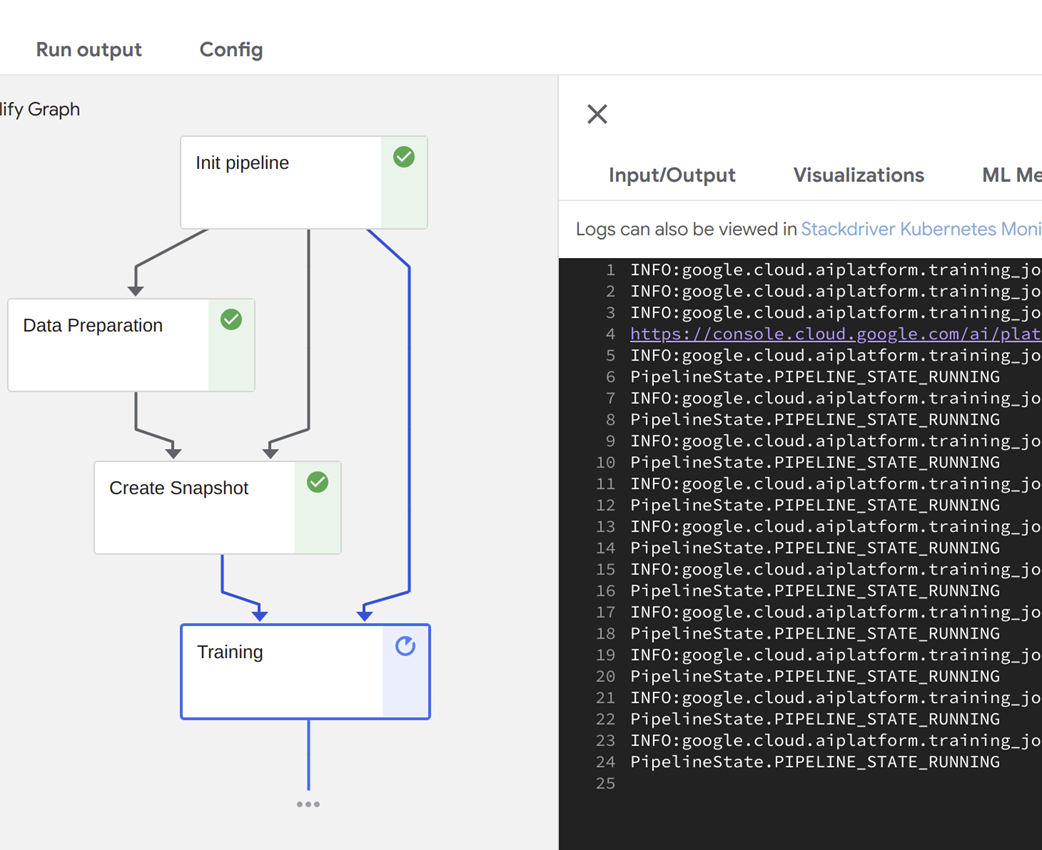

An automated pipeline being executed in Kubeflow.

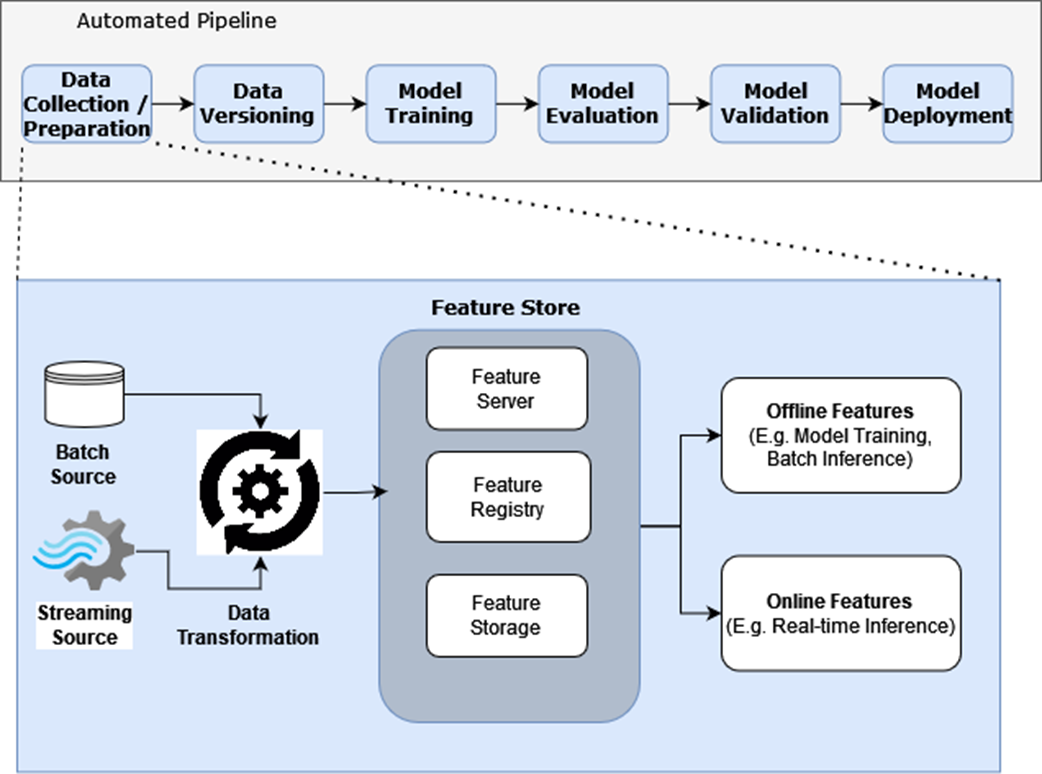

Feature stores take in transformed data (features) as input and provide facilities to store, catalog, and serve them.
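A small in-memory class can illustrate a feature store's three jobs: store, catalog, and serve. This is a hypothetical toy, not Feast's API. The class and method names are ours, and a real store adds offline and online storage, materialization, and much more. The `as_of` lookup shows why stores keep timestamps. When you build training data, you want each feature value as it was at that point in time, not a later value that would leak future information into the model.

```python
from bisect import bisect_right
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional


@dataclass
class FeatureDefinition:
    name: str
    dtype: type
    description: str = ""


@dataclass
class ToyFeatureStore:
    catalog: dict = field(default_factory=dict)   # feature name -> FeatureDefinition
    _history: dict = field(default_factory=dict)  # (entity_id, feature) -> [(ts, value)]

    def register(self, feature: FeatureDefinition) -> None:
        """Catalog: record what a feature is before any values are stored."""
        self.catalog[feature.name] = feature

    def ingest(self, entity_id: str, ts: datetime, values: dict) -> None:
        """Store: append timestamped values, rejecting unregistered or mistyped features."""
        for name, value in values.items():
            if name not in self.catalog:
                raise KeyError(f"feature {name!r} is not registered")
            if not isinstance(value, self.catalog[name].dtype):
                raise TypeError(f"feature {name!r} expects {self.catalog[name].dtype.__name__}")
            rows = self._history.setdefault((entity_id, name), [])
            rows.append((ts, value))
            rows.sort(key=lambda row: row[0])

    def get_features(self, entity_id: str, names: list, as_of: Optional[datetime] = None) -> dict:
        """Serve: latest value per feature, or the latest at or before `as_of`."""
        result = {}
        for name in names:
            rows = self._history.get((entity_id, name), [])
            if as_of is not None:
                rows = rows[: bisect_right([ts for ts, _ in rows], as_of)]
            result[name] = rows[-1][1] if rows else None
        return result


if __name__ == "__main__":
    store = ToyFeatureStore()
    store.register(FeatureDefinition("avg_rating", float, "mean rating a user has given"))
    store.ingest("user_1", datetime(2024, 1, 1), {"avg_rating": 3.5})
    store.ingest("user_1", datetime(2024, 2, 1), {"avg_rating": 4.0})
    print(store.get_features("user_1", ["avg_rating"]))
    print(store.get_features("user_1", ["avg_rating"], as_of=datetime(2024, 1, 15)))
```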

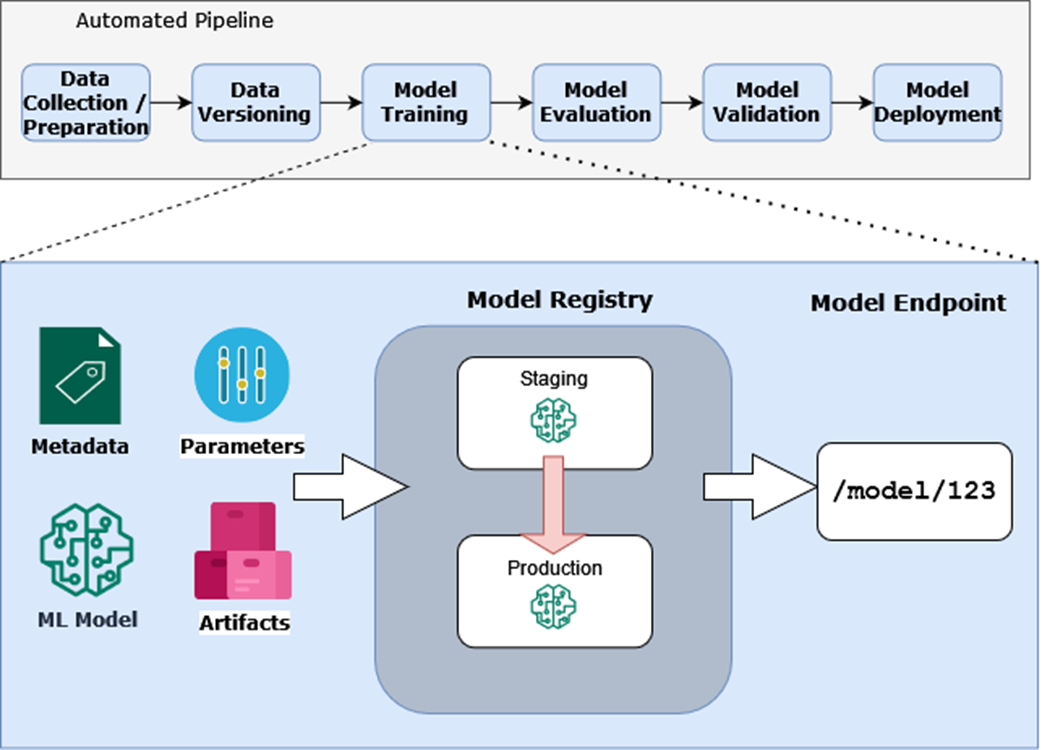

The model registry captures metadata, parameters, artifacts, and the ML model, and in turn exposes a model endpoint.
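A model registry's core bookkeeping also fits in a few lines. The sketch below is hypothetical and is not MLflow's API. The class names, the stage labels, and the use of SHA-256 artifact fingerprints are our own assumptions. Each registration gets an incrementing version number along with its parameters, metrics, and an artifact fingerprint. The `resolve` method answers the question a serving endpoint needs answered: which version is currently in production?

```python
import hashlib
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ModelVersion:
    name: str
    version: int
    params: dict
    metrics: dict
    artifact_sha256: str  # fingerprint of the serialized model artifact
    stage: str = "None"


@dataclass
class ToyModelRegistry:
    _versions: dict = field(default_factory=dict)  # model name -> list of ModelVersion

    def register(self, name: str, artifact: bytes, params: dict, metrics: dict) -> ModelVersion:
        versions = self._versions.setdefault(name, [])
        mv = ModelVersion(
            name=name,
            version=len(versions) + 1,  # versions are numbered 1, 2, 3, ...
            params=dict(params),
            metrics=dict(metrics),
            artifact_sha256=hashlib.sha256(artifact).hexdigest(),
        )
        versions.append(mv)
        return mv

    def promote(self, name: str, version: int, stage: str = "Production") -> None:
        # Keep at most one version per stage: archive whichever version held it before.
        for mv in self._versions[name]:
            if mv.stage == stage:
                mv.stage = "Archived"
        self._versions[name][version - 1].stage = stage

    def resolve(self, name: str, stage: str = "Production") -> Optional[ModelVersion]:
        """What a serving layer would ask: which version should back this endpoint?"""
        for mv in self._versions.get(name, []):
            if mv.stage == stage:
                return mv
        return None


if __name__ == "__main__":
    registry = ToyModelRegistry()
    registry.register("ocr", b"weights-v1", {"lr": 0.01}, {"cer": 0.08})
    registry.register("ocr", b"weights-v2", {"lr": 0.005}, {"cer": 0.05})
    registry.promote("ocr", 2)
    print(registry.resolve("ocr"))
```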

Model deployment consists of the container registry, CI/CD, and automation working in concert to deploy ML services.
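One part of deployment automation that benefits from a concrete example is the versioned rollout. A common approach, sketched below under our own assumptions, is a canary release. A small, fixed share of traffic goes to the new model image, and the rest goes to the current one. The function name, the image tags, and the 10% share are hypothetical. Hashing the user ID keeps each user on one version for the duration of the rollout.

```python
import hashlib


def route(request_key: str, stable: str, candidate: str, canary_percent: float) -> str:
    """Pick which model version serves a request during a canary rollout.

    Hashing the key (for example, a user ID) gives sticky assignment: the same
    key always lands on the same version while the canary share is unchanged.
    """
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # near-uniform bucket in 0..9999
    return candidate if bucket < canary_percent * 100 else stable


if __name__ == "__main__":
    # Hypothetical image tags pulled from a container registry.
    stable_tag, candidate_tag = "recommender:1.4.0", "recommender:1.5.0"
    users = [f"user-{i}" for i in range(20_000)]
    hits = sum(route(u, stable_tag, candidate_tag, 10) == candidate_tag for u in users)
    print(f"candidate share: {hits / len(users):.3f}")
```

If the candidate's error rates and ML metrics hold up, CI/CD can raise `canary_percent` step by step until the new version serves all traffic.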

Summary

- The machine learning (ML) life cycle provides a framework for confidently taking ML projects from idea to production. Although the life cycle is iterative, understanding each phase helps you navigate the complexities of ML development.

- Building reliable ML systems requires a combination of skills spanning software engineering, MLOps, and data science. Rather than trying to master everything at once, focus on understanding how these skills work together to create robust ML systems.

- A well-designed ML platform forms the foundation for confidently developing and deploying ML services. We'll use tools like Kubeflow Pipelines for automation, MLflow for model management, and Feast for feature management, and we'll learn how to integrate them effectively for production use.

- We'll apply these concepts by building an OCR system and a movie recommender. Through these projects, you'll gain hands-on experience with both image and tabular data and build confidence in handling diverse ML challenges. A third project, a RAG-based documentation assistant, extends the same foundation to LLMOps.

- Traditional MLOps principles extend naturally to large language models (LLMs) through LLMOps, which adds components for document processing, retrieval systems, and specialized monitoring. Understanding this evolution prepares you for the modern ML landscape.

- The first step is to identify the problem the ML model is going to solve, followed by collecting and preparing the data to train and evaluate the model. Data versioning enables reproducibility, and model training is automated using a pipeline.

- The ML life cycle serves as our guide throughout the book, helping us understand not just how to build models, but how to create reliable, production-ready ML systems that deliver real business value.

Machine Learning Platform Engineering ebook for free