1 Why we need a new way to test AI opportunities

Artificial intelligence has become a competitive necessity, with spending and adoption surging across industries, yet most initiatives still fail to turn promise into performance. The core problem is not a lack of data or algorithms but a persistent gap between opportunity and execution, which leads to wasted investment, user fatigue, and eroded trust. Although proven practices exist—hypothesis-driven problem solving, Design Thinking, maturity assessments, and exploratory data analysis—they are rarely integrated into a single, practical, unbiased process used before building. This chapter argues for an early, rigorous “AI Road Test” that evaluates value, feasibility, risks, and compliance up front, so organizations commit only to opportunities that can scale ethically, securely, and profitably.

Failures tend to repeat for familiar reasons: solving the wrong problem, misfitting user needs and literacy, weak stakeholder alignment, insufficient talent and infrastructure to operate at scale, poor or biased data, and an ill-suited analytical approach. The Zillow Offers case exemplifies these traps: an algorithm optimized for current prices was used to bet on future resale values in a volatile market, seller self-reporting biased inputs, and growth incentives overrode controls—turning a strong dataset and model into a costly business miss. These patterns are preventable when teams “hear” three critical voices—the user (workflows, adoption, decision horizon), the enterprise (maturity, guardrails, capacity), and the data (quality, bias, drift)—and when they actively counter cognitive biases. Root-cause analysis shows most breakdowns stem from unawareness, inability, or bias—problems a deliberate testing process can surface and mitigate before stakes and sunk costs escalate.

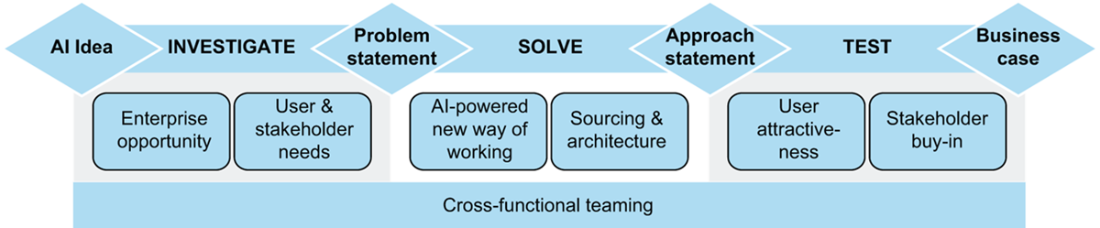

The proposed AI Road Test unifies best-practice tools into a bias-resistant, pre–proof of concept discipline: it clarifies the business problem with issue trees, uncovers user and stakeholder needs with Design Thinking, profiles data via EDA, and aligns organization and tech readiness through maturity and architecture assessments. It forces early, high-impact choices—scope, build vs. buy vs. rent, interpretability and causality needs, regulatory and ethical constraints—and enforces objective stage gates (problem statement, approach statement, business case) that enable GO/NO GO/PIVOT decisions. Far from slowing teams, this upfront clarity accelerates delivery, boosts motivation by tying a clear “to‑be” state to tangible benefits, and preserves flexibility to pivot when plan A falters. Traditional frameworks like CRISP-DM and TDSP remain useful foundations but are too high-level, ML-centric, and light on bias, compliance, and off‑the‑shelf options for today’s realities. A disciplined road test helps organizations select the right use cases—often in core functions where value concentrates—reduce waste, and consistently convert AI’s potential into durable business impact.
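
The gated GO/NO GO/PIVOT decision described above can be sketched in a few lines. This is a minimal illustration, not the book's actual scoring scheme: the `GateResult` fields, the `fixable` flag, and the decision rule in `decide` are all hypothetical assumptions; only the three gate names come from the text.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    GO = "GO"
    NO_GO = "NO GO"
    PIVOT = "PIVOT"


@dataclass
class GateResult:
    """Outcome of one stage gate (gate names follow the chapter)."""
    gate: str             # e.g. "problem statement"
    passed: bool          # did the gate meet its agreed, objective criteria?
    fixable: bool = True  # hypothetical flag: could a rescoped plan pass?


def decide(results: list[GateResult]) -> Decision:
    """Hypothetical rule: GO if every gate passes, PIVOT if every
    failure is fixable, otherwise NO GO."""
    failures = [r for r in results if not r.passed]
    if not failures:
        return Decision.GO
    if all(r.fixable for r in failures):
        return Decision.PIVOT
    return Decision.NO_GO


gates = [
    GateResult("problem statement", passed=True),
    GateResult("approach statement", passed=False, fixable=True),
    GateResult("business case", passed=True),
]
print(decide(gates).value)  # PIVOT
```

Writing the rule down before the evidence comes in is the point of the sketch: the criteria are fixed first, so the decision is harder to bend toward a favored outcome.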

The AI Road Test mental model

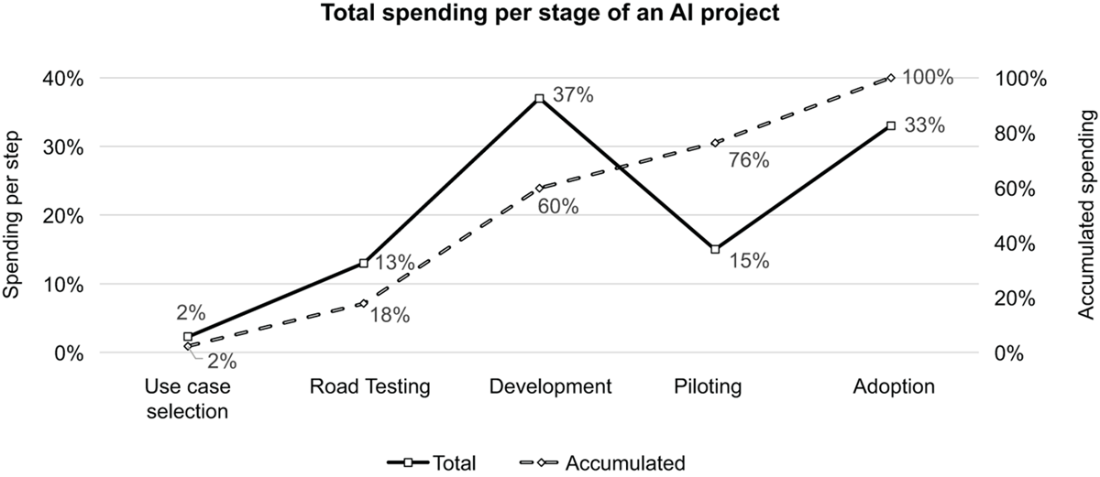

Percentage of time spent by the project team in each phase of a typical AI project

Summary

- AI can go spectacularly wrong if improperly tested. Zillow’s failed use of AI-generated property valuations in its homebuying division, known as Zillow Offers, resulted in losses exceeding $300 million and the elimination of 2,000 jobs.

- Data analytics and AI can improve decisions and create significant economic value in all business functions and sectors of activity, if properly managed.

- AI investments are rising fast and are expected to reach $480 billion in 2026, and most enterprises have now adopted some form of AI.

- However, more than 80% of analytical projects fail to deliver value, and the failure rate seems to be getting worse.

- The main reasons for failure are known and can be traced back to a few, often preventable, root causes.

- The identification and mitigation of flaws in AI projects should happen as early as possible, before any Proof of Concept (PoC), for higher rates of success, speed and ROI.

- The reference methodology for the management of analytical projects – CRISP-DM – is too high-level and outdated to test AI opportunities effectively.

FAQ

Why do we need a new way to test AI opportunities?

Because most AI initiatives don’t turn promise into performance. Failure rates exceed 80%, often due to misaligned problems, weak user fit, biased or poor data, inadequate infrastructure, and cognitive biases. Existing project methods are too high-level and assume custom model development, neglecting out-of-the-box options, ethics, and regulation. A new, integrated way is needed to vet ideas holistically before investing.

What is the AI Road Test?

A structured, evidence-based process to evaluate AI opportunities before a PoC. It integrates proven tools (issue trees, Design Thinking, analytics maturity assessment, EDA, Agile, ADKAR, Pyramid Principle) and enforces stage gates with objective criteria (problem statement, approach statement, and business case) to reduce risk and bias while maximizing impact and adoption.

How does the AI Road Test differ from CRISP-DM or TDSP?

- It goes deeper than high-level lifecycle steps, giving practical “how-to” tools for scoping, user discovery, data profiling, and communication.

- It considers develop vs. buy vs. rent from the start (not just custom ML).

- It embeds compliance, ethics, and modern risk factors.

- It explicitly mitigates cognitive biases with gated, criteria-driven decisions.

Why do so many AI projects fail—and what are the main causes?

Seven recurring causes:

- The solution doesn’t solve a business-relevant problem.

- Poor fit with user needs or AI literacy.

- Weak stakeholder support.

- Insufficient human resources to maintain or scale.

- Inadequate tech infrastructure for sustainable scaling.

- Low-quality or biased data.

- Inappropriate analytical approach for the problem and data.

What root causes sit behind these failures?

Three roots explain most breakdowns:

- Unawareness: teams miss key risks or success criteria.

- Inability: teams lack methods or capabilities to mitigate risks (e.g., stakeholder engagement).

- Bias: teams make distorted choices (e.g., chasing the newest tech over the right one).

The project team is accountable for surfacing constraints early and acting on them: postpone, phase, adapt, or stop.

What are the key lessons from the Zillow Offers failure?

- The model predicted “now,” but the business needed “resale months later” (t + Δt).

- Biased inputs (self-reported property conditions) inflated valuations.

- The team scaled during unprecedented market shifts without recalibration.

- Growth targets loosened controls, compounding overpayment.

- The AI worked as designed—but not for the business it served. Holistic, pre-PoC testing could have surfaced these risks.
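
The gap between predicting “now” and reselling at t + Δt can be made concrete with a small calculation. This is a sketch under stated assumptions: the function `resale_margin`, the constant monthly price change, and every number below are hypothetical illustration values, not Zillow data.

```python
def resale_margin(purchase_price: float,
                  monthly_price_change: float,
                  holding_months: int,
                  cost_rate: float) -> float:
    """Profit or loss when a home bought at today's price is resold later.

    All parameters are hypothetical illustration values, not Zillow data.
    """
    # Price at t + Δt, assuming a constant monthly change (a simplification).
    resale_price = purchase_price * (1 + monthly_price_change) ** holding_months
    # Holding, renovation, and transaction costs as a share of the purchase price.
    costs = purchase_price * cost_rate
    return resale_price - purchase_price - costs


# A valuation that is exactly right at time t...
price_now = 400_000.0
# ...still loses money if prices slip 1% a month over a 6-month hold.
margin = resale_margin(price_now, -0.01, 6, 0.05)
print(margin < 0)  # True: a loss despite a "correct" current-price estimate
```

Even a perfectly accurate current-price model leaves the outcome driven by `monthly_price_change`, which is the quantity the business actually needed to forecast.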

When should testing happen—and what won’t a PoC fix?

The window is before any PoC. A PoC checks technical feasibility and user acceptance, not whether scope, approach, or economics are optimal. A PoC won’t fix a bad scope, the wrong analytical approach (e.g., when you need causality, not just correlation), or a late develop vs. buy vs. rent decision.

How do we “hear the voices” that matter during testing?

- Voice of the user: map workflows and decision horizons; ensure predictions align with when decisions are made; match UI and literacy.

- Voice of the enterprise: assess analytics maturity; add guardrails like human-in-the-loop if needed; align with strategy and capacity.

- Voice of the data: profile quality, representativeness, and governance; plan for recalibration and drift.
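
One way to listen to the voice of the data for drift is to compare a feature's distribution at training time with a more recent sample. The sketch below uses the Population Stability Index (PSI), a technique chosen here for illustration and not named in the chapter. The function name `psi`, the bin count, and the 0.25 alert level (a commonly quoted rule of thumb, not a standard) are assumptions.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a reference sample and a newer one."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width when all values are equal

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp values outside the reference range into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps log() defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


reference = [float(x) for x in range(100)]  # stand-in for training-time values
shifted = [x + 30.0 for x in reference]     # stand-in for drifted recent values

print(psi(reference, reference))       # 0.0: identical samples
print(psi(reference, shifted) > 0.25)  # True: flags a large shift
```

A check like this only signals that recalibration may be needed; deciding what to do about it remains a business and modeling judgment.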

How does early testing boost speed, motivation, and ROI?

- Speed: a clear to-be workflow, analytics support, and tech architecture serve as a shared blueprint that reduces rework and debate.

- Motivation: pairing the target state with quantified impact gives a compelling “why.”

- ROI: filtering out mirage projects and choosing fit-for-purpose approaches early raises success rates and value per use case.
