10 Asynchronous processing

This chapter explains how asynchronous processing hides latency when further reductions aren’t feasible. It contrasts synchronous, blocking workflows with async designs that initiate work without waiting for results, improving perceived responsiveness by overlapping I/O and computation. The chapter frames async as complementary to prior optimization techniques: instead of only shrinking absolute latency, it keeps systems responsive while slow operations proceed, and it introduces the core building blocks you need to make that practical at scale.

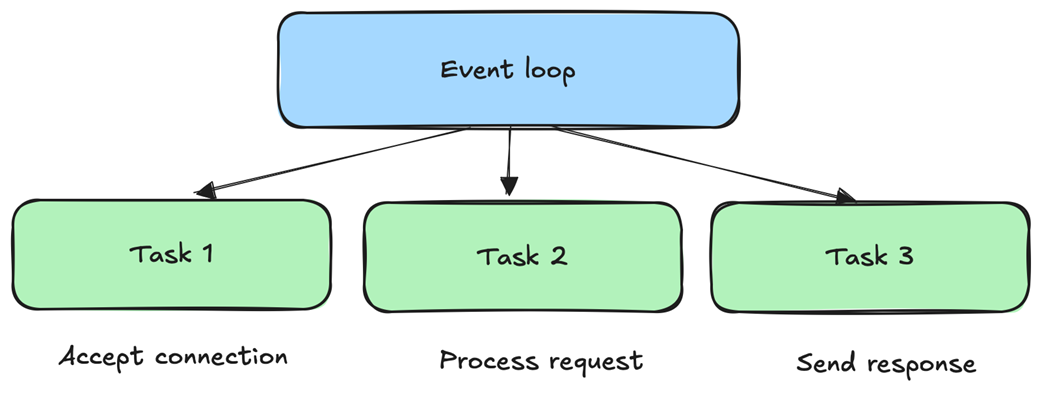

The event loop is presented as the heart of async systems: a dispatcher that polls OS multiplexing interfaces (such as epoll, kqueue, io_uring, or IOCP), processes readiness events, and runs scheduled tasks without blocking. Around this core, the chapter surveys async I/O techniques to hide or amortize latency—multiplexing many connections per thread, batching requests, hedging duplicates against tail spikes (with idempotency caveats), buffered I/O, and memory mapping. It then covers deferring non-critical work via scheduling, priority queues (with anti-starvation), and work stealing for load balance. Resource management is treated as essential to low latency: thread pools versus thread-per-core runtimes, careful memory buffer pooling, and connection pooling combined with asynchronous database queries to increase parallelism while controlling overhead.

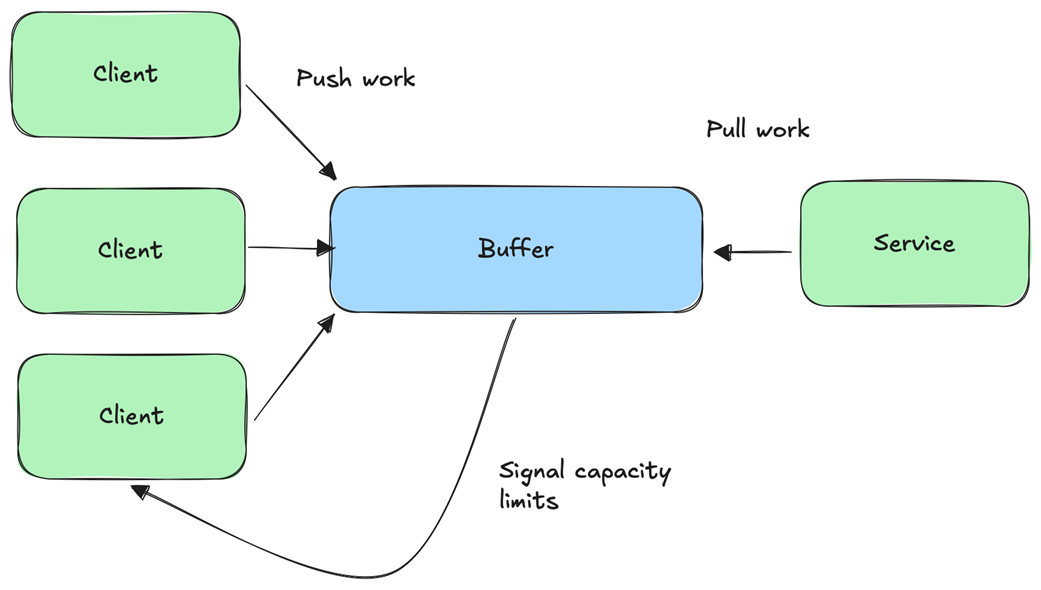

The chapter also addresses the hard parts: complexity, race conditions, and resource blow-ups if concurrency is left unchecked. It advocates backpressure to regulate producers—TCP window-based throttling, bounded buffering, and last-resort dropping or rate limiting—to keep service latency predictable. Robust error handling is required for partial failures, retries with exponential backoff, and safe idempotent operations, plus timeouts and cancellation with thorough cleanup. Finally, it emphasizes observability for async systems: distributed tracing with propagated context, and metrics that capture concurrency, queue depths, error categories, retry behavior, and resource utilization, along with latency decomposition across wait, queue, and processing stages. The takeaway is that async processing can dramatically improve perceived latency, but only with disciplined flow control, resilient error handling, and strong visibility.
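A minimal sketch of timeouts combined with retries and exponential backoff, using Python's `asyncio`. The `flaky_operation` function, the attempt count, the timeout, and the base delay are hypothetical values chosen for illustration, not taken from the chapter. `asyncio.wait_for` cancels the inner operation when the timeout expires, which is where cleanup of a cancelled task would happen.

```python
import asyncio

async def flaky_operation(attempt: int) -> str:
    # Hypothetical operation: the first two attempts hang, the third succeeds.
    if attempt < 3:
        await asyncio.sleep(10)
    return "ok"

async def call_with_retries(max_attempts: int = 4,
                            timeout: float = 0.05,
                            base_delay: float = 0.01):
    for attempt in range(1, max_attempts + 1):
        try:
            # wait_for cancels the operation if it exceeds the timeout.
            result = await asyncio.wait_for(flaky_operation(attempt), timeout)
            return attempt, result
        except asyncio.TimeoutError:
            if attempt == max_attempts:
                raise  # give up and surface the failure to the caller
            # Exponential backoff: base_delay, 2 * base_delay, 4 * base_delay, ...
            await asyncio.sleep(base_delay * 2 ** (attempt - 1))

attempts_used, result = asyncio.run(call_with_retries())
```

Retrying like this is only safe when the operation is idempotent, because a timed-out attempt may still have taken effect on the remote side.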

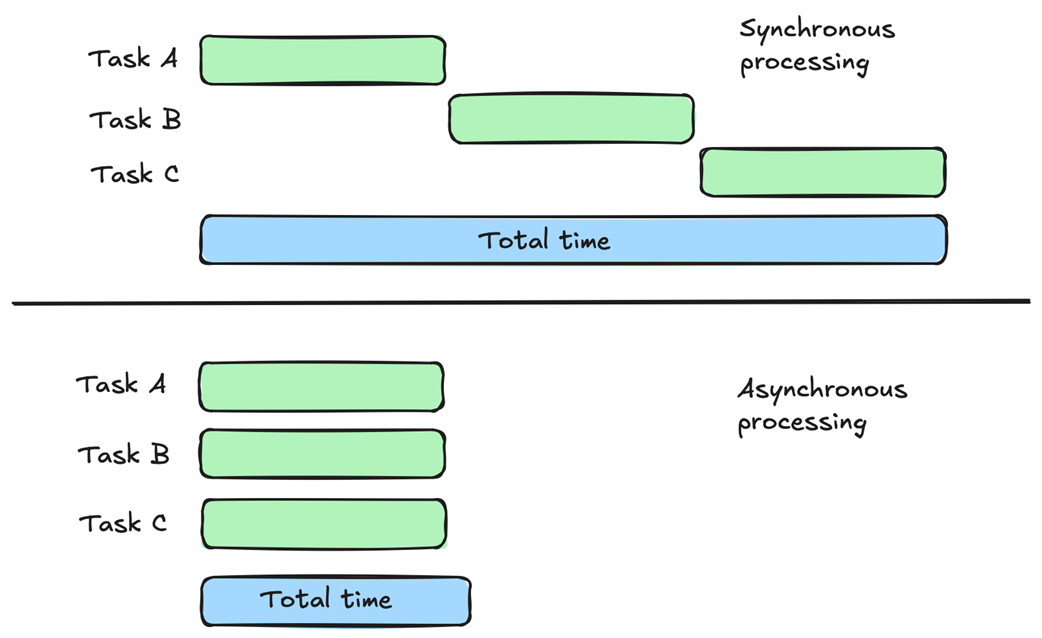

Synchronous vs asynchronous processing. With synchronous processing (at the top of the diagram), each task runs to completion before the next one starts, so the total time to run tasks A, B, and C is the sum of the individual task times. In contrast, with asynchronous processing (at the bottom of the diagram), all the tasks are started at the same time, so the total time is that of the slowest task. Asynchronous processing reduces latency when the tasks can execute in parallel.
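The difference between the two timelines can be sketched with `asyncio`. The task names follow the diagram, while the durations are hypothetical and `asyncio.sleep` merely stands in for a slow I/O operation.

```python
import asyncio
import time

# Hypothetical task durations in seconds, chosen only for illustration.
DURATIONS = {"A": 0.05, "B": 0.10, "C": 0.15}

async def task(name: str, seconds: float) -> str:
    await asyncio.sleep(seconds)  # stands in for a slow I/O operation
    return name

async def run_sequentially() -> float:
    start = time.perf_counter()
    for name, seconds in DURATIONS.items():
        await task(name, seconds)  # wait for each task before starting the next
    return time.perf_counter() - start

async def run_concurrently() -> float:
    start = time.perf_counter()
    # Start all tasks at once and wait for the slowest one.
    await asyncio.gather(*(task(n, s) for n, s in DURATIONS.items()))
    return time.perf_counter() - start

sequential_time = asyncio.run(run_sequentially())
concurrent_time = asyncio.run(run_concurrently())
```

Under these assumptions, `sequential_time` should be close to the sum of the durations and `concurrent_time` close to the longest one.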

The event loop breaks work down into individual tasks that execute when an event happens. In this example, the event loop processes three different tasks (accept connection, process request, and send response) as part of handling a request arriving from the network. Each task runs when an event, such as a socket becoming readable, happens.
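A small event loop along these lines can be sketched with Python's standard `selectors` module, which wraps the OS multiplexing interface available on the platform. To stay self-contained, this sketch uses a connected socket pair instead of a listening socket, so the accept step is omitted; the callback names and the uppercase "response" are illustrative assumptions.

```python
import selectors
import socket

sel = selectors.DefaultSelector()
log = []  # records which task ran, in order

def on_readable(conn: socket.socket) -> None:
    # Task: process request (runs when the socket becomes readable).
    request = conn.recv(1024)
    log.append("process request")
    response = request.upper()
    # Now wait for the socket to become writable before responding.
    sel.modify(conn, selectors.EVENT_WRITE, lambda c: on_writable(c, response))

def on_writable(conn: socket.socket, response: bytes) -> None:
    # Task: send response (runs when the socket becomes writable).
    conn.sendall(response)
    log.append("send response")
    sel.unregister(conn)

client, server = socket.socketpair()
server.setblocking(False)
sel.register(server, selectors.EVENT_READ, on_readable)
client.sendall(b"ping")  # the "request arriving from the network"

# The event loop: poll for readiness events and dispatch their callbacks.
while sel.get_map():
    for key, _events in sel.select(timeout=1.0):
        key.data(key.fileobj)

reply = client.recv(1024)
client.close()
server.close()
sel.close()
```

The loop never blocks on a single socket: it only calls a task once the selector reports that the corresponding event has happened.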

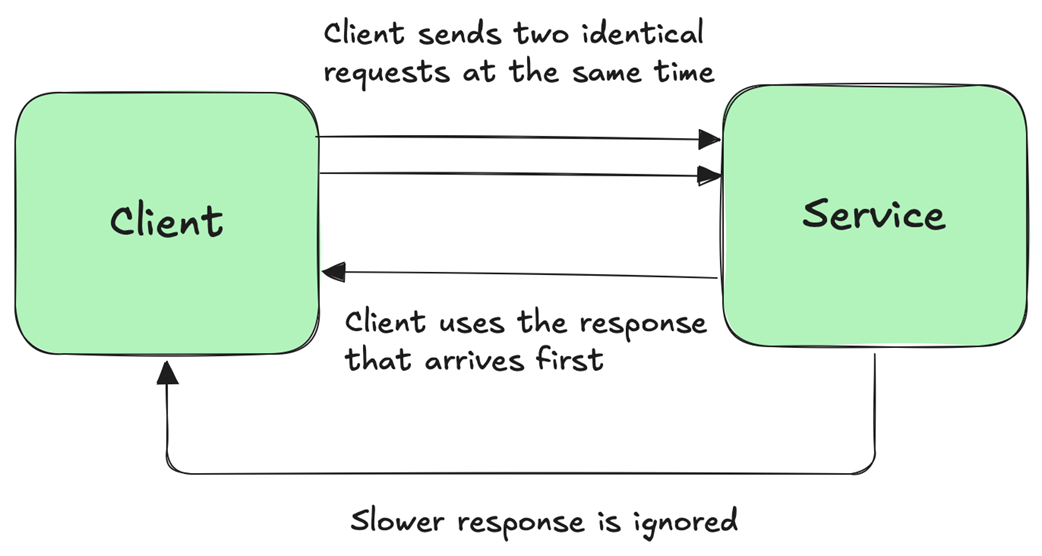

Request hedging is a latency-hiding technique where the client sends two or more copies of the same request. The client uses the response that arrives first and ignores the responses that arrive later. Responses can be late for many reasons: for example, the network path for some of the requests and responses may be slower than for others, or the messages may get queued somewhere along the path.
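Hedging can be sketched with `asyncio.wait` and `FIRST_COMPLETED`. The replica names and delays below are hypothetical, and `fetch_from` simulates a network call with a sleep. This sketch cancels the slower request rather than just ignoring it, which is one possible design choice.

```python
import asyncio

async def fetch_from(replica: str, delay: float) -> str:
    # Hypothetical request; the delay simulates a slow or fast network path.
    await asyncio.sleep(delay)
    return f"response from {replica}"

async def hedged_request() -> str:
    # Send two copies of the same request.
    attempts = [
        asyncio.create_task(fetch_from("replica-1", 0.20)),
        asyncio.create_task(fetch_from("replica-2", 0.02)),
    ]
    done, pending = await asyncio.wait(
        attempts, return_when=asyncio.FIRST_COMPLETED
    )
    # Use the first response and cancel the copies still in flight.
    for task in pending:
        task.cancel()
    await asyncio.gather(*pending, return_exceptions=True)
    return done.pop().result()

winner = asyncio.run(hedged_request())
```

Because the server may still process a cancelled or ignored copy, hedging is only safe for requests that are idempotent.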

Backpressure controls the flow of work from producer to consumer to avoid overwhelming the consumer. Clients push work to a server, which buffers it. The service itself pulls work from the buffer. The service also signals buffer capacity limits to the clients so that they know when to slow down.
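One simple way to sketch backpressure is a bounded `asyncio.Queue`: when the buffer is full, `put` suspends the producer until the consumer catches up. The capacity, item count, and processing delay are hypothetical values for illustration.

```python
import asyncio

BUFFER_CAPACITY = 2  # hypothetical buffer limit

async def producer(queue: asyncio.Queue, count: int, depths: list) -> None:
    for item in range(count):
        await queue.put(item)  # suspends here while the buffer is full
        depths.append(queue.qsize())
    await queue.put(None)  # sentinel: no more work

async def consumer(queue: asyncio.Queue, processed: list) -> None:
    while (item := await queue.get()) is not None:
        await asyncio.sleep(0.01)  # simulate slow processing
        processed.append(item)

async def main():
    queue = asyncio.Queue(maxsize=BUFFER_CAPACITY)
    depths, processed = [], []
    await asyncio.gather(
        producer(queue, 10, depths),
        consumer(queue, processed),
    )
    return depths, processed

depths, processed = asyncio.run(main())
```

The producer is slowed to the consumer's pace, so the buffer never grows beyond `BUFFER_CAPACITY` regardless of how fast work is submitted.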

Summary

- In synchronous processing, tasks run one after another, waiting for a task to complete before starting another one. In contrast, asynchronous processing is primarily about structuring your application in a way where tasks can start independently, addressing the issue of some tasks taking a long time to complete.

- The event loop is a fundamental concept in asynchronous processing: a dispatcher at the core of the system polls for events, such as data arriving from the network, and reacts to them.

- Although asynchronous processing can improve performance and reduce latency, it has downsides too: resource management and error handling are often more complex.

- I/O multiplexing is a fundamental OS primitive enabling the event loop approach. It allows the event loop to efficiently monitor thousands of event sources, allowing the application to react to events as they happen.

- Asynchronous processing enables various efficient latency-hiding techniques such as request hedging, deferred work, and more.

- Managing concurrency with backpressure is critical in asynchronous systems to avoid overwhelming the system.

- Asynchronous processing requires special attention to error handling. For example, handling partial failures and recovering from them can be tricky. Timeouts and cancellations are also essential to dealing with asynchronous task errors.

