7 Prompt engineering: Strategies for guiding and evaluating LLMs

Prompt engineering is presented as a practical discipline for shaping large language model behavior by translating broad intent into clear, contextualized instructions. The chapter explains how small changes in wording, audience, and format can dramatically affect results, and why providing the right context—via system instructions, examples, constraints, and retrieved information—remains essential even as models improve. It highlights both the power and the limits of prompting: models are stateless, strategies can be brittle across versions, and some tasks demand multistep workflows and tool use. As the field matures, prompt engineering broadens into context engineering, which orchestrates system prompts, conversation history, retrieval, tools, and output schemas to align model behavior with user goals.

Building on core principles—clarity, relevant context, structure, and iteration—the chapter surveys foundational techniques (zero-shot, few-shot, multi-turn) and scaffolds for reasoning and planning (chain-of-thought, tree-of-thought, self-consistency), quality control (reflexion and verification), task decomposition (least-to-most, decomposition, generated knowledge, pyramid priming), modular pipelines (prompt chaining), role cues, and explicit context management. It then introduces higher-level frameworks that make prompts more consistent and reusable: ReAct integrates reasoning with tool calls; MECE enforces clean, comprehensive categorization; PACT encodes persona, action, context, and tone; and WISER structures who, instruction, subtasks, examples, and review. Evolving practices emphasize iterative design and measurement, prompt libraries and versioning, multimodal prompting, adaptive clarification dialogs, and multi-agent orchestration that critiques, votes, and synthesizes for reliability.

A central theme is evaluation: define success criteria, assemble representative datasets, and score outputs with a mix of deterministic checks, expert labels, LLM-as-judge prompts validated against humans, and production A/B testing. The chapter warns about evaluator bias and reward hacking, and recommends comprehensive regression testing to prevent unintended trade-offs when prompts or models change. It contrasts prompting—fast, accessible, and cost-effective but sometimes blunt—with post-training, which enables precise behavioral control and can distill behaviors into smaller, cheaper models once good prompts and datasets exist. The guidance is to start with strong prompts and systematic evaluation, then apply post-training where nuance, robustness, or efficiency demands it, all while adhering to the enduring principles of clarity, context, structure, and iterative improvement.

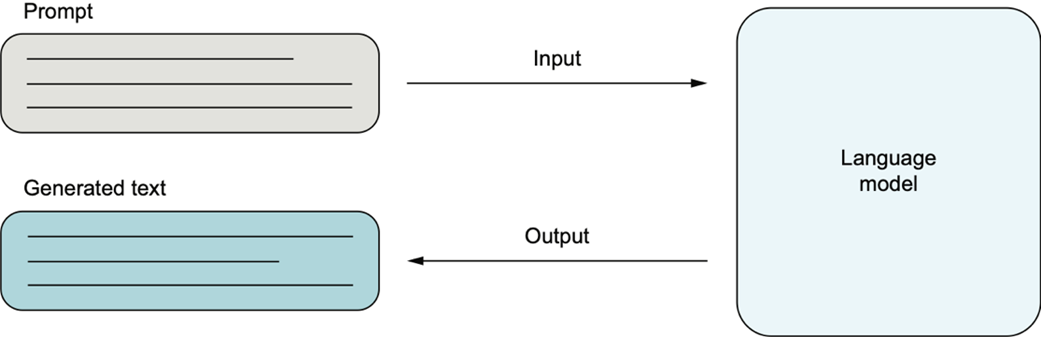

Prompt engineering works by crafting inputs (prompts) that guide the model’s output.

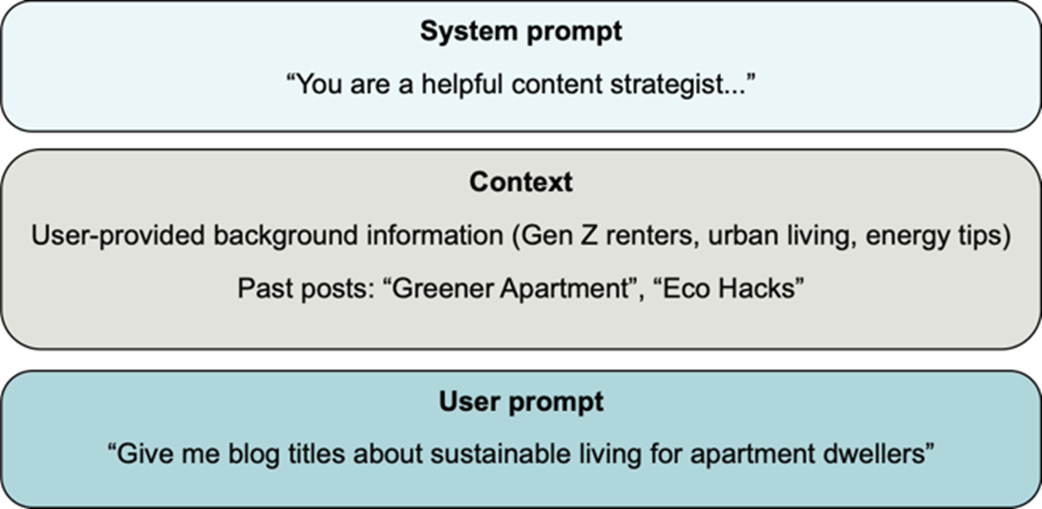

Structure of a prompt combining system role, context, and user input

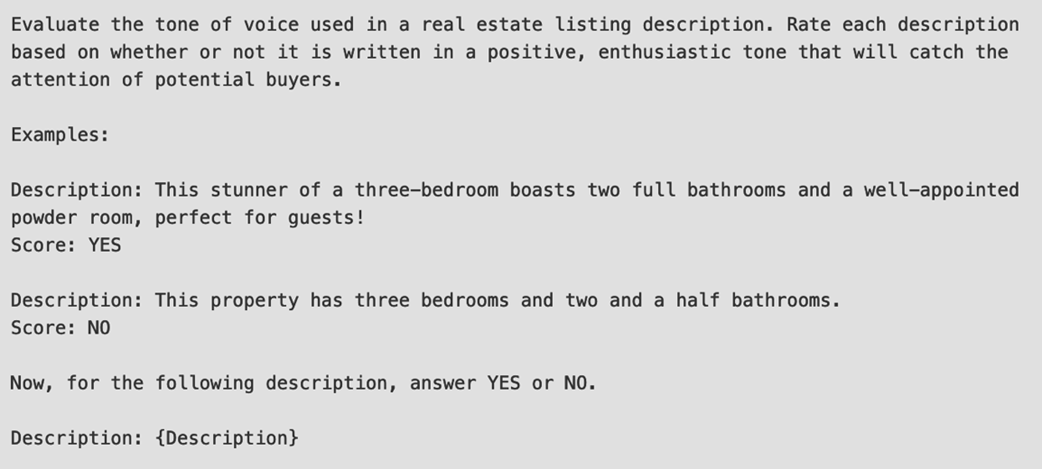

An example annotation prompt to judge real estate listing descriptions

Summary

- Prompt engineering, once a niche academic interest, has become a full-fledged discipline and design process with the advent of widespread generative AI applications.

- Beyond standard prompting techniques, such as zero-shot, few-shot, and multi-turn prompting, advanced techniques (chain-of-thought, tree-of-thought, and more) can further improve model responses by prompting the model to produce reasoning chains or multiple possible solutions, to reflect on them, and to verify its response.

- Prompting frameworks, including ReAct, MECE, PACT, and WISER, provide simple and reusable recipes for producing structured model outputs.

- To effectively measure and iterate on prompts, we need to develop evaluations that include sample contexts and methods of scoring the model responses produced by each prompt.

- Although prompting and prompt engineering are crucial to controlling model outputs, in some cases, post-training or a hybrid method may be more appropriate.

FAQ

What is prompt engineering and why does it matter?

Prompt engineering is the practice of crafting inputs that clearly communicate a user’s intent to a generative AI model, using careful phrasing, context, and constraints. Because LLMs rely on patterns rather than true intent, small wording changes can dramatically alter results. Clear instructions about audience, purpose, and format reliably improve output quality.How do small changes in wording affect an LLM’s response?

LLMs are sensitive to phrasing. Vague requests (“Summarize this”) often yield generic answers, while specific instructions (“Write a one-paragraph executive summary highlighting three key risks for a cybersecurity executive”) guide tone, structure, and content. Even simple cues like “TL;DR:” historically improved summarization.Which common LLM challenges can prompting help mitigate?

- Ambiguity: Add context, state the goal, and specify the output format.- Hallucination: Provide reference material and ask for step-by-step reasoning.

- Misalignment: Set tone, role, and boundaries explicitly (e.g., “If unsure, say you don’t know”).

- Complexity: Break tasks into steps or chain prompts into a workflow.

What’s the role of context and system prompts?

Prompts are largely stateless; anything the model needs (background, constraints, examples) should be included in the prompt. The system prompt (often hidden) defines role, tone, and guardrails (e.g., “helpful assistant,” “clinical researcher”) and influences how user inputs are interpreted. Providing curated context, role instructions, examples, and formatting cues keeps the model on track.What are foundational prompting techniques?

- Zero-shot: Direct instruction without examples (e.g., translation).- Few-shot: Include examples to demonstrate the pattern or format (e.g., classification, summarization).

- Multi-turn: Carry conversation history within the context to support natural back-and-forth; summarize or truncate when contexts grow large.

How can I encourage deeper reasoning (CoT, ToT, self-consistency)?

- Chain-of-thought (CoT): “Let’s think step by step” to elicit intermediate reasoning.- Tree-of-thought (ToT): Explore multiple solution paths in parallel, evaluate trade-offs, then choose the best.

- Self-consistency: Generate several reasoning chains and select the most frequent/coherent answer; improves reliability on logic/math tasks at higher compute cost.

What’s the difference between reflexion-style and verification prompting?

- Reflexion: Ask the model to critique and revise its own output (“Review your answer for mistakes and revise”). Helpful for code or writing but can introduce unnecessary changes if the model “finds” issues that aren’t there.- Verification: Add a lightweight final check (“Double-check your answer for accuracy before submitting”) to reduce errors without destabilizing a good response.

How can I structure complex tasks (decomposition, least-to-most, prompt chaining, roles)?

- Decomposition: Split into subtasks executed sequentially.- Least-to-most: Start with simpler subproblems, then build to the full solution.

- Generated knowledge/pyramid priming: Surface key concepts or layer knowledge before answering.

- Prompt chaining: Connect prompts into a pipeline (extract → summarize → answer).

- Role-based prompting: Useful for tone/domain, best paired with clear task specs; modern models may ignore pure role-play without concrete instructions.

How should I evaluate AI-generated outputs?

Start by defining success criteria and metrics. Build evaluation datasets (benchmarks for common tasks; custom, expert-curated, synthetic, or mined-from-logs for unique tasks). Score with a mix of heuristics (deterministic checks), experts (ground truth), LLM-as-judge (scalable but bias-prone), and A/B tests (real user preferences). Validate LLM judges against human labels, watch for biases and reward hacking, and run regression tests after changes.When should I use prompting vs. post-training?

Begin with prompting: it’s accessible, fast to iterate, and sufficient for many tasks. Use post-training when you need nuanced, consistent behavior across contexts, or to distill behaviors into smaller/cheaper models. Trade-offs:- Prompting: Highly accessible; lower precision; no training cost but may require more tokens/steps.

- Post-training: More precise; requires data and compute; enables targeted behavior and potential inference savings.

Introduction to Generative AI, Second Edition ebook for free

Introduction to Generative AI, Second Edition ebook for free