1 Understanding data-oriented design

Data-Oriented Design is presented as a practical way to build faster, simpler, and more maintainable Unity games by organizing code around data flow rather than object relationships. Instead of asking which class should own a behavior, DOD asks what data a feature needs, how that data should be transformed, and where it should live in memory. This approach is especially useful in games, where performance constraints, frequent feature changes, and growing gameplay complexity can make traditional object-oriented hierarchies difficult to maintain.

The chapter explains that much of game performance depends not on the speed of calculations, but on how efficiently the CPU can access the data needed for those calculations. By grouping related runtime data together—often in contiguous arrays—DOD improves data locality, increases cache hits, and reduces time spent waiting on main memory. The enemy movement example shows how separating positions, directions, velocities, and other attributes into arrays allows systems to process many entities in predictable loops, making better use of CPU cache behavior and preparing the code for later optimizations such as SIMD, Burst, and Jobs.

DOD also reduces complexity and improves extensibility by separating data from logic and treating gameplay systems as transformations from input data to output data. New enemies or mechanics do not require expanding inheritance trees or fitting behavior into existing class relationships; instead, developers identify the new data required and write logic that transforms it. The chapter also clarifies that Unity DOTS, Burst, Jobs, ECS, and TransformAccessArray are tools that can support DOD, but DOD itself is the underlying design approach. The recommended path is to first structure data and logic clearly, then adopt DOTS features selectively when they solve specific performance or organizational problems.

Screenshot from our imaginary survival game, with the player in the middle, and enemies moving around.

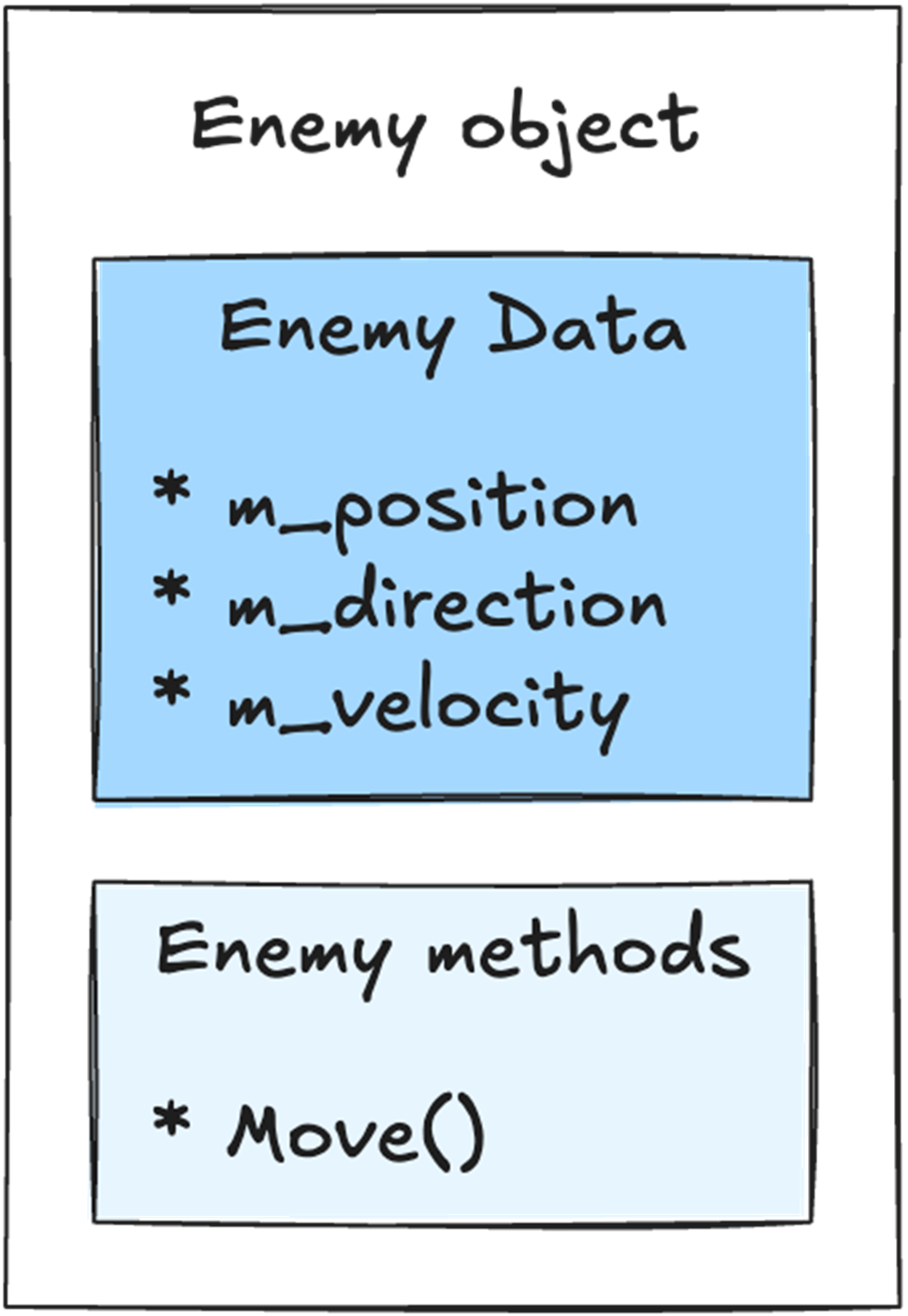

Our Enemy object holds both the data and the logic in a single place. The data is the position, direction, and velocity. The logic is the Move() method that moves this enemy around.

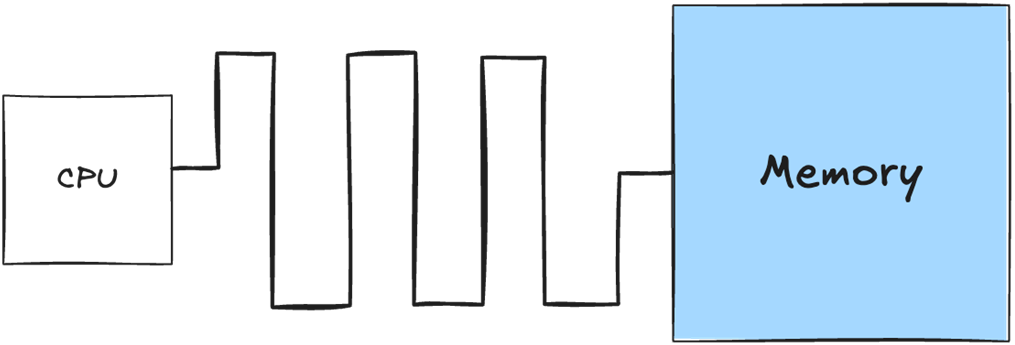
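A minimal sketch of such an object, written in C++ rather than Unity C# so that it stays self-contained. `Vec2` is a hypothetical stand-in for Unity's `Vector2`, and the `deltaTime` parameter and `Position()` accessor are assumptions, since the text does not give the exact signature of `Move()`.

```cpp
#include <cassert>

// Hypothetical stand-in for Unity's Vector2: two 32-bit floats.
struct Vec2 {
    float x;
    float y;
};

// Object-oriented layout: the data and the logic that uses it live together.
class Enemy {
public:
    Enemy(Vec2 position, Vec2 direction, float velocity)
        : m_position(position), m_direction(direction), m_velocity(velocity) {}

    // Advance the enemy along its direction for one frame.
    // deltaTime (seconds since the last frame) is an assumed parameter.
    void Move(float deltaTime) {
        assert(deltaTime >= 0.0f);
        m_position.x += m_direction.x * m_velocity * deltaTime;
        m_position.y += m_direction.y * m_velocity * deltaTime;
    }

    Vec2 Position() const { return m_position; }

private:
    Vec2 m_position;
    Vec2 m_direction;
    float m_velocity;
};
```

Calling `Move(0.5f)` on an enemy at the origin, facing along the x axis with velocity 2, would leave it at (1, 0).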

On the motherboard, main memory sits apart from the CPU, whether the device is a console, a desktop, or a mobile device. That physical distance, combined with the size of the memory, makes retrieving data from memory relatively slow.

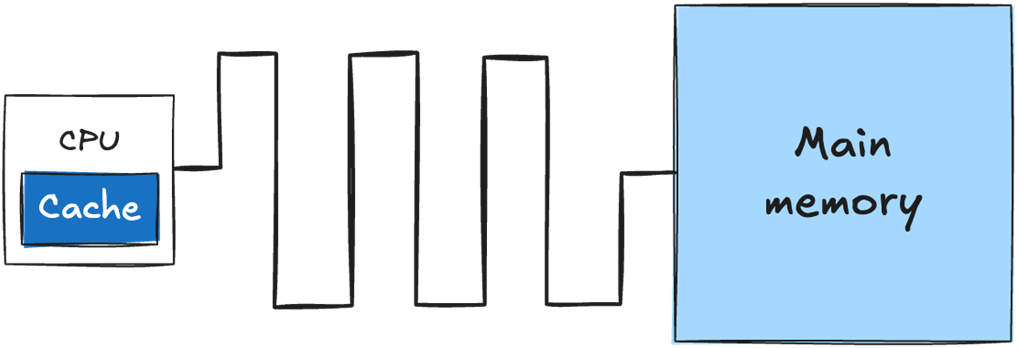

The cache sits directly on the CPU die and is physically small. Retrieving data from the cache is significantly faster than retrieving data from main memory.

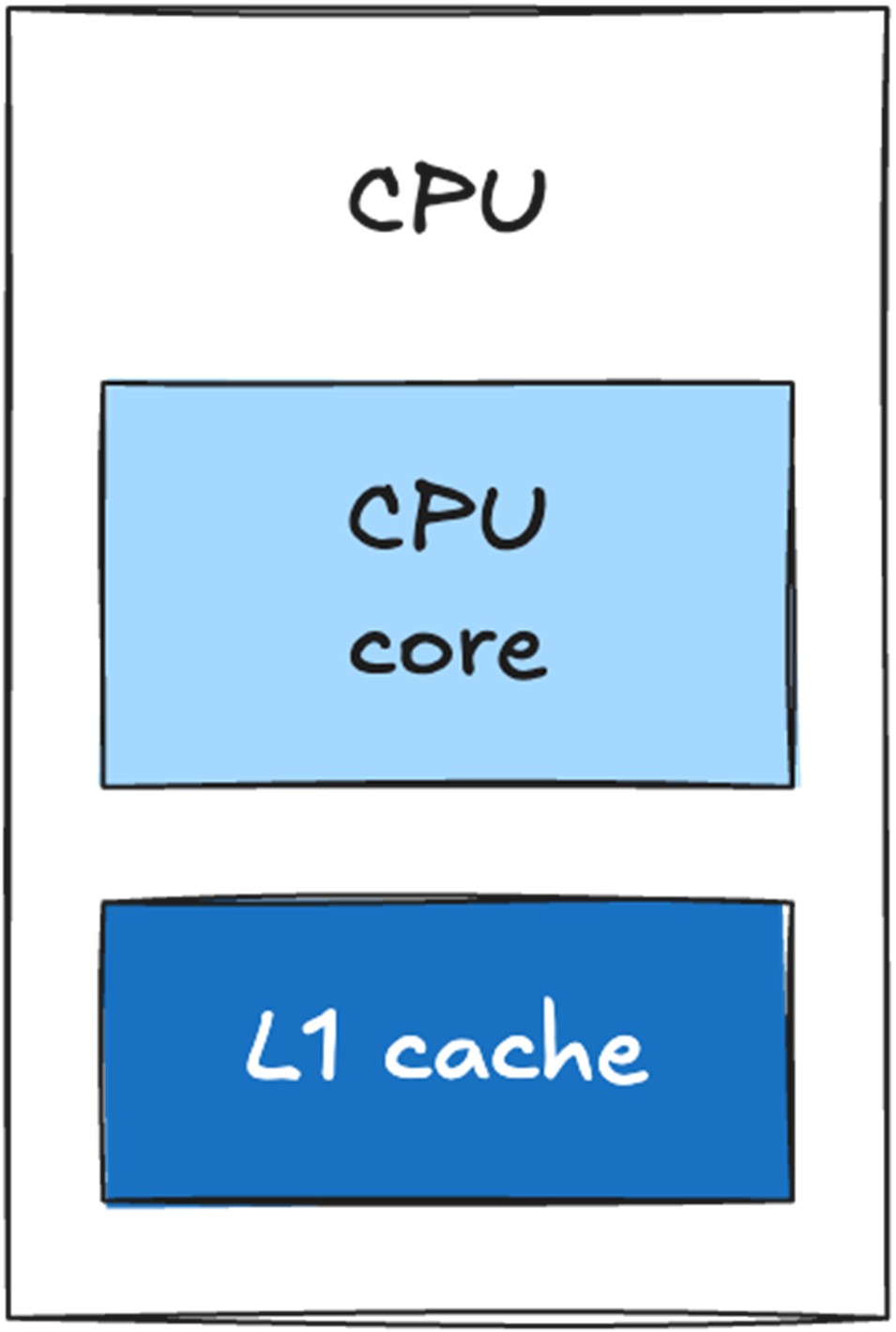

A single-core CPU with an L1 cache directly on the CPU die.

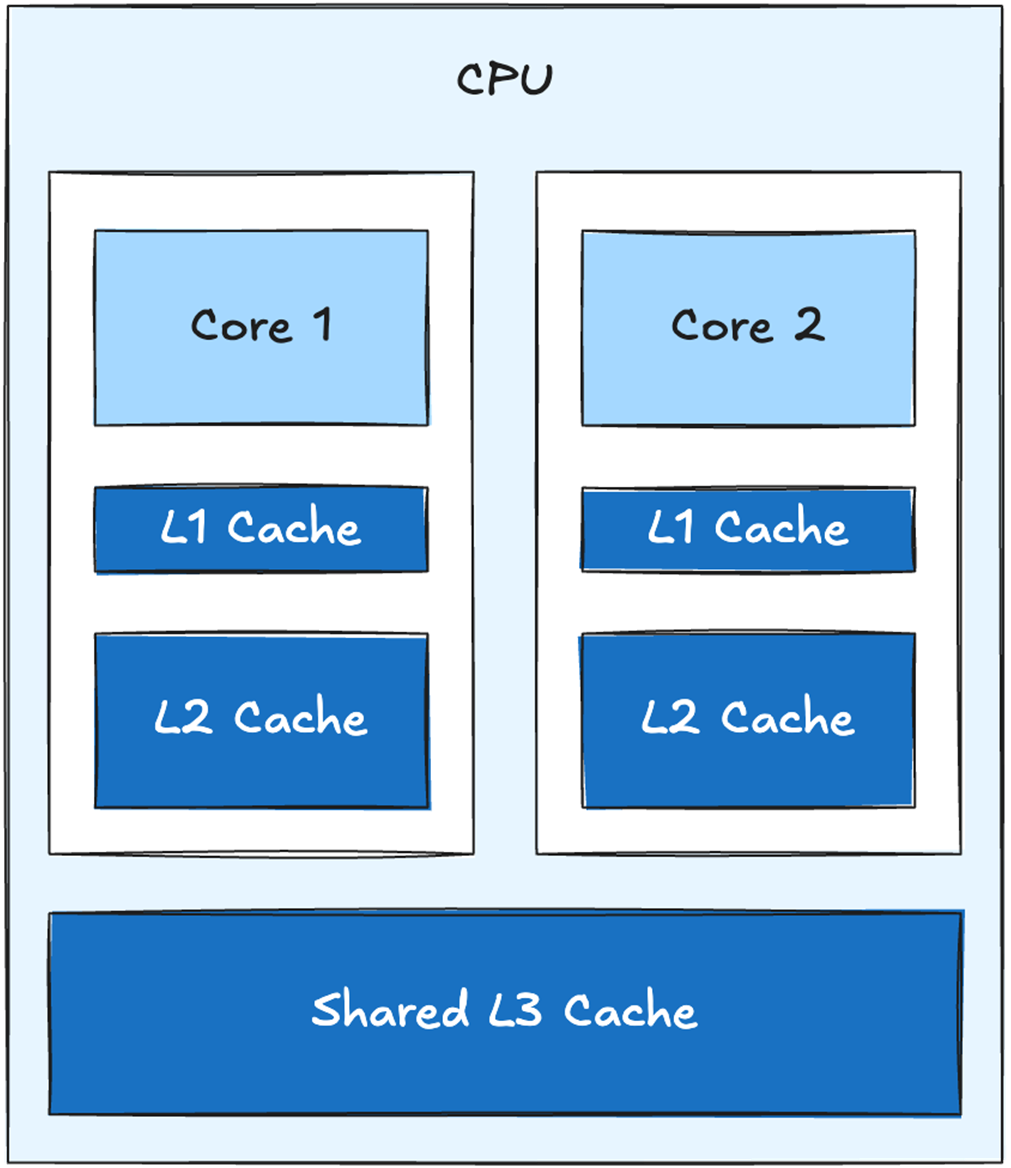

A 2-core CPU with shared L3 cache

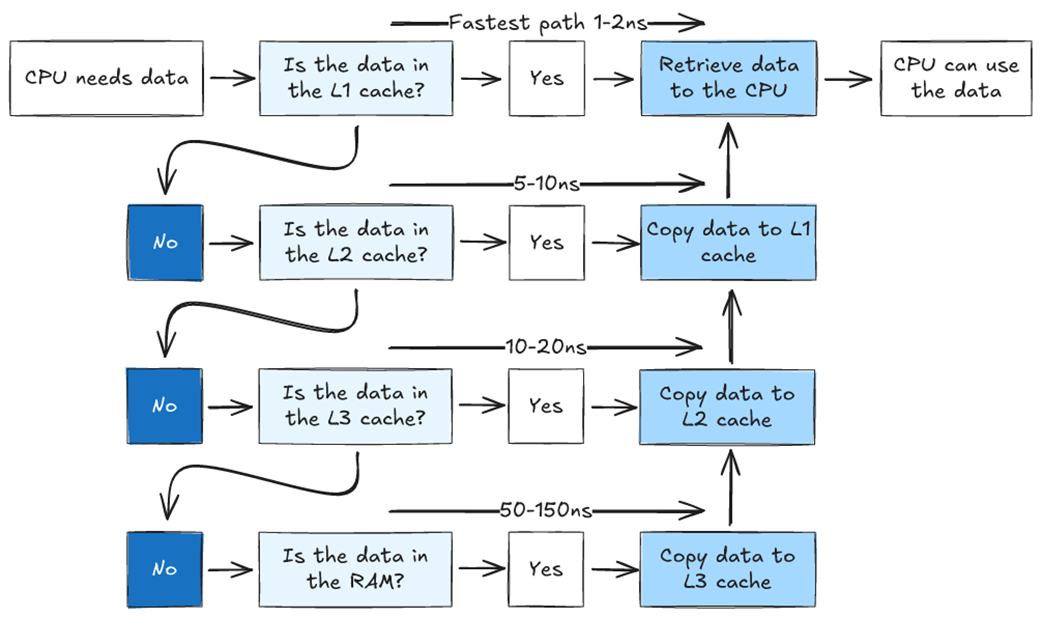

Flowchart showing how the CPU accesses data in a system with three cache levels. If the data is not found in the L1 cache, we look for it in L2. If it is not in L2, we look in L3. If it is not in L3, we need to retrieve it from main memory. The further we have to go to find our data, the longer it takes.

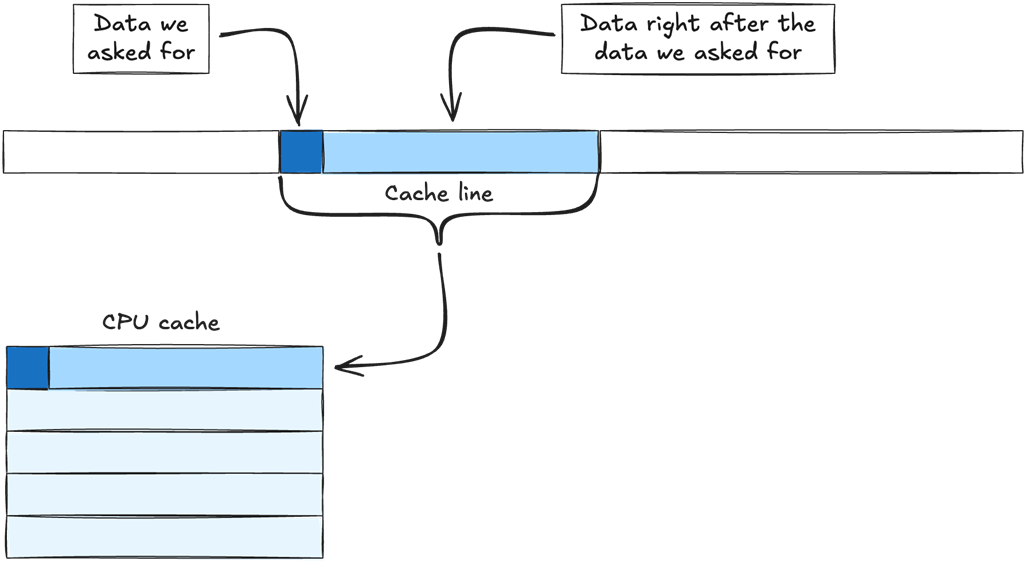

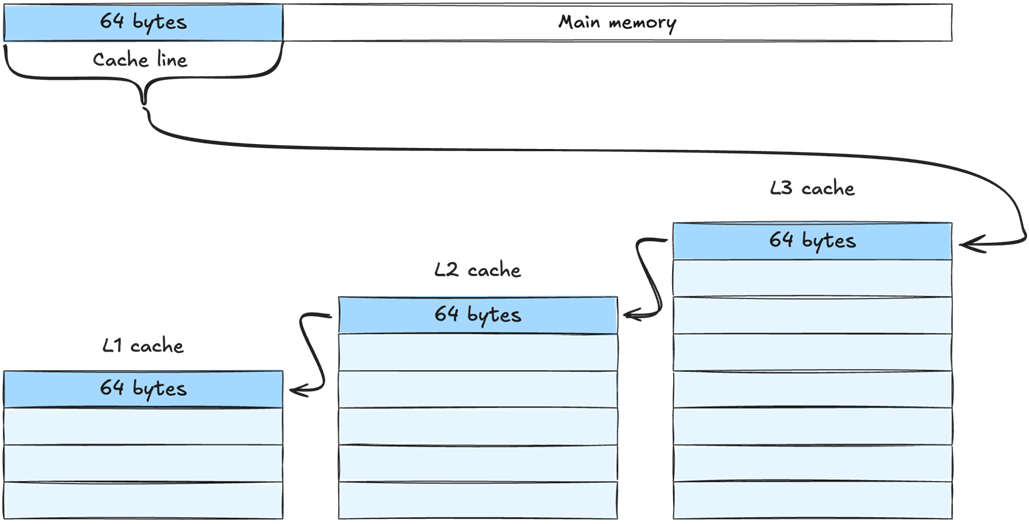

Data is retrieved from main memory in chunks called cache lines. When we ask for data from main memory, the memory manager retrieves the data we need, plus the chunk of data that comes directly after it, and copies the entire chunk to the cache.

When retrieving a cache line from main memory, it is copied to all levels of the cache. In this example it is first copied to L3, then L2 and finally L1. The cache line is the same size at all levels - meaning the same amount of data is copied to every level. L3 can simply hold more cache lines than L2, and L2 can hold more cache lines than L1.

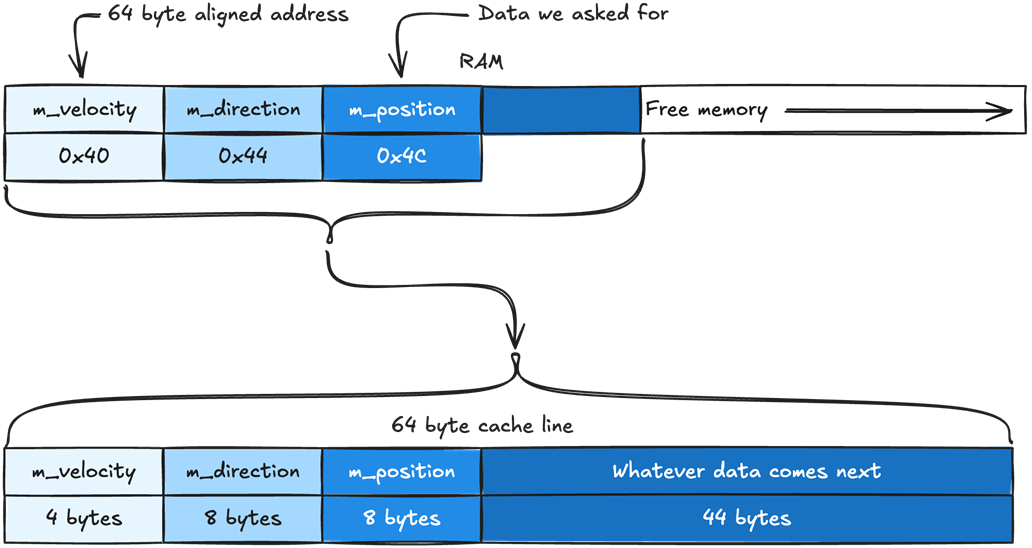
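The lookup order and the fill step described above can be sketched as a toy model. This is C++ rather than Unity C#, and it is only an illustration under simplifying assumptions: each cache level is just a set of line addresses, and capacity limits and eviction are left out. The names `ToyCaches`, `FindLine`, and `FillFromMainMemory` are hypothetical.

```cpp
#include <cassert>
#include <cstdint>
#include <unordered_set>

// Where a requested cache line was found.
enum class Level { L1, L2, L3, MainMemory };

constexpr std::uintptr_t kCacheLineBytes = 64;  // assumed line size

using LineSet = std::unordered_set<std::uintptr_t>;

// Toy model: each level is the set of cache-line start addresses it holds.
// Capacity and eviction are deliberately not modeled.
struct ToyCaches {
    LineSet l1;
    LineSet l2;
    LineSet l3;
};

// Probe L1 first, then L2, then L3; fall back to main memory.
Level FindLine(const ToyCaches& caches, std::uintptr_t lineAddress) {
    if (caches.l1.count(lineAddress) != 0) return Level::L1;
    if (caches.l2.count(lineAddress) != 0) return Level::L2;
    if (caches.l3.count(lineAddress) != 0) return Level::L3;
    return Level::MainMemory;
}

// On a miss at every level, the same line is copied into L3, L2 and L1.
void FillFromMainMemory(ToyCaches& caches, std::uintptr_t lineAddress) {
    assert(lineAddress % kCacheLineBytes == 0);  // lines start on aligned addresses
    caches.l3.insert(lineAddress);
    caches.l2.insert(lineAddress);
    caches.l1.insert(lineAddress);
}
```

In this model the first request for a line reports `Level::MainMemory`, and after `FillFromMainMemory` the same request reports `Level::L1`.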

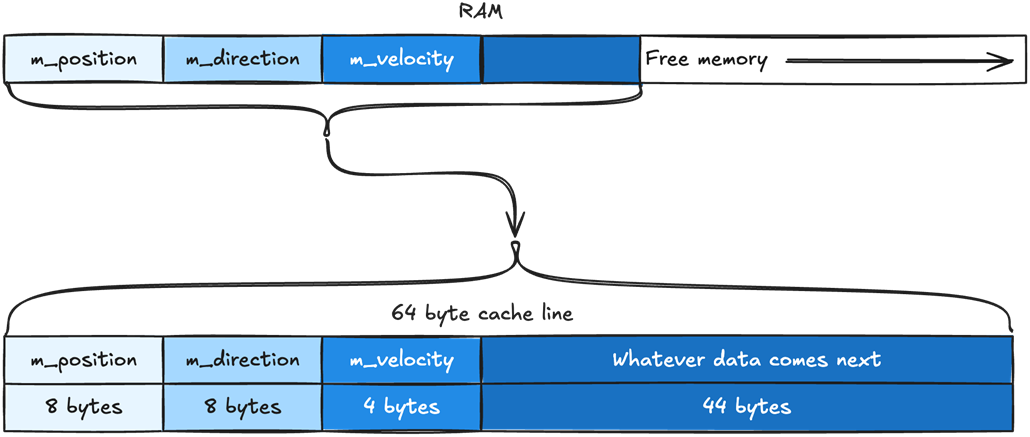

How the member variables of our Enemy object are placed in memory. The position data is placed first, then direction, then velocity. The same order they are defined in the Enemy class. They are packed together in memory without any space between them.

Our cache line will include m_position, m_direction, m_velocity, and whatever data comes right after them. Our cache line is 64 bytes. The variables m_position and m_direction are of type Vector2, which takes 8 bytes each. The variable m_velocity is a float, which takes 4 bytes. Together they occupy 8 + 8 + 4 = 20 bytes, which leaves 44 bytes that are automatically filled with whatever data comes after m_velocity.
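The byte counts above can be checked at compile time. The sketch below uses a plain C++ struct as a stand-in for the three fields; it assumes 4-byte floats and a 64-byte cache line, and it ignores any object header a managed C# class would carry. `Vec2` and `EnemyData` are hypothetical names.

```cpp
#include <cstddef>

// Hypothetical stand-in for Unity's Vector2: two 32-bit floats.
struct Vec2 {
    float x;
    float y;
};

// Fields in the same order as in the Enemy class.
struct EnemyData {
    Vec2 m_position;   // bytes 0..7
    Vec2 m_direction;  // bytes 8..15
    float m_velocity;  // bytes 16..19
};

constexpr std::size_t kCacheLineBytes = 64;  // assumed; depends on the CPU
constexpr std::size_t kEnemyBytes = sizeof(EnemyData);
constexpr std::size_t kLeftoverBytes = kCacheLineBytes - kEnemyBytes;

static_assert(sizeof(Vec2) == 8, "Vec2 should be two 4-byte floats");
static_assert(offsetof(EnemyData, m_direction) == 8, "direction follows position");
static_assert(offsetof(EnemyData, m_velocity) == 16, "velocity follows direction");
static_assert(kEnemyBytes == 20, "8 + 8 + 4 bytes, no padding");
static_assert(kLeftoverBytes == 44, "rest of the 64-byte line");
```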

When our CPU asks for m_position, the Memory Management Unit (MMU) will try to fill the cache line starting from the nearest address at or below m_position that is aligned with the size of our cache line. If our cache lines are 64 bytes long, the cache line will be filled with data starting at that 64-byte aligned address. In this case, m_position sits at 0x4C, so the cache line starts at 0x40.

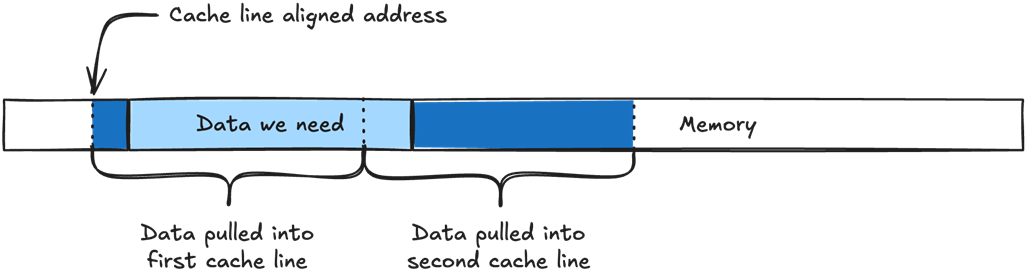
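The alignment arithmetic above can be written as a short worked example. This is a C++ sketch assuming a 64-byte cache line (the mask trick only works for power-of-two sizes); the function names are hypothetical.

```cpp
#include <cstdint>

constexpr std::uintptr_t kCacheLineBytes = 64;  // assumed; must be a power of two

// Round an address down to the start of the cache line that contains it.
constexpr std::uintptr_t CacheLineBase(std::uintptr_t address) {
    return address & ~(kCacheLineBytes - 1);
}

// How far into its cache line the address sits.
constexpr std::uintptr_t OffsetInCacheLine(std::uintptr_t address) {
    return address & (kCacheLineBytes - 1);
}

// m_position at 0x4C lives in the line that starts at 0x40, 12 bytes in.
static_assert(CacheLineBase(0x4C) == 0x40, "0x4C rounds down to 0x40");
static_assert(OffsetInCacheLine(0x4C) == 0x0C, "0x4C is 12 bytes into its line");
```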

If the data we need crosses a cache line boundary, it will be split across two cache lines instead of one.

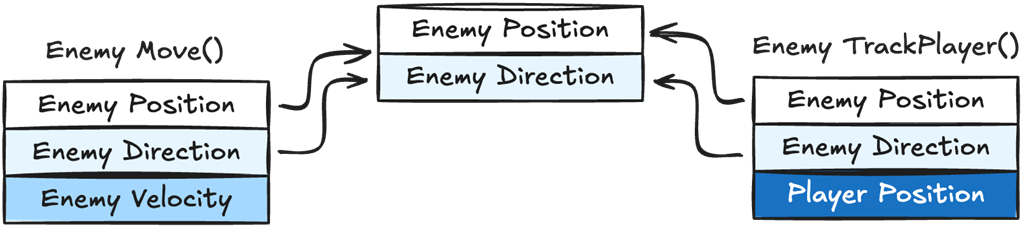
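A small sketch of how one might count the cache lines a block of data touches, again in C++ and assuming 64-byte lines. The address 0x74 is a made-up example chosen so that the 20 bytes of enemy data cross a line boundary; `CacheLinesTouched` is a hypothetical helper.

```cpp
#include <cstddef>
#include <cstdint>

constexpr std::uintptr_t kCacheLineBytes = 64;  // assumed line size

// Index of the cache line that contains the given address.
constexpr std::uintptr_t CacheLineIndex(std::uintptr_t address) {
    return address / kCacheLineBytes;
}

// Number of cache lines covered by sizeBytes bytes starting at address.
// Precondition: sizeBytes > 0.
constexpr std::size_t CacheLinesTouched(std::uintptr_t address, std::size_t sizeBytes) {
    return static_cast<std::size_t>(CacheLineIndex(address + sizeBytes - 1) -
                                    CacheLineIndex(address)) + 1;
}

// 20 bytes at 0x4C end at 0x5F: still inside the line 0x40..0x7F.
static_assert(CacheLinesTouched(0x4C, 20) == 1, "fits in one line");
// 20 bytes at 0x74 end at 0x87: they cross the boundary at 0x80.
static_assert(CacheLinesTouched(0x74, 20) == 2, "split across two lines");
```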

We can see that both Move() and TrackPlayer() require the same variables, Enemy Position and Direction, but each one also needs different data: Enemy Velocity for Move() and Player Position for TrackPlayer(). When data is shared between different logic functions, it becomes impossible to guarantee data locality for every one of them.

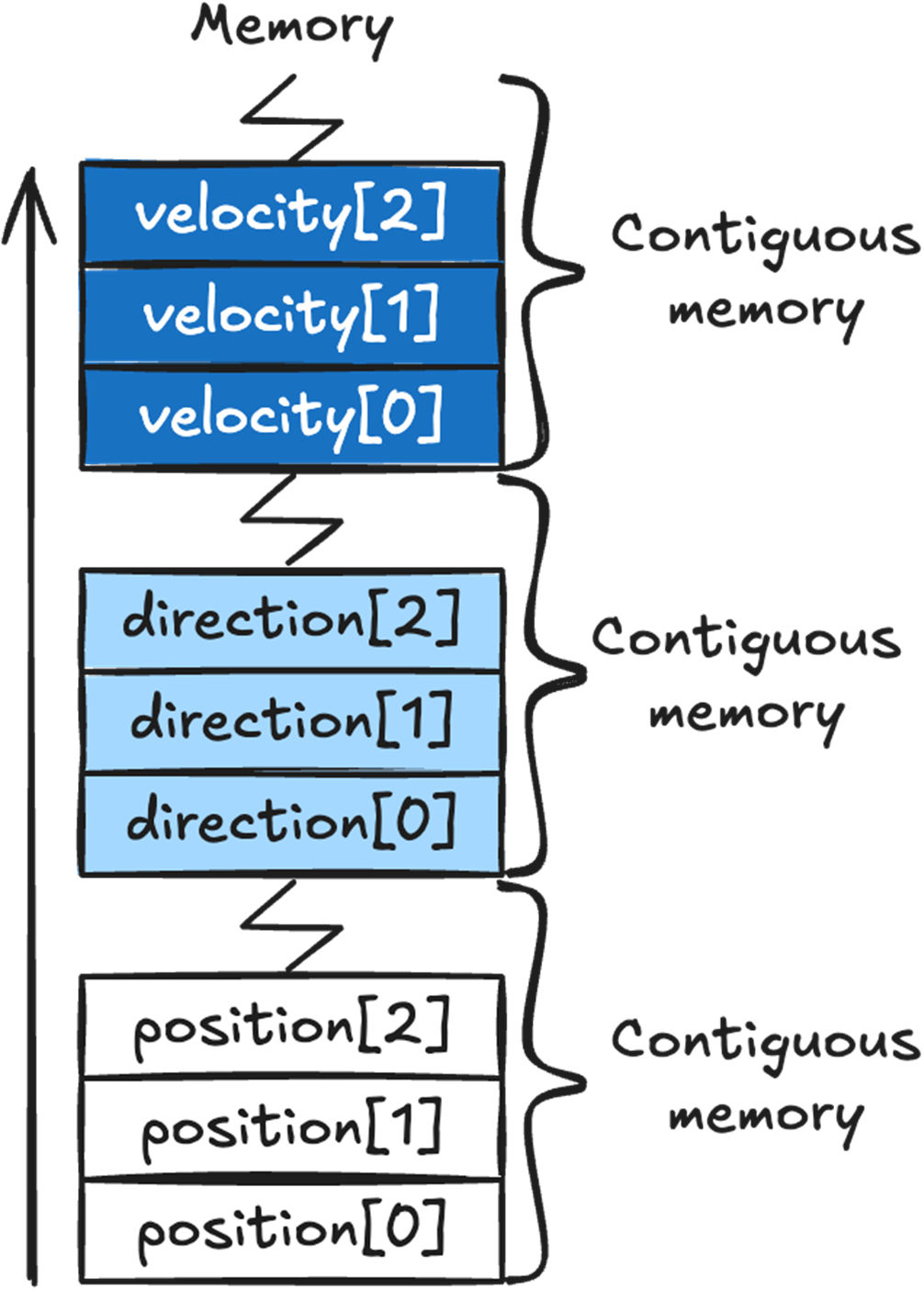

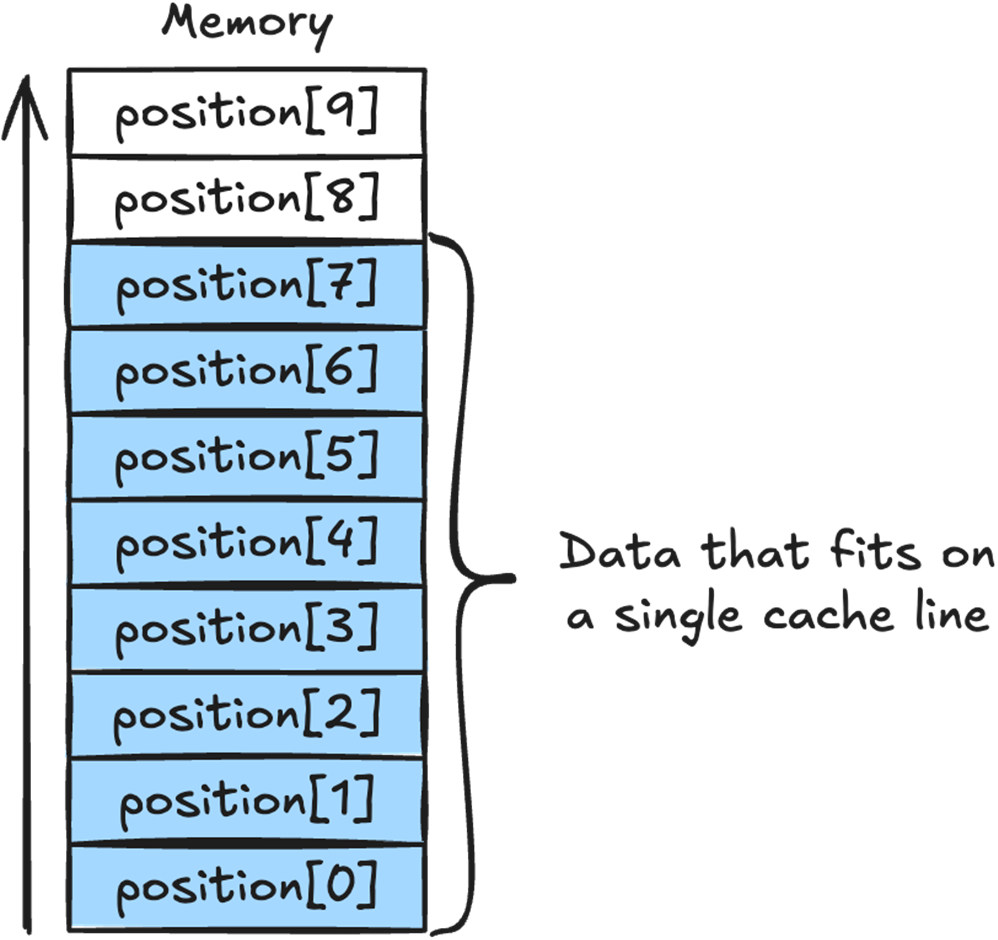

Arrays automatically place their data in contiguous memory. All the position array data will be in a single contiguous chunk of memory, as will the direction data and the velocity data.

We can see how the position array sits in memory, and how array elements 0 to 7 all fit in a single 64-byte cache line.
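One way to sketch this array layout, in C++ rather than Unity C#. `Vec2` stands in for Unity's `Vector2`, and `EnemyArrays` and `PositionStrideBytes` are hypothetical names. The sketch assumes a 64-byte cache line; note that eight positions share one line only when the array happens to start on a line boundary, which `std::vector` does not guarantee.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for Unity's Vector2: two 32-bit floats.
struct Vec2 {
    float x;
    float y;
};

constexpr std::size_t kCacheLineBytes = 64;  // assumed line size
constexpr std::size_t kPositionsPerCacheLine = kCacheLineBytes / sizeof(Vec2);
static_assert(kPositionsPerCacheLine == 8, "eight 8-byte positions per 64-byte line");

// One array per attribute; index i in every array refers to enemy i.
struct EnemyArrays {
    std::vector<Vec2> positions;
    std::vector<Vec2> directions;
    std::vector<float> velocities;

    explicit EnemyArrays(std::size_t count)
        : positions(count), directions(count), velocities(count) {}
};

// Byte distance between two neighboring positions. For contiguous storage
// this equals sizeof(Vec2), so there are no gaps between elements.
std::size_t PositionStrideBytes(const EnemyArrays& enemies) {
    assert(enemies.positions.size() >= 2);
    const auto* first = reinterpret_cast<const unsigned char*>(&enemies.positions[0]);
    const auto* second = reinterpret_cast<const unsigned char*>(&enemies.positions[1]);
    return static_cast<std::size_t>(second - first);
}
```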

The two existing enemies in our game, the Angry Cactus, which is a static enemy, and the Zombie, which is a moving enemy.

Task to implement a new enemy, the Teleporting Robot.

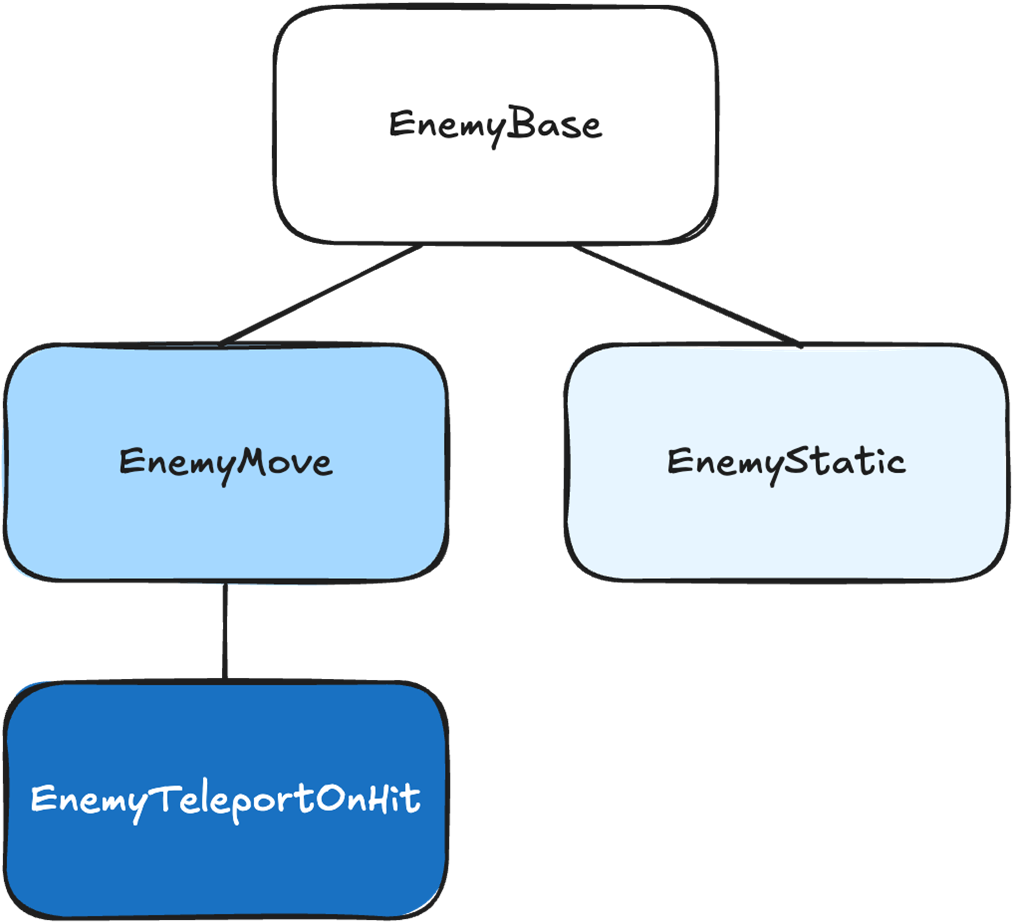

Our game’s enemy inheritance tree, with EnemyTeleportOnHit inheriting from EnemyMove.

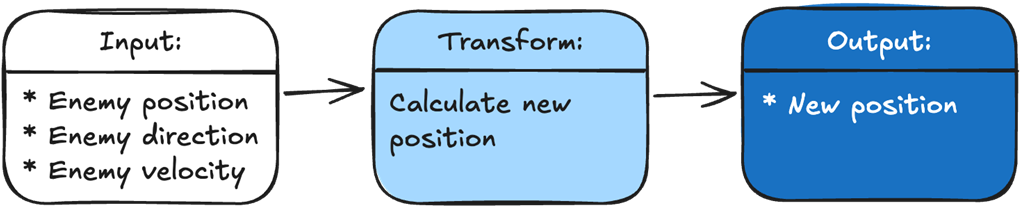

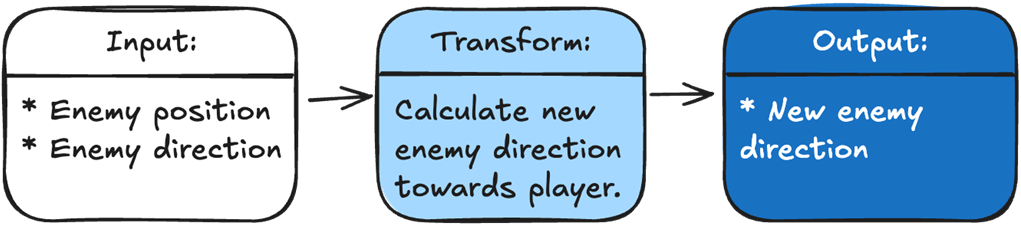

Every function in our game takes in some input data, then transforms it into output data.

The Move() function’s input is the enemy position, direction, and velocity. The transformation is our calculation of the new position. The output is the new position.
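As a sketch, the Move() transformation could look like the following free function over arrays. It is written in C++ rather than Unity C#; `Vec2` is a stand-in for `Vector2`, and the `deltaTime` parameter is an assumption that the text does not mention.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for Unity's Vector2.
struct Vec2 {
    float x;
    float y;
};

// Input: positions, directions, velocities (and an assumed frame time).
// Transformation: new position = old position + direction * velocity * deltaTime.
// Output: the updated positions array.
void Move(std::vector<Vec2>& positions,
          const std::vector<Vec2>& directions,
          const std::vector<float>& velocities,
          float deltaTime) {
    assert(positions.size() == directions.size());
    assert(positions.size() == velocities.size());
    for (std::size_t i = 0; i < positions.size(); ++i) {
        positions[i].x += directions[i].x * velocities[i] * deltaTime;
        positions[i].y += directions[i].y * velocities[i] * deltaTime;
    }
}
```

The function owns no state: everything it reads and writes is passed in, so it can process every enemy in one predictable loop.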

To make our enemy track the player, we just add a function that sets the enemy’s direction toward the player. Our input is the enemy position and the player position. The transformation is calculating a new direction for the enemy. The output is the new direction.
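A possible sketch of this tracking function, in C++ rather than Unity C#. It assumes the direction should be a unit vector pointing from the enemy to the player, and that an enemy standing exactly on the player keeps its old direction; neither detail is stated in the text.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for Unity's Vector2.
struct Vec2 {
    float x;
    float y;
};

// Input: enemy positions and the player position.
// Transformation: normalized vector from each enemy to the player.
// Output: the updated directions array.
void TrackPlayer(const std::vector<Vec2>& positions,
                 Vec2 playerPosition,
                 std::vector<Vec2>& directions) {
    assert(positions.size() == directions.size());
    for (std::size_t i = 0; i < positions.size(); ++i) {
        const float dx = playerPosition.x - positions[i].x;
        const float dy = playerPosition.y - positions[i].y;
        const float length = std::sqrt(dx * dx + dy * dy);
        // Assumption: keep the old direction when the enemy is on the player,
        // which also avoids dividing by zero.
        if (length > 0.0f) {
            directions[i] = Vec2{dx / length, dy / length};
        }
    }
}
```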

To add our new Teleporting Robot, we just add a function that teleports the enemy to a new location if it is hit. Our input is the damage the enemy received, if any, and whether it should teleport if hit. The transformation is calculating a new position if the enemy is hit. The output is either the new position if the hit succeeds, or the old position if the hit fails.

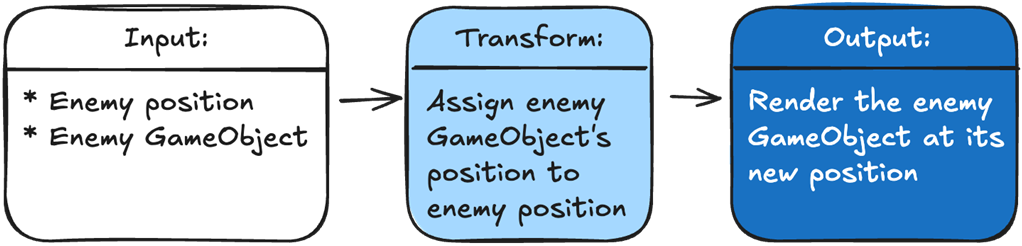
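The teleport-on-hit transformation described above could be sketched as follows, in C++ rather than Unity C#. The text does not say how the new location is chosen, so the `teleportTargets` array is purely an assumption, as is treating any damage above zero as a successful hit.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical stand-in for Unity's Vector2.
struct Vec2 {
    float x;
    float y;
};

// Input: damage received per enemy, a teleport-on-hit flag per enemy, and
// (assumed) a precomputed teleport destination per enemy.
// Transformation: pick the destination for enemies that were hit and can teleport.
// Output: the positions array, changed only for those enemies.
void TeleportOnHit(const std::vector<float>& damageReceived,
                   const std::vector<std::uint8_t>& teleportsOnHit,
                   const std::vector<Vec2>& teleportTargets,
                   std::vector<Vec2>& positions) {
    assert(damageReceived.size() == positions.size());
    assert(teleportsOnHit.size() == positions.size());
    assert(teleportTargets.size() == positions.size());
    for (std::size_t i = 0; i < positions.size(); ++i) {
        const bool wasHit = damageReceived[i] > 0.0f;  // assumed hit rule
        if (wasHit && teleportsOnHit[i] != 0) {
            positions[i] = teleportTargets[i];
        }
    }
}
```

Enemies that are not hit, or that do not teleport, keep their old position, so the same function can run over every enemy type without touching an inheritance tree.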

To show an enemy in the correct position, we pass in the enemy’s GameObject and its position. We transform our data by assigning the enemy’s position to the GameObject. The output is Unity rendering our GameObject in the correct position.

Task to implement a new enemy, the Zombie Duck.

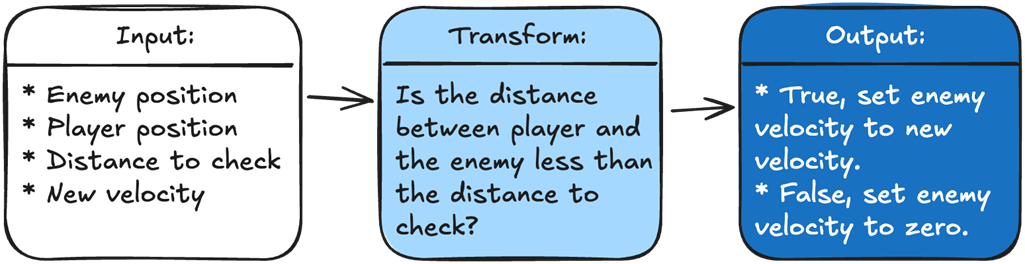

To determine what velocity we should set for our enemy, we are going to take in four variables: the enemy position, the player position, the distance we need to check against, and the new enemy velocity. Our logic will calculate the distance between the player and the enemy and check it against the input distance. The output is the new velocity for the enemy based on the logic result.

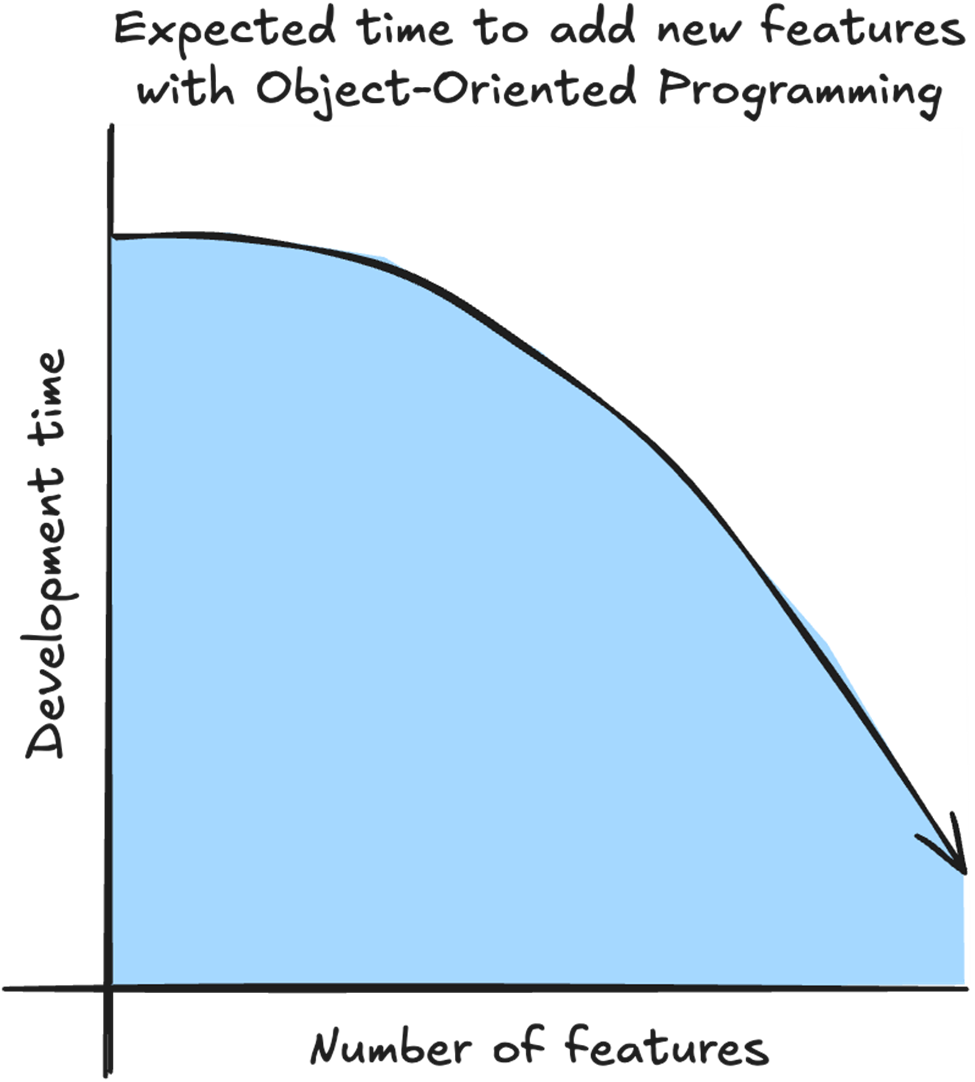
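A hedged sketch of the distance check described above, in C++ rather than Unity C#. The text does not say which side of the check triggers the change, so this version assumes an enemy receives the new velocity when the player is within the given distance and otherwise keeps its current one. The function name is hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for Unity's Vector2.
struct Vec2 {
    float x;
    float y;
};

// Input: enemy positions, player position, distance to check, new velocity.
// Transformation: compare each enemy's distance to the player with checkDistance.
// Output: the velocities array, updated for enemies within range (assumed rule).
void SetVelocityNearPlayer(const std::vector<Vec2>& positions,
                           Vec2 playerPosition,
                           float checkDistance,
                           float newVelocity,
                           std::vector<float>& velocities) {
    assert(positions.size() == velocities.size());
    assert(checkDistance >= 0.0f);
    // Comparing squared distances avoids a square root per enemy.
    const float checkDistanceSquared = checkDistance * checkDistance;
    for (std::size_t i = 0; i < positions.size(); ++i) {
        const float dx = playerPosition.x - positions[i].x;
        const float dy = playerPosition.y - positions[i].y;
        if (dx * dx + dy * dy <= checkDistanceSquared) {
            velocities[i] = newVelocity;
        }
    }
}
```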

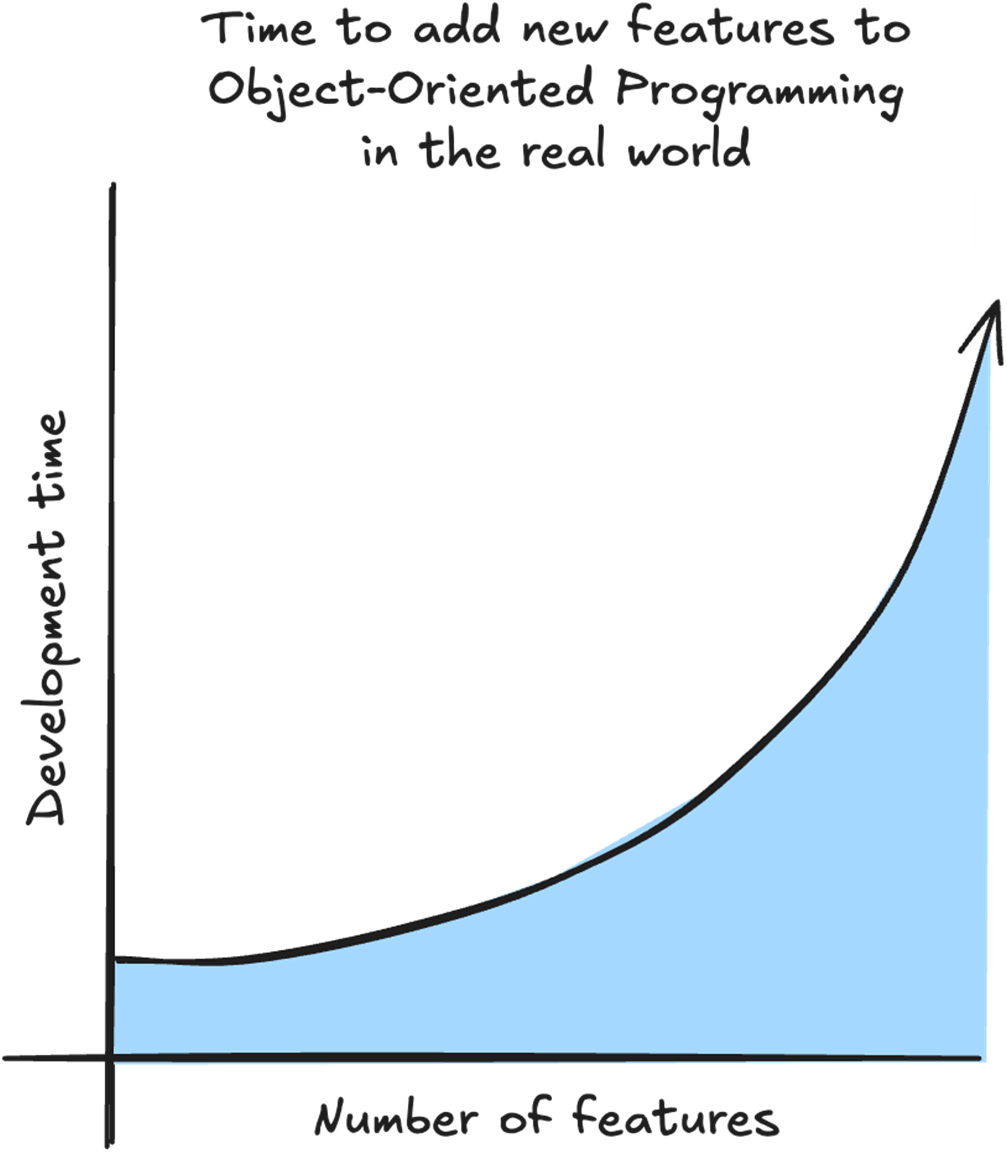

With OOP, in an ideal situation, we start the project by spending time setting up systems and inheritance hierarchies so future features will be quick and easy to implement.

With OOP, what usually happens is that the more features we already have, the longer it takes to add a new feature. For every new feature, we need to take into account the complicated relationship between existing features.

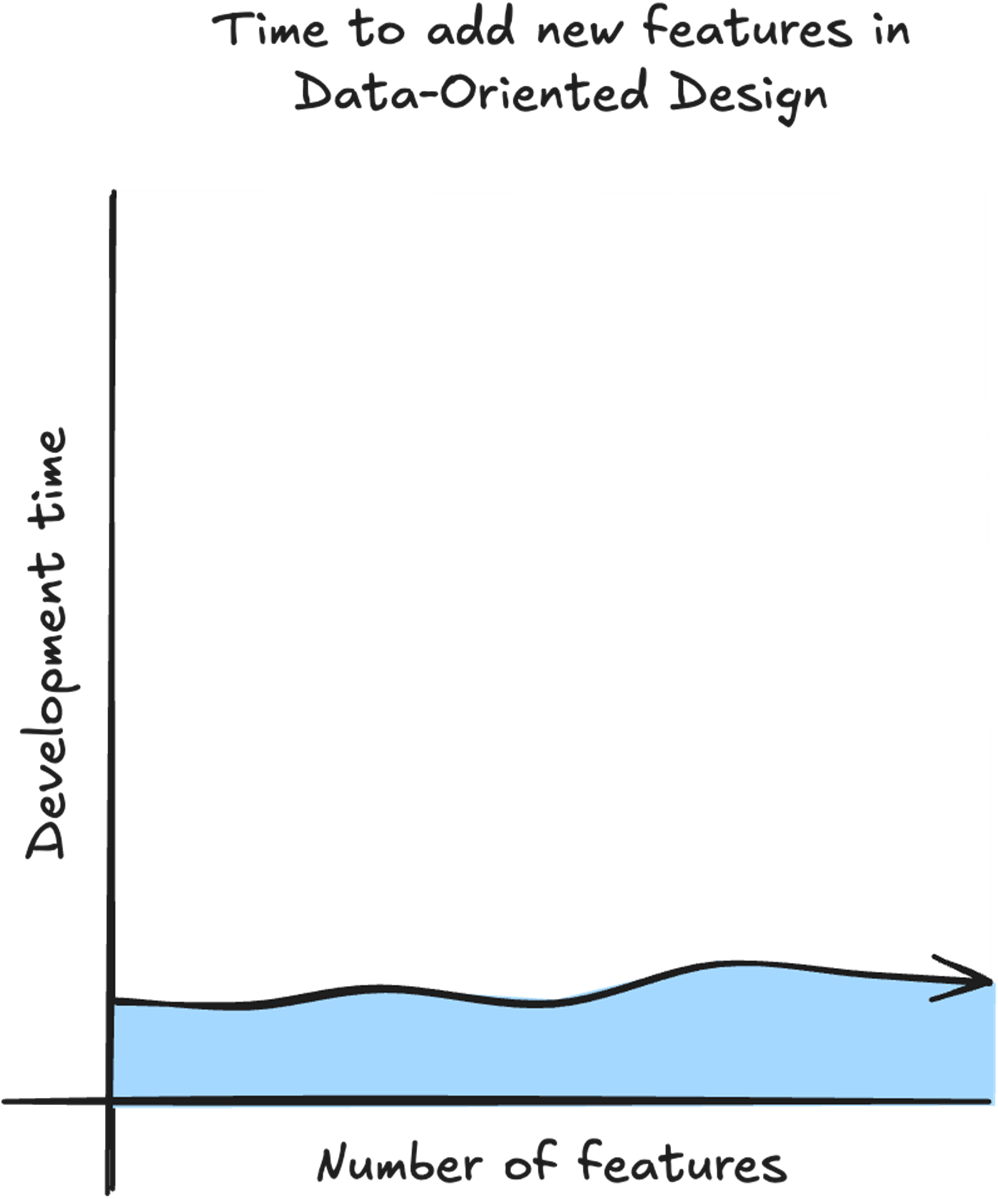

With DOD the time to add a new feature is linear because we don’t need to take into account the existing features. All we need is the data for the feature, and what logic we need to transform the data.

Summary

- With Data-Oriented Design we get a performance boost by structuring our data to take advantage of the CPU cache.

- Your target CPU may have multiple levels of cache, but the first level, called the L1 cache, is the fastest.

- The L1 cache is the fastest because it is small and is placed directly on the CPU die.

- Retrieving data from L1 cache is up to 50 times faster than accessing main memory.

- To avoid having to retrieve data from main memory, our CPU uses cache prediction to guess which data we are going to need next and places it in the cache ahead of time.

- Data is pulled from memory into the cache in chunks called cache lines.

- Practicing data locality by keeping our data close together in memory helps the CPU cache prediction retrieve the data we’ll need in the future into the L1 cache.

- Placing our data in arrays makes it easy to practice data locality.

- With Data-Oriented Design we can reduce our code complexity by separating the data and the logic.

- Every function in our game takes input and transforms it into the output needed. The output can be anything from how many coins we have to showing enemies on the screen.

- Instead of thinking about objects and their relationships, we only think about what data our logic needs for input and what data our logic needs to output.

- With Data-Oriented Design, we can also improve our game's extensibility by always solving problems through data. This makes it easy to add new features and modify existing ones.

- Regardless of how complex our game has become, every new feature can be solved using data. This can keep development time more predictable because new features are usually added by identifying the data they need and the logic that transforms it.

- ECS is a design pattern sometimes used to implement DOD. Not all ECS implementations are DOD, and we don’t need ECS to implement DOD.

- Unity DOTS is a collection of tools that can be built on top of a DOD architecture, but DOD does not require DOTS.

FAQ

What is Data-Oriented Design?

Data-Oriented Design (DOD) is an approach to structuring game code around the flow and transformation of data. Instead of organizing gameplay around relationships between objects, DOD focuses on what data systems need, how that data is stored, and how it moves through the game. In practice, this often means storing related runtime data in arrays, separating data from logic, and writing systems that process many items at once.

Why is Data-Oriented Design useful for game development?

DOD helps solve common game development challenges such as maintaining 60 fps, supporting complex gameplay, updating live games, and working within hardware limits. It improves performance by making better use of modern CPU architecture, reduces code complexity by separating data from logic, and makes large codebases easier to extend over time.

How does Data-Oriented Design improve performance?

DOD improves performance by organizing data so the CPU can access it efficiently. Modern CPUs are very fast, but they often spend time waiting for data to arrive from memory. By grouping data together and processing it sequentially, DOD increases the chance that required data is already in the CPU cache, reducing slow trips to main memory.

What is the CPU cache, and why does it matter?

The CPU cache is a small, fast memory located close to the CPU core. Accessing data from the L1 cache may take only 1-2 nanoseconds, while retrieving data from main memory can take 50-150 nanoseconds. Because gameplay systems often process large amounts of data every frame, keeping frequently used data in cache can significantly improve performance.

What are cache hits and cache misses?

A cache hit occurs when the data the CPU needs is already available in the CPU cache. A cache miss occurs when the data is not in the cache and must be retrieved from a slower memory location, such as main memory. In DOD, the goal is to maximize L1 cache hits and minimize cache misses.

What is a cache line?

A cache line is the chunk of memory copied into the CPU cache when data is requested. Common cache line sizes include 64 bytes, though the exact size depends on the CPU. When the CPU asks for one piece of data, nearby data is also loaded into the cache line. DOD takes advantage of this by placing data that will be used together close together in memory.

What is data locality?

Data locality means placing data that is used together close together in memory. For example, if enemy movement needs position, direction, and velocity, those values should be stored near each other or in contiguous arrays. Good data locality allows the CPU to load useful data into cache together, reducing memory access costs.

Why does DOD often use arrays instead of object-based data?

Arrays store data contiguously in memory, which makes them cache-friendly. Instead of storing each enemy as an object containing many different fields, DOD may store all enemy positions in one array, all directions in another array, and all velocities in another. A function can then process all enemies in a loop, making memory access more predictable and efficient.

How does separating data and logic reduce code complexity?

Separating data and logic lets developers focus on what data a function needs and how that data is transformed, rather than deciding which object owns the function. Gameplay code can be viewed as input data, transformation logic, and output data. This makes systems easier to reason about, debug, modify, and extend as the project grows.

How are Unity DOTS, Burst, Jobs, and ECS related to Data-Oriented Design?

DOD is the foundation, while Unity DOTS provides tools that can build on it. Burst can help compile code to use SIMD instructions, Jobs can split work across multiple CPU cores, ECS organizes code into Entities, Components, and Systems, and TransformAccessArray can update GameObject transforms from Jobs. However, DOD does not require ECS. A project can apply DOD principles with arrays and simple logic functions first, then add DOTS features when they solve a specific performance problem.

High Performance Unity Game Development ebook for free
