11 Reducing opt-outs

Opt-outs occur when users attempt to leave a virtual agent to reach a human, and they can erode containment and the overall business case for automation. The chapter explains that understanding where and why users opt out is essential: immediate opt-outs stem from biases, perceived complexity, or a preference for people, while later opt-outs often signal gaps in recognition, clarity, progress, or outcomes. By instrumenting conversations to capture when and at which step opt-outs happen, teams can pinpoint root causes and prioritize improvements that keep users engaged.

To reduce immediate opt-outs, start with a confident, human-sounding greeting and introduction, set clear capabilities and expectations, incentivize self-service with speed and ease, reassure users that escalation is available if needed, and invite the user to opt in. To reduce later opt-outs, invest in better understanding (training, retrieval, and coverage), ask clear and concise questions, allow flexible inputs with friendly disambiguation, minimize cognitive load in voice, show progress, anticipate follow-on needs, and write empathetic retries that avoid blaming the user. Technology can ease pain points (e.g., tolerant input handling and custom speech models), while analytics reveal patterns such as loops, dead-ends, or steps that commonly trigger escalation.

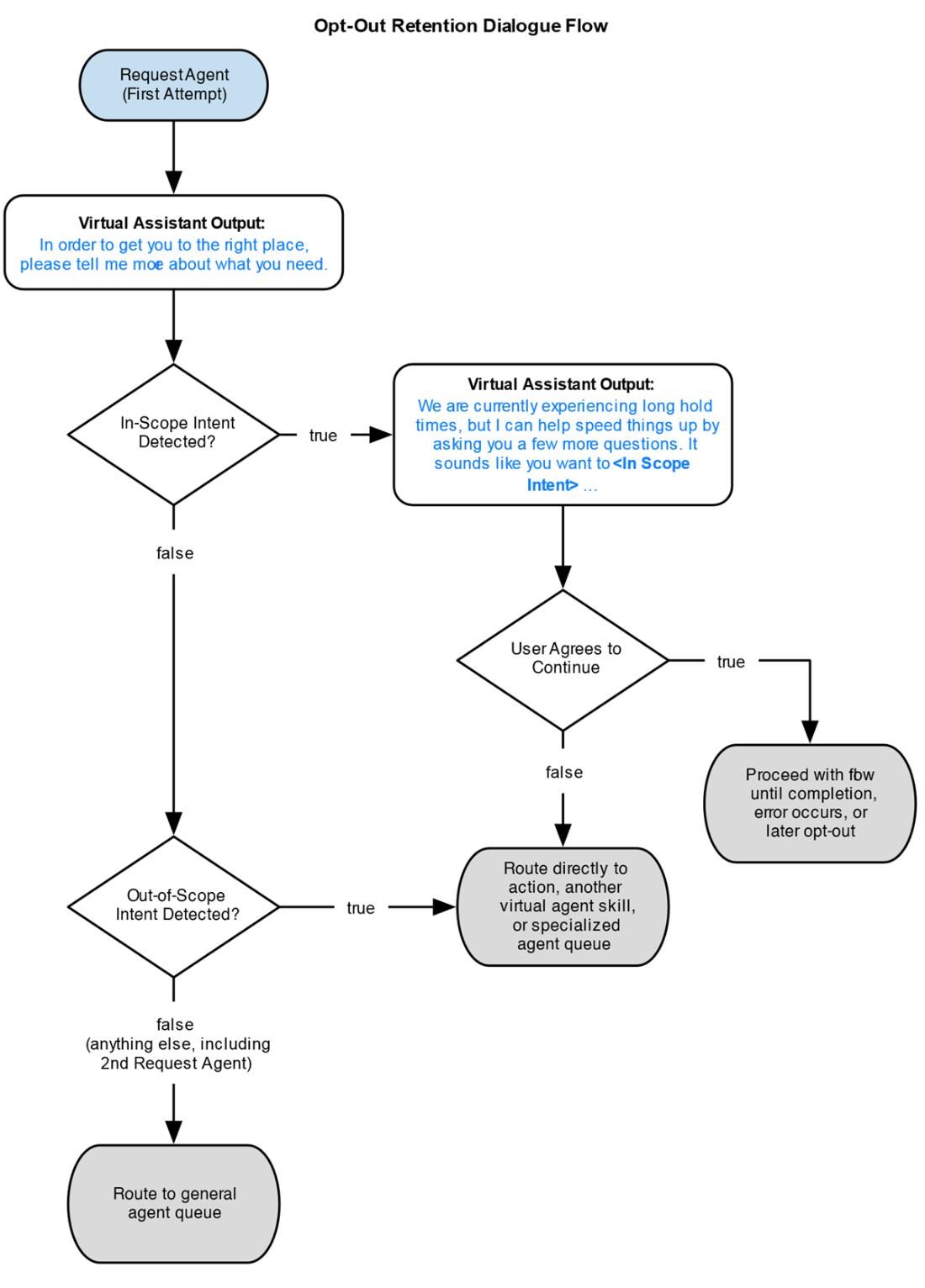

Opt-out retention flows can recover users by first discovering their goal, determining whether it’s in scope, and then either offering an efficient self-service path when it is or routing them to the right human or skill when it isn’t. Even a simple “reason for opting out” prompt yields valuable data, and a full retention pattern can reframe the user’s request, provide incentives, and preserve goodwill. Generative AI is a practical aid for crafting concise, courteous, and instructive messages, especially greetings and error prompts, without sacrificing control. A utility case study shows that combining these tactics retained 38% of immediate opt-outs (for in-scope intents) and nudged completion from 27% to 30%. Finally, the chapter emphasizes judgment: sometimes escalation is the right outcome, so design for it transparently and gracefully.

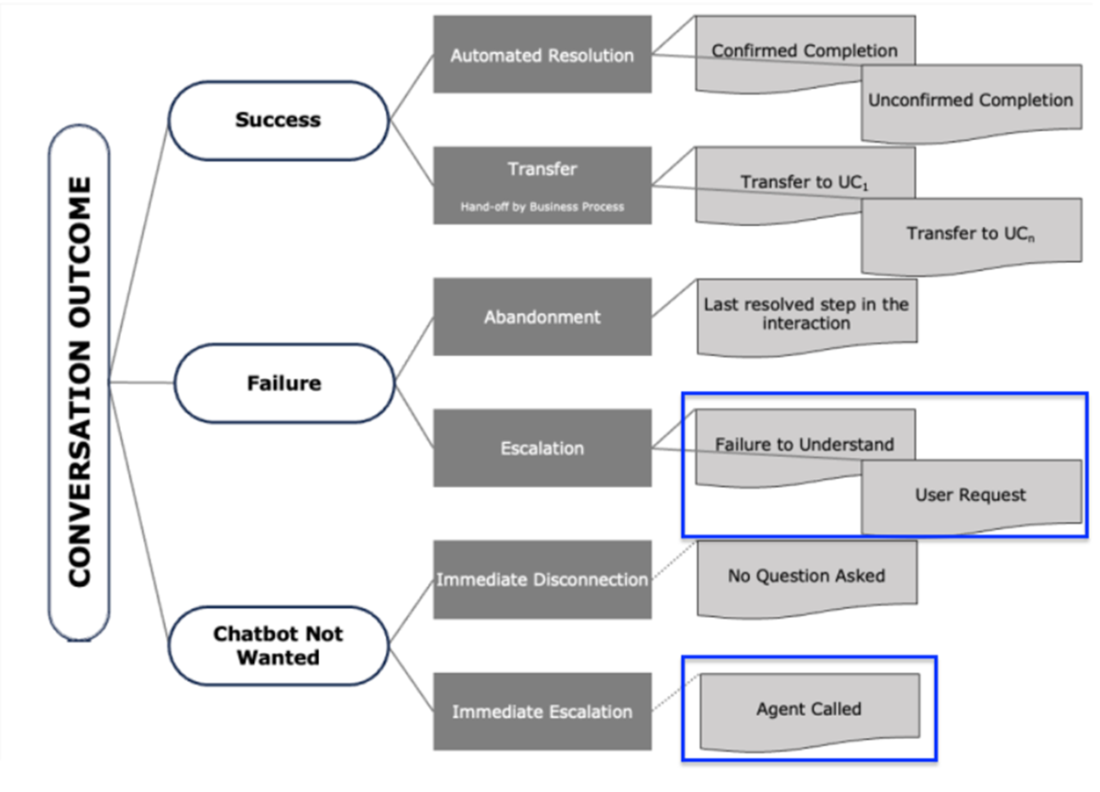

Opt-outs lead to these undesirable outcomes.

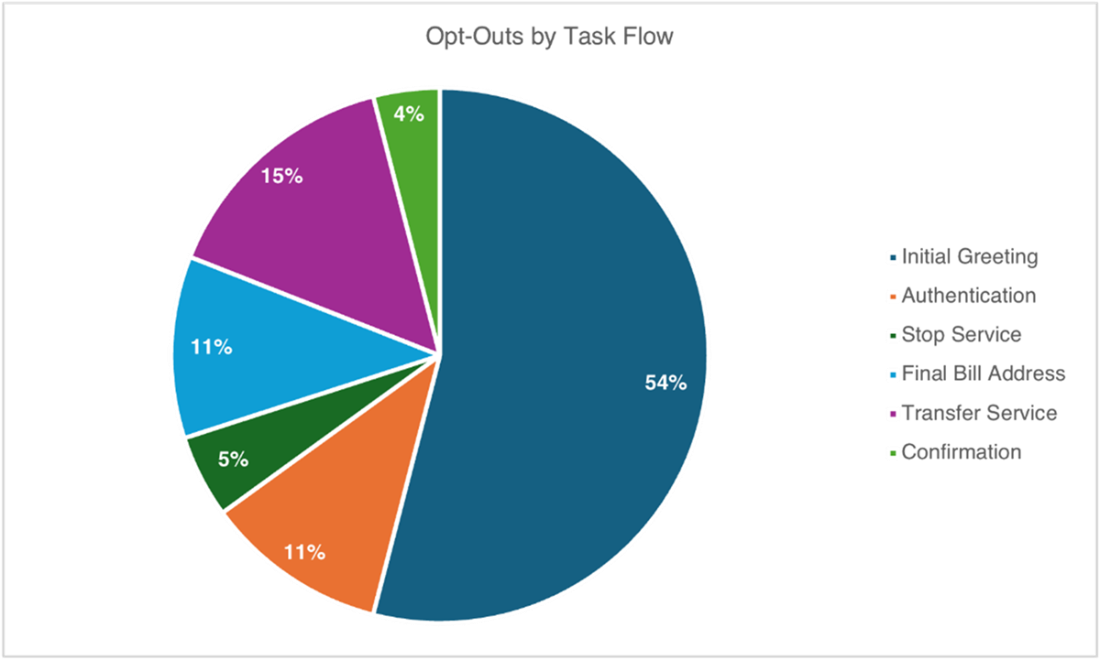

A breakdown of opt-out requests by current task flow shows that immediate opt-outs (requests for an agent during the Initial Greeting task flow) occurred more frequently than opt-outs in any other part of the conversational journey for this self-service, process-oriented bot.

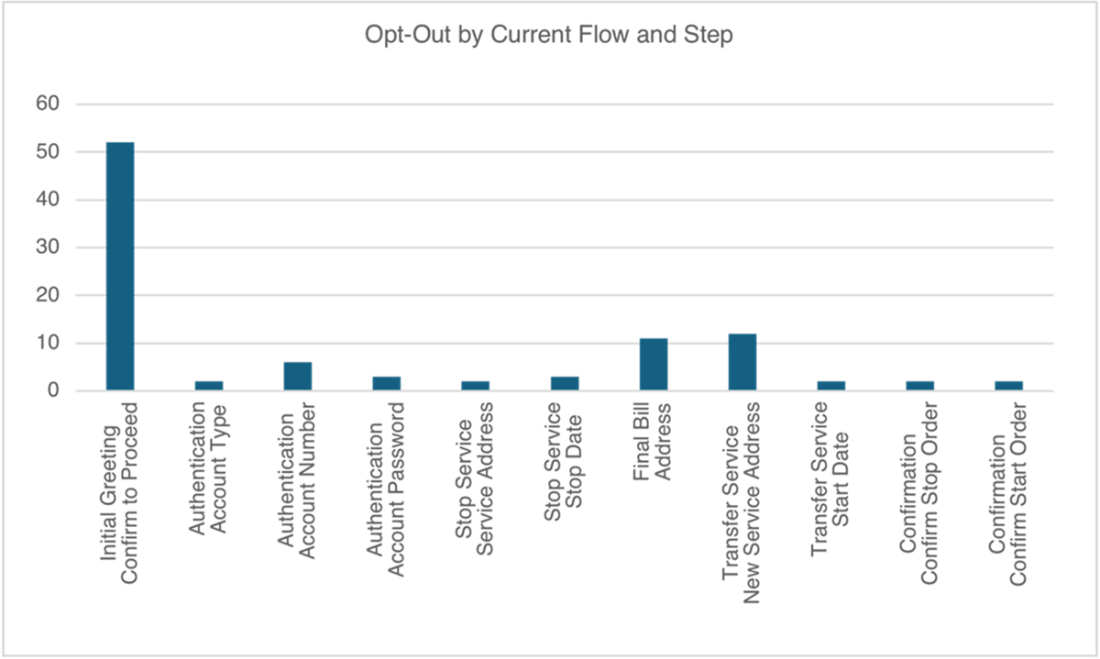

A breakdown of opt-out occurrences by step can aid in root cause analysis. In this chart, immediate opt-outs show prominently on the left, but trends indicate that there may also be a problem with collecting address details in multiple downstream flows.
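
As a rough sketch of the tallying behind a breakdown like this one, the snippet below counts opt-out events by task flow and by step. The event records and their field names (`flow`, `step`) are hypothetical; a real deployment would export them from its own conversation logs or context variables.

```python
from collections import Counter

# Hypothetical opt-out events, one per request for a human agent.
# The field names and values are assumptions made for illustration.
events = [
    {"flow": "Initial Greeting", "step": "greeting"},
    {"flow": "Initial Greeting", "step": "greeting"},
    {"flow": "Start Service", "step": "collect_address"},
    {"flow": "Stop Service", "step": "collect_address"},
    {"flow": "Start Service", "step": "confirm_date"},
]


def count_opt_outs(events):
    """Return two Counters: opt-outs per task flow and opt-outs per step."""
    by_flow = Counter(e["flow"] for e in events)
    by_step = Counter(e["step"] for e in events)
    return by_flow, by_step


by_flow, by_step = count_opt_outs(events)

# Print steps from most to least frequent to surface hotspots.
for step, count in by_step.most_common():
    print(f"{step}: {count}")
```

Counting by step across flows, rather than only within a flow, is what lets a shared problem such as address collection stand out even when no single flow looks unusual.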

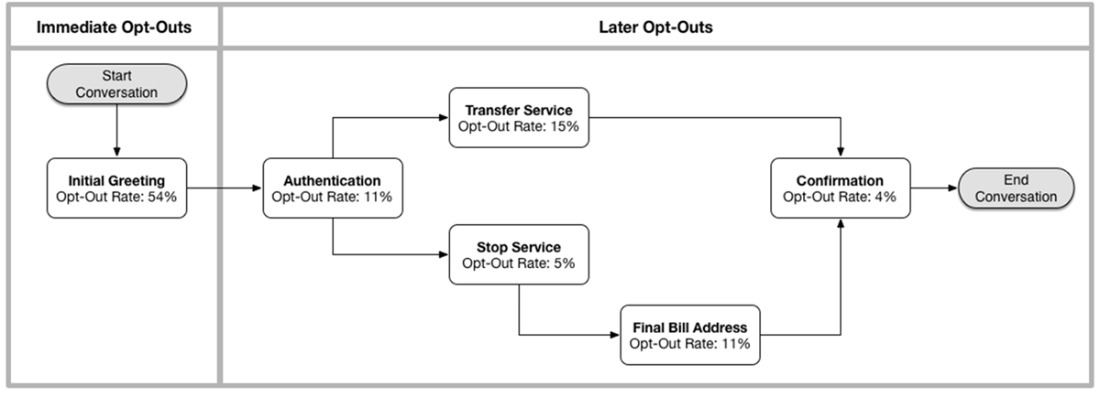

A high-level flow diagram shows how far a user gets into a process before opting out. This information can aid in root cause investigation.

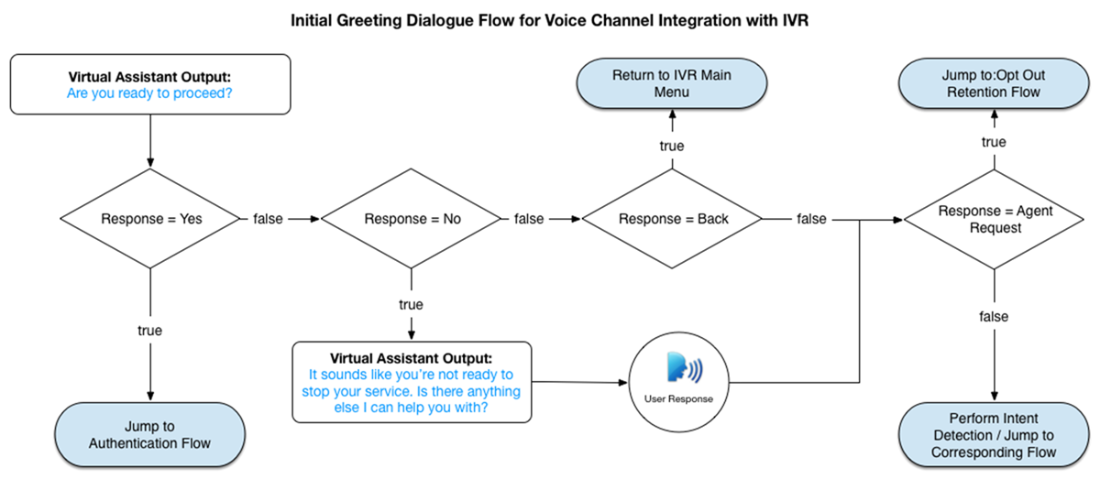

The dialogue logic in this sample greeting flow can handle various responses to the question, “Are you ready to proceed?”. If a response is explicit, such as “No”, “Go back”, or “Speak to an agent”, a pre-defined flow is invoked. Otherwise, the response is sent to the classifier for intent detection and handled by the corresponding flow.
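
The routing described in this caption can be sketched roughly as follows. The flow names, the `classify_intent` stand-in, and the normalization rules are all assumptions made for illustration; they are not taken from the chapter or from any particular platform.

```python
# Hypothetical mapping from explicit responses to pre-defined flows.
EXPLICIT_FLOWS = {
    "no": "decline_flow",
    "go back": "previous_step_flow",
    "speak to an agent": "agent_request_flow",
}


def classify_intent(text):
    """Stand-in for a real intent classifier (an assumption for this sketch)."""
    return "pay_bill" if "bill" in text.lower() else "unknown"


def route_greeting_response(text):
    """Pick a flow for the user's reply to "Are you ready to proceed?"."""
    # Normalize case, surrounding whitespace, and trailing punctuation.
    normalized = text.strip().lower().rstrip(".!?")
    if normalized in EXPLICIT_FLOWS:
        # Explicit responses bypass the classifier entirely.
        return EXPLICIT_FLOWS[normalized]
    # Anything else goes to intent detection.
    return f"intent:{classify_intent(text)}"


print(route_greeting_response("Speak to an agent"))
print(route_greeting_response("I want to pay my bill"))
```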

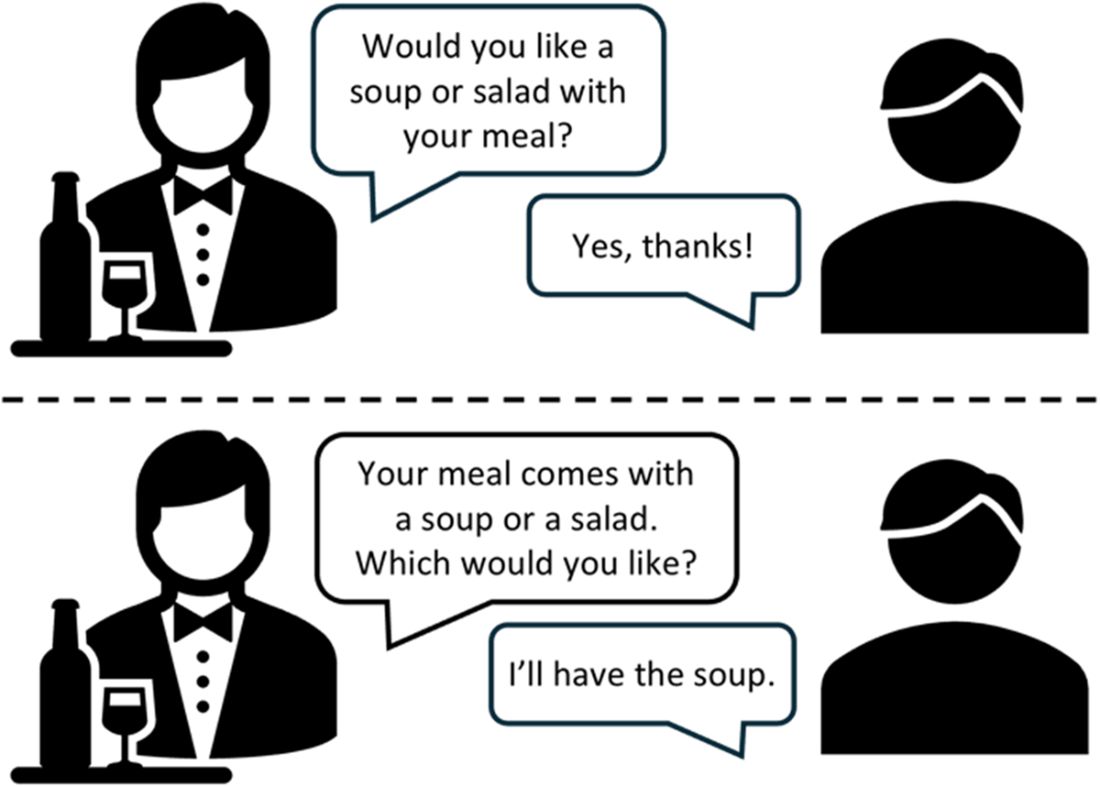

The way you structure a question can help the user understand what type of question you are asking – and what sort of answer you are looking for.

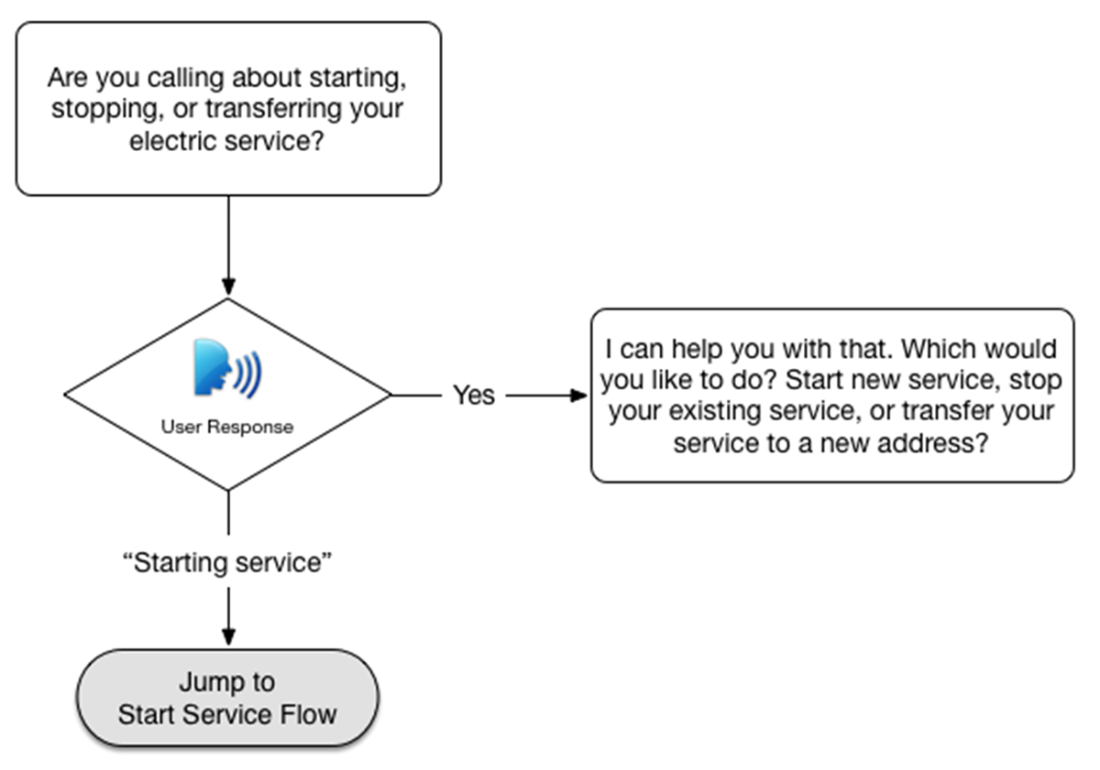

A resilient dialogue flow can handle a range of valid responses. If necessary, a friendly disambiguation step can be invoked to get clarity about the user’s goal.
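
One way to picture this kind of resilience is a confirmation handler that accepts several phrasings and falls back to a friendly disambiguation prompt when the reply is unclear. This is only a sketch: the word lists and the prompt wording are hypothetical, and a production bot would more likely rely on its platform's entity or intent recognition.

```python
# Hypothetical word lists for a yes/no confirmation step.
YES_WORDS = {"yes", "yeah", "yep", "sure", "ok", "okay"}
NO_WORDS = {"no", "nope", "nah"}

DISAMBIGUATION_PROMPT = (
    "I want to be sure I get this right. "
    "Would you like to continue? You can say yes or no."
)


def interpret_confirmation(text):
    """Return (answer, follow_up): answer is "yes", "no", or None if unclear."""
    cleaned = text.lower().replace(",", " ").replace(".", " ")
    words = set(cleaned.split())
    said_yes = bool(words & YES_WORDS)
    said_no = bool(words & NO_WORDS)
    if said_yes and not said_no:
        return "yes", None
    if said_no and not said_yes:
        return "no", None
    # Neither or both were heard: ask again without blaming the user.
    return None, DISAMBIGUATION_PROMPT


print(interpret_confirmation("Yeah, sure"))
print(interpret_confirmation("maybe later"))
```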

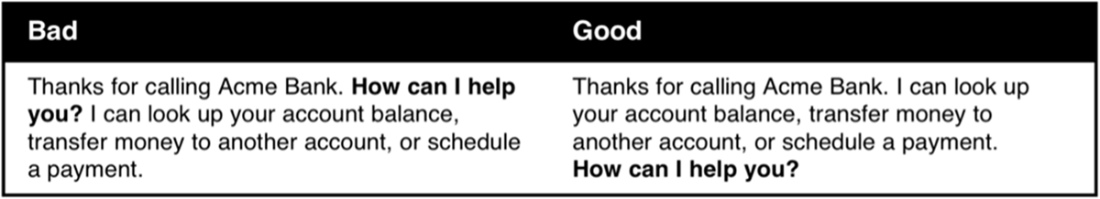

The bad example (left) puts the call to action “How can I help you?” in the middle of the output. This might spur the user to begin speaking while the output is still playing. The good example (right) puts informational messaging up front. At the end of the message, the call to action is a clear invitation for the user to begin speaking.

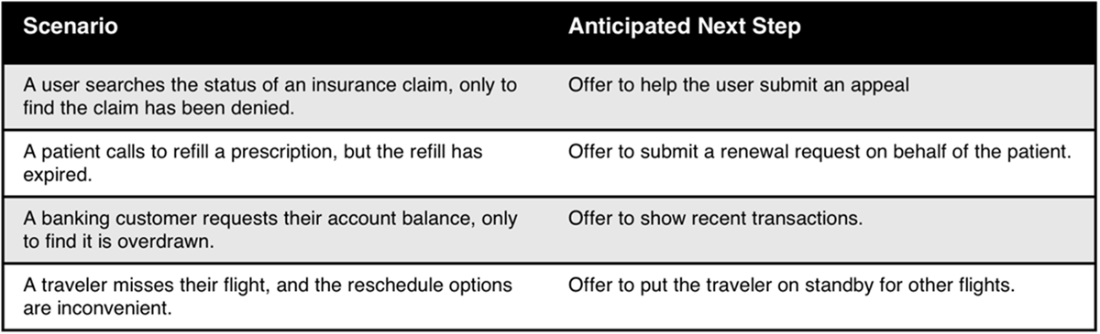

You can avoid escalations by presenting the next best course of action whenever you anticipate a user could still have an unmet need at the conclusion of a dialogue flow.

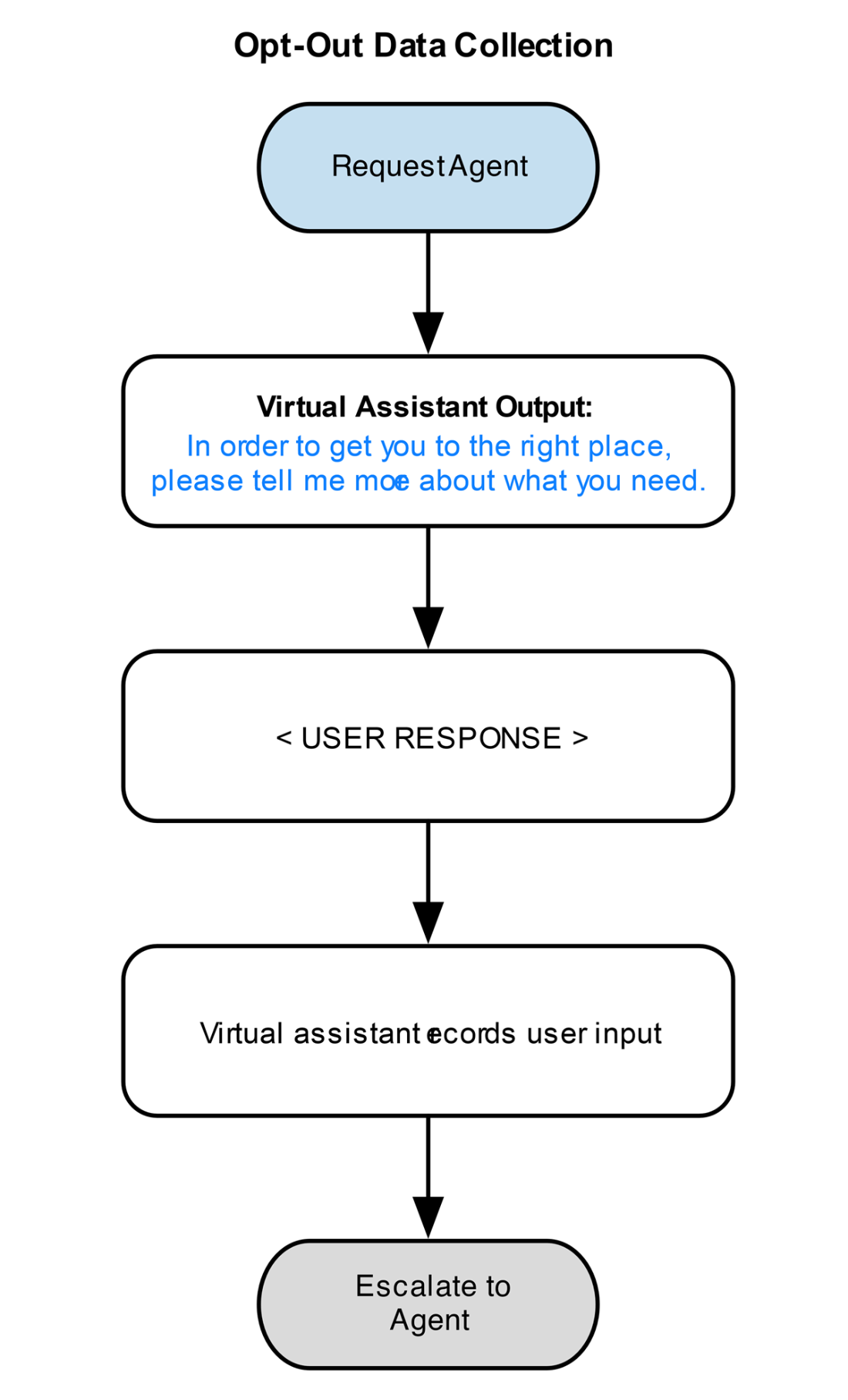

A simple flow to collect the user’s reason for opting out.

A typical opt-out retention pattern will try to find out what the user needs. The result is one of three cases: the bot recognizes an in-scope request (a request that it is equipped to handle), it recognizes an out-of-scope request (a request that it understands, but is not equipped to handle), or it does not recognize the request at all. When an in-scope request is recognized, the bot may make an incentivized offer to keep the user in-channel. When an out-of-scope request is made, the bot can route the user directly to the appropriate skill or agent queue. When a request is not recognized, the user is routed to a default or general hold queue.
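
The three branches of this pattern can be sketched as a small routing function. The intent names, queue names, and action labels below are hypothetical placeholders, and the intent is assumed to arrive already classified (or as `None` when nothing was recognized).

```python
# Hypothetical configuration: which intents the bot can handle itself, and
# which agent queue serves each intent it understands but cannot handle.
IN_SCOPE_INTENTS = {"pay_bill", "report_outage"}
OUT_OF_SCOPE_QUEUES = {"dispute_charge": "billing_agents"}
DEFAULT_QUEUE = "general_queue"


def retention_action(intent):
    """Decide what to do after asking an opting-out user what they need.

    `intent` is the recognized intent name, or None if nothing was recognized.
    """
    if intent in IN_SCOPE_INTENTS:
        # In scope: make an incentivized offer to stay in self-service.
        return ("offer_self_service", intent)
    if intent in OUT_OF_SCOPE_QUEUES:
        # Understood but not supported: route to the matching queue.
        return ("route_to_queue", OUT_OF_SCOPE_QUEUES[intent])
    # Not recognized: fall back to the default hold queue.
    return ("route_to_queue", DEFAULT_QUEUE)


print(retention_action("pay_bill"))
print(retention_action("dispute_charge"))
print(retention_action(None))
```

Returning the chosen action together with its target keeps the decision easy to log, which in turn provides the data about user expectations that the pattern is meant to collect.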

Summary

- Opt-outs are a major source of containment loss, which causes a virtual agent to fail to deliver business value.

- Users who opt out early in a conversation tend to do so for reasons related to their perception of a virtual agent’s capability, and whether they are confident that the virtual agent can guide them to their end goal.

- Opt-outs that occur later in a conversation are indicators that a virtual agent might have weak understanding or problems with the dialogue design.

- Opt-out retention is a great strategy for improving containment; it can also provide valuable data about what users expect your bot to be able to do.

- Generative AI can supplement the process of crafting tactful, efficient responses throughout your dialogue flows.

FAQ

What is an opt-out in conversational AI, and why does it matter?

Opt-out is when a user exits the virtual agent experience, often requesting a human. High opt-out rates reduce containment, increase costs, and can undermine the business case for your chatbot or voice assistant.

What’s the difference between immediate and later opt-outs?

Immediate opt-outs happen at the very start: users ask for a human before engaging. Later opt-outs occur mid-flow and usually point to specific design, understanding, or process issues within the conversation.

What drives immediate opt-outs?

- Prior bad experiences with IVRs/chatbots

- Belief their problem is too complex or unique for automation

- Preference for human interaction (trust, sensitivity, accessibility, language)

What typically causes users to opt out later in a conversation?

- Bot doesn’t understand the request

- User doesn’t understand what the bot is asking for

- User doesn’t have the requested information

- User feels stuck or not progressing

- User dislikes the answer or expected a different outcome

How can I find where and why users opt out?

Use analytics and custom instrumentation. Track opt-outs by task flow and step with context variables and breadcrumbs. Trend reports can reveal immediate vs. downstream hotspots and pinpoint root causes for improvement.

What are the most effective ways to reduce immediate opt-outs?

- Open with a warm, context-aware greeting and clear introduction

- Convey capabilities and set expectations up front

- Incentivize self-service (speed, convenience, hold-time comparisons)

- Give users agency by inviting them to opt in

How do I design downstream flows to keep users engaged?

- Try hard to understand: keep training current; use retrieval/RAG if appropriate

- Try hard to be understood: clear prompts, explicit formats, disambiguation

- Be flexible: accept a range of valid inputs; guide progressively on retries

- Voice tips: keep cognitive load low; end messages with the question

- Convey progress: set expectations and signal “almost done” moments

- Anticipate next needs: offer next-best actions to avoid escalations

- Be empathetic: avoid blaming users in error messages
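
The advice to guide progressively on retries and avoid blaming users can be illustrated with a short sketch. The prompts, the 8-digit account number, and the three-attempt limit are all hypothetical; the point is only that each retry adds guidance and that the bot hands off once its prompts are exhausted.

```python
# Hypothetical prompts for one data-collection step. Each retry adds more
# guidance and phrases the difficulty as the bot's, not the user's.
ACCOUNT_NUMBER_PROMPTS = [
    "What is your account number?",
    "I didn't catch that. Please enter your 8-digit account number.",
    "I'm still having trouble. Your 8-digit account number is printed "
    "at the top of your bill. Please enter it now.",
]


def prompt_for_attempt(attempt):
    """Return the prompt for a zero-based attempt, or None to escalate."""
    if 0 <= attempt < len(ACCOUNT_NUMBER_PROMPTS):
        return ACCOUNT_NUMBER_PROMPTS[attempt]
    # Out of prompts: hand off to a human instead of looping.
    return None


for attempt in range(4):
    print(attempt, prompt_for_attempt(attempt))
```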
