1 Understanding agentic applications

This chapter introduces agentic applications as systems that wrap large language models with tools, memory, retrieval, and reasoning loops so they can complete multi-step work rather than simply generate one response. It distinguishes between fully autonomous AI agents, where the model decides what to do and when to stop, and agentic workflows, where developers define the structure while allowing LLM-powered steps inside it. The main guidance is pragmatic: use autonomy where it adds value, but prefer predictable workflows when the process can be planned in advance.

The chapter explains that the core building block of agentic systems is the augmented LLM, enhanced with retrieval, tool use, and memory. Function calling lets an LLM request structured tool invocations, while application code executes the tools and returns results for further reasoning. It also outlines common design patterns such as prompt chaining, routing, parallelization, orchestrator-worker systems, and evaluator-optimizer loops, emphasizing that real applications often combine these patterns while keeping the design as simple as possible.

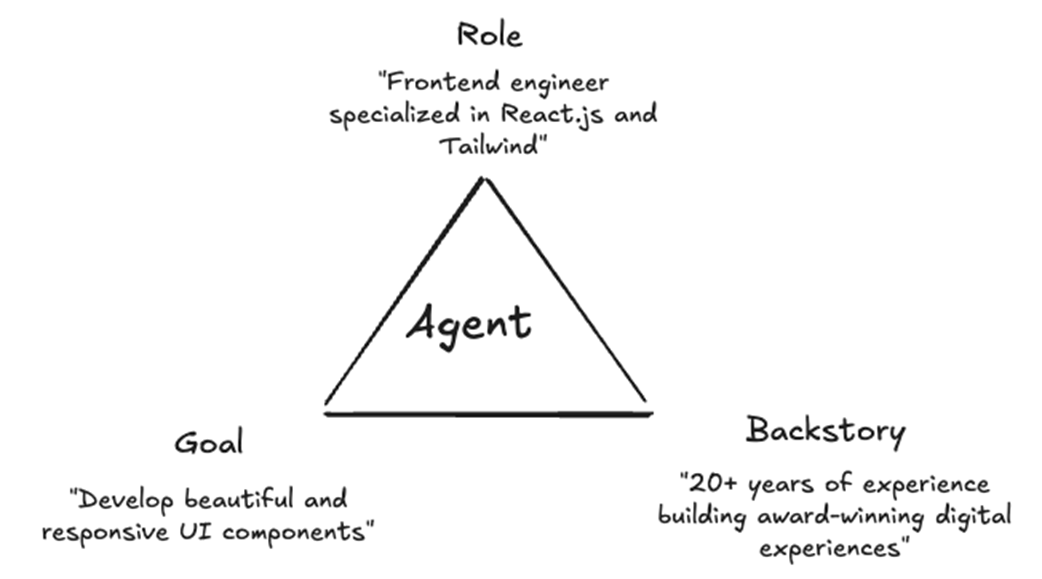

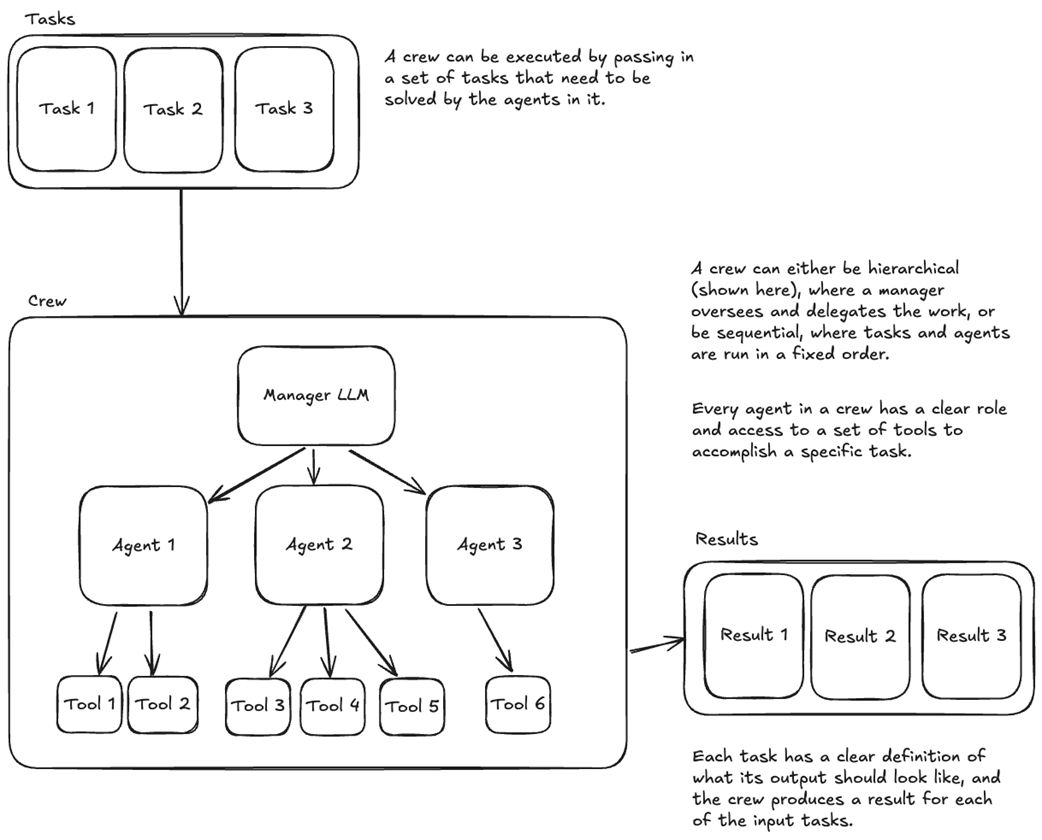

The chapter then presents CrewAI as the framework used to implement these ideas through agents, tasks, tools, crews, and flows. Agents are defined using role, goal, and backstory; tasks specify what success looks like; tools let agents interact with external systems; crews coordinate multiple specialized agents; and flows provide explicit workflow control with state, branching, parallelism, and error handling. It also highlights production challenges—compounding errors, high token costs, difficult evaluation, the need for human oversight, and context degradation—and introduces MCP as a standard way for agentic applications to connect with external tools and the wider AI ecosystem.

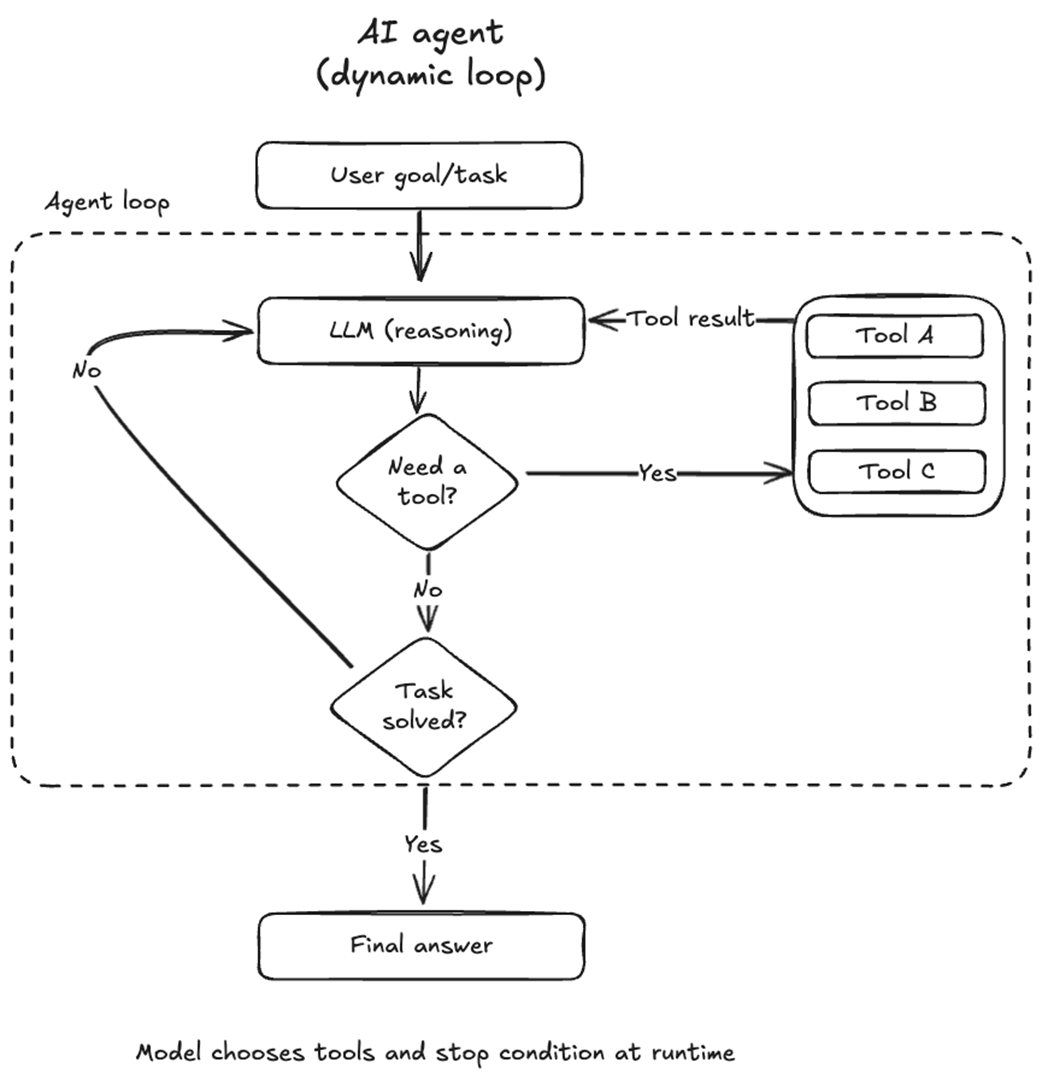

At the heart of each AI agent is the agent loop, in which the LLM reasons, calls tools at will, and decides when to stop.
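The loop can be sketched in a few lines of plain Python. This is a minimal illustration, not CrewAI code: `scripted_llm`, the `TOOLS` table, and the message format are hypothetical stand-ins for a real model and real tools, and `max_steps` is an assumed safety cap so the loop cannot run forever.

```python
from typing import Callable

# Hypothetical tools the agent may call; names and behavior are illustrative only.
TOOLS: dict[str, Callable[[str], str]] = {
    "search": lambda query: f"3 results for '{query}'",
    "summarize": lambda text: text[:40],
}


def scripted_llm(history: list[dict]) -> dict:
    """Stand-in for a model call: decides the next action from the history."""
    tool_results = [m for m in history if m["role"] == "tool"]
    if not tool_results:
        # Nothing observed yet, so request a tool call.
        return {"type": "tool_call", "tool": "search", "input": history[0]["content"]}
    # A tool result is available, so decide to stop and answer.
    return {"type": "final", "content": f"Answer based on: {tool_results[-1]['content']}"}


def run_agent(task: str, llm=scripted_llm, max_steps: int = 5) -> str:
    """Reason, call a tool, observe the result, and repeat until the model stops."""
    history = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        decision = llm(history)
        if decision["type"] == "final":
            return decision["content"]
        # The application, not the model, executes the requested tool.
        result = TOOLS[decision["tool"]](decision["input"])
        history.append({"role": "tool", "content": result})
    raise RuntimeError("agent did not finish within max_steps")


if __name__ == "__main__":
    print(run_agent("crewai flows"))
```

The key point is that the stopping decision comes from the model's output, while the step cap is a guard added by the developer.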

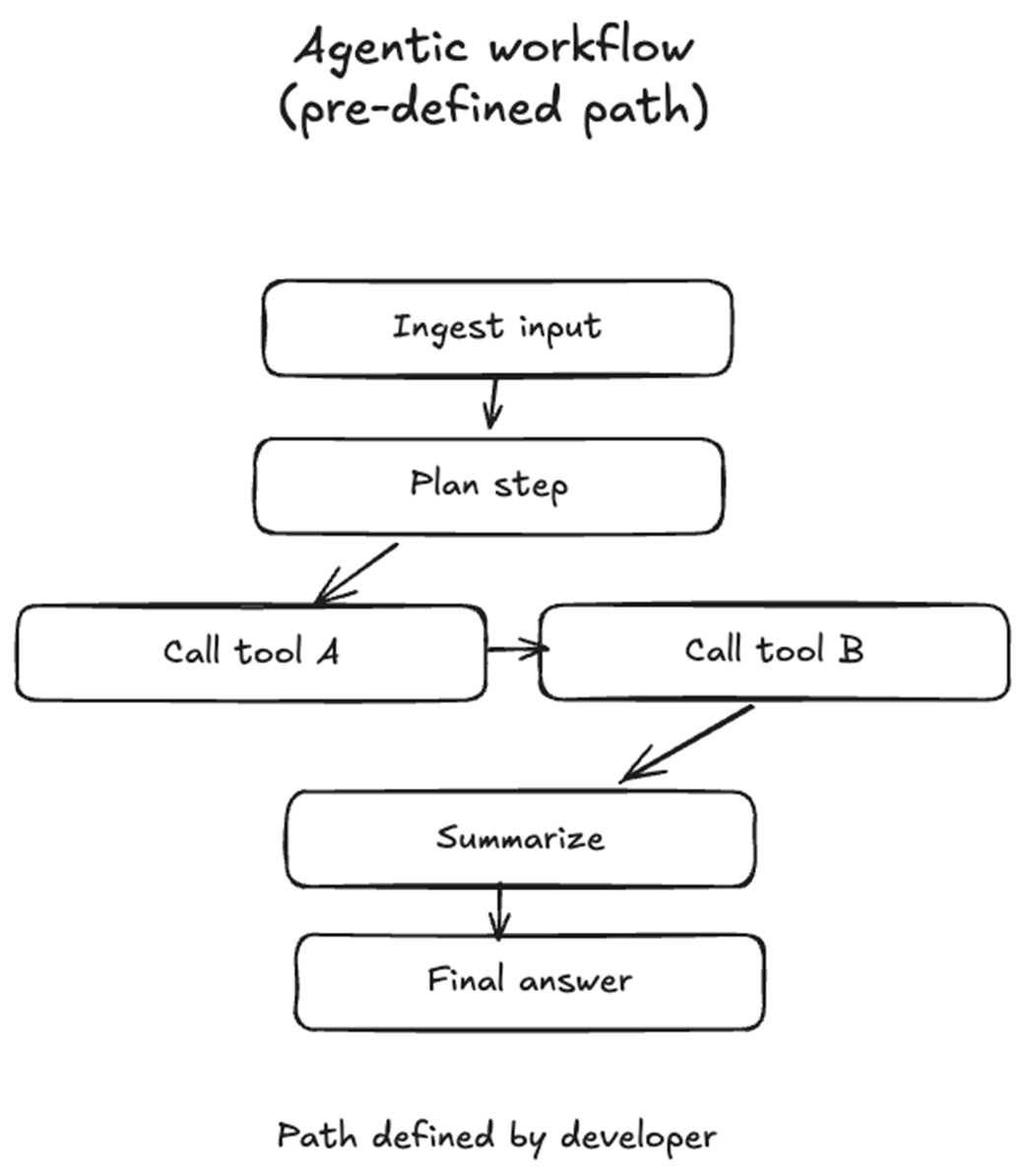

In an agentic workflow, the system follows code paths predefined by the developer. The steps can include arbitrary logic expressed in code, LLM calls, and dynamic routing based on the result of a previous step.
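A workflow of this kind might look like the sketch below, assuming a support-ticket scenario that is not from the chapter. The `classify` function stands in for an LLM call with a hypothetical keyword rule, and the handler names are invented for illustration.

```python
def classify(ticket: str) -> str:
    """Stand-in for an LLM classification call (hypothetical keyword rule)."""
    return "billing" if "invoice" in ticket.lower() else "technical"


def handle_billing(ticket: str) -> str:
    return f"[billing] forwarded: {ticket}"


def handle_technical(ticket: str) -> str:
    return f"[technical] troubleshooting: {ticket}"


# The developer fixes the possible paths up front.
ROUTES = {"billing": handle_billing, "technical": handle_technical}


def run_workflow(ticket: str) -> str:
    cleaned = ticket.strip()          # step 1: ordinary code
    category = classify(cleaned)      # step 2: LLM call (stubbed here)
    return ROUTES[category](cleaned)  # step 3: routing on the previous result


if __name__ == "__main__":
    print(run_workflow("  Invoice 42 is wrong "))
```

Unlike the agent loop, the sequence of steps never changes at run time; only the branch taken in step 3 depends on the model's output.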

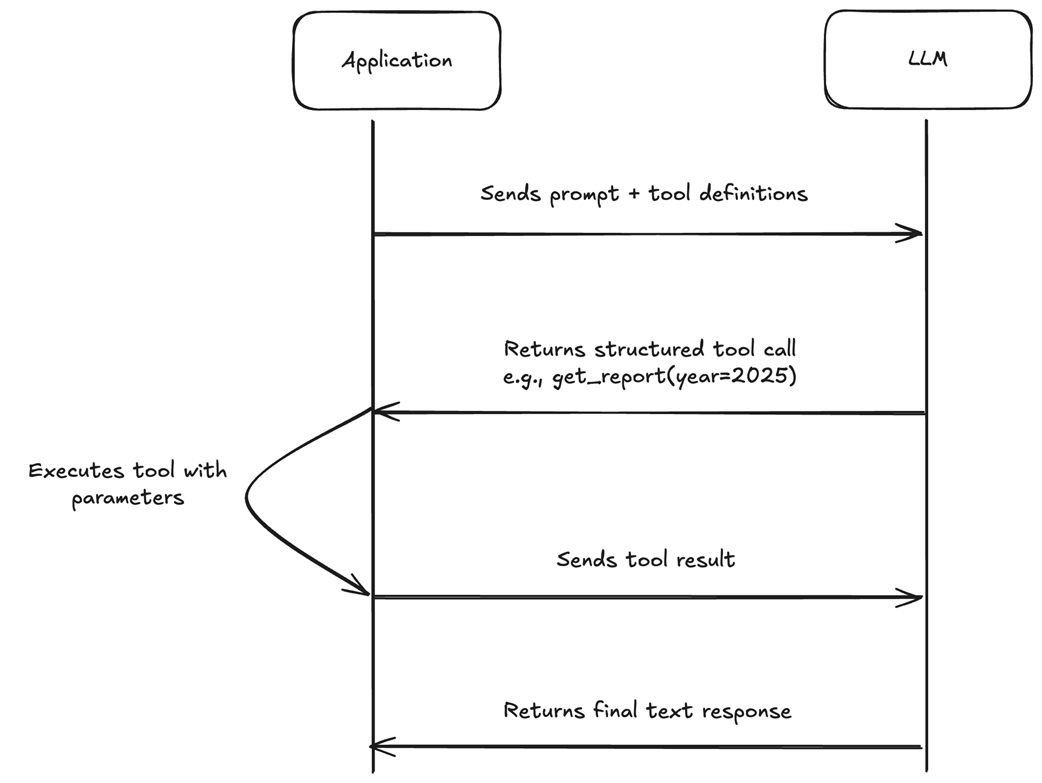

The application sends the user's prompt along with tool definitions to the LLM. Instead of answering directly, the LLM returns a structured tool call. The application executes the tool and sends the result back, and the LLM produces its final response. The LLM never executes tools itself; it only requests them.
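The round trip can be sketched as follows. Everything here is an assumption for illustration: `fake_llm` replaces a real model, `get_weather` returns a canned value, and the shape of the tool definition and tool-call messages is a simplified stand-in rather than any specific provider's format.

```python
import json

# Tool definition sent with the prompt: a name, a description, and a JSON Schema for inputs.
TOOL_DEFINITIONS = [{
    "name": "get_weather",
    "description": "Return the current temperature for a city.",
    "parameters": {
        "type": "object",
        "properties": {"city": {"type": "string"}},
        "required": ["city"],
    },
}]


def get_weather(city: str) -> str:
    return f"18 C in {city}"  # canned value, not a real lookup


REGISTRY = {"get_weather": get_weather}


def fake_llm(messages: list[dict], tools: list[dict]) -> dict:
    """Stand-in model: requests a tool first, then answers from the tool result."""
    if messages[-1]["role"] == "tool":
        return {"content": f"It is currently {messages[-1]['content']}."}
    return {"tool_call": {"name": "get_weather",
                          "arguments": json.dumps({"city": "Paris"})}}


def answer(prompt: str) -> str:
    messages = [{"role": "user", "content": prompt}]
    reply = fake_llm(messages, TOOL_DEFINITIONS)
    if "tool_call" in reply:
        call = reply["tool_call"]
        args = json.loads(call["arguments"])      # the model only supplies arguments
        result = REGISTRY[call["name"]](**args)   # the application runs the tool
        messages.append({"role": "tool", "content": result})
        reply = fake_llm(messages, TOOL_DEFINITIONS)
    return reply["content"]


if __name__ == "__main__":
    print(answer("What's the weather in Paris?"))
```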

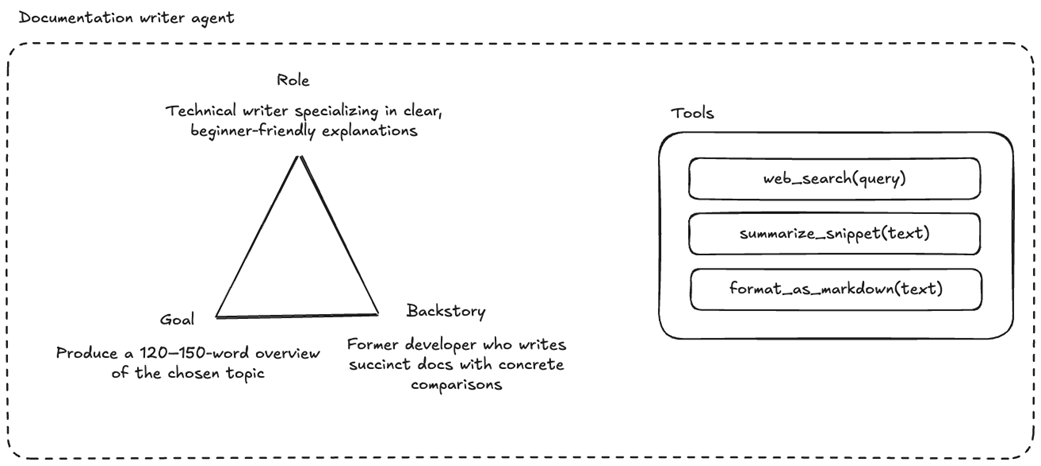

Each agent is initialized with a role, a goal, and a compelling backstory.

The documentation writer agent has a well-defined role, a clearly laid out goal, and a compelling backstory. It has access to three tools: one to search the web for a specific query, one to summarize a snippet of text, and one to format any given text as markdown to create a nice document.
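To make the three fields concrete, here is a plain-Python sketch of such an agent definition. It deliberately does not use CrewAI: `AgentSpec`, `system_prompt`, and the three stub tools are hypothetical names, and the way the fields are combined into a prompt is an assumption, not how CrewAI assembles prompts internally.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentSpec:
    """Hypothetical container for the persona fields described above."""
    role: str
    goal: str
    backstory: str
    tools: dict[str, Callable[[str], str]] = field(default_factory=dict)

    def system_prompt(self) -> str:
        # One possible way to turn the persona into instructions for a model.
        tool_names = ", ".join(self.tools) or "none"
        return (f"You are a {self.role}. {self.backstory}\n"
                f"Goal: {self.goal}\n"
                f"Available tools: {tool_names}")


# Stub tools mirroring the three capabilities in the caption.
doc_writer = AgentSpec(
    role="documentation writer",
    goal="Produce a clear, well-structured document on the requested topic.",
    backstory="You have spent years turning dense material into readable guides.",
    tools={
        "web_search": lambda query: f"results for {query}",
        "summarize": lambda text: text[:80],
        "format_markdown": lambda text: f"# Document\n\n{text}",
    },
)

if __name__ == "__main__":
    print(doc_writer.system_prompt())
```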

A crew is like a cross-functional team that works on a given set of tasks until they are completed.

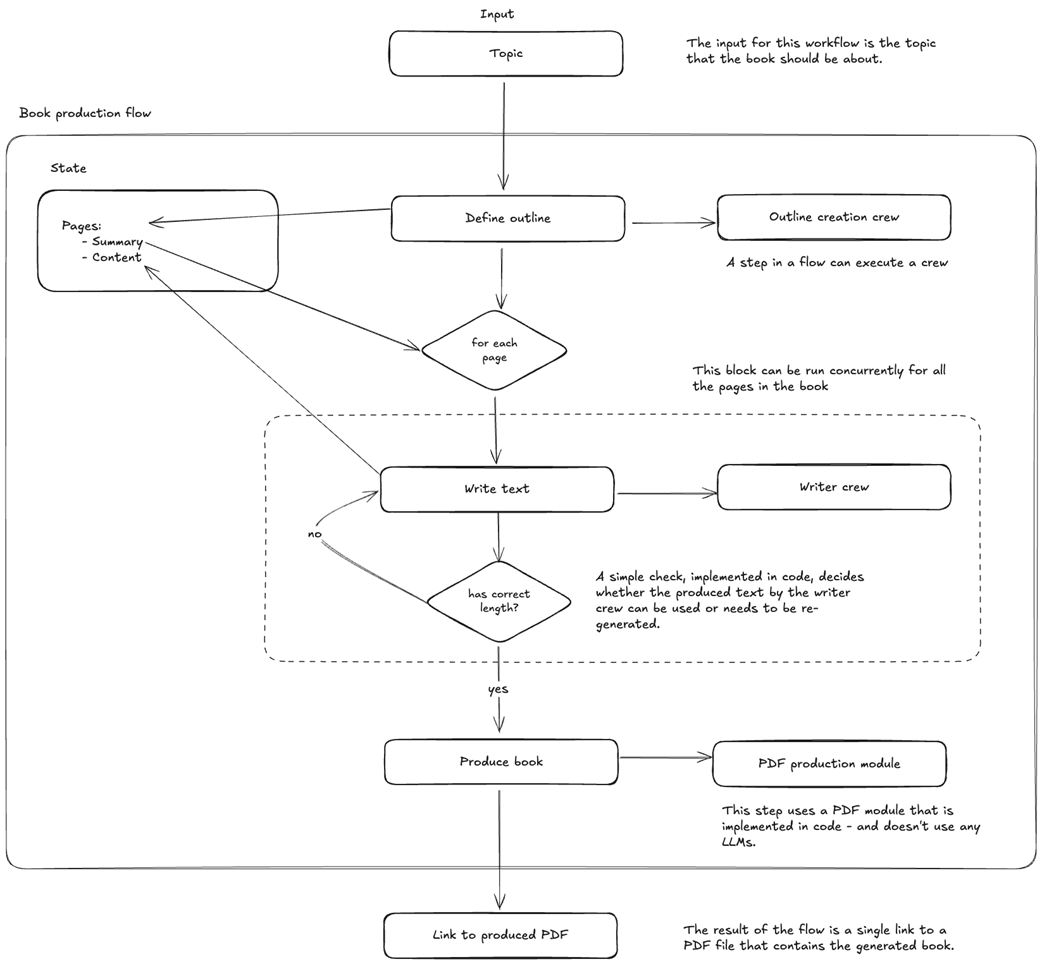

An example flow that takes a topic as input, generates a book, and returns a link to the generated PDF. It contains two crews that run in different steps of the workflow, shared state, parallel execution, and dynamic routing based on a crew's output.

Summary

- Agentic AI turns a model that predicts text into a system that gets work done. An AI agent reasons, calls tools, observes results, and loops until the task is complete. Agentic workflows follow developer-defined code paths and trade flexibility for predictability.

- The augmented LLM is the atomic building block of every agentic system: a language model enhanced with retrieval, tools, and memory.

- Five recurring design patterns cover most agentic architectures: prompt chaining, routing, parallelization, orchestrator-workers, and evaluator-optimizer. They sit on a spectrum from simple to complex, and real applications often combine several.

- Production agentic systems face compounding errors across steps, high token costs, non-deterministic evaluation, the need for human oversight, and context degradation over long interactions.

- CrewAI organizes agentic systems around agents (defined by role, goal, and backstory), tasks, tools, crews, and flows. Crews handle multi-agent collaboration, while flows give explicit control over execution order and logic. MCP connects agents to external capabilities through a standardized protocol.

FAQ

What is agentic AI?

Agentic AI refers to AI systems that augment LLMs with tools, retrieval, memory, and autonomous reasoning. Instead of responding once to a prompt and stopping, an agentic system can reason about what to do next, call tools such as web search or database queries, observe the results, and continue looping until the task is complete.

How is an AI agent different from an agentic workflow?

An AI agent is a system where the LLM dynamically controls its own reasoning loop, chooses when to call tools, decides what steps to take, and determines when the task is finished. An agentic workflow, by contrast, follows logic defined in advance by a developer, often as a graph of nodes and edges. Workflows can still use LLMs and dynamic routing, but they trade some autonomy for greater predictability.

When should I use a fully autonomous agent instead of a workflow?

Fully autonomous agents are best when the steps cannot be predicted in advance, such as open-ended research or complex debugging. For most production use cases, it is safer to start with a workflow and add autonomy only where it provides clear value. Workflows give you more control over the sequence of operations and are often easier to make reliable.

What is an augmented LLM?

An augmented LLM is a language model enhanced with three core capabilities: retrieval, tools, and memory. Retrieval lets it access external knowledge from sources such as search engines, vector databases, or files. Tools let it take actions such as calling APIs, writing files, or querying databases. Memory lets it maintain state across steps or conversations. The chapter describes the augmented LLM as the atomic building block of agentic systems.

How does function calling allow LLMs to use tools?

Function calling lets developers define tools with names, descriptions, and input/output schemas. The LLM does not execute the tool itself; instead, it emits a structured tool call with the arguments it wants to use. The application code executes the tool, sends the result back to the LLM, and the LLM continues reasoning with that new information.

What are the main patterns for building agentic applications?

The chapter introduces several common patterns: prompt chaining, routing, parallelization, orchestrator-workers, and evaluator-optimizer. Prompt chaining breaks work into sequential LLM calls. Routing sends inputs to specialized handlers. Parallelization runs multiple LLM calls at the same time. Orchestrator-workers uses a central LLM to delegate subtasks. Evaluator-optimizer uses one LLM to generate output and another to critique and improve it.
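As one example, the evaluator-optimizer pattern can be sketched as a short feedback loop. The `generate` and `evaluate` functions below are hypothetical stand-ins for two LLM calls, and `max_rounds` is an assumed cap that keeps the loop from running indefinitely.

```python
from typing import Optional


def generate(task: str, feedback: Optional[str]) -> str:
    """Stand-in generator: a real system would prompt an LLM with task and feedback."""
    draft = f"Summary of {task}"
    return (draft + " (with sources)") if feedback else draft


def evaluate(draft: str) -> Optional[str]:
    """Stand-in evaluator: returns feedback, or None when the draft is accepted."""
    return None if "sources" in draft else "Please cite sources."


def refine(task: str, max_rounds: int = 3) -> tuple[str, int]:
    """Alternate generation and critique until the evaluator accepts the draft."""
    if max_rounds < 1:
        raise ValueError("max_rounds must be at least 1")
    feedback = None
    draft = ""
    for round_number in range(1, max_rounds + 1):
        draft = generate(task, feedback)
        feedback = evaluate(draft)
        if feedback is None:
            return draft, round_number
    # Evaluator never accepted: return the last draft rather than loop forever.
    return draft, max_rounds


if __name__ == "__main__":
    print(refine("MCP"))
```

Here the first draft is rejected and the second is accepted, so `refine("MCP")` returns the revised draft after two rounds.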

Why are agentic applications difficult to run reliably in production?

Agentic applications are difficult because errors compound across multiple steps, costs can rise quickly due to repeated LLM calls and tool usage, evaluation is harder than traditional unit testing, human oversight is required for risky actions, and long contexts can degrade model performance. Each additional step adds power but also introduces cost, complexity, and new failure modes.
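A back-of-the-envelope calculation shows how quickly errors compound. The sketch assumes every step succeeds independently with the same probability, which is a simplification, and the figures are illustrative rather than taken from the chapter.

```python
def chain_success_rate(step_success: float, steps: int) -> float:
    """Probability that all steps succeed, assuming independent, equally reliable steps."""
    if not 0.0 <= step_success <= 1.0:
        raise ValueError("step_success must be between 0 and 1")
    return step_success ** steps


if __name__ == "__main__":
    for steps in (1, 5, 10, 20):
        rate = chain_success_rate(0.95, steps)
        print(f"{steps:>2} steps at 95% each -> {rate:.1%} end-to-end")
```

Under these assumptions, ten steps that are each 95% reliable complete successfully only about 60% of the time.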

What principle should guide the design of agentic applications?

The chapter recommends starting with the simplest approach that could work. A single well-prompted LLM call is often better than a complex multi-agent system. The suggested progression is: start with a single LLM call, then add tools to create an augmented LLM, then build a simple agentic workflow, and only then move to a multi-agent system when there is a concrete reason to do so.

What are the core building blocks of CrewAI?

CrewAI provides composable primitives for building agentic applications: agents, tasks, tools, crews, and flows. Agents are specialized AI personas. Tasks describe what needs to be done and what success looks like. Tools let agents interact with the outside world. Crews are teams of agents working together. Flows provide explicit workflow control with logic, state management, routing, parallel processing, and integration with non-AI systems.

What is MCP and how does CrewAI use it?

MCP, or Model Context Protocol, is an open standard for connecting AI clients to external tools and capabilities in a consistent way. It acts like a universal plug for AI tools, allowing one MCP server to work with compatible clients such as Cursor, Claude Desktop, or ChatGPT. CrewAI supports MCP in both directions: agents can consume tools from external MCP servers, and developers can expose their own crews as MCP servers for other AI systems to call.
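On the wire, MCP messages are JSON-RPC 2.0, and a client discovers and invokes server tools with the `tools/list` and `tools/call` methods. The sketch below only builds the request payloads; it opens no connection, and the tool name `search_docs` and its arguments are hypothetical.

```python
import json
from itertools import count
from typing import Optional

_request_ids = count(1)  # JSON-RPC requests carry a unique id per request


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request object."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def list_tools_request() -> dict:
    """Ask the server which tools it exposes."""
    return jsonrpc_request("tools/list")


def call_tool_request(name: str, arguments: dict) -> dict:
    """Ask the server to run one tool with the given arguments."""
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


if __name__ == "__main__":
    print(json.dumps(list_tools_request()))
    print(json.dumps(call_tool_request("search_docs", {"query": "flows"}), indent=2))
```

Because every server speaks the same message format, a client written once can talk to any compatible server, which is what makes the "universal plug" comparison apt.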

Building Agentic Applications with CrewAI and MCP ebook for free
