3 Connecting AI models with the Vercel AI SDK

This chapter explains how to evolve a basic AI app into a robust, scalable web experience by adopting the Vercel AI SDK. It begins with the core challenges developers face—vendor lock-in from direct provider APIs, the engineering complexity of real-time streaming, and growing pains around state management—and positions the SDK as a unifying solution. The guidance emphasizes sound integration principles: separation of concerns between intents and actions, abstraction layers to decouple external dependencies, incremental adoption to reduce risk, and continuous testing and documentation to preserve reliability as features expand.

Practically, the chapter shows how to replace direct model calls with SDK utilities that abstract providers and streamline UX. It introduces generateText for non-streaming scenarios and then upgrades to streamText for real-time responses via async iterables, while the useChat and useCompletion React hooks handle buffering, partial updates, and error states for a smooth UI. The Astra AI app is incrementally migrated: first swapping the route handler to use SDK functions, then enabling streaming on the backend and adopting useChat on the frontend so messages arrive and render progressively, improving perceived performance and conversational flow.
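The progressive rendering described above can be sketched without any SDK dependency. This is a minimal illustration, not the chapter's code: `fakeTextStream` and `renderProgressively` are hypothetical names, and the generator only stands in for the async iterable of text that a streaming call such as streamText would provide in a real app.

```typescript
// Simulated token stream standing in for a model's streamed output.
// Hypothetical helper: a real app would receive an async iterable from the SDK.
async function* fakeTextStream(chunks: string[], delayMs = 0): AsyncGenerator<string> {
  for (const chunk of chunks) {
    // Wait between chunks to mimic network latency.
    await new Promise<void>((resolve) => setTimeout(resolve, delayMs));
    yield chunk;
  }
}

// Consume any async iterable of text and report the growing partial result.
async function renderProgressively(
  stream: AsyncIterable<string>,
  onUpdate: (partial: string) => void,
): Promise<string> {
  let text = "";
  for await (const chunk of stream) {
    text += chunk;
    onUpdate(text); // a UI would re-render the message here
  }
  return text;
}
```

Because `renderProgressively` accepts any `AsyncIterable<string>`, the same consumer works whether the chunks come from a test generator or from a live model stream, which is the kind of buffering a hook like useChat takes care of on the client.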

Beyond streaming, the chapter demonstrates portable, multi-provider support through a Language Model Specification that applies an Abstract Factory approach. A centralized selector (e.g., getSupportedModel) validates provider/model availability and keys, enabling easy switching between OpenAI, Google, and others from a simple UI control that passes choices through the hook body. Finally, it extends the chat to multimodal interactions by allowing users to upload images alongside text; the backend reformats the last user message into text and image parts for vision-capable models, while the UI handles file selection and optional preview. With notes on limits and quality considerations for images, the result is a more natural, flexible conversational interface that’s provider-agnostic, stream-enabled, and ready for richer media.
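A centralized selector of this kind might look like the following sketch. Only the name getSupportedModel comes from the text; the registry shape, the environment variable names, and the model identifiers are illustrative assumptions, and the function returns a plain descriptor where a real implementation would return an SDK model instance.

```typescript
// Assumed registry: which env var holds each provider's key and which models are allowed.
type ProviderName = "openai" | "google";

interface ProviderConfig {
  apiKeyEnvVar: string;
  models: readonly string[];
}

const SUPPORTED: Record<ProviderName, ProviderConfig> = {
  openai: { apiKeyEnvVar: "OPENAI_API_KEY", models: ["gpt-4o", "gpt-4o-mini"] },
  google: { apiKeyEnvVar: "GOOGLE_GENERATIVE_AI_API_KEY", models: ["gemini-1.5-flash"] },
};

interface ModelSelection {
  provider: ProviderName;
  model: string;
  apiKey: string;
}

function isProviderName(value: string): value is ProviderName {
  return Object.prototype.hasOwnProperty.call(SUPPORTED, value);
}

// Validate provider, model, and key in one place so route handlers stay provider-agnostic.
function getSupportedModel(
  provider: string,
  model: string,
  env: Record<string, string | undefined>,
): ModelSelection {
  if (!isProviderName(provider)) {
    throw new Error(`Unsupported provider: ${provider}`);
  }
  const config = SUPPORTED[provider];
  if (!config.models.includes(model)) {
    throw new Error(`Unsupported model for ${provider}: ${model}`);
  }
  const apiKey = env[config.apiKeyEnvVar];
  if (!apiKey) {
    throw new Error(`Missing API key: set ${config.apiKeyEnvVar}`);
  }
  return { provider, model, apiKey };
}
```

Passing the environment in as a parameter keeps the selector easy to test; a route handler would typically pass `process.env` along with the provider and model chosen in the UI.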

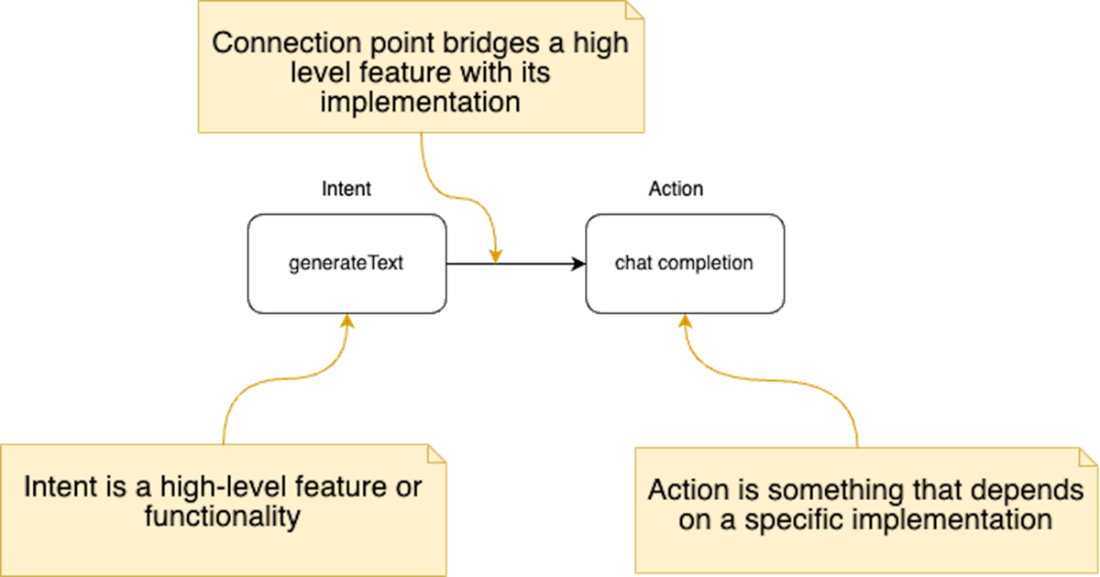

Separation of concerns between intents and actions. The intent (left) represents the high-level feature or functionality, such as "generateText". The action (right) represents the specific implementation or concrete steps to fulfill the intent, such as making a "chatCompletion" request. The arrow signifies the connection point that bridges the intent with its corresponding action, facilitating communication and interaction between the two components.
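The intent/action split in this figure can be sketched as an interface plus one concrete implementation. All names here (`TextGenerator`, `ChatCompletionCall`, `chatCompletionGenerator`, `summarize`) are hypothetical, and the provider call is injected as a function, so nothing below depends on a real API.

```typescript
// Intent: what the app wants, independent of any provider.
interface TextGenerator {
  generateText(prompt: string): Promise<string>;
}

// Shape of a chat-completion style call (assumed for illustration).
type ChatCompletionCall = (
  messages: { role: "user"; content: string }[],
) => Promise<{ content: string }>;

// Action: one concrete way to fulfill the intent, built around an injected call.
function chatCompletionGenerator(callApi: ChatCompletionCall): TextGenerator {
  return {
    async generateText(prompt: string): Promise<string> {
      const reply = await callApi([{ role: "user", content: prompt }]);
      return reply.content;
    },
  };
}

// App code depends only on the intent, so the action can be swapped freely.
async function summarize(generator: TextGenerator, text: string): Promise<string> {
  return generator.generateText(`Summarize: ${text}`);
}
```

Swapping providers then means supplying a different `TextGenerator`, while `summarize` and the rest of the app stay unchanged.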

Speed comparison of gpt-4 models served by OpenAI and by Azure. Output token throughput tops out at a couple of dozen tokens per second. Source: https://artificialanalysis.ai.

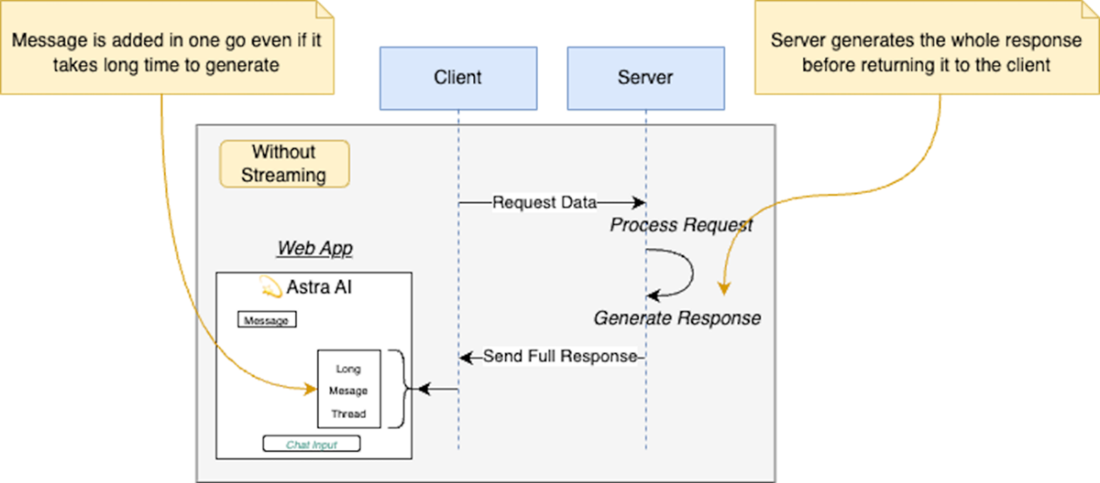

Without streaming, the client sends a request and waits for the server to generate and send the full response before displaying it.

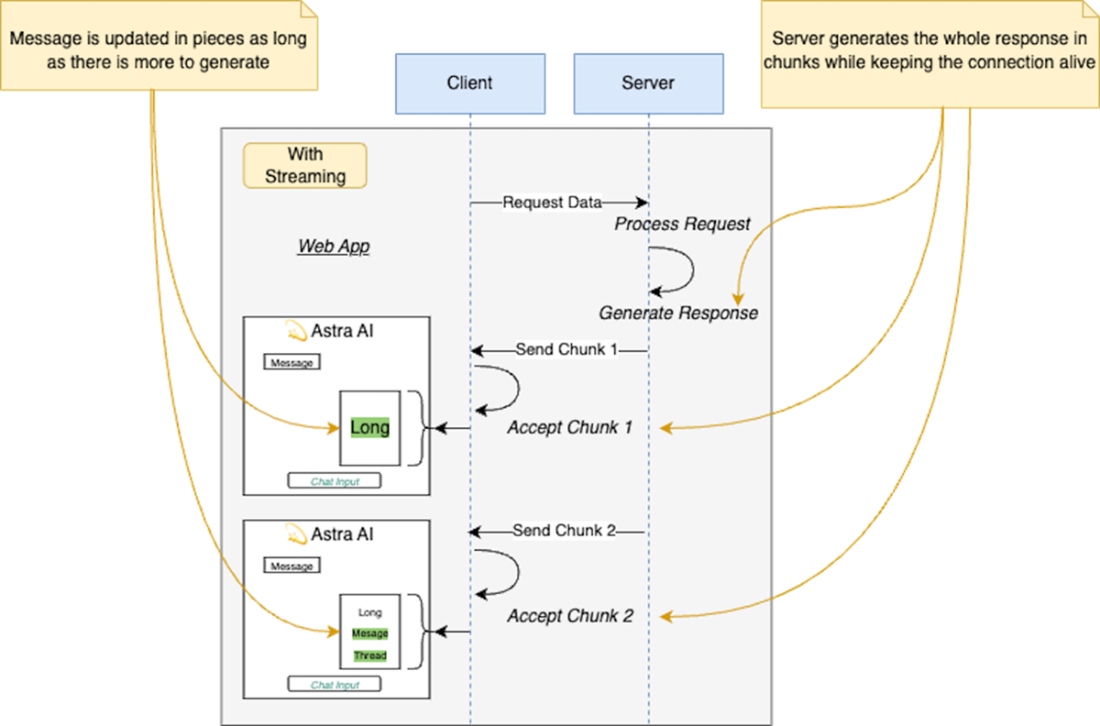

With streaming, the client sends a request and the server streams the response back in small chunks, which the client processes as they arrive.

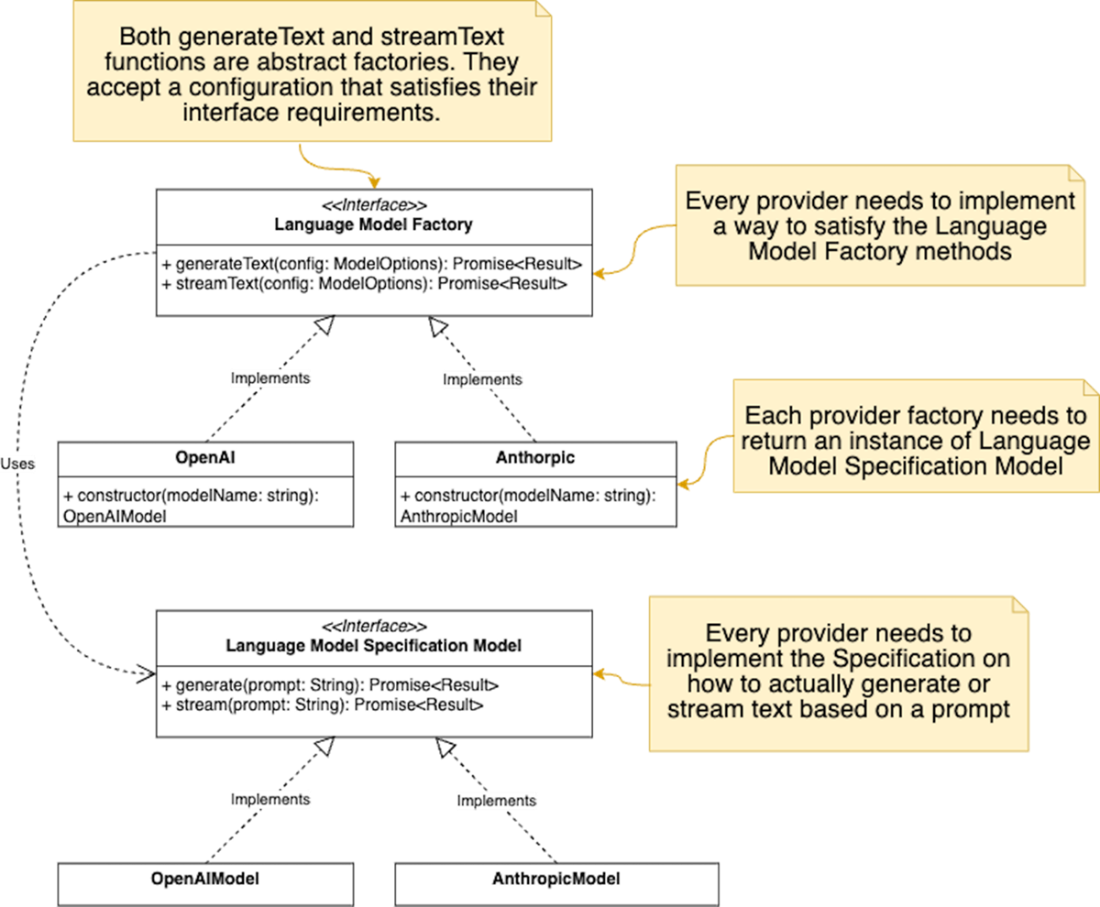

Implementation of the Abstract Factory pattern in the Vercel AI SDK for generating text with different language model providers.

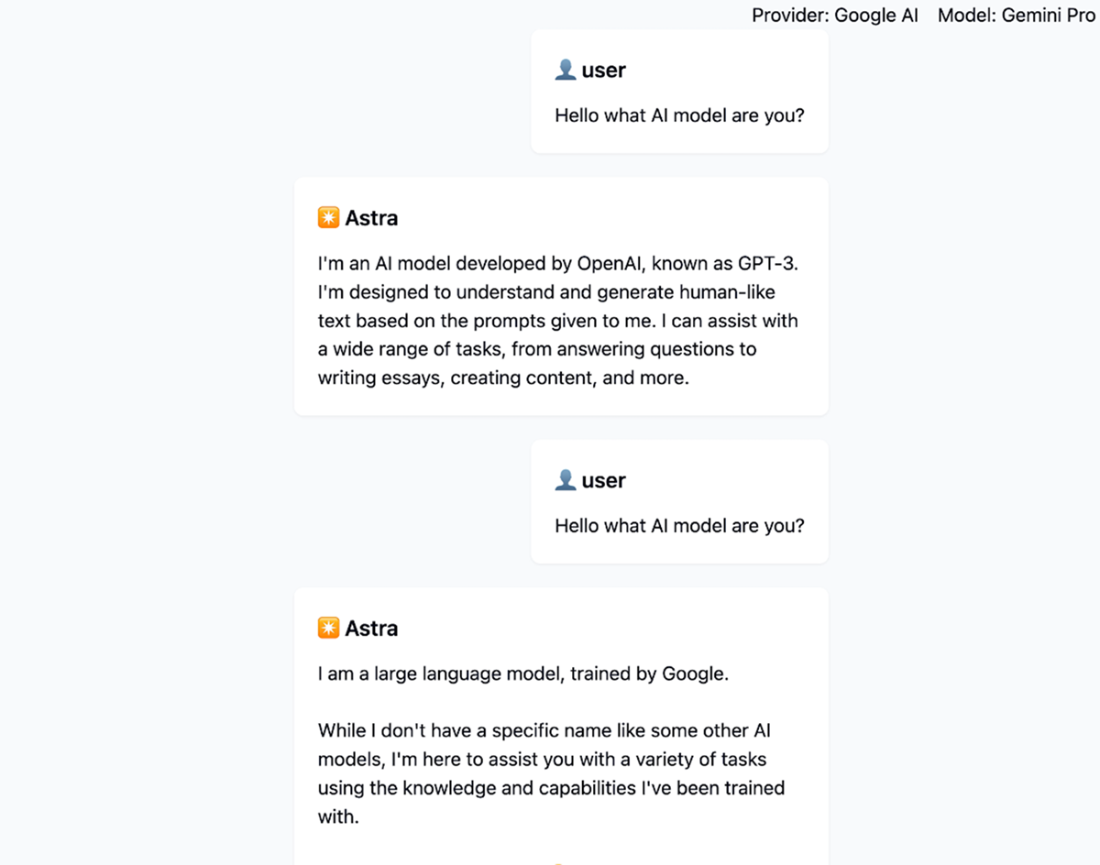

Screenshot of the current application showcasing the usage of multiple providers in the same chat session.

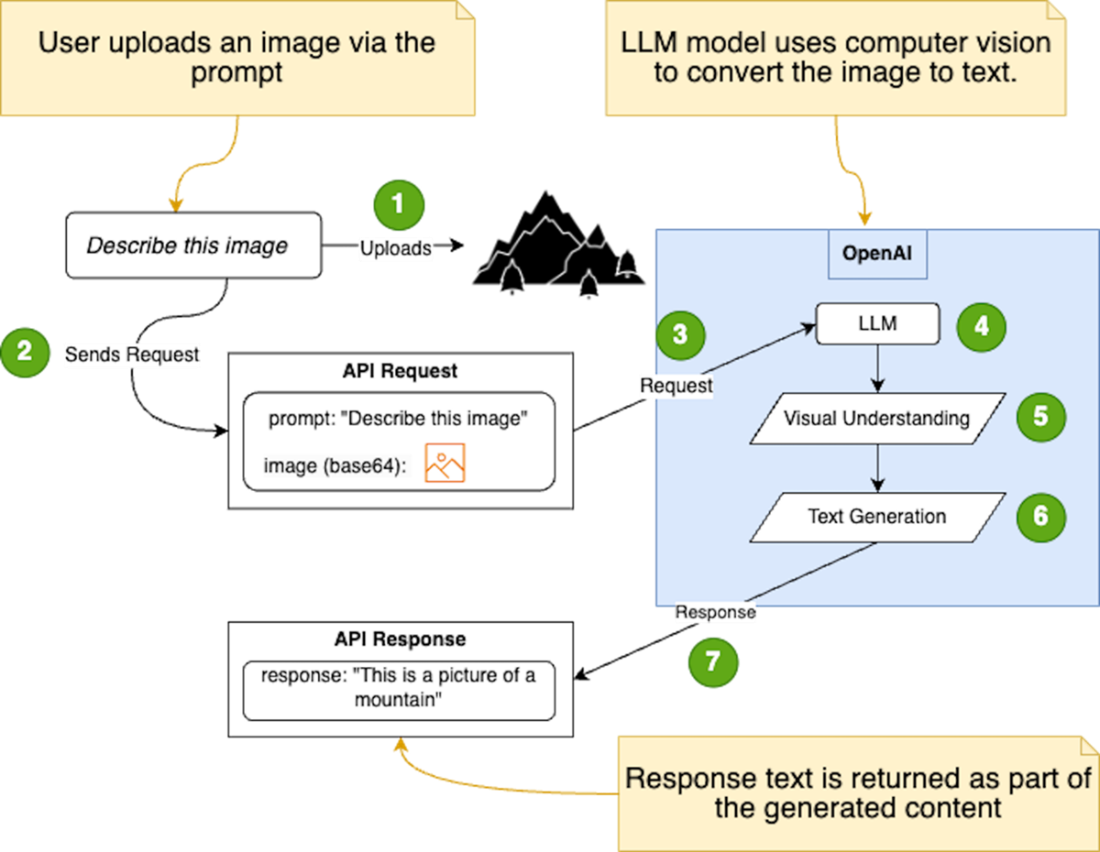

Sending an image alongside a text prompt to the OpenAI API. The language model uses computer vision techniques to interpret the image and generate a relevant text response.

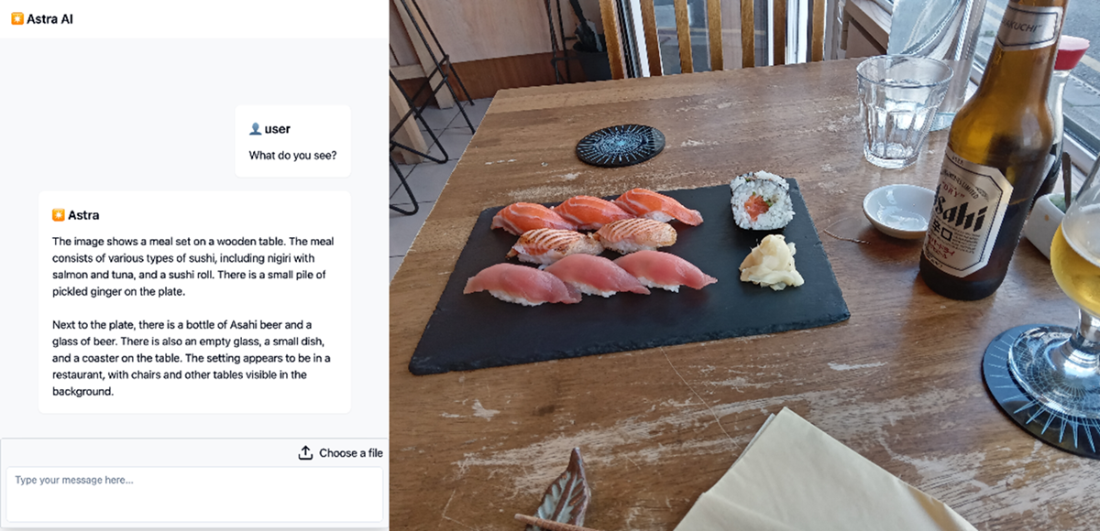

Uploading an image and getting an accurate description from the AI model. This functionality shows how the LLM can generate text descriptions from other media like images.
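The backend step of reformatting the last user message into text and image parts might be sketched as follows. The part shapes mirror the text/image content parts used for multimodal messages, but the local types, the function name `attachImageToLastUserMessage`, and the choice of a data URL string for the image are assumptions made for illustration.

```typescript
// Local stand-ins for multimodal message types (assumed shapes).
type TextPart = { type: "text"; text: string };
type ImagePart = { type: "image"; image: string }; // data URL or base64 string (assumed)

interface ChatMessage {
  role: "user" | "assistant" | "system";
  content: string | Array<TextPart | ImagePart>;
}

// Return a new message list whose last user message carries both text and image.
// Messages are left untouched when there is no image or the last message is not plain user text.
function attachImageToLastUserMessage(
  messages: ChatMessage[],
  imageDataUrl?: string,
): ChatMessage[] {
  const last = messages[messages.length - 1];
  if (!imageDataUrl || !last || last.role !== "user" || typeof last.content !== "string") {
    return messages;
  }
  const withImage: ChatMessage = {
    role: "user",
    content: [
      { type: "text", text: last.content },
      { type: "image", image: imageDataUrl },
    ],
  };
  return [...messages.slice(0, -1), withImage];
}
```

Building a new array rather than mutating the input keeps earlier turns of the conversation intact, so only the most recent user turn is sent to the vision-capable model with the image attached.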

Summary

- The Vercel AI SDK simplifies AI integration into web applications.

- It offers provider abstraction, streaming responses, state management, and support for React Server Components.

- The SDK allows developers to break down complex AI tasks into smaller, more manageable components.

- Guidelines for integrating the SDK include separation of concerns, abstraction layers, incremental integration, testing and validation, and documentation.

- The SDK provides functions like generateText and streamText for text generation.

- React hooks like useChat and useCompletion are available for creating conversational UI and text completion capabilities.

- Implementing streaming responses involves challenges such as asynchronous processing, connection management, data buffering, and error handling.

- The SDK abstracts away many of these low-level details to simplify handling streaming responses in web applications.

- The SDK leverages the Language Model Specification to simplify working with different AI providers and models.

- Integrating the SDK improves functionality and user experience by enabling streaming chat, support for multiple AI providers, and OpenAI’s vision capabilities.

