1 Real-world Decision Making with Reinforcement Learning

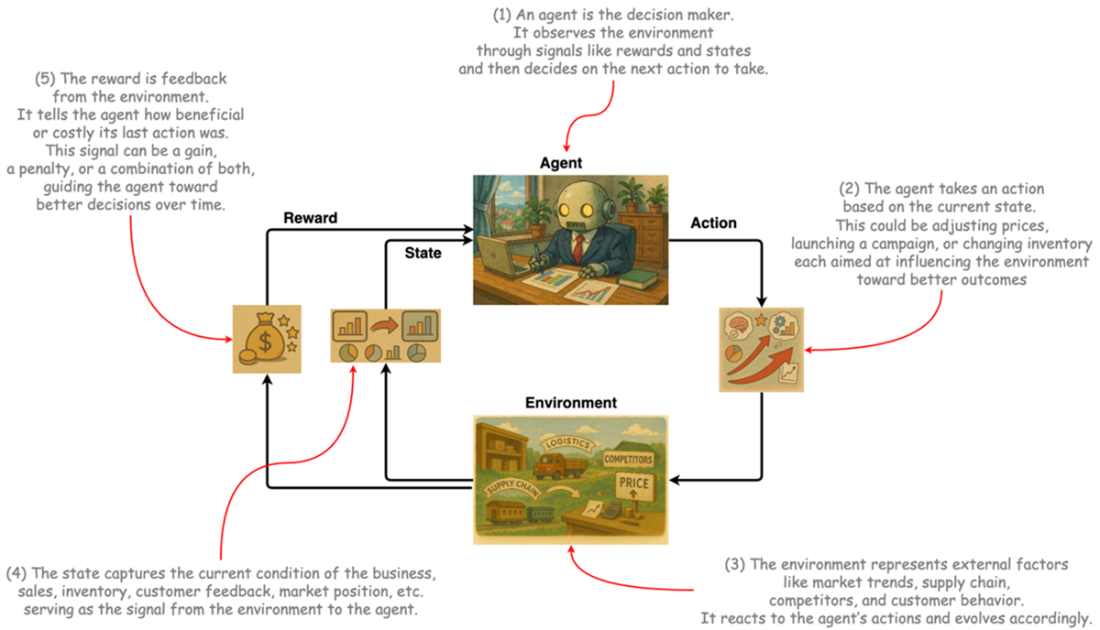

Businesses operate with limited resources in uncertain, competitive environments, so the core managerial task is making sequential decisions that balance present realities with the cumulative impact of past choices. Reinforcement learning (RL) is introduced as a natural fit for this setting: rather than learning labels or patterns, it learns how to act through interaction, optimizing long-term value under uncertainty. Framed alongside broader analytics, the chapter distinguishes questions about external and internal factors across time—what happened, what will happen, why it happened, and what should we do—highlighting that the book’s emphasis is on optimization, where decisions can be quantified and repeatedly improved in practice.

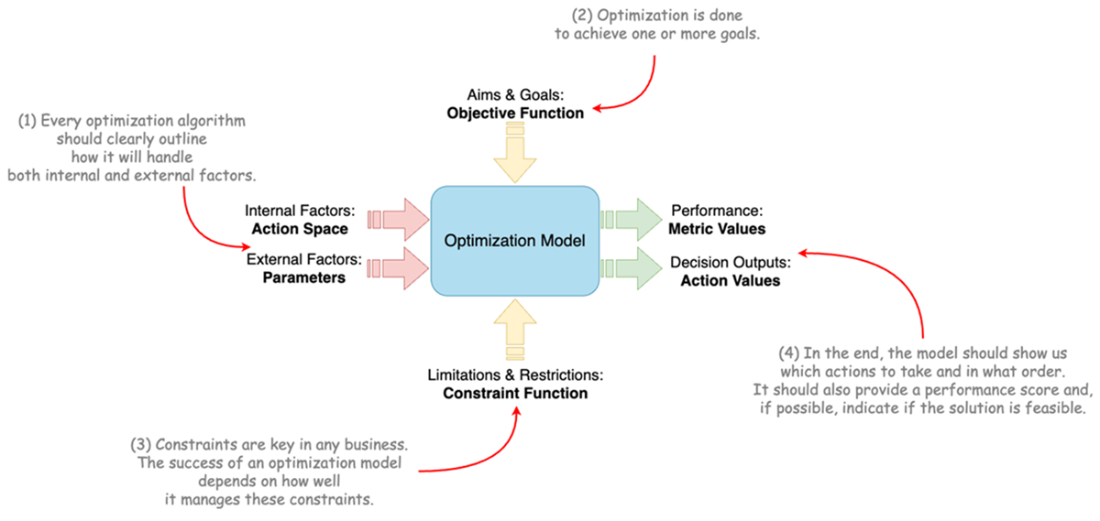

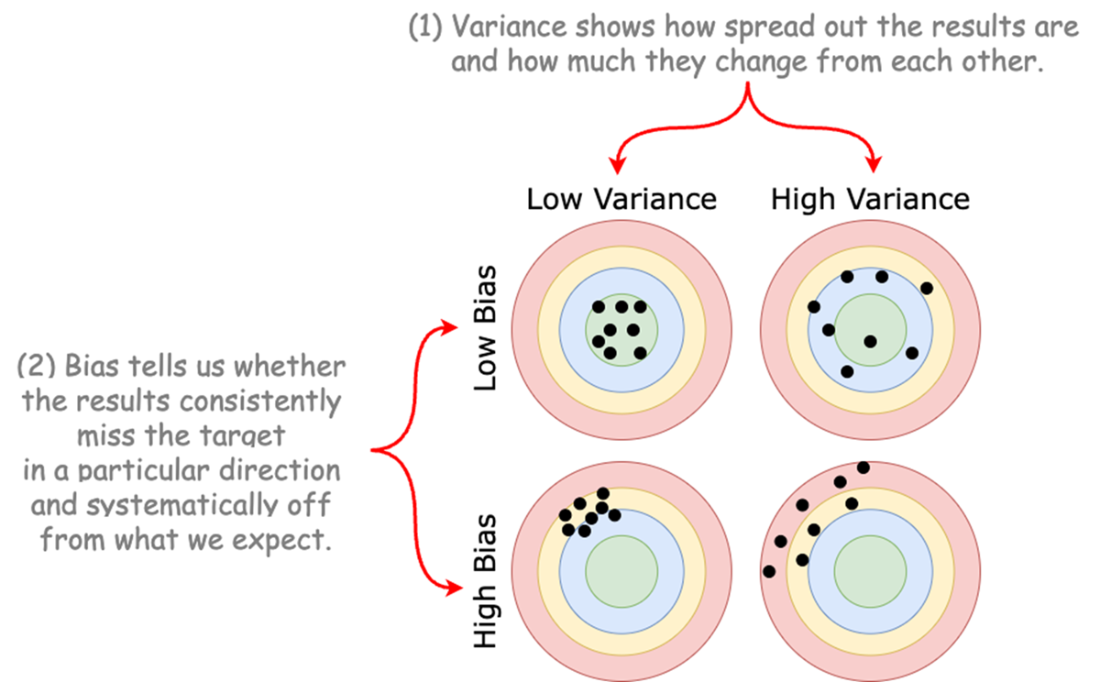

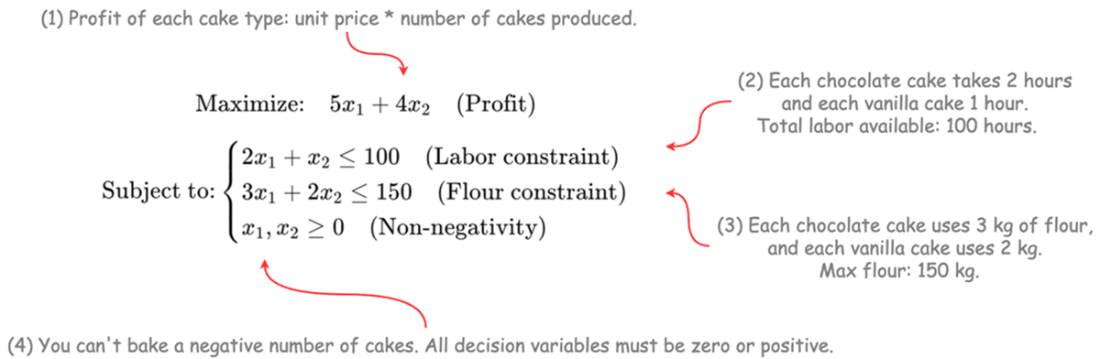

The chapter defines a practical blueprint for business optimization: take external inputs as parameters, choose actions as decision variables, optimize one or more objectives, respect real-world constraints, and produce actionable outputs and performance metrics. It grounds this framework in common, quantifiable, operational problems—inventory replenishment, vehicle routing, production scheduling, workforce shifts, bike-share rebalancing, and dynamic pricing—while underlining the real challenge: handling constraints and managing the bias–variance trade-off across changing conditions. To evaluate solutions, it proposes criteria such as robustness, resilience, real-time responsiveness, adaptability, flexibility, generalizability, customizability, effort to build and operationalize, lifecycle cost, and interpretability. Classical methods—operations research (LP, MIP, NLP), stochastic simulation (including queueing, Monte Carlo, and discrete-event), system dynamics, and game theory—form the foundation for modeling and reasoning about these decisions.

Classical approaches excel when systems are stable and fully specified, but they can falter as markets shift and assumptions break; RL complements them by learning policies from experience, handling delayed rewards, and adapting in real time. Still, RL is not a silver bullet: it demands data or simulators, can be computationally intensive, and raises explainability and operationalization challenges. The chapter concludes that the most effective path is often hybrid—combining structured models and domain knowledge with learning systems that improve through interaction—setting the stage for practical guidance on building simulators, selecting algorithms, and deploying RL to extend, not replace, proven optimization techniques.

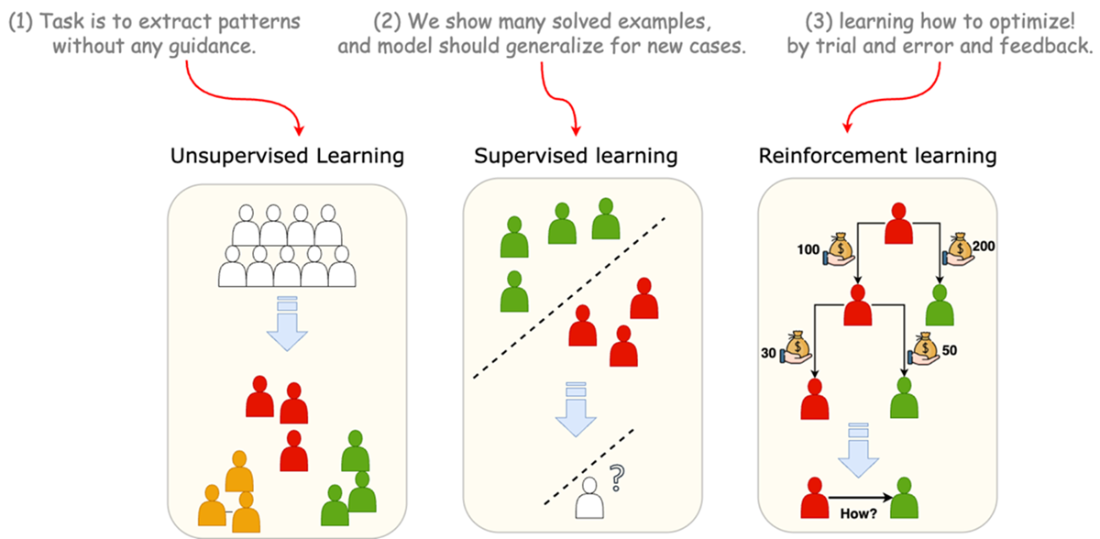

Reinforcement learning in the context of machine learning.

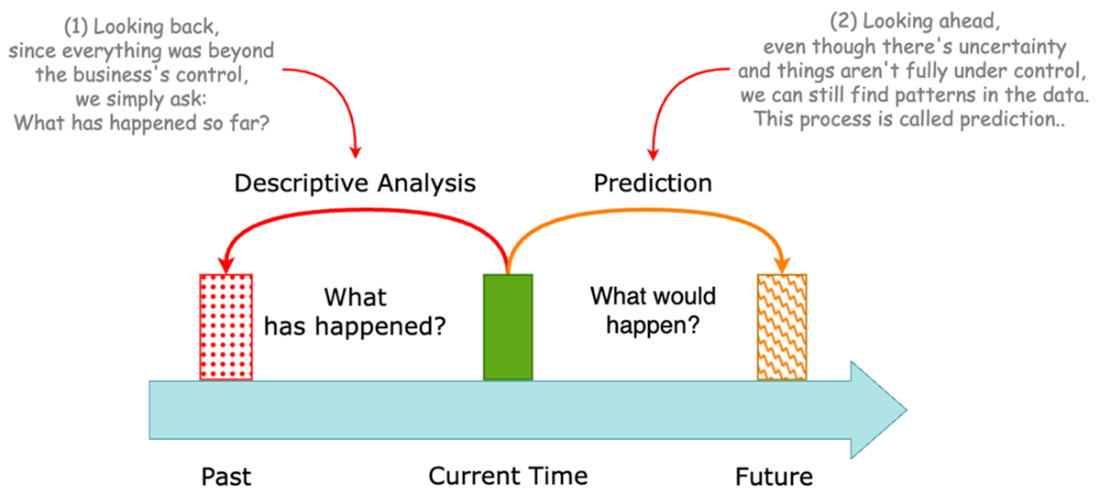

Two types of questions and analytical approaches for analyzing external factors.

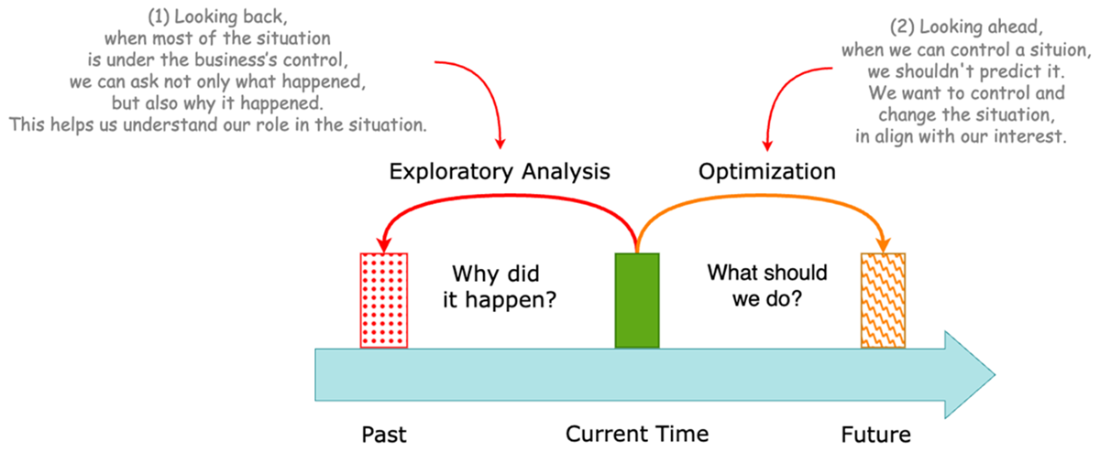

Two types of questions and analytical approaches for analyzing internal factors.

Framework for business optimization models.

Bias-variance trade-off in business optimization models.

Linear programming formulation of bakery shop problem.

Overview of reinforcement learning framework.

Summary

- Businesses must make smart decisions under uncertainty with limited resources.

- Understanding external (uncontrollable) and internal (controllable) factors is key to effective analysis.

- Business analysis types include descriptive, predictive, explanatory, and optimization.

- Optimization focuses on shaping internal factors to improve future outcomes.

- Decisions in business problems vary by level (strategic/tactical/operational), frequency, scale, and measurability.

- Optimization models include inputs (parameters and decision variables), objectives, constraints, and outputs (objective values and decision values).

- A major challenge in optimization is managing the bias-variance trade-off in operational processes.

- Classical models like operations research, simulation, and system dynamics are powerful but often rigid and static.

- Reinforcement learning extends classical models by enabling adaptive, sequential decision-making.

- Reinforcement learning learns through trial-and-error, using feedback to improve policies over time.

- Compared with classical methods, reinforcement learning excels in adaptability, real-time learning, and dynamic environments.

- Reinforcement learning's downsides include training cost, data needs, and limited explainability, though the field is improving rapidly.

- Reinforcement learning is not a replacement but a powerful extension and complement of classical optimization models.

FAQ

What is reinforcement learning and why is it useful for business optimization?

Reinforcement learning (RL) teaches an agent to make sequential decisions through trial and error, optimizing long‑term outcomes via feedback (rewards/penalties). It is well‑suited to business because many decisions unfold over time under uncertainty (pricing, routing, inventory), conditions change, and the “best” action depends on both current context and past choices. RL adapts as it learns, helping decision systems remain effective in dynamic environments.

How does reinforcement learning differ from supervised and unsupervised learning?

- Unsupervised learning finds patterns or clusters without labels.

- Supervised learning maps inputs to known labels to predict outcomes (e.g., churn).

- Reinforcement learning learns how to act: it chooses actions, observes delayed rewards, and solves the credit‑assignment problem to maximize cumulative value over time.
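The trial-and-error loop described above can be illustrated with a minimal sketch of tabular Q-learning. This is not an example from the chapter: the five-state "chain" environment, the reward of 1.0 at the goal, and the values of `ALPHA`, `GAMMA`, and `EPSILON` are all hypothetical choices made for illustration. The only reward arrives at the final step, so earlier moves have to be credited through the discounted update.

```python
import random

N_STATES = 5        # hypothetical chain: states 0..4, state 4 is the goal
ACTIONS = (0, 1)    # 0 = move left, 1 = move right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.3   # illustrative hyperparameters

def step(state, action):
    """Deterministic toy dynamics; reward 1.0 only when the goal is reached."""
    next_state = max(0, state - 1) if action == 0 else state + 1
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done

def choose_action(q, state, rng):
    """Epsilon-greedy selection with random tie-breaking."""
    if rng.random() < EPSILON:
        return rng.choice(ACTIONS)
    best = max(q[state])
    return rng.choice([a for a in ACTIONS if q[state][a] == best])

def train(episodes=500, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            action = choose_action(q, state, rng)
            next_state, reward, done = step(state, action)
            # Q-learning target: immediate reward plus discounted best future value.
            target = reward if done else reward + GAMMA * max(q[next_state])
            q[state][action] += ALPHA * (target - q[state][action])
            state = next_state
    return q

q_table = train()
# Greedy action in each non-terminal state after training.
greedy_policy = [max(ACTIONS, key=lambda a: q_table[s][a]) for s in range(N_STATES - 1)]
print(greedy_policy)
```

In this toy setting the learned greedy policy should prefer moving right in every state, even though only the last move is rewarded directly. Real business problems would need a far richer state, action, and reward design.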

What does “sequential decision‑making under uncertainty” mean in a business context?

Business outcomes today are shaped by yesterday’s choices, while tomorrow’s conditions remain uncertain (competition, demand, supply shocks). Sequential decision‑making under uncertainty means planning and acting across time, updating actions as new information arrives, and managing trade‑offs between short‑term gains and long‑term value, which is exactly the setting RL models.

What types of business analysis map to external vs. internal factors?

- External factors (limited or no control):

• Past: Descriptive analysis (“What happened?”)

• Future: Predictive/forecasting (“What will happen?”)

- Internal factors (some control):

• Past: Explanatory (“Why did it happen?”)

• Future: Optimization (“What should we do?”)

Internal variables can still be influenced by external forces, so it’s common to ask “external‑type” questions about them as well.

When is business optimization the right tool?

Optimization typically fits operational, recurring, multi‑entity, and quantifiable problems (e.g., daily fleet routing). As you move toward strategic, one‑off, qualitative decisions, “optimal” becomes subjective and frameworks (e.g., 5 Forces, 7S, Blue Ocean) are often more appropriate.

What are the core components of a business optimization model?

- Inputs:

• External factors as parameters (e.g., demand forecasts, lead times)

• Decision variables (actions to choose)

- Problem structure:

• Objective(s) to maximize/minimize (often multi‑objective)

• Constraints (resources, policies, SLAs, regulations)

- Outputs:

• Objective value(s) and recommended actions (e.g., routes, schedules) with metrics for evaluation.
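The components listed above can be laid out as a minimal sketch in code. The product-mix problem below is hypothetical (two products, three capacity-limited resources, made-up numbers) and is not taken from the chapter. It is also solved by exhaustive search over a small integer grid rather than by an LP/MIP solver, purely so that parameters, decision variables, objective, constraints, and outputs are each visible.

```python
from itertools import product

# Parameters (external inputs). All numbers are hypothetical.
profit = {"A": 3, "B": 5}                               # profit per unit
capacity = {"line_1": 4, "line_2": 12, "line_3": 18}    # available hours
usage = {                                               # hours per unit
    "line_1": {"A": 1, "B": 0},
    "line_2": {"A": 0, "B": 2},
    "line_3": {"A": 3, "B": 2},
}
MAX_UNITS = 10   # assumed upper bound on each decision variable

def objective(plan):
    """Objective to maximize: total profit of a production plan."""
    return sum(profit[p] * units for p, units in plan.items())

def is_feasible(plan):
    """Constraints: no resource may be used beyond its capacity."""
    return all(
        sum(usage[r][p] * units for p, units in plan.items()) <= capacity[r]
        for r in capacity
    )

def solve():
    """Enumerate integer plans (the decision variables); keep the best feasible one."""
    best_plan, best_value = None, float("-inf")
    for a, b in product(range(MAX_UNITS + 1), repeat=2):
        plan = {"A": a, "B": b}
        if is_feasible(plan) and objective(plan) > best_value:
            best_plan, best_value = plan, objective(plan)
    return best_plan, best_value

# Outputs: recommended decision values and the objective value.
best_plan, best_value = solve()
print(best_plan, best_value)
```

Enumeration only works for tiny problems like this one. Realistic models would hand the same structure to a dedicated solver.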

What real‑world problems exemplify business optimization in the chapter?

- Inventory replenishment (minimize total cost while meeting service levels)

- Vehicle routing (minimize distance/time/fuel across customers and fleets)

- Production scheduling (sequence/quantities to meet demand and capacity)

- Workforce shift scheduling (coverage, labor cost, fairness/legal rules)

- Bike‑sharing rebalancing (reduce network imbalance with routing cost)

- Dynamic pricing for perishables (maximize revenue over time windows)

What practical challenges and evaluation criteria should we consider?

Models face a bias‑variance trade‑off and must be judged on: robustness, resilience, real‑time responsiveness, adaptability, flexibility, generalizability, customizability, effort to build and operationalize, lifecycle cost, and interpretability. Ignoring these leads to brittle, slow, costly, or untrusted systems.

Which classical methods does the chapter cover, and where do they shine?

- Operations Research (LP/MIP/NLP + solvers): precise optimization with explicit objectives/constraints.

- Stochastic Simulation (queues, Monte Carlo, discrete‑event): explore performance under uncertainty and variability.

- System Dynamics: long‑horizon feedback loops, stocks/flows, strategic policy effects.

- Game Theory: multi‑agent strategic interaction, equilibria when competitors’ actions matter.
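As a rough illustration of the stochastic simulation entry above, the sketch below uses Monte Carlo sampling to compare a few order quantities for a single-period inventory decision. The setup is hypothetical and not from the chapter: demand is assumed uniform on the integers 50 to 150, and the unit price, unit cost, and candidate quantities are invented for the example.

```python
import random

UNIT_PRICE, UNIT_COST = 5.0, 3.0     # hypothetical economics
DEMAND_LOW, DEMAND_HIGH = 50, 150    # assumed uniform integer demand range

def simulate_demand(n_samples, seed=0):
    """Draw random demand scenarios from the assumed distribution."""
    rng = random.Random(seed)
    return [rng.randint(DEMAND_LOW, DEMAND_HIGH) for _ in range(n_samples)]

def average_profit(order_quantity, demands):
    """Monte Carlo estimate of expected profit for one order quantity."""
    total = 0.0
    for demand in demands:
        units_sold = min(order_quantity, demand)   # unsold stock is lost
        total += UNIT_PRICE * units_sold - UNIT_COST * order_quantity
    return total / len(demands)

# Evaluate every candidate on the same scenarios (common random numbers).
demands = simulate_demand(20_000)
candidates = [60, 90, 120, 150]
estimates = {q: average_profit(q, demands) for q in candidates}
best_quantity = max(estimates, key=estimates.get)

for q in candidates:
    print(q, round(estimates[q], 1))
print("best candidate:", best_quantity)
```

Reusing the same demand scenarios for every candidate keeps the comparison fair, since differences in estimated profit then come from the decision rather than from sampling noise.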
