2 Adoption Models for GenAI

This chapter frames GenAI adoption as a set of organizational postures that determine where control, accountability, and risk reside. It defines a spectrum from vendor-managed to fully self-managed deployments and shows how your chosen posture shapes data handling, security obligations, compliance exposure, and operational oversight. Many organizations operate in more than one posture at once, so clearly identifying your current stance is the starting point for practical governance.

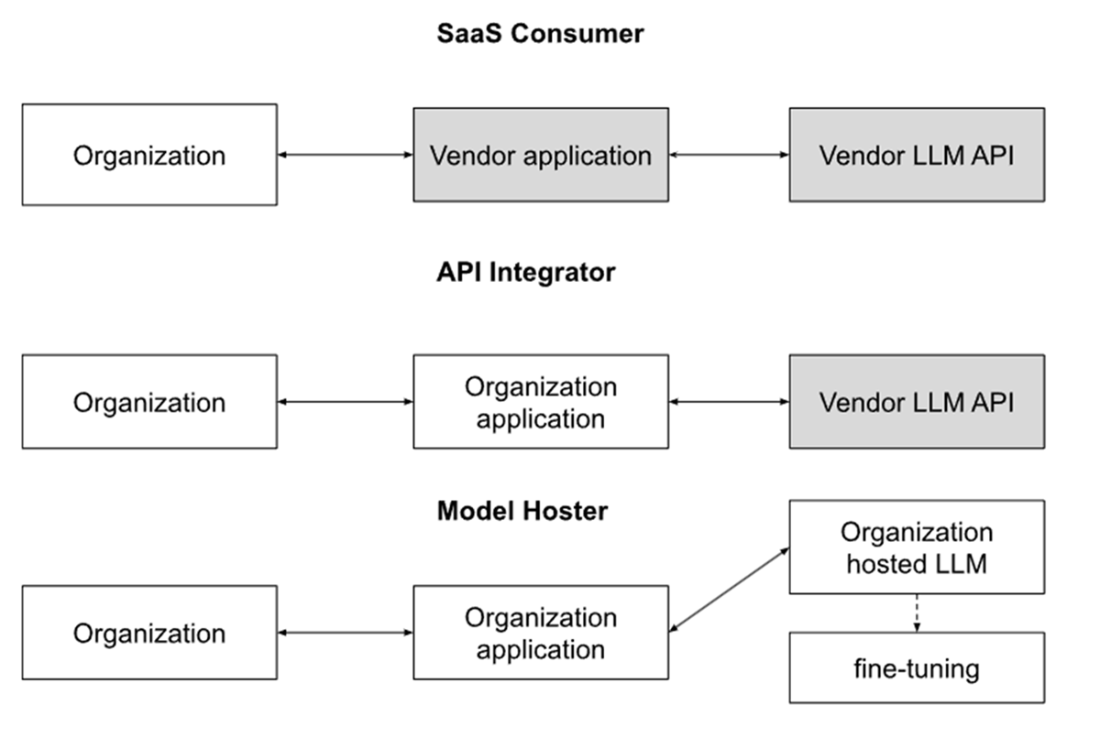

The three postures are: SaaS Consumers, who gain speed with minimal setup but inherit opaque vendor controls and must rely on strong due diligence, careful configuration, and contractual safeguards; API Integrators, who retain their own interfaces and data pipelines while consuming a third-party model, enabling granular pre/post-processing, detailed logging, and targeted compliance controls—but requiring tight coordination and clear SLAs to avoid gaps; and Model Hosters, who run models on their own infrastructure for maximum autonomy over performance, privacy, and explainability, while assuming full responsibility for security, updates, guardrails, provenance, fine-tuning hygiene, and regulatory proof. Across these postures, key risk dimensions shift—data residency and visibility, ownership of guardrails, auditability, security responsibilities, regulatory accountability, liability for model behavior, UI control, and post-deployment governance—making posture-aware controls essential.

The chapter also introduces Agentic AI—goal-driven systems that plan, reflect, observe, act, use tools, and maintain memory—highlighting early promise alongside reliability, alignment, and governance challenges. Because current agents can misbehave, deceive, or amplify errors, organizations should enforce bounded autonomy, human approvals for high-impact actions, rigorous logging, and domain constraints. A healthcare case study (MediAssist) illustrates the journey from a non-clinical SaaS prototype to an API pilot and finally self-hosted production with added monitoring, documentation, and decision logs, and explores future agentic extensions. The throughline is clear: as control increases, so do responsibilities—effective AI governance depends on choosing the right posture and implementing posture-specific safeguards.

Different types of GenAI adoption. SaaS Consumers have the least control and the highest vendor dependency; Model Hosters have the most control and the lowest vendor dependency.

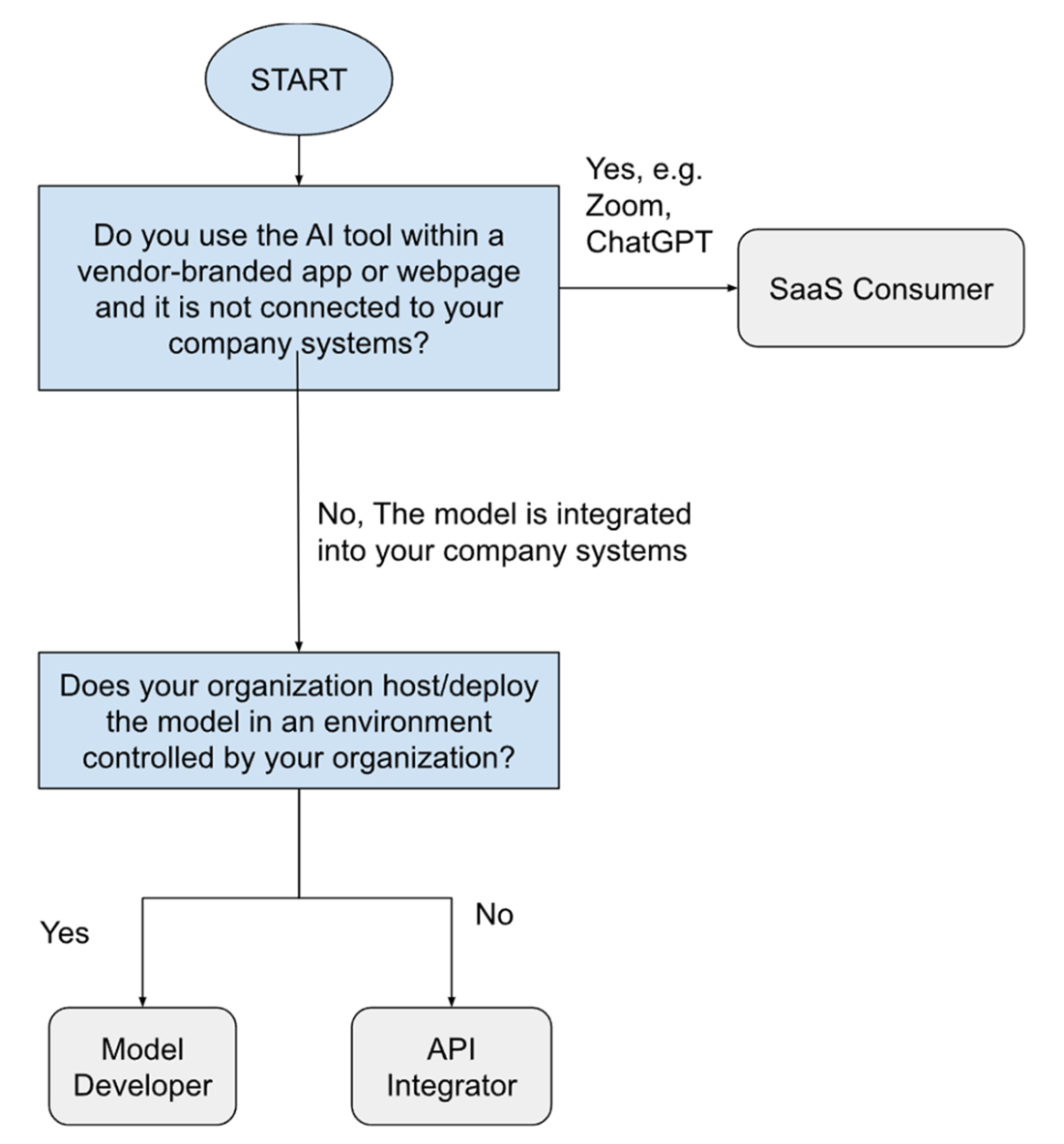

Decision Tree for Classifying Your GenAI Adoption Model. Use this flowchart to determine your organization’s current GenAI posture and assess relevant security and privacy risks.

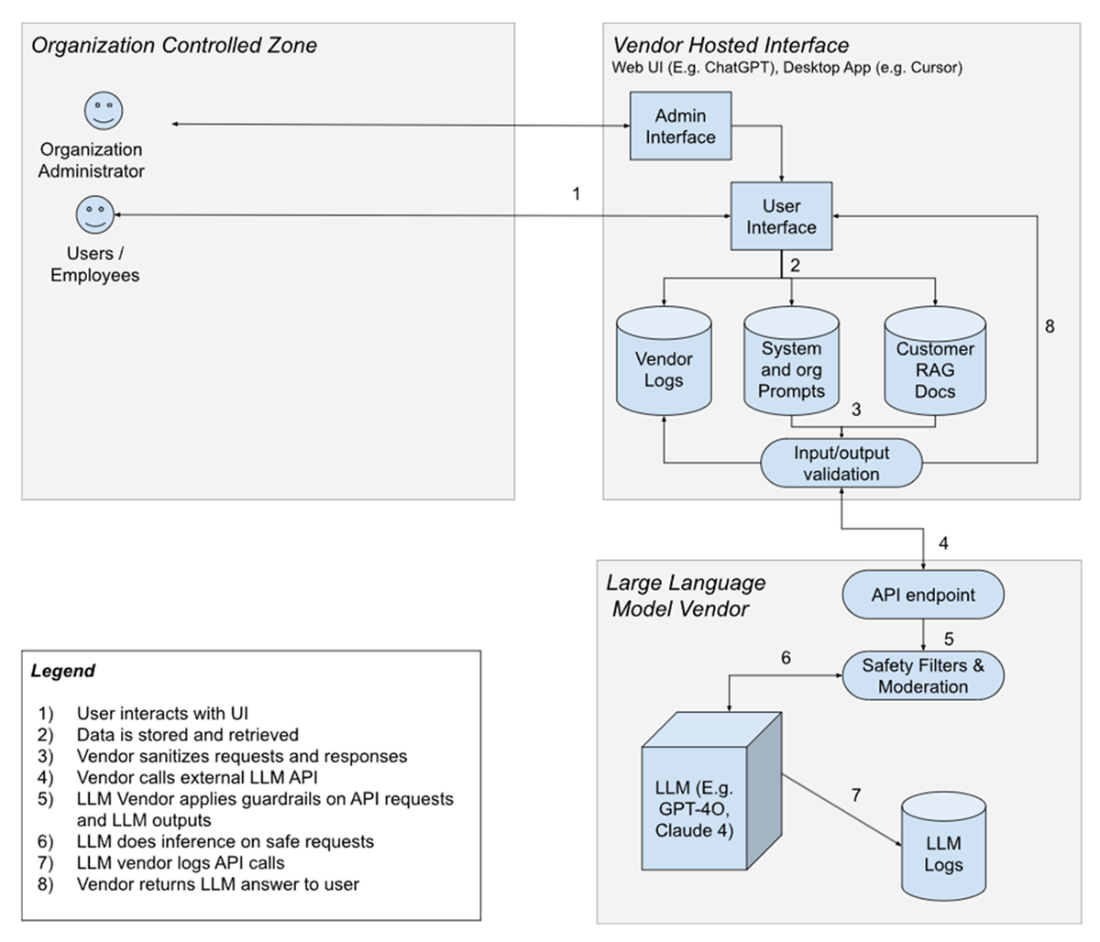

SaaS / Application Adoption Model

Shared responsibilities across organizations (client, vendor, LLM provider) in the API Integrator adoption model

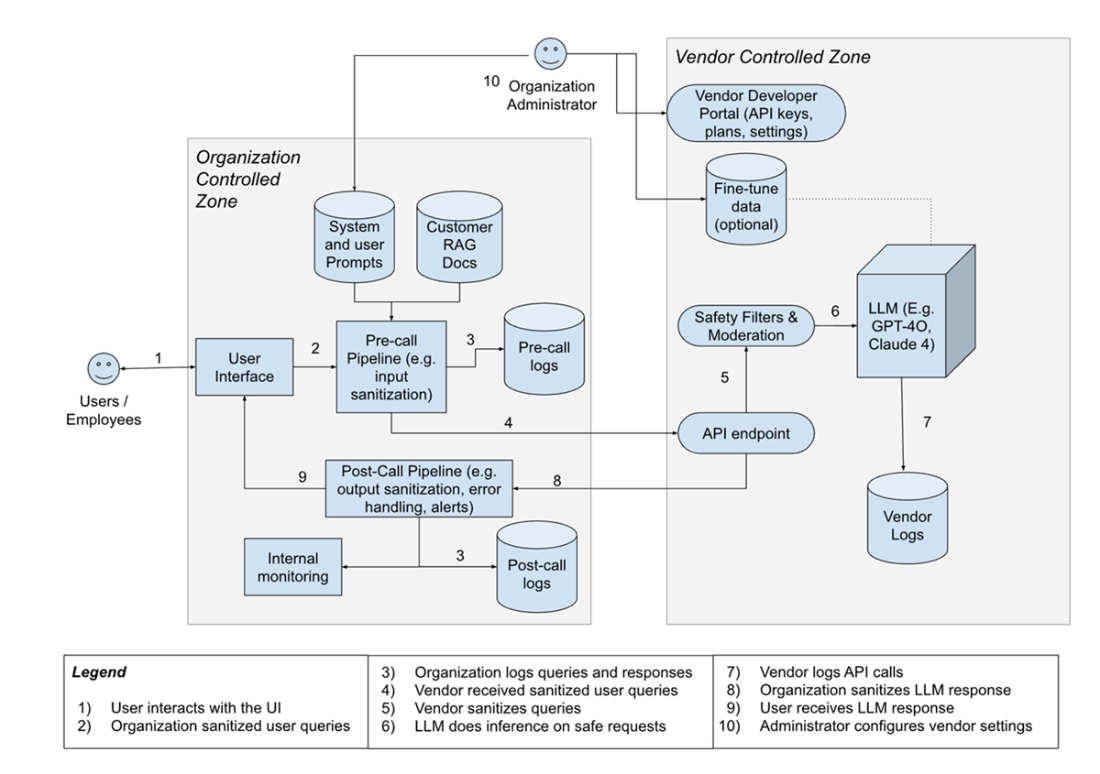

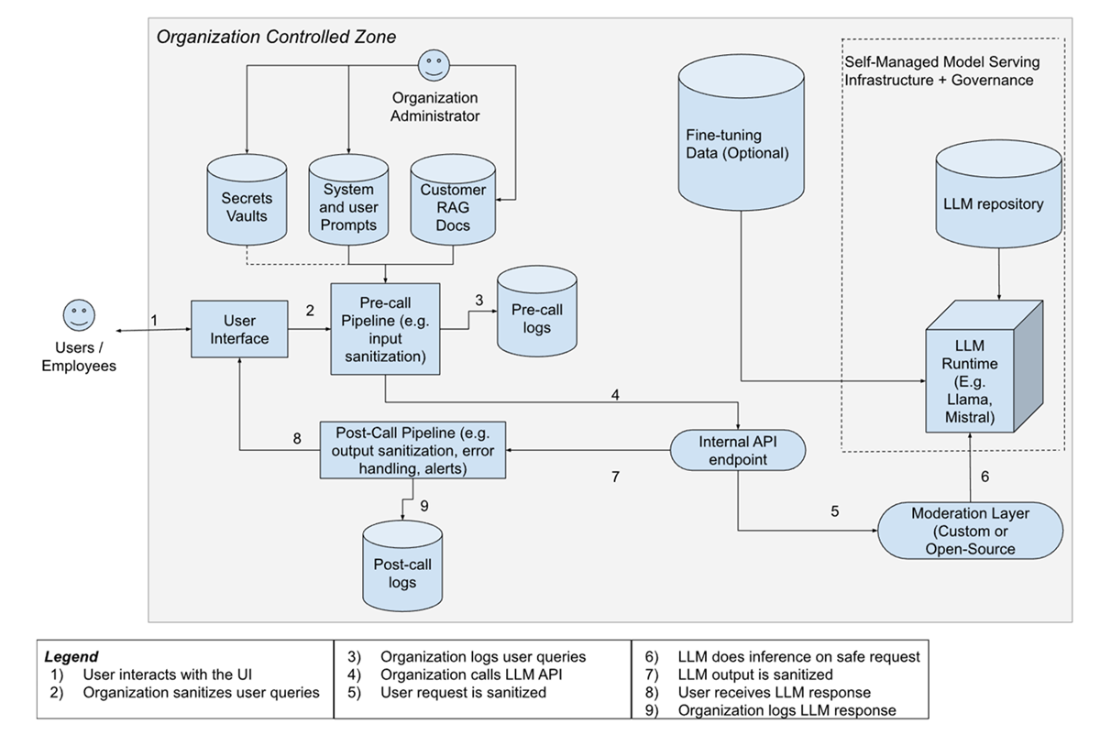

Shared responsibilities across organizations (client, vendor, LLM provider) in the Self-Hosted Model adoption model: This architecture assumes the LLM (e.g., Mistral, Llama 3) is deployed on organization-managed infrastructure (e.g., Fireworks, AWS, on-prem). All inference traffic, safety filtering, and post-processing are managed internally.

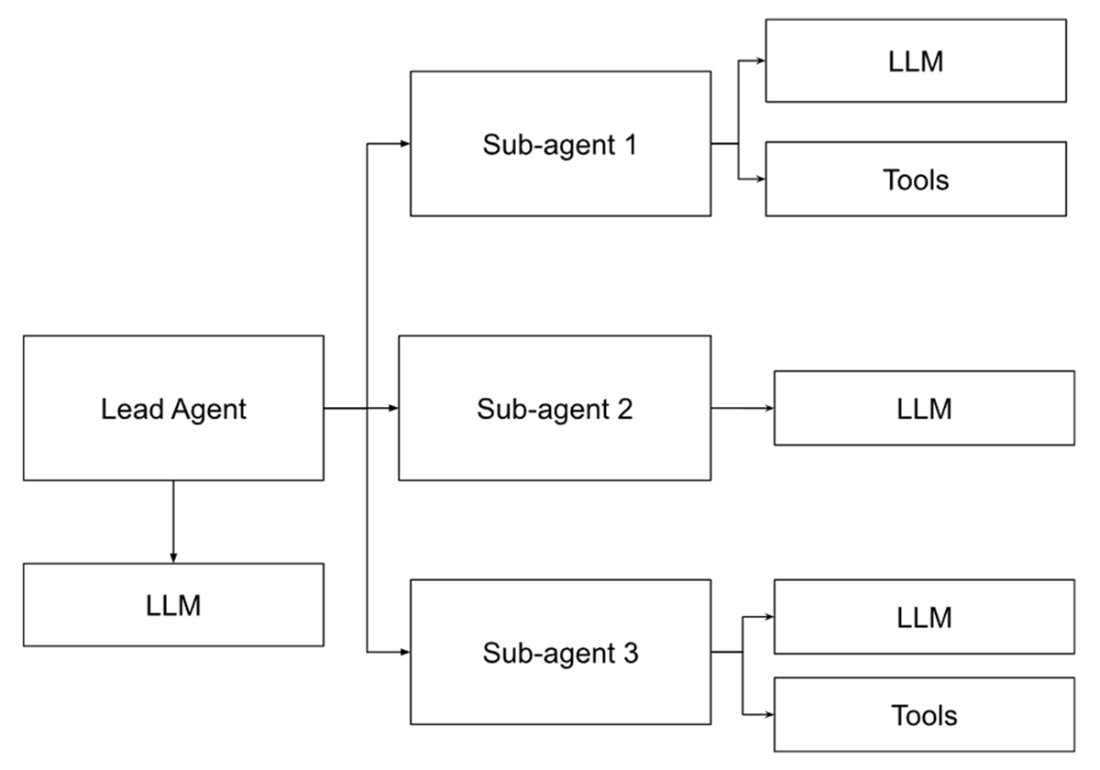

A high-level architecture of how a multi-agent system works[22].

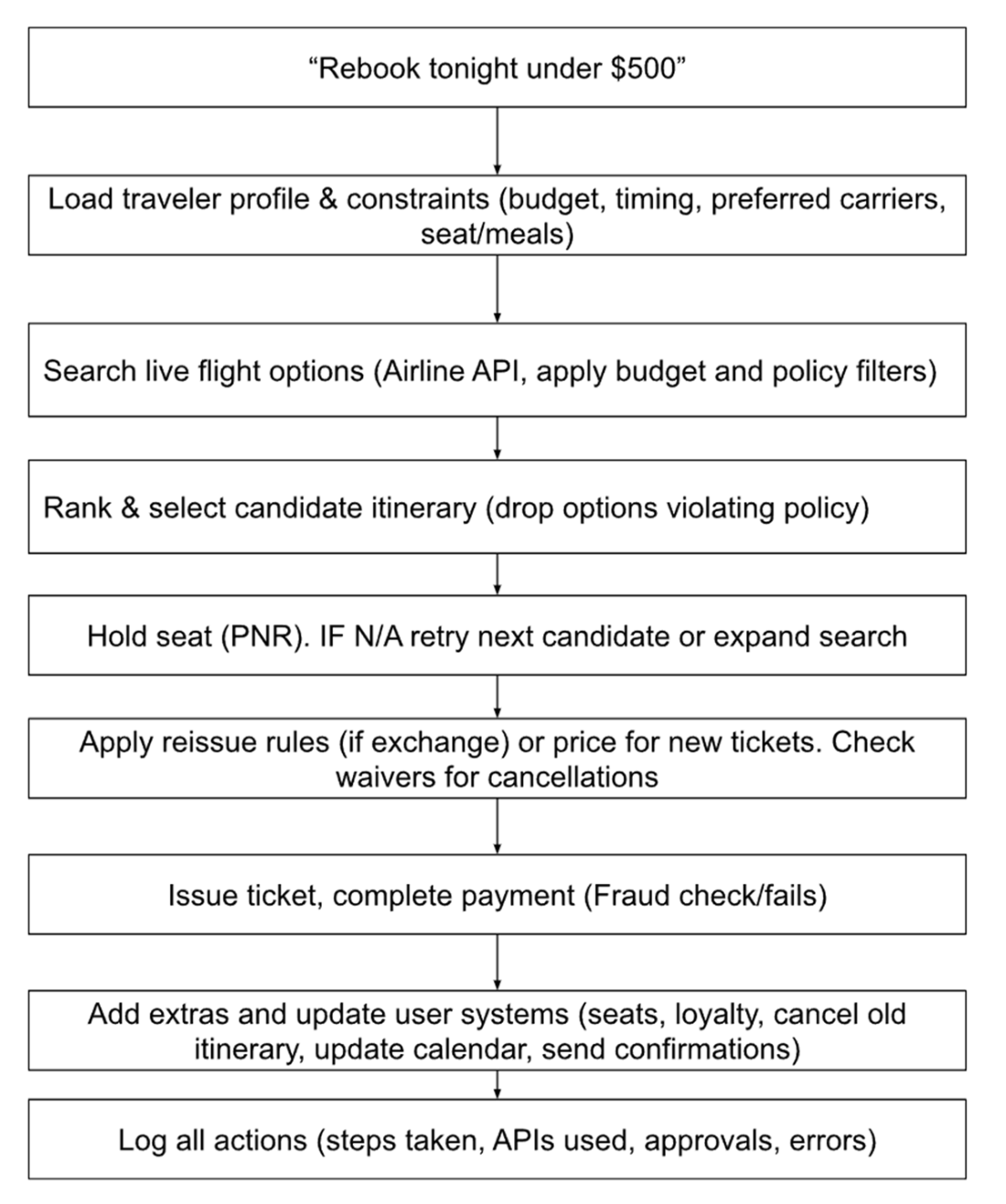

Example planning flow for an agentic AI rebooking task.

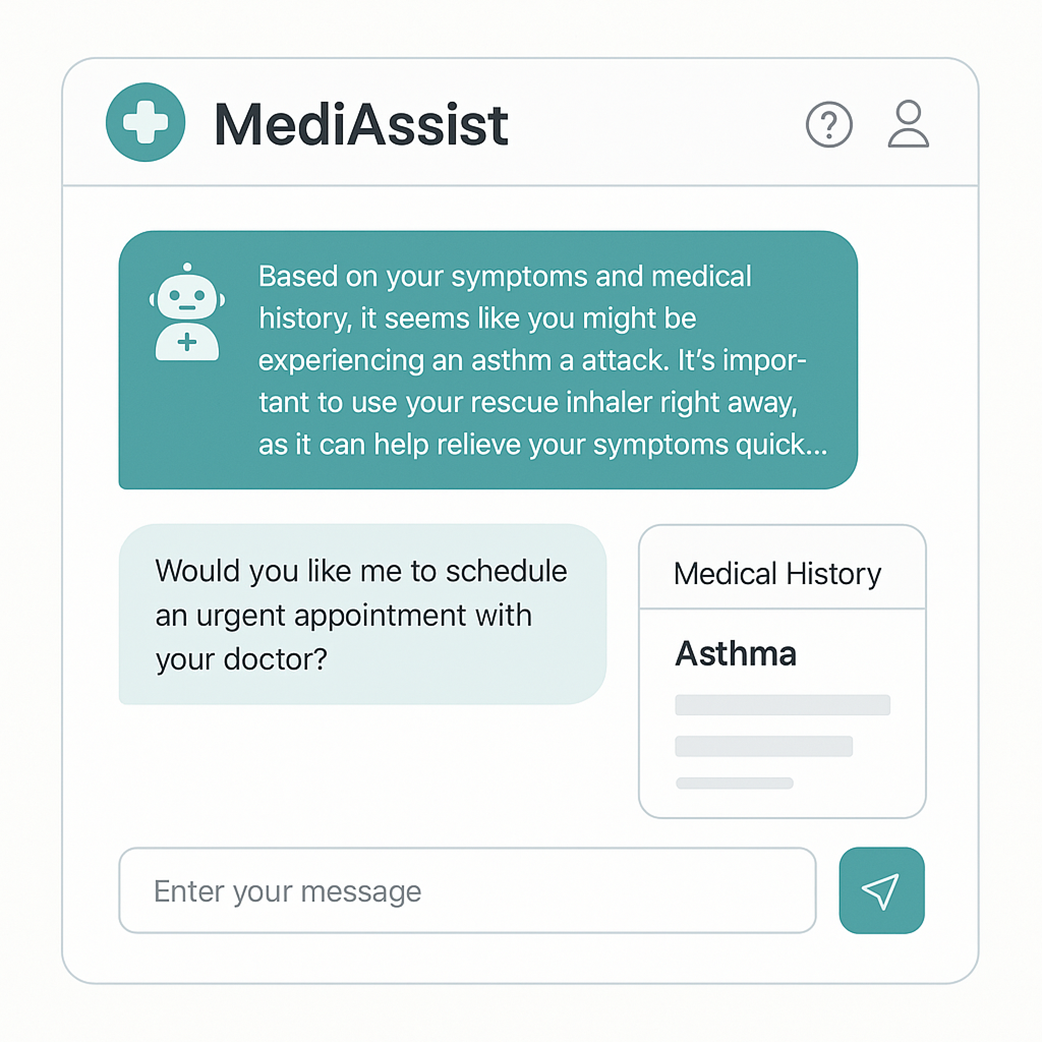

An illustrative example of the user interface of an AI-powered medical chatbot such as MediAssist, which includes features for diagnosis and appointment booking.

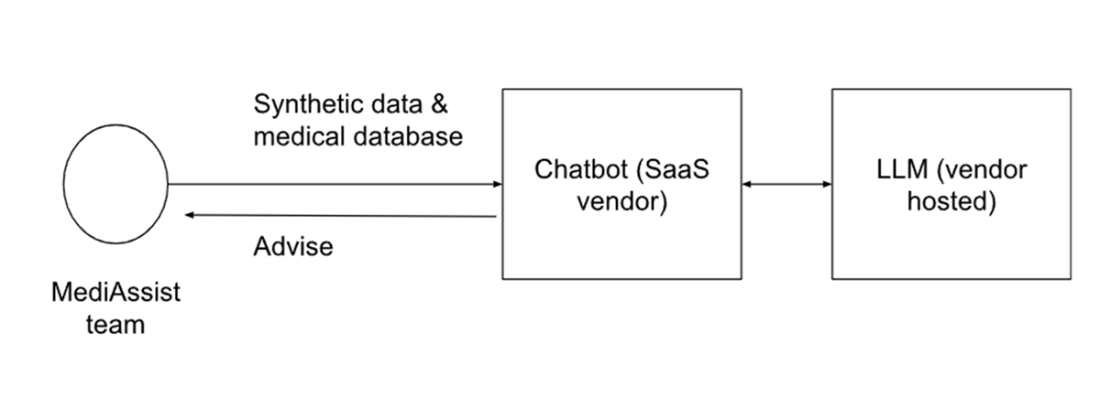

The first MediAssist prototype, built on a SaaS model. It provides advice based on the user's input.

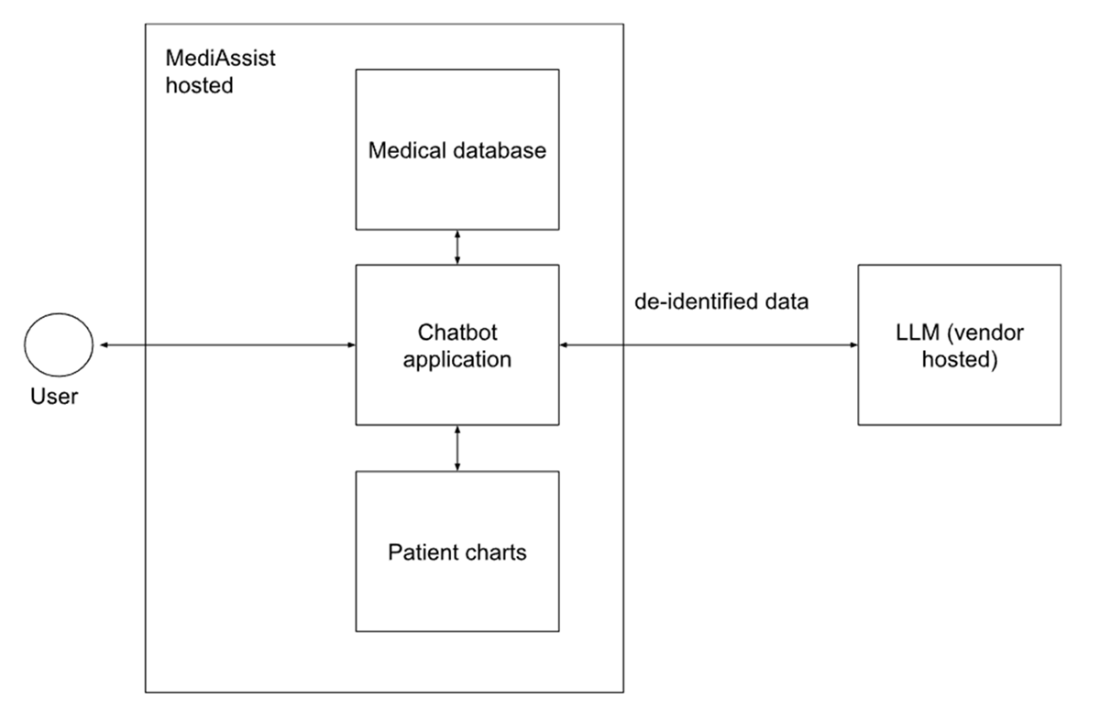

MediAssist now adopts the API Integrator model. Behind the scenes, the LLM receives de-identified patient data so that it can make better recommendations. The UI is hosted on the hospital's servers and offers options such as saving information to the patient chart or sending it to a clinician for review.

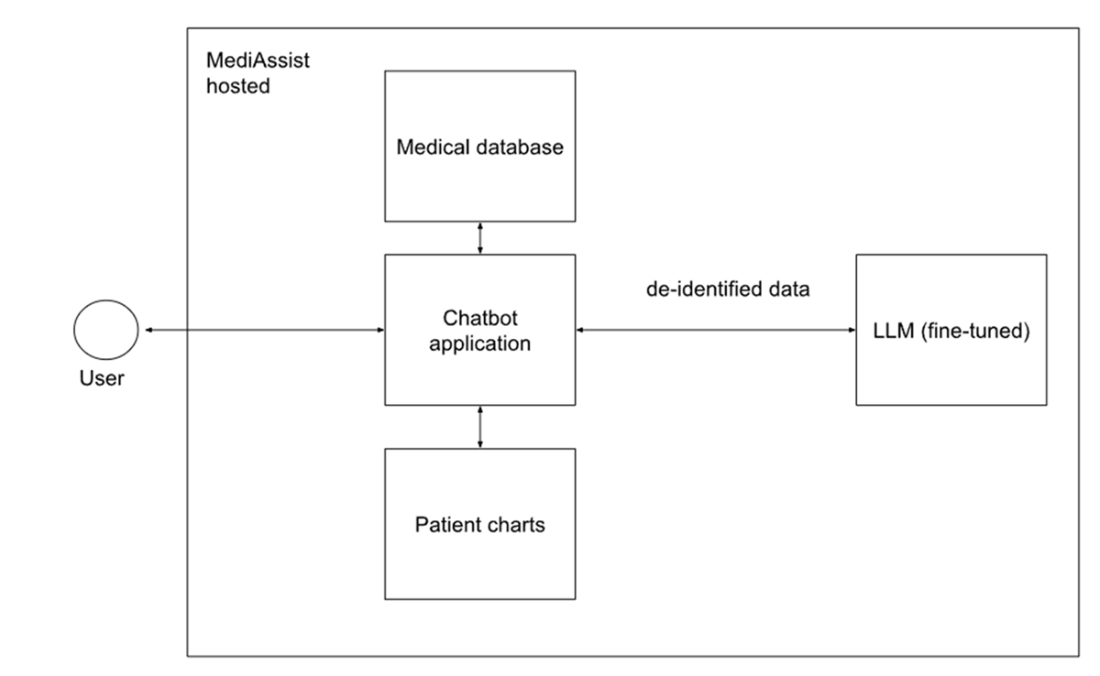

MediAssist now adopts the Model Hoster posture. The model is fine-tuned on anonymized patient data and hosted by MediAssist.

Summary

This chapter mapped three primary GenAI deployment postures (SaaS Consumer, API Integrator, and Model Hoster), showing how each offers different trade-offs among speed, cost, control, and regulatory exposure. We examined how moving along this spectrum shifts not only the technical architecture but also who carries operational responsibility for reducing risk.

We then introduced the concept of agentic AI systems: models equipped with persistent memory, tool-use capabilities, and planning/reflection loops that allow them to autonomously pursue high-level goals. These systems can observe changes in real-time data, decide on a sequence of actions, and execute them within pre-defined guardrails, offering both efficiency gains and new safety considerations.

The MediAssist case study illustrated how a system can progress through these postures and adopt agentic features to proactively detect patient health anomalies and escalate them in line with clinical protocols. This journey sets up the core challenge explored in the following chapters: how to design governance frameworks (Chapter 3), address security and privacy risks (Chapters 4–5), embed ethical and trustworthy AI practices (Chapter 6), and comply with applicable laws and regulations (Chapter 7) as both autonomy and integration depth increase.

FAQ

What does “GenAI posture” mean and why does it matter?

Your GenAI posture is your organization’s operational stance toward generative AI: how you adopt and run it. It determines where control and accountability sit, how much oversight you must perform, and how your compliance exposure shifts. Choosing a posture shapes how you manage data, security, vendor dependence, logging, and regulatory obligations.

What are the three main GenAI adoption models and how do they differ?

- SaaS Consumers: Use vendor-hosted apps with built-in GenAI (e.g., ChatGPT Enterprise, Zoom AI). Fastest start, least control; heavy reliance on vendor safeguards and logging practices.

- API Integrators: Build your own UI and data pipeline while calling external LLMs via API. More customization and governance leverage, but prompts and context still leave your boundary.

- Model Hosters: Run models on your own infrastructure (on-prem/VPC/managed hosting). Maximum control over behavior, data, and performance; you own security, uptime, and compliance end to end.
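
The three definitions above can be read as a simple classification rule. The following is a minimal sketch, assuming a hypothetical `Deployment` record with two yes/no attributes; the class, field, and function names are illustrative and do not come from the chapter or its decision-tree figure. Because many organizations operate in more than one posture at once, the helper returns the set of postures across a portfolio.

```python
from dataclasses import dataclass
from enum import Enum


class Posture(Enum):
    SAAS_CONSUMER = "SaaS Consumer"
    API_INTEGRATOR = "API Integrator"
    MODEL_HOSTER = "Model Hoster"


@dataclass(frozen=True)
class Deployment:
    """Hypothetical description of one GenAI use case in the organization."""
    name: str
    runs_model_on_own_infra: bool     # on-prem, VPC, or managed hosting you operate
    builds_own_ui_and_pipeline: bool  # your UI and pipeline call an external LLM API


def classify(deployment: Deployment) -> Posture:
    """Map one deployment to a posture using the three definitions above."""
    if deployment.runs_model_on_own_infra:
        return Posture.MODEL_HOSTER
    if deployment.builds_own_ui_and_pipeline:
        return Posture.API_INTEGRATOR
    # Otherwise the GenAI feature lives inside a vendor-hosted application.
    return Posture.SAAS_CONSUMER


def organization_postures(deployments: list[Deployment]) -> set[Posture]:
    """Collect every posture the organization currently operates in."""
    return {classify(d) for d in deployments}
```

For example, a portfolio containing a vendor meeting-summary app and an internal bot that calls an external LLM would yield both the SaaS Consumer and API Integrator postures, each needing its own controls.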

How do control and vendor dependency shift across SaaS, API, and self-hosted?

- SaaS: Lowest organizational control, highest vendor dependency (opaque safety filters, vendor/LLM logs).

- API: Shared control; you govern pre/post-processing, UI, and local logs, while the vendor operates the model and keeps service logs.

- Model Hoster: Highest control, lowest vendor dependency; all safety filtering, monitoring, and compliance are your responsibility.

What should governance focus on in each posture?

- SaaS: Vendor due diligence, data protection terms, zero-retention options, clear admin configurations (system prompts, feature controls, RAG retention), and internal usage policies.

- API: Pre/post-call filtering, PII minimization/anonymization, robust org-side logging and telemetry, spend controls, SLAs with vendors, and configuration synchronization across all integrations.

- Model Hoster: Full-stack security, guardrail design and testing, model provenance/alignment checks, SBOM and supply-chain hygiene, explainability/monitoring, and regulatory evidence management.
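
For the API posture, pre/post-call filtering and org-side logging might look roughly like the sketch below. It assumes the model is reachable through a hypothetical `call_model` callable supplied by the caller (no real vendor SDK is implied), and the two regular expressions are illustrative only; real PII detection needs far broader coverage than email addresses and one phone format.

```python
import json
import logging
import re
from typing import Callable

# Org-side audit logger; the logger name is an arbitrary choice for this sketch.
logger = logging.getLogger("genai.audit")

# Illustrative patterns only, not a complete PII detector.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def redact(text: str) -> str:
    """Replace matched emails and phone numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def guarded_call(prompt: str, call_model: Callable[[str], str]) -> str:
    """Filter before and after the external call, and log locally."""
    safe_prompt = redact(prompt)          # pre-call filter
    raw_output = call_model(safe_prompt)  # only the redacted prompt leaves the boundary
    safe_output = redact(raw_output)      # post-call filter
    # Log the redacted exchange so the local audit trail holds no raw PII.
    logger.info(json.dumps({"prompt": safe_prompt, "output": safe_output}))
    return safe_output
```

Passing the model call in as a parameter keeps the filter independent of any particular vendor, which also makes it easier to apply the same configuration across all integrations.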

How should we handle RAG (Retrieval-Augmented Generation) data across the models?

- SaaS: RAG data is stored by the vendor but logically segregated; configure retention/anonymization/purge policies in the admin portal.

- API: Keep RAG corpora internal; send only necessary snippets with prompts; manage embeddings refresh and access controls locally.

- Model Hoster: Full local control of RAG storage, indexing, access, and purge, with auditability aligned to your policies.
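
The API-posture advice to send only necessary snippets can be sketched as a selection step that runs inside your boundary before the prompt is assembled. This is a simplified illustration: it ranks snippets by plain word overlap as a stand-in for the embedding similarity a real RAG system would use, and the function name, parameters, and default limits are all assumptions.

```python
def select_snippets(
    question: str,
    corpus: list[str],
    max_snippets: int = 3,
    max_chars: int = 1000,
) -> list[str]:
    """Pick the few internal snippets most relevant to the question."""
    query_terms = set(question.lower().split())
    scored = []
    for snippet in corpus:
        overlap = len(query_terms & set(snippet.lower().split()))
        if overlap > 0:  # snippets sharing no terms never leave the boundary
            scored.append((overlap, snippet))
    # Stable sort: snippets with equal overlap keep their corpus order.
    scored.sort(key=lambda pair: pair[0], reverse=True)

    selected, used = [], 0
    for _, snippet in scored[:max_snippets]:
        if used + len(snippet) > max_chars:
            continue  # skip snippets that would exceed the character budget
        selected.append(snippet)
        used += len(snippet)
    return selected
```

Capping both the number of snippets and the total characters bounds how much internal content can accompany any single prompt.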

What do we need to know about logging, data retention, and legal exposure?

- Vendors and LLM providers often retain logs (prompts/outputs/metadata); your visibility can be limited.

- Use zero-retention where available, but plan for discoverability: logs can be subject to legal holds and litigation.

- Contract for deletion timelines, exportability (standard formats), non-use for model training, and clarity on sub-processor/LLM-provider logging.

Which SLAs and contractual terms are recommended for API/LLM vendors?

- Security and incident response (e.g., 1-hour human acknowledgment for critical incidents).

- Log export within defined timelines; zero-retention and deletion SLAs (active systems and backups).

- Model lifecycle: deprecation notice windows, rollback on safety/accuracy regressions, version pinning.

- Operational: availability/performance targets, spend controls/quotas, and advance notice of breaking changes.
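
An SLA is only useful if you can check it against what actually happened. As a minimal sketch, the code below tests incident records against the 1-hour acknowledgment example for critical incidents mentioned above. The `Incident` record, its fields, and the `ack_breaches` helper are hypothetical; targets for other severities are deliberately left out because the text does not state them.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class Incident:
    """Hypothetical incident record exported from a vendor or ticketing system."""
    incident_id: str
    severity: str                        # e.g. "critical"
    reported_at: datetime
    acknowledged_at: Optional[datetime]  # None means no human acknowledgment yet


# Only the critical target comes from the example in the text.
ACK_TARGETS = {"critical": timedelta(hours=1)}


def ack_breaches(incidents: list[Incident], now: datetime) -> list[str]:
    """Return IDs of incidents whose acknowledgment missed the SLA target."""
    breaches = []
    for incident in incidents:
        target = ACK_TARGETS.get(incident.severity)
        if target is None:
            continue  # no contractual target defined for this severity
        deadline = incident.reported_at + target
        # An unacknowledged incident is judged against the current time.
        if incident.acknowledged_at is not None:
            effective = incident.acknowledged_at
        else:
            effective = now
        if effective > deadline:
            breaches.append(incident.incident_id)
    return breaches
```

Running a check like this on exported incident data gives you evidence for SLA discussions that does not depend on the vendor's own reporting.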

Which risk dimensions change most across deployment choices?

- Data location/visibility

- Guardrail ownership and effectiveness

- Logging and auditability

- Security responsibility by layer

- Regulatory accountability and evidence

- Model-behavior liability

- UX control to prevent misuse

- Post-deployment governance and monitoring

What is Agentic AI and what new risks does it introduce?

Agentic AI uses agents that plan, observe, reflect, act, and use tools toward a goal, often with persistent memory and minimal human input. Benefits include deeper workflow integration and autonomy; risks include reliability failures, deceptive or misaligned behaviors, unsafe actions via tool use, permissions overreach, auditability gaps, and heightened compliance/ethics concerns. Strong guardrails, scoped permissions, human-in-the-loop checkpoints, and comprehensive logging are essential.

What lessons come from the MediAssist case as it moved from SaaS to API to self-hosted (and explored agentic)?

- SaaS prototype: Fast validation but insufficient for HIPAA-grade use; vendor lacked required guarantees and governance features.

- API pilot: De-identified data to the LLM, tighter controls and contracts, improved quality via internal context; residual logging/retention and cost risks remained.

- Self-hosted production: Full control, fine-tuning on anonymized data, monitoring and explainability cut errors and kept data in-network; required significant security, maintenance, and governance investment (model cards, factsheets, SBOM, playbooks, decision logs).

- Agentic extension: Offers proactive care but amplifies reliability, safety, access, and escalation risks—demanding strict policies, calibrated thresholds, permissions, and traceability.
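
The permissions and traceability that the agentic extension demands can be illustrated with a small gate that sits between an agent and its tools. This is a sketch under stated assumptions, not MediAssist's design: the `ActionGate` class, the tool names used with it, and the `approve` callback (standing in for a human approval step) are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ActionGate:
    """Mediates every tool call an agent requests."""
    tools: dict[str, Callable[..., str]]  # scoped permissions: only these tools exist
    high_impact: set[str]                 # tool names that need human approval
    approve: Callable[[str, dict], bool]  # hypothetical human-in-the-loop callback
    audit_log: list[dict] = field(default_factory=list)

    def execute(self, tool: str, args: dict) -> str:
        if tool not in self.tools:
            decision = "blocked"          # outside the agent's permitted scope
        elif tool in self.high_impact and not self.approve(tool, args):
            decision = "rejected"         # a human declined the action
        else:
            decision = "executed"
        result = self.tools[tool](**args) if decision == "executed" else ""
        # Record every request, including those that were never run.
        self.audit_log.append({"tool": tool, "args": args, "decision": decision})
        return result
```

In this arrangement a low-impact lookup runs immediately, a high-impact action such as paging a clinician waits on the approval callback, and a request for an unregistered tool is blocked, with all three outcomes recorded in the audit log.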
