3 Actions with Model Context Protocol for AI agents

This chapter introduces the Model Context Protocol (MCP) as a unifying “USB‑C for agents and LLMs” that standardizes how AI systems discover and use tools, data, and other agents. Built on JSON‑RPC 2.0, MCP addresses fragmented tool integrations, inconsistent data access, brittle multi‑agent orchestration, and uneven security controls. By turning external capabilities into discoverable, interchangeable components, MCP shifts agent development from custom glue code to composing reusable parts, enabling richer research workflows and letting developers both consume existing servers and publish their own agent capabilities as servers for reuse.

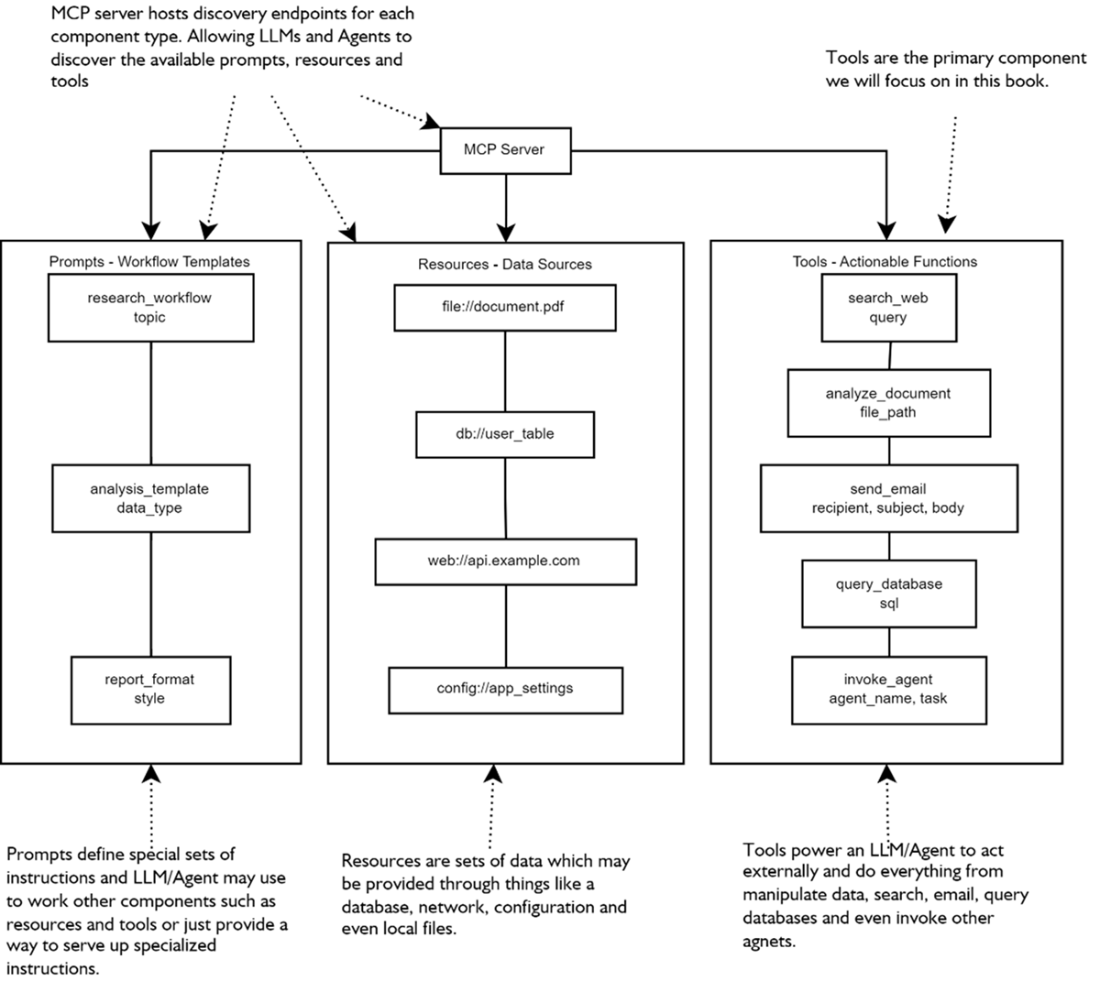

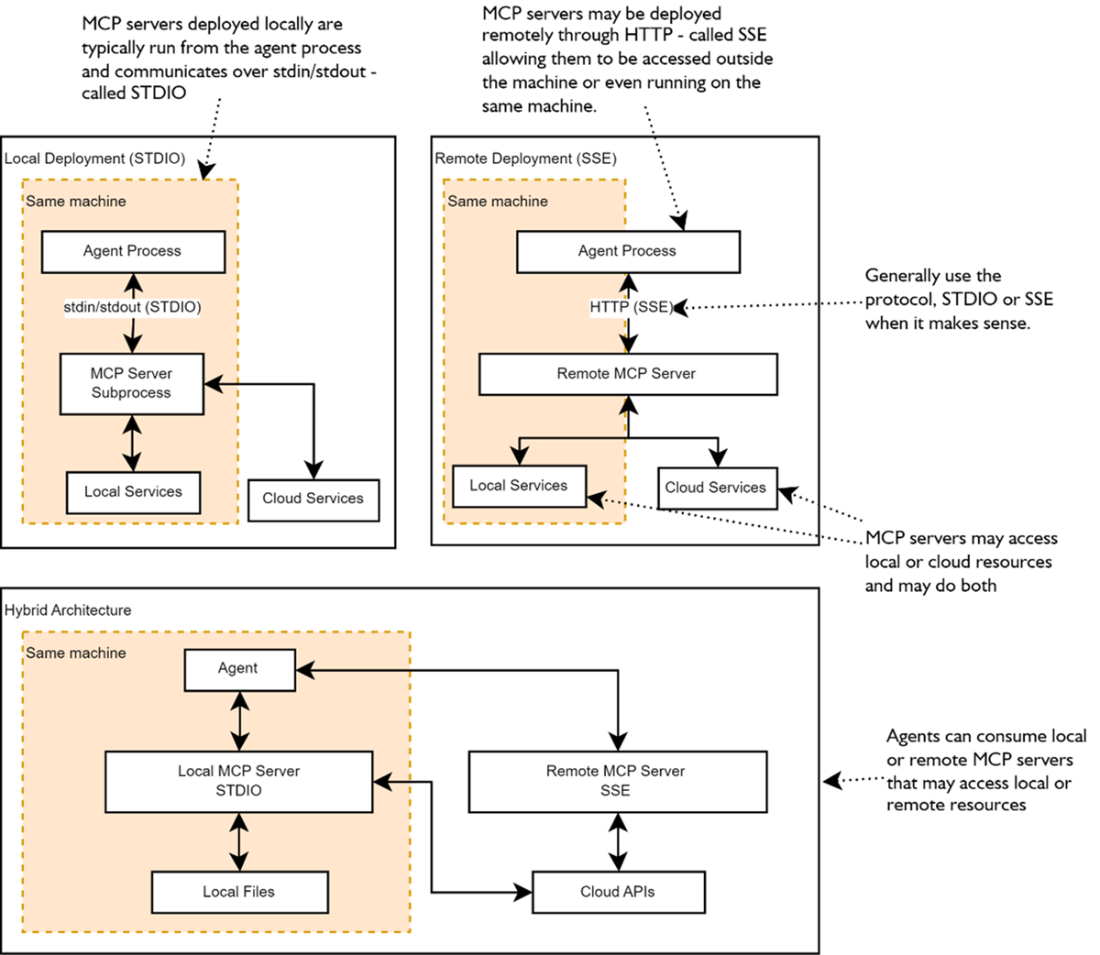

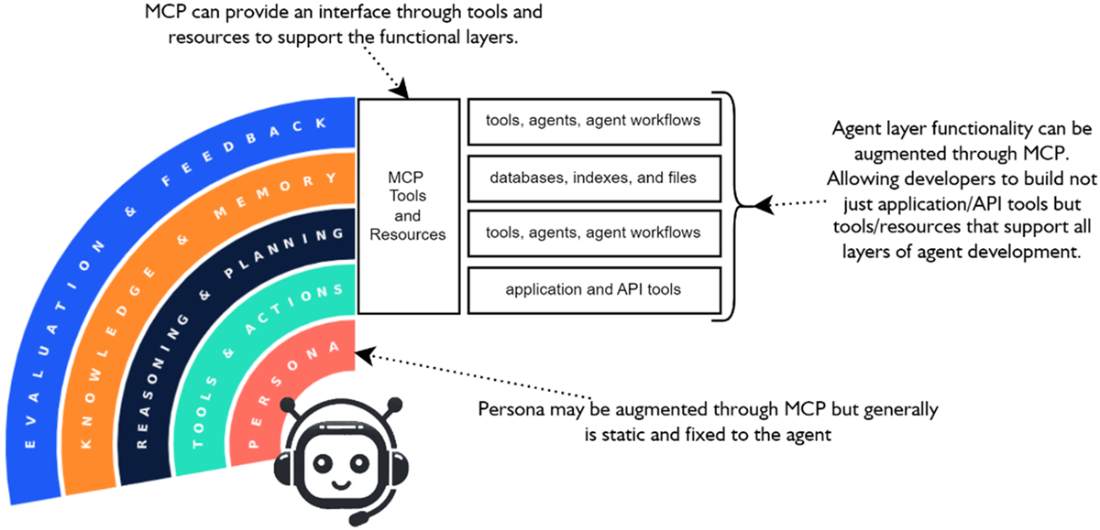

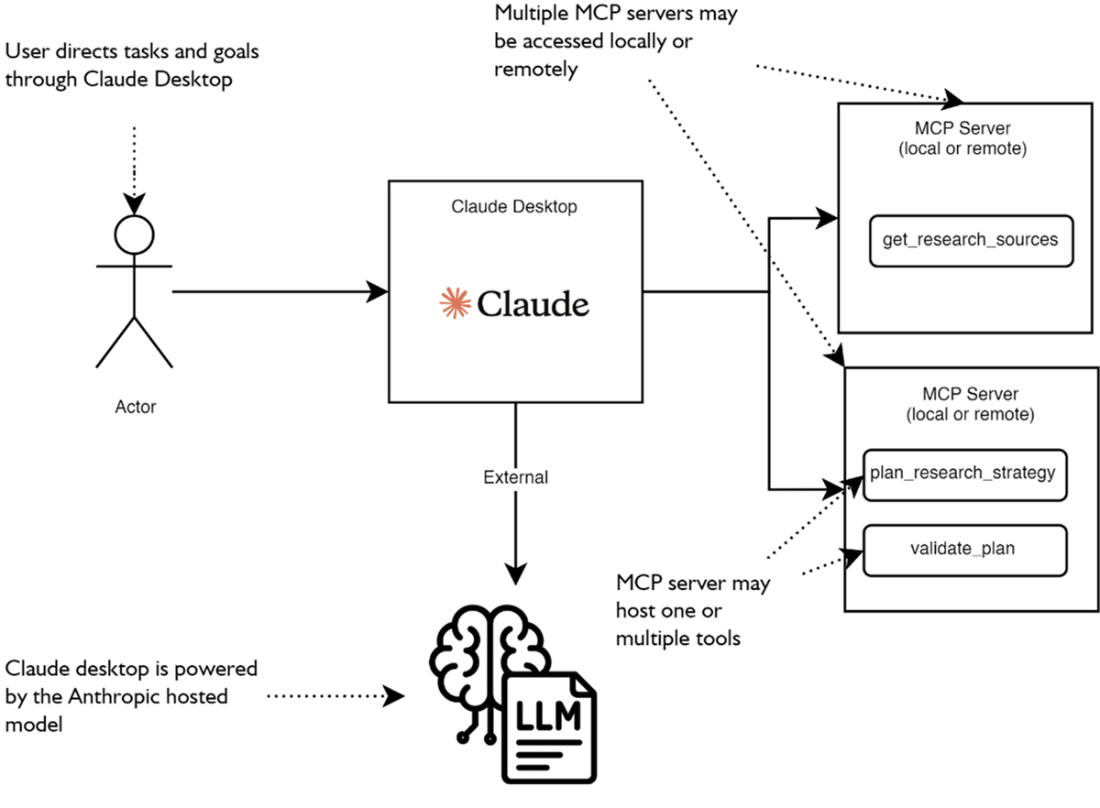

The chapter explains the MCP ecosystem of clients, servers, and services, and the core server components—tools for actions, resources for data, and prompts for standardized interactions—exposed via discovery endpoints. It details deployment and transport patterns: low‑latency local subprocess communication over STDIO, networked HTTP access via Server‑Sent Events (SSE), and hybrid setups that mix both for sensitivity, scale, and sharing. Beyond simple tool use, MCP augments all agent layers—from tools and actions to reasoning/planning, knowledge/memory, and evaluation/feedback—while highlighting crucial behavioral differences between supervised assistants and autonomous agents, and the need for safety, validation, and access control to avoid unintended actions.

Practically, the chapter walks through spinning up a minimal FastMCP server, exercising it with a desktop client and the MCP Inspector, and wiring it into an agent using an SDK—showing how to swap between STDIO and SSE with minimal code changes. It demonstrates consuming a growing catalog of ready‑made servers (for files, planning, web/data APIs, and productivity apps) and motivates building bespoke servers to encapsulate business logic and constrain permissions. A worked example converts a journaling agent’s internal tools into an MCP server, illustrating the benefits of separation of concerns, reuse, and scalability, along with cautions about scoping file access and sharing state across clients. Exercises reinforce the concepts by having readers install, inspect, connect, switch transports, wrap restricted capabilities, and package an agent as a reusable MCP service.

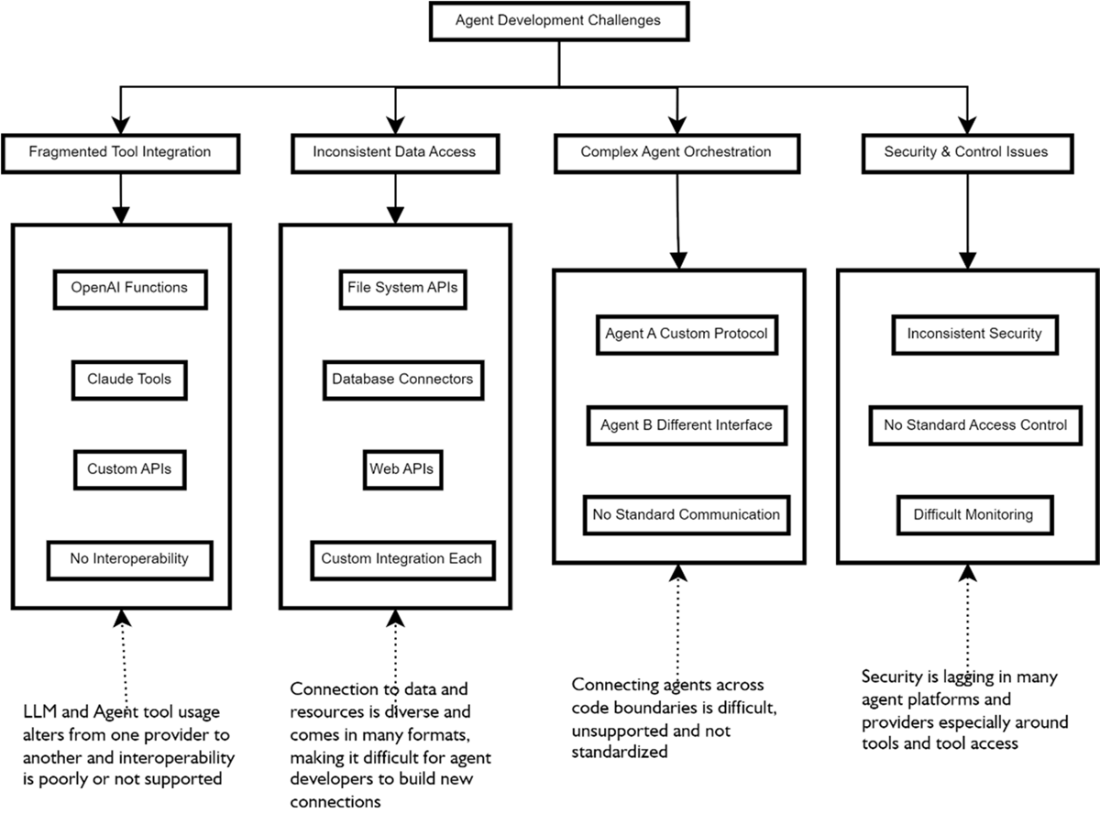

The leading challenges AI agent developers face include fragmented tool integration, inconsistent data access, complicated multi-agent orchestration, and security and control.

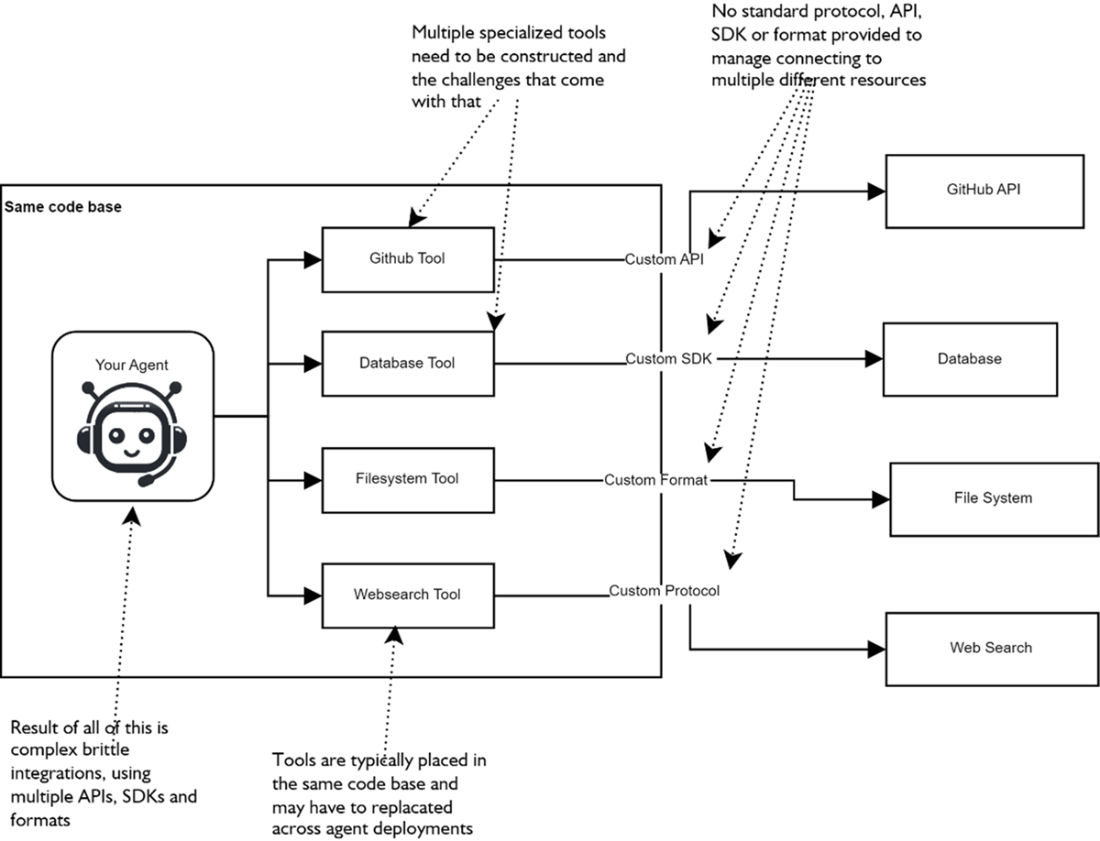

A typical problem agent and LLM developers face when connecting to multiple services and resources is the lack of a standardized connection: each service needs its own connector, and the developer has to support all of them.

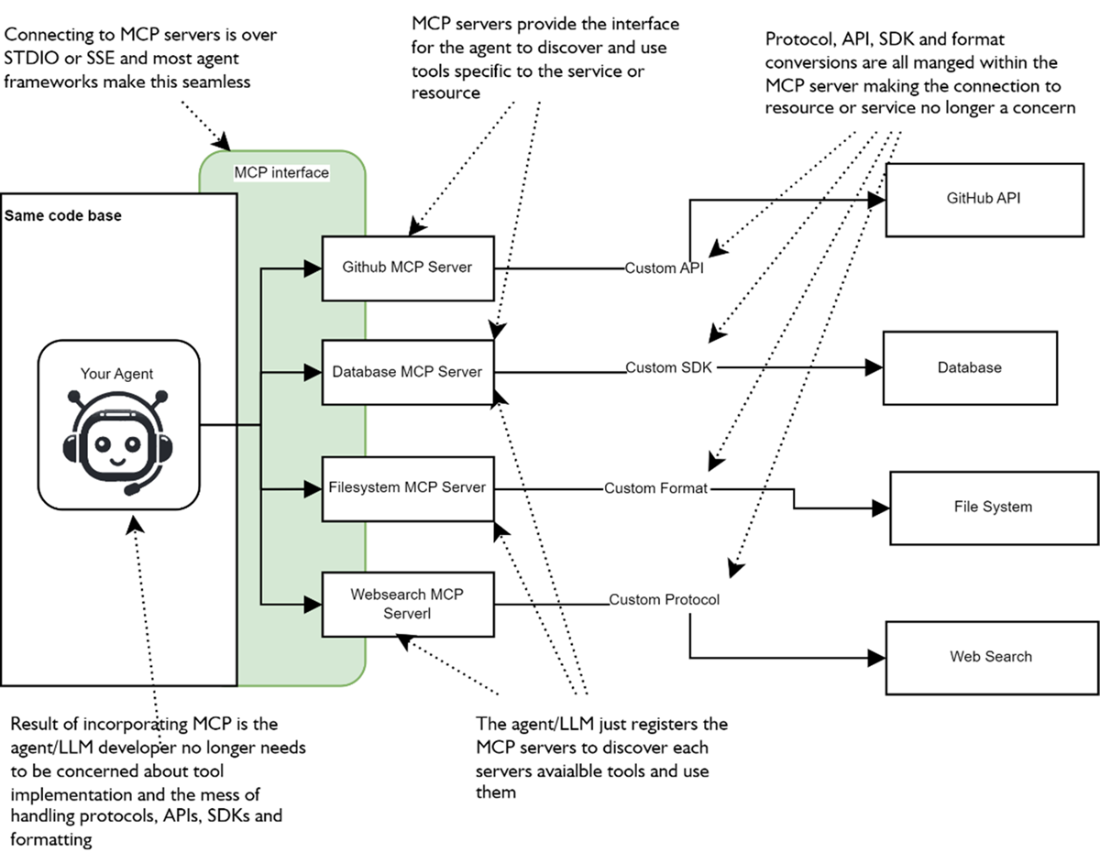

Implementing MCP as a service layer abstracts access to the various services that an agent may want to connect to and use.

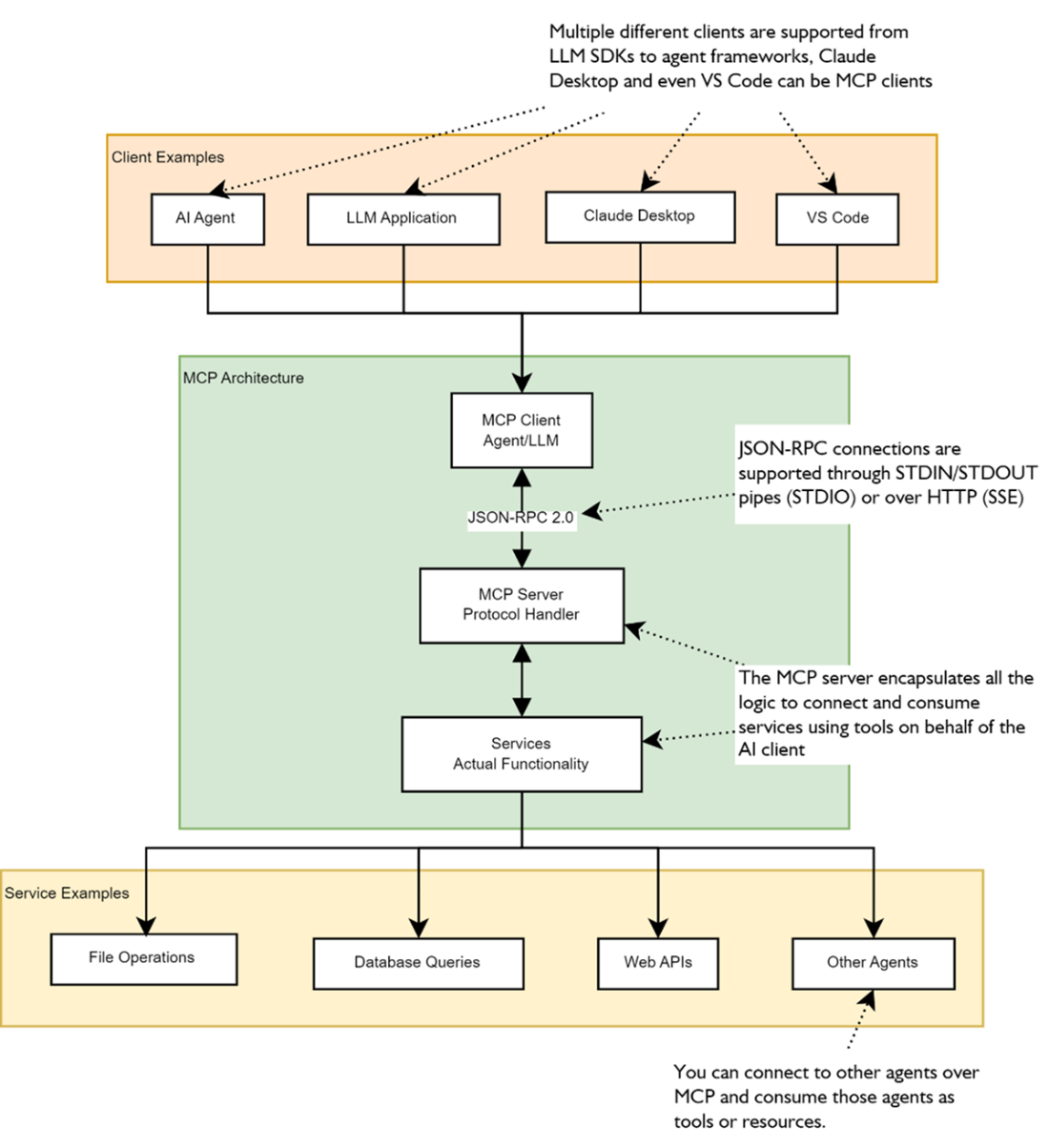

The basic components of the MCP architecture are the client, server, and services. Here you can see some common clients (agents, LLM applications, Claude Desktop, and VS Code) and potential services (file operations, database queries, web APIs, and other agents) that the clients might connect to.

The main components of an MCP server are prompts, which are specialized system instructions; resources, which provide access to files, configuration, and databases; and tools, which extend an agent’s internal ability to consume and use tools.

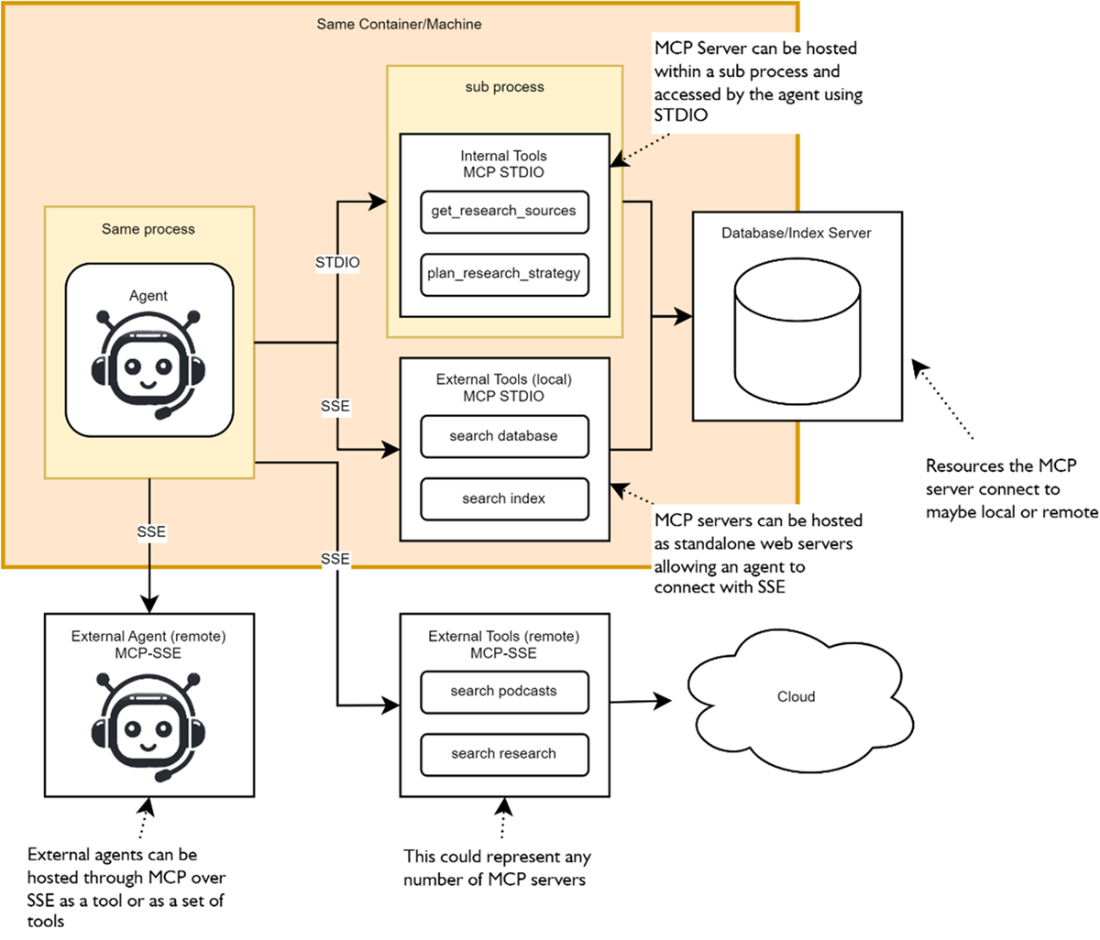

The deployment patterns that can be used to connect MCP servers to agents range from running locally in a child process on the same machine, to running remotely and accessible over HTTP, to hybrid architectures that blend local and remote MCP servers for a single agent.

MCP can be used to add functionality in the form of tools to all the functional agent layers.

Claude Desktop may consume multiple MCP servers deployed locally or remotely. The LLM that powers Claude then uses the MCP components (generally tools) to enhance its capabilities.

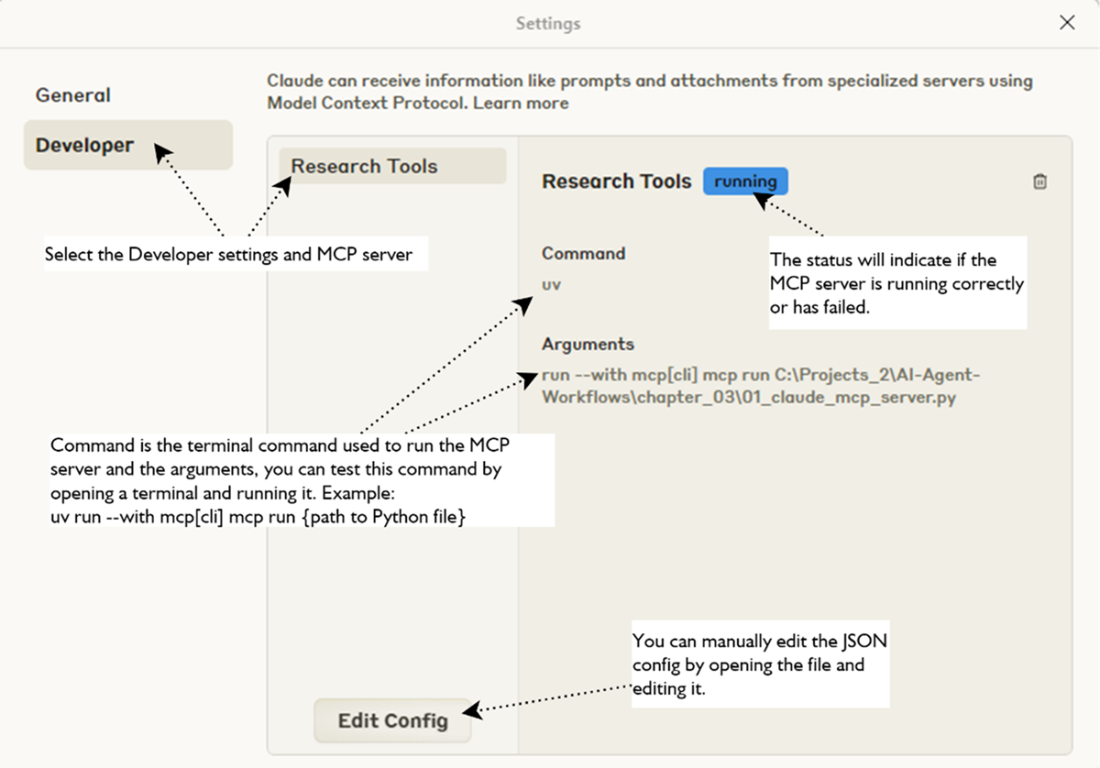

Shows the MCP server settings for a Python file

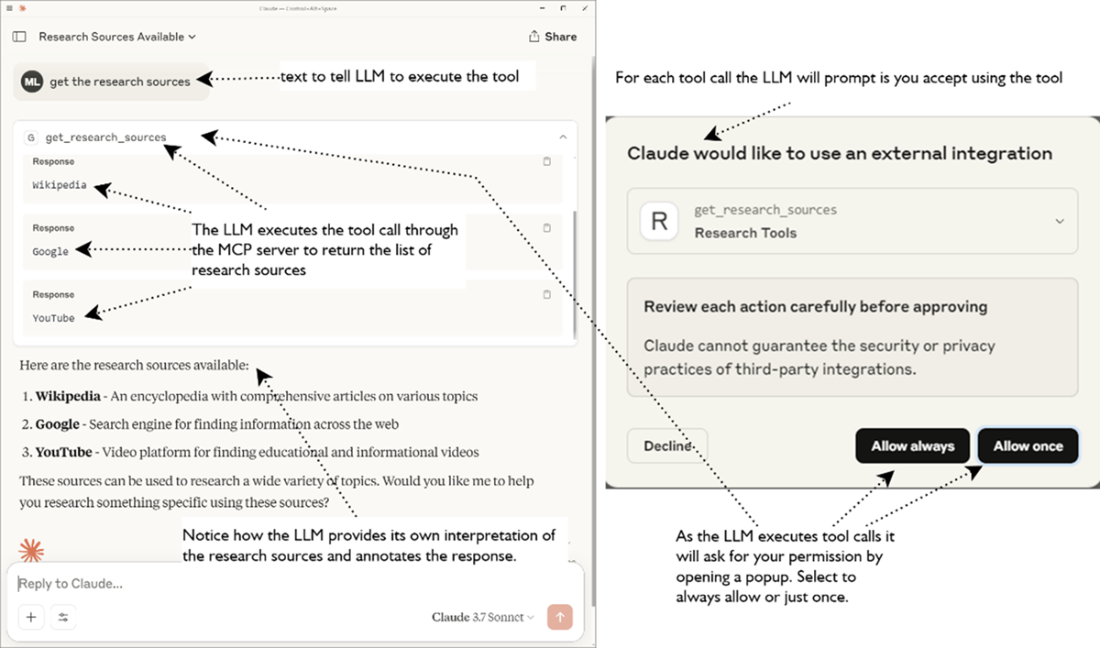

Shows the MCP-hosted tool being executed within Claude Desktop

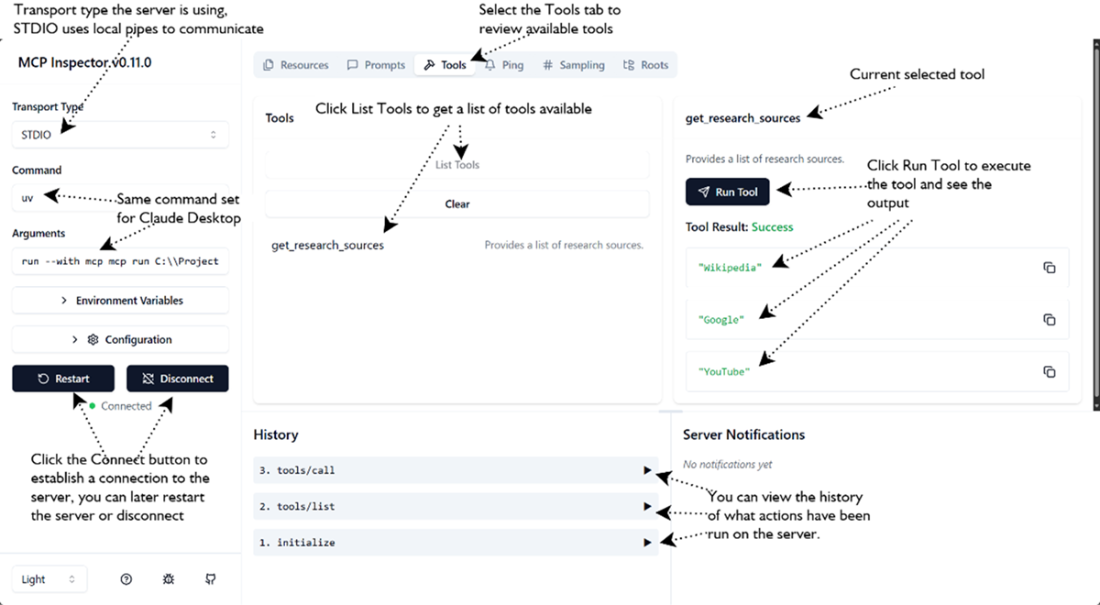

Shows the MCP Inspector interface examining available tools and executing them

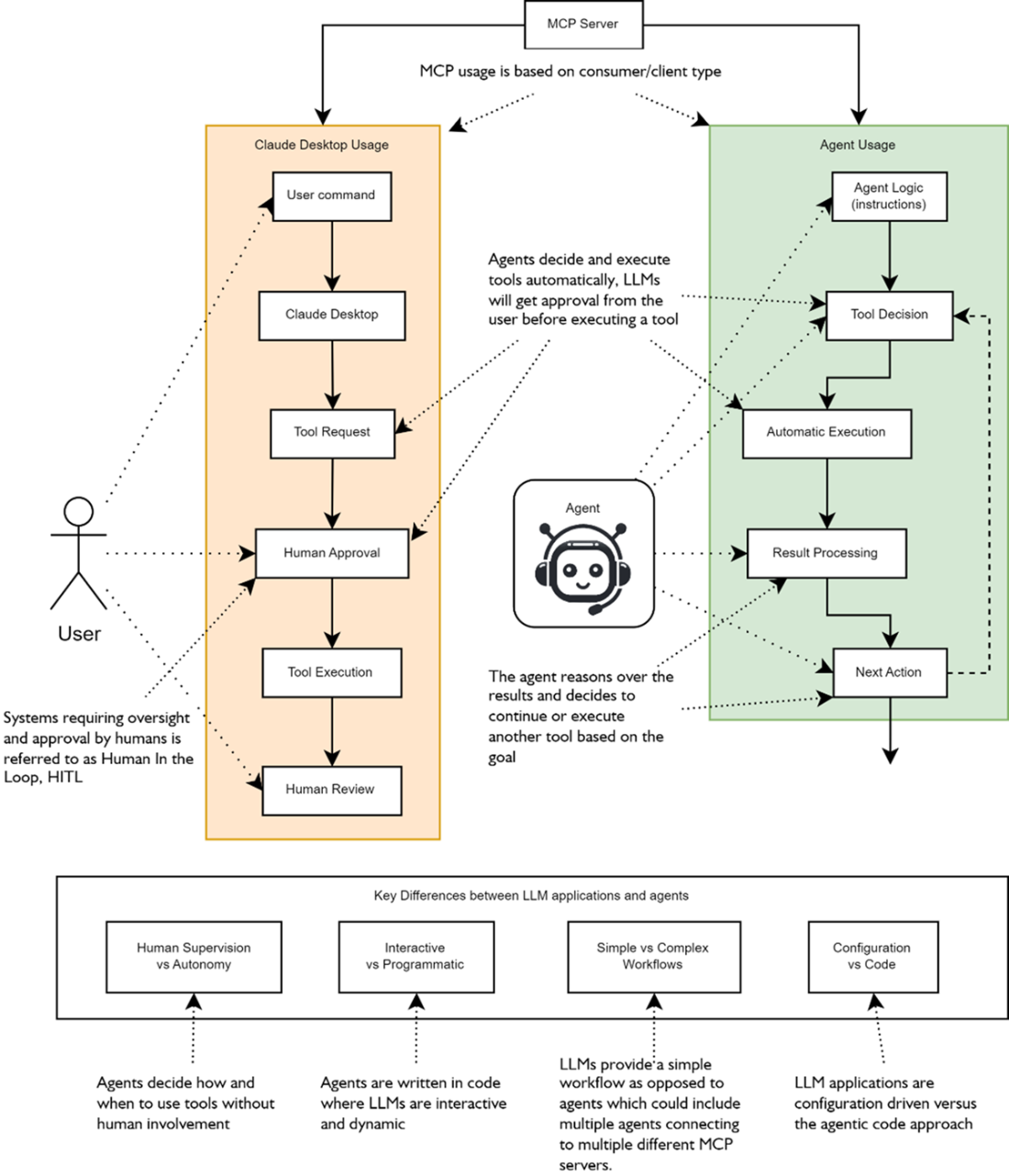

The key differences between executing MCP tools from an LLM application (Claude Desktop) and from an agent: assistants require human supervision while agents are autonomous; assistants are interactive while agents are programmatic; and agents perform more complex multi-step workflows while assistants are typically limited to simple plans.

Shows the various ways an agent may interact with and consume MCP servers

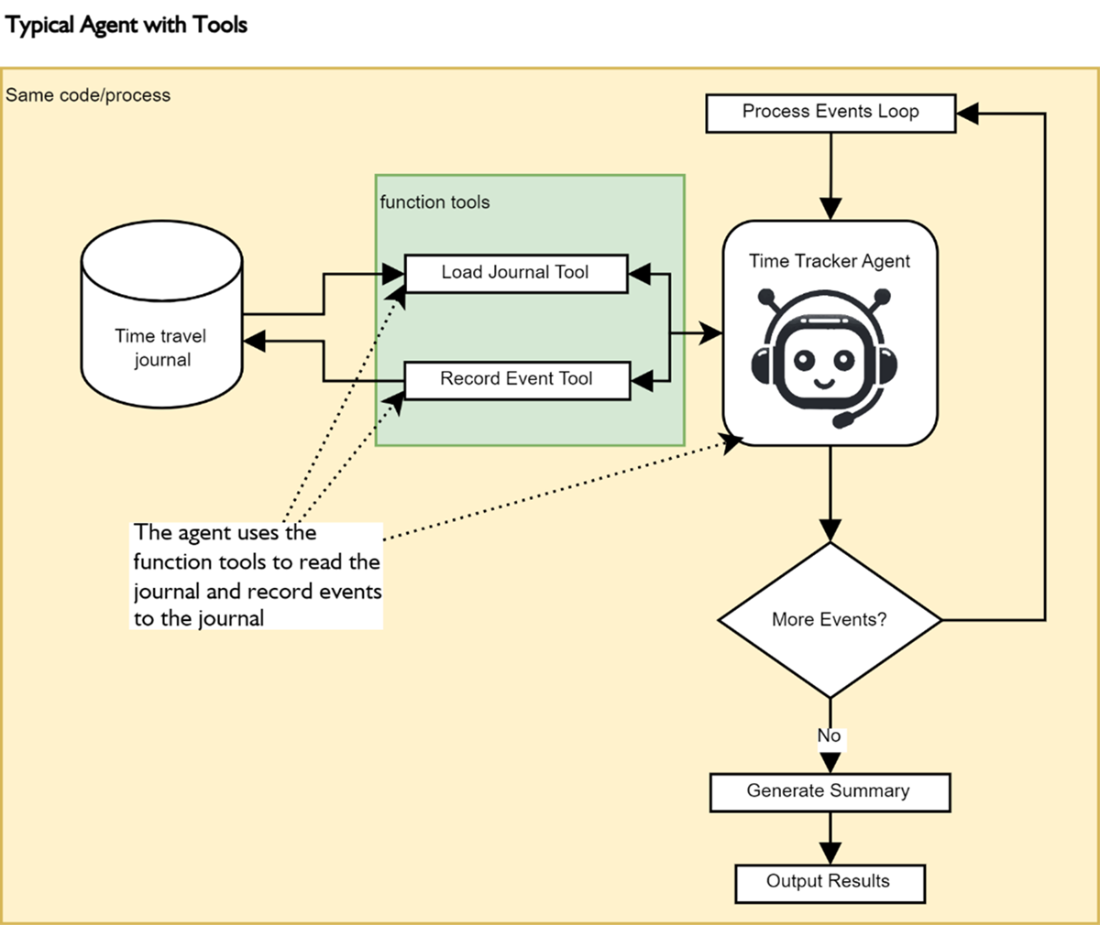

The time tracker agent records time events using internal function tools as it processes the events in a loop. After the loop finishes, the agent is asked to summarize the events, and it uses the Load Journal Events tool to load the journal of events and summarize them.
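
The loop described in this caption can be sketched with a small in-memory journal and two function tools. This is only an illustration under stated assumptions: the names `Journal`, `record_event`, and `load_journal_events`, and the sample events, are hypothetical and not the chapter's actual code.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Journal:
    """In-memory journal; a stand-in for whatever storage the agent really uses."""
    events: list = field(default_factory=list)

    def record_event(self, description: str, minutes: int) -> str:
        """Tool 1 (hypothetical): append one time event and confirm what was stored."""
        self.events.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "description": description,
            "minutes": minutes,
        })
        return f"recorded: {description} ({minutes} min)"

    def load_journal_events(self) -> list:
        """Tool 2 (hypothetical): return a copy of every event for summarization."""
        return [dict(event) for event in self.events]


journal = Journal()

# The agent processes incoming events in a loop, calling the record tool each time.
for description, minutes in [("code review", 30), ("standup", 15), ("debugging", 45)]:
    journal.record_event(description, minutes)

# After the loop, the agent loads the journal and summarizes it.
loaded = journal.load_journal_events()
total_minutes = sum(event["minutes"] for event in loaded)
print(f"{len(loaded)} events, {total_minutes} minutes total")
```

In a real agent the summary would come from the LLM; the arithmetic here only stands in for that step.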

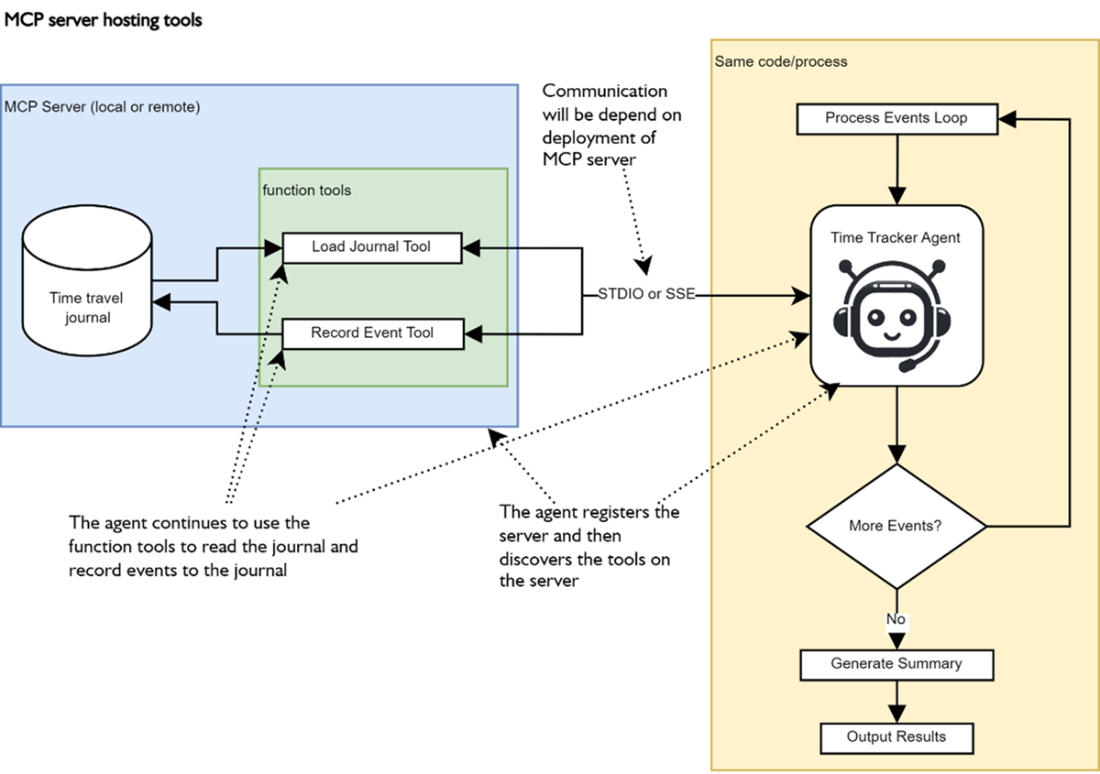

The tools are separated from the agent into a standalone MCP server that can be hosted locally or remotely and accessed through STDIO (local) or SSE (remote). The agent now registers the MCP server instead of individual tools, then discovers internally which tools the server supports and how to use them.

Summary

- MCP = “USB-C for LLMs & agents.” A JSON-RPC-2.0 spec that erases bespoke glue code for tools, data sources, and even other agents.

- MCP solves fragmentation (multiple tool schemas), brittle data access, ad-hoc orchestration, and uneven security by giving every capability a uniform interface.

- MCP supports three components: Tools (actions), Resources (data/objects), and Prompts (re-usable templates). Agents can treat any of them as callable verbs.

- The MCP architecture has three parts: the MCP client, the server, and the service/resource it fronts. An agent is just one kind of client.

- STDIO – subprocess transport, low latency, single caller (great for local development).

- SSE – HTTP + Server-Sent Events, multi-client, cloud-friendly. Switching between STDIO and SSE is typically a constructor swap.

- MCP is not just for tools/actions but can support the other functional layers (Reasoning & Planning, Knowledge & Memory, Evaluation & Feedback)

- MCP can be deployed locally, remotely, or in a hybrid pattern: mix and match to keep sensitive operations local while sharing heavy APIs remotely.

- The MCP Inspector gives a live, clickable view of any server—perfect for debugging tool schemas and outputs before wiring agents to them.

- MCP reference servers are available for use or inspection and include: filesystem, brave-search, google-calendar, github, etc.—all installable with a single npx or mcp run.

- Agents themselves can be wrapped as servers, turning an entire reasoning pipeline into a reusable, strongly typed tool.

- Typed Pydantic I/O flows end-to-end, eliminating fragile string parsing in multi-agent chains.

- MCP enables LEGO-style composition of agent systems—each block isolated, testable, and instantly swappable without touching the others.

FAQ

What is the Model Context Protocol (MCP) and why does it matter for agents?

MCP is an open standard by Anthropic (built on JSON-RPC 2.0) that standardizes how agents and LLM apps connect to external tools, data, and services. It’s often called “USB‑C for agents” because it replaces bespoke integrations with a single, consistent protocol, letting you plug in capabilities (tools, resources, prompts) quickly and safely.

Which development problems does MCP solve?

- Fragmented tool integration: One protocol instead of multiple vendor-specific tool formats.

- Inconsistent data access: Unified access to files, databases, and web APIs.

- Complex multi-agent orchestration: A standard way for agents to expose and consume capabilities.

- Security and control gaps: Consistent policy, permissions, and monitoring across integrations.

How is MCP structured? What are clients, servers, and services?

- MCP client: The agent or LLM app (e.g., Claude Desktop, VS Code, your agent) that discovers and uses capabilities.

- MCP server: Exposes tools, resources, and prompts; handles requests via JSON-RPC 2.0.

- Service/resource: The underlying system (files, DBs, web APIs, or even other agents) the server wraps.
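
To make the JSON-RPC 2.0 exchange between client and server concrete, here is a minimal sketch of the messages involved in discovering and calling a tool. The helper functions and the `add` tool are hypothetical; the `tools/list` and `tools/call` method names and the text-content result shape follow the MCP specification as I understand it.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # each request carries an id so its response can be matched to it


def make_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope."""
    request = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        request["params"] = params
    return request


def make_result(request: dict, result: dict) -> dict:
    """Build the success response for a request, echoing its id."""
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


# 1. The client asks the server which tools it exposes.
list_request = make_request("tools/list")

# 2. The client invokes one tool by name with JSON arguments.
call_request = make_request("tools/call", {"name": "add", "arguments": {"a": 2, "b": 3}})

# 3. The server replies with content blocks; here a single text block.
call_response = make_result(
    call_request,
    {"content": [{"type": "text", "text": "5"}], "isError": False},
)

print(json.dumps(call_request))
print(json.dumps(call_response))
```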

What are the core MCP components: tools, resources, and prompts?

- Tools: Actionable operations agents can invoke (primary way most agents/LLMs interact).

- Resources: Expose external data or artifacts (files, configs, DB records) for consumption.

- Prompts: Reusable templates/instructions for consistent workflows.
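
The three component types can be pictured as three lookup tables behind one request handler. The sketch below is a toy, not an MCP implementation: `ToyServer`, the `word_count` tool, the sample URI, and the simplified return values are all assumptions made for illustration, while the method names mirror those in the MCP specification.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ToyServer:
    tools: dict = field(default_factory=dict)      # name -> callable (actions)
    resources: dict = field(default_factory=dict)  # uri -> text (data)
    prompts: dict = field(default_factory=dict)    # name -> template (instructions)

    def handle(self, method: str, params: Optional[dict] = None):
        """Dispatch one request; results are simplified compared with real MCP."""
        params = params or {}
        if method == "tools/list":
            return sorted(self.tools)
        if method == "tools/call":
            return self.tools[params["name"]](**params.get("arguments", {}))
        if method == "resources/list":
            return sorted(self.resources)
        if method == "resources/read":
            return self.resources[params["uri"]]
        if method == "prompts/list":
            return sorted(self.prompts)
        if method == "prompts/get":
            return self.prompts[params["name"]].format(**params.get("arguments", {}))
        raise ValueError(f"unknown method: {method}")


server = ToyServer()
server.tools["word_count"] = lambda text: len(text.split())
server.resources["file:///notes/today.txt"] = "ship the MCP chapter"
server.prompts["summarize"] = "Summarize the following notes in one sentence:\n{notes}"

# Read data (resource), act on it (tool), and build a standard instruction (prompt).
notes = server.handle("resources/read", {"uri": "file:///notes/today.txt"})
words = server.handle("tools/call", {"name": "word_count", "arguments": {"text": notes}})
prompt = server.handle("prompts/get", {"name": "summarize", "arguments": {"notes": notes}})
print(words)
print(prompt)
```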

What deployment and transport options does MCP support?

- Local (STDIO): Server runs as a subprocess; low latency, no networking, 1:1 client–server. Great for development and single-machine setups.

- Remote (SSE over HTTP): Network-addressable; supports multiple clients, load balancing, and cloud deployments.

- Hybrid: Mix local servers for sensitive tasks (e.g., file access) and remote servers for shared/scale-out services.
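
The local pattern is easier to picture with a bare-bones example: the client spawns the server as a child process and exchanges one JSON message per line over stdin and stdout. This sketch uses only the Python standard library and a hypothetical `echo` tool; it skips the initialization handshake and everything else a real MCP SDK handles.

```python
import json
import subprocess
import sys

# Child "server": reads one JSON-RPC request per line, writes one response per line.
SERVER_SOURCE = r'''
import json, sys
for line in sys.stdin:
    request = json.loads(line)
    if request["method"] == "tools/call" and request["params"]["name"] == "echo":
        text = request["params"]["arguments"]["text"]
        reply = {"jsonrpc": "2.0", "id": request["id"],
                 "result": {"content": [{"type": "text", "text": text}]}}
    else:
        reply = {"jsonrpc": "2.0", "id": request["id"],
                 "error": {"code": -32601, "message": "Method not found"}}
    sys.stdout.write(json.dumps(reply) + "\n")
    sys.stdout.flush()
'''


def call_over_stdio(request: dict) -> dict:
    """Spawn the server as a subprocess, send one request, and return its reply."""
    with subprocess.Popen(
        [sys.executable, "-c", SERVER_SOURCE],
        stdin=subprocess.PIPE,
        stdout=subprocess.PIPE,
        text=True,
    ) as proc:
        proc.stdin.write(json.dumps(request) + "\n")
        proc.stdin.flush()
        reply = json.loads(proc.stdout.readline())
    # Leaving the with-block closes the pipes, which ends the child's read loop.
    return reply


reply = call_over_stdio({
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "echo", "arguments": {"text": "hello"}},
})
print(reply["result"]["content"][0]["text"])
```

Because the server lives and dies with the client process, this pattern is naturally 1:1; sharing a server among several clients requires a networked transport instead.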

How do I get started quickly with a simple MCP server?

- Write a small server with FastMCP and annotate functions with `@mcp.tool()`.

- Run locally over SSE: `mcp run -t sse your_server.py`.

- Or integrate with Claude Desktop via `mcp install your_server.py` and enable it in Settings → Developer.

- In assistants like Claude Desktop, tool execution requires human approval.
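
To show roughly what a tool decorator does on your behalf, the sketch below registers a function and derives a JSON-Schema-style input description from its type hints. It is a toy stand-in written with the standard library, not FastMCP's implementation; `tool`, `REGISTRY`, and `JSON_TYPES` are hypothetical names.

```python
import inspect
from typing import Callable, get_type_hints

# Map a few Python types to JSON Schema type names; anything else falls back to "string".
JSON_TYPES = {int: "integer", float: "number", str: "string", bool: "boolean"}
REGISTRY = {}


def tool(func: Callable) -> Callable:
    """Toy decorator: record the function's name, description, and input schema."""
    hints = get_type_hints(func)
    signature = inspect.signature(func)
    properties = {
        name: {"type": JSON_TYPES.get(hints.get(name), "string")}
        for name in signature.parameters
    }
    required = [
        name
        for name, param in signature.parameters.items()
        if param.default is inspect.Parameter.empty
    ]
    REGISTRY[func.__name__] = {
        "description": inspect.getdoc(func) or "",
        "inputSchema": {"type": "object", "properties": properties, "required": required},
        "handler": func,
    }
    return func  # the function stays callable as ordinary Python


@tool
def add(a: int, b: int) -> int:
    """Add two integers."""
    return a + b


# A client discovering tools would see the schema; a call is dispatched by name.
print(REGISTRY["add"]["inputSchema"])
print(REGISTRY["add"]["handler"](a=2, b=3))
```

The point of the design is that the docstring and type hints become the tool's public contract, so a client can discover how to call it without reading the source.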

How can I explore and debug an MCP server?

Use the MCP Inspector:

- Start: `mcp dev <absolute-path-to>/your_server.py` (absolute path required).

- Open the printed URL and inspect tools, run them, and view logs/history.

- Note: When launched via uv as a child process, transport typically shows as STDIO.

How do agents connect to MCP servers locally and remotely?

- Local (STDIO) from code: Use `MCPServerStdio` with a command like `mcp run your_server.py` to spawn a subprocess and pass it to the agent’s `mcp_servers`.

- Remote (SSE): Run the server separately with `mcp run -t sse ...`, then use `MCPServerSse` and point to the server’s `/sse` URL.

- Swapping STDIO ↔ SSE is typically a small code change.
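
A sketch of how small the swap can be, assuming the OpenAI Agents SDK (the `agents` package), whose class names match those mentioned here. The helper functions, the `http://localhost:8000` address, and the agent's name and instructions are illustrative assumptions; check the SDK documentation for the exact parameters your version expects.

```python
def stdio_params(script_path: str) -> dict:
    """Parameters for spawning the server as a local subprocess via `mcp run`."""
    return {"command": "mcp", "args": ["run", script_path]}


def sse_params(base_url: str) -> dict:
    """Parameters for a server that is already running with the SSE transport."""
    return {"url": base_url.rstrip("/") + "/sse"}


async def ask(question: str, remote: bool = False) -> str:
    """Run one question through an agent backed by a local or remote MCP server."""
    # Imported lazily so the helpers above can be used without the SDK installed.
    from agents import Agent, Runner
    from agents.mcp import MCPServerSse, MCPServerStdio

    # The transport choice is the only part that differs between the two setups.
    if remote:
        server = MCPServerSse(params=sse_params("http://localhost:8000"))
    else:
        server = MCPServerStdio(params=stdio_params("your_server.py"))

    async with server:
        agent = Agent(
            name="assistant",
            instructions="Use the available tools to answer.",
            mcp_servers=[server],
        )
        result = await Runner.run(agent, question)
        return result.final_output
```

Calling `asyncio.run(ask("What tools do you have?"))` would exercise the local path; it needs the SDK installed, a model API key, and a server script on disk, none of which this sketch provides.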

What ready-to-use MCP servers can I plug in?

Common options include filesystem, sequential thinking, fetch, web search, and productivity/integration servers. Examples:

- Filesystem: `npx -y @modelcontextprotocol/server-filesystem <path>`

- Brave Search: `npx -y @modelcontextprotocol/server-brave-search`

- Fetch: `npx -y @modelcontextprotocol/server-fetch`

- GitHub: `npx -y @modelcontextprotocol/server-github`

- Google Drive/Calendar/Maps, Notion, Slack, Todoist, and more

What are key safety and best practices when using MCP with agents?

- Agents are autonomous; unlike assistants, they don’t require human approval. Validate and constrain what tools they can access.

- Least privilege: Scope filesystem servers to a safe directory; avoid broad destructive permissions.

- Test and monitor: Use the inspector; add evaluation/feedback loops (covered later) to prevent “rogue” actions.

- State awareness: Remote/SSE servers may be shared across agents; in-memory state (e.g., journals) can persist and be updated by multiple clients.

- Prefer building your own MCP servers for critical workflows to ensure predictable behavior and reuse.
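
The least-privilege point can be sketched as a guard that a custom file tool might run before touching disk. This is a minimal illustration, assuming Python 3.9 or later for `Path.is_relative_to`; the function name `resolve_in_sandbox` and the sample paths are hypothetical.

```python
import tempfile
from pathlib import Path


def resolve_in_sandbox(root: Path, requested: str) -> Path:
    """Return the absolute path for `requested`, or raise if it escapes `root`."""
    root = root.resolve()
    # Resolving collapses ".." segments and symlinks before the containment check.
    candidate = (root / requested).resolve()
    if not candidate.is_relative_to(root):
        raise PermissionError(f"{requested!r} is outside the allowed directory")
    return candidate


with tempfile.TemporaryDirectory() as tmp:
    sandbox = Path(tmp)

    # A path inside the sandbox is accepted.
    allowed = resolve_in_sandbox(sandbox, "notes/today.txt")

    # A traversal attempt is rejected before any file operation happens.
    try:
        resolve_in_sandbox(sandbox, "../outside.txt")
        escaped = True
    except PermissionError:
        escaped = False

    print(allowed.name, escaped)
```

Putting the check inside the server rather than the agent means every client, supervised or autonomous, gets the same restriction.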

AI Agents in Action, Second Edition ebook for free
