1 Threat-modeling agentic pipelines

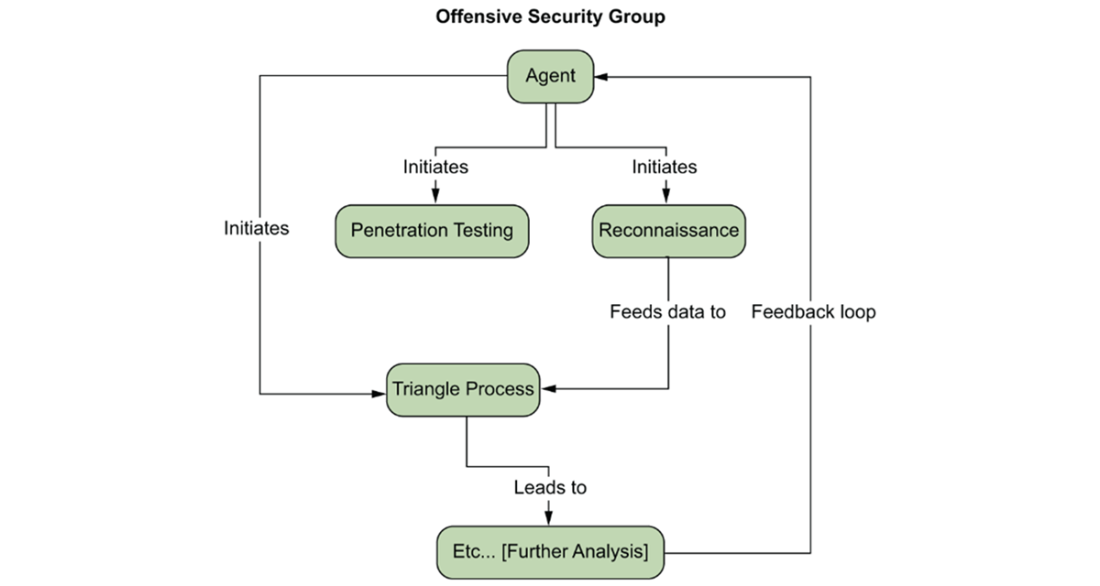

This chapter explains how modern AI reshapes offensive security by adding reasoning and structure to work that was once linear, manual, and brittle. Where traditional pipelines moved from data collection to a one-time decision, agentic systems bring contextual judgment to each stage, turning vast, noisy outputs into prioritized, reproducible next steps. The focus is on artifacts—the concrete, structured findings produced by tools—and on disciplined routing of those artifacts through controlled, auditable pipelines. AI serves as decision support: humans define objectives and approve escalation; tools still execute; agents reduce cognitive load by interpreting results, correlating weak signals, and proposing strategy shifts as environments change.

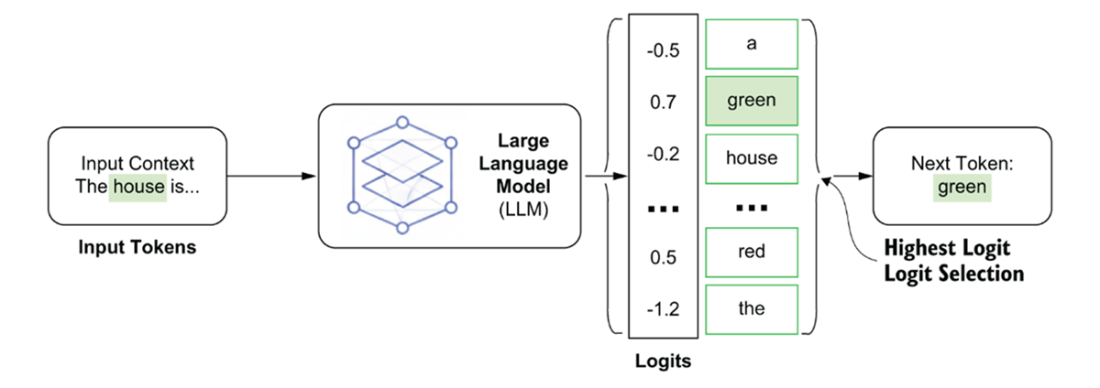

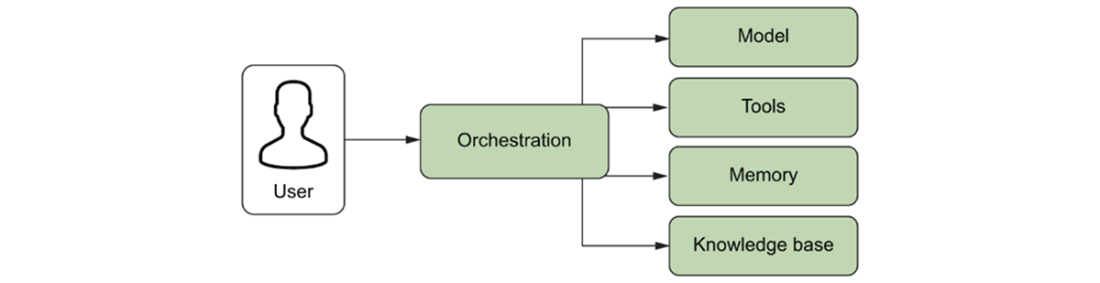

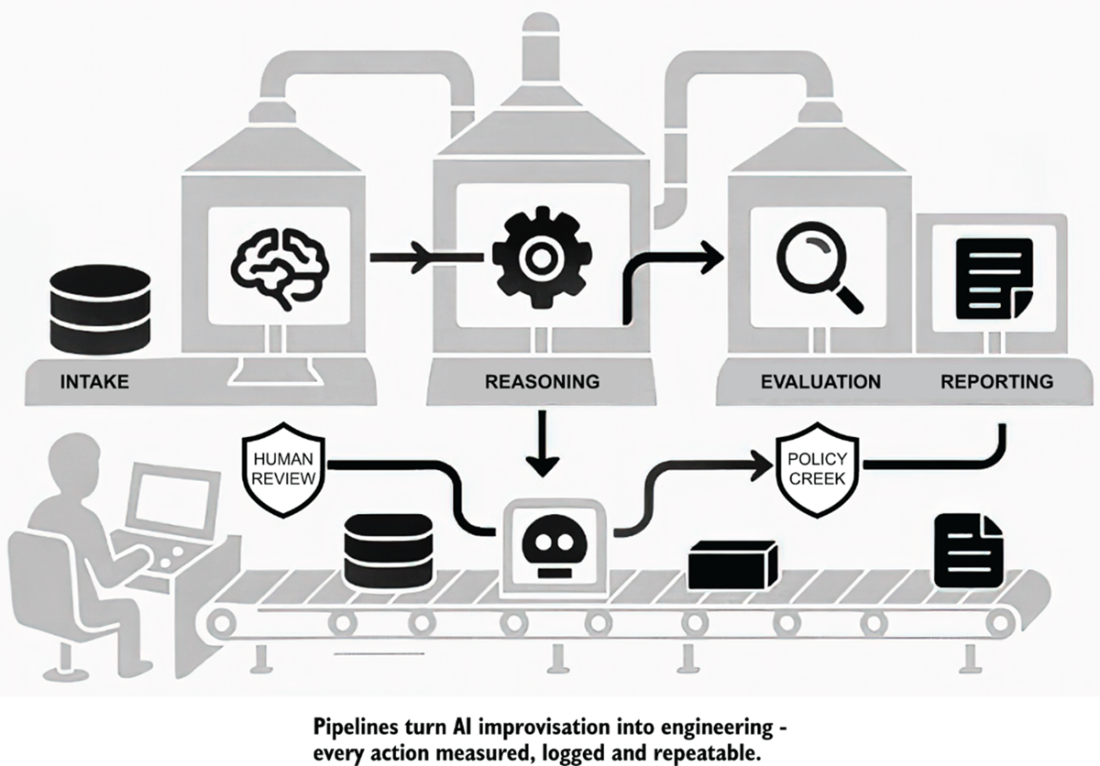

The chapter demystifies the technical building blocks behind that shift. Large language models are positioned as probabilistic reasoning engines that can summarize, explain, and triage—but also hallucinate and remain non-deterministic, requiring human oversight. Agents extend LLMs with orchestration, tools, memory, and a knowledge base so they can plan, act, and adapt toward goals, not just answer prompts. Pipelines then provide the governing architecture: they formalize intake, interpretation, controlled action, evaluation, and reporting, with safety gates and logging at each hop. Treating artifacts as first-class objects separates evidence from judgment, yields clarity (tools execute, agents interpret, pipelines route), and replaces ad-hoc stitching with reproducible, accountable operations.

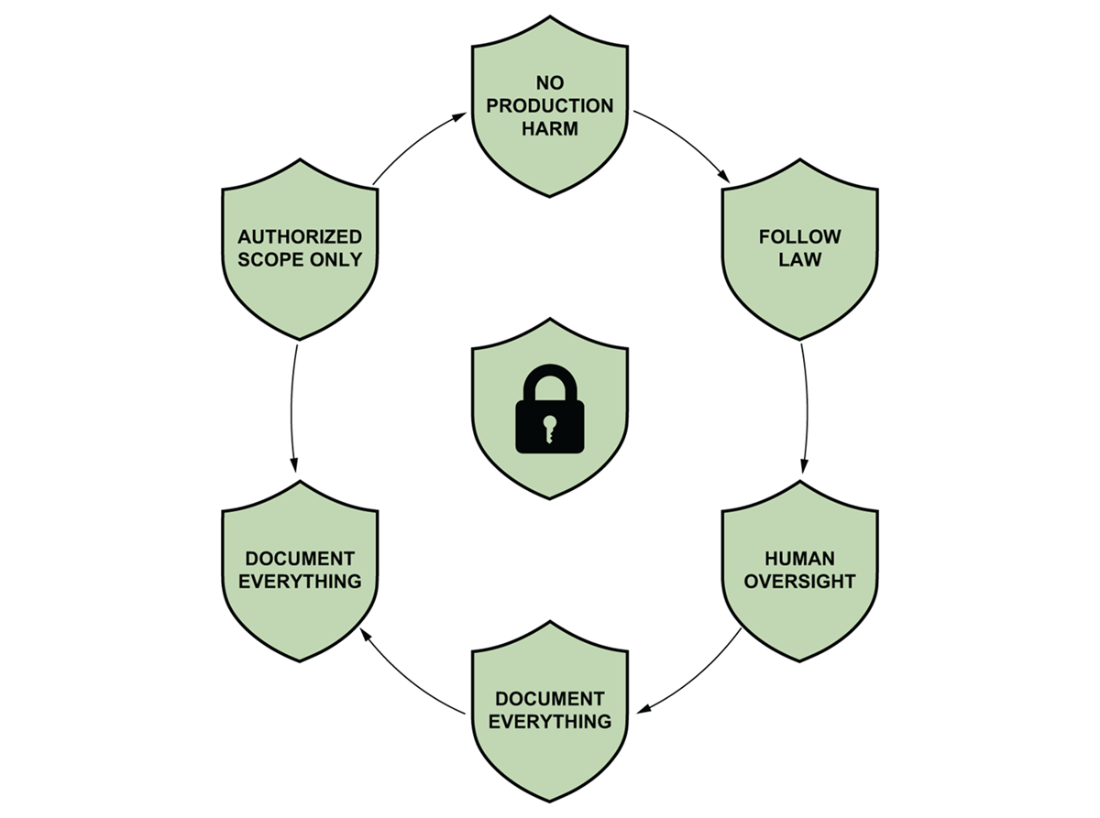

Ethical and operational guardrails are integral: test only within authorized scope, avoid production harm, follow the law, keep humans in the loop for risky actions, protect sensitive data, document everything, and practice responsible disclosure. With these boundaries, agentic pipelines scale precision and consistency across roles: solo hunters gain throughput and better write-ups; red teams capture engagements as reusable playbooks; blue and purple teams convert offensive traces into richer detections and training; leaders get measurable, auditable evidence of scope, controls, and outcomes. In short, the chapter shows how to move from clever prompts to dependable systems—turning creative exploration into controlled, data-driven security practice that preserves human judgment while amplifying impact.

The conventional triage pipeline mental model. This diagram provides a high-level (macro) view of the conventional, human-driven security triage pipeline, as sketched in the notebook. It serves as a roadmap for this linear workflow, starting with data collection and proceeding sequentially through vulnerability assessment, risk scoring, and attack path planning. This entire, predictable sequence traditionally concludes with a human operator making a final decision or handoff.

Seven best practices for offensive security. 1) Authorized scope only: to ensure we do not cause damage or expose sensitive information, we work only within pre-defined, scoped boundaries. 2) No production harm: do not take actions that negatively affect production traffic. 3) Follow the law: we must stay compliant with applicable laws and regulations. 4) Human oversight: humans must be in the loop to review and validate findings. 5) Protect sensitive data: proper processes must be in place so that personally identifiable information is not exposed. 6) Document everything: we must store logs, traces, and other artifacts that allow us to audit our systems. 7) Responsible disclosure: we should give the affected party a reasonable amount of time to fix issues before publicly revealing them. These best practices ensure that offensive security teams consistently deliver value and maintain professionalism within their organization.
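Several of these practices can be enforced in code rather than left to memory. The following is a minimal sketch, not a definitive implementation, of a gate that combines practices 1, 4, and 6: it blocks out-of-scope targets, holds risky actions until a human approves them, and records every decision. The network range, the action names, and the `gate` function are all hypothetical and chosen only for illustration.

```python
import ipaddress
from dataclasses import dataclass

# Hypothetical engagement scope and risk list (assumptions for this sketch).
AUTHORIZED_NETWORKS = [ipaddress.ip_network("10.20.0.0/16")]
RISKY_ACTIONS = {"exploit", "credential_spray"}

audit_log = []  # practice 6: every decision is recorded


@dataclass
class ProposedAction:
    name: str
    target_ip: str


def in_scope(target_ip: str) -> bool:
    """Practice 1: the target must fall inside an authorized network."""
    addr = ipaddress.ip_address(target_ip)
    return any(addr in net for net in AUTHORIZED_NETWORKS)


def gate(action: ProposedAction, human_approved: bool = False) -> str:
    """Return a decision string and append it to the audit log."""
    if not in_scope(action.target_ip):
        decision = "blocked: out of scope"
    elif action.name in RISKY_ACTIONS and not human_approved:
        # Practice 4: risky actions wait for a human.
        decision = "pending: needs human approval"
    else:
        decision = "allowed"
    audit_log.append((action.name, action.target_ip, decision))
    return decision


print(gate(ProposedAction("port_scan", "10.20.5.7")))
print(gate(ProposedAction("exploit", "10.20.5.7")))
print(gate(ProposedAction("port_scan", "192.168.1.1")))
```

The gate checks scope before anything else, so an out-of-scope target is rejected even when a human has approved the action.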

An illustrative example of how an LLM generates the next token. As the LLM generates a sentence, it conditions on the tokens produced so far and assigns a score (a logit) to each candidate next token; a higher logit corresponds to a higher probability. In the example above, since green has the highest logit value, it is the next word to be generated in the sentence.
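The selection step described in this caption can be sketched in a few lines. The logit values and candidate words below are made up for illustration (only "green" comes from the figure), and the sketch always picks the highest-probability token. Deployed models often sample from the distribution instead, which is one source of the non-determinism mentioned earlier.

```python
import math


def softmax(logits: dict) -> dict:
    """Convert raw logits into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(value - peak) for tok, value in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def greedy_next_token(logits: dict):
    """Pick the token with the highest probability (greedy decoding)."""
    probs = softmax(logits)
    return max(probs, key=probs.get), probs


# Hypothetical logits for the candidates at one generation step.
example_logits = {"green": 4.0, "wet": 2.5, "tall": 1.5, "purple": -1.0}

token, probs = greedy_next_token(example_logits)
print(token)
```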

An overview of AI agent systems. AI agents consist of four components that are orchestrated together to produce an outcome: 1) the model, which is a foundation model, 2) tools, which are functions that the LLM can use to interact with the world (e.g., custom functions, APIs, MCP servers), 3) memory, where previous interactions are stored either in the context window or in a vector database, and 4) the knowledge base, where additional context (documents, old conversations, etc.) is stored in a vector database.
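To make the four components concrete, here is a toy sketch in which each one is a plain Python object. Everything in it is an assumption made for illustration: the model is a stub function rather than a real foundation model, the knowledge base is a dictionary with keyword lookup standing in for a vector database, and the `tool:argument` reply format is invented for this example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    model: Callable[[str], str]                      # 1) the model
    tools: dict                                      # 2) tools, by name
    memory: list = field(default_factory=list)       # 3) memory
    knowledge_base: dict = field(default_factory=dict)  # 4) knowledge base

    def step(self, observation: str) -> str:
        # Keyword match stands in for a vector-database similarity search.
        notes = [text for key, text in self.knowledge_base.items()
                 if key in observation]
        prompt = "\n".join(self.memory + notes + [observation])
        decision = self.model(prompt)  # hypothetical "tool:argument" format
        tool_name, _, arg = decision.partition(":")
        if tool_name in self.tools:
            result = self.tools[tool_name](arg)
        else:
            result = decision
        self.memory.append(f"{observation} -> {result}")
        return result


def stub_model(prompt: str) -> str:
    """Stand-in for an LLM: reacts only to the latest observation."""
    latest = prompt.splitlines()[-1]
    return "lookup_banner:nginx" if "nginx" in latest else "no action"


agent = Agent(
    model=stub_model,
    tools={"lookup_banner": lambda banner: f"check advisories for {banner}"},
    knowledge_base={"nginx": "Note: web servers are in scope for this test."},
)

result = agent.step("port 80 banner: nginx")
print(result)
```

The orchestration lives in `step`: it retrieves context, calls the model, dispatches to a tool if one was requested, and writes the outcome back to memory.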

The Dynamic AI Agent System Mental Model. This diagram models the more dynamic system that results from introducing an AI Agent, representing the technology and its surrounding world. Unlike the linear sequence in Model 1, the central Agent creates a cyclical, event-driven workflow that allows it to initiate reconnaissance, penetration testing, or triage in response to new data. This model provides a framework for understanding the complex, parallel interactions and feedback loops unique to the AI-driven system. A reader can use this model to predict the AI's behavior or debug its emergent actions.

An example: reconnaissance agent pipeline. An AI agent system consists of 1) a data pipeline that feeds an LLM logs and other inputs from the system, 2) a reasoning component that allows AI models to determine appropriate actions and steps, 3) an evaluation component that assesses the impact of the changes, and 4) a reporting system for the security professionals.
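The four stages of a reconnaissance pipeline can be sketched as four small functions chained together. This is a simplified illustration under stated assumptions: the scanner output format, the `NEXT_STEPS` rule table (which stands in for the LLM reasoning step), and the priority values are all hypothetical.

```python
import json

# Hypothetical scanner output, one "host,port,service" record per line.
RAW = ["10.20.5.7,22,ssh", "10.20.5.7,80,http", "10.20.5.9,123,ntp"]

# Hypothetical rule table standing in for the LLM reasoning component.
NEXT_STEPS = {
    "http": ("enumerate web paths", 2),
    "ssh": ("review allowed authentication methods", 1),
}


def intake(lines):
    """Stage 1: parse raw tool output into structured artifacts."""
    artifacts = []
    for line in lines:
        host, port, service = line.split(",")
        artifacts.append({"host": host, "port": int(port), "service": service})
    return artifacts


def reason(artifacts):
    """Stage 2: propose a next step for each artifact of interest."""
    proposals = []
    for art in artifacts:
        if art["service"] in NEXT_STEPS:
            step, priority = NEXT_STEPS[art["service"]]
            proposals.append({"artifact": art, "proposal": step,
                              "priority": priority})
    return proposals


def evaluate(proposals):
    """Stage 3: rank proposals and hold them for human review."""
    ranked = sorted(proposals, key=lambda p: p["priority"], reverse=True)
    return [dict(p, status="awaiting human review") for p in ranked]


def report(evaluated):
    """Stage 4: emit a structured report for the security professional."""
    return json.dumps(evaluated, indent=2)


evaluated = evaluate(reason(intake(RAW)))
print(report(evaluated))
```

Because each stage takes and returns plain data, any stage can be logged, replayed, or swapped out without touching the others.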

Summary

- Large language models (LLMs) introduce contextual reasoning to security testing, turning raw data into actionable intelligence when guided by skilled professionals.

- Because LLMs are probabilistic systems, their outputs can be unreliable without validation; human oversight is essential to ensure accuracy and safety.

- AI agents build on LLMs by adding memory, planning, and tool-use capabilities, enabling reasoning systems that can act rather than merely respond.

- Pipelines provide the structure agents need to remain reliable and accountable—defining clear stages for input, reasoning, action, evaluation, and reporting.

- AI agent pipelines allow offensive security teams to scale intelligence without losing control—empowering individuals, red teams, and CISOs alike to achieve measurable, repeatable outcomes.

